Dronescapes dataset

As introduced in our ICCV 2023 workshop paper: link

1. Downloading the data

Option 1. Download the pre-processed dataset from HuggingFace repository

git lfs install # Make sure you have git-lfs installed (https://git-lfs.com)

git clone https://huggingface.co/datasets/Meehai/dronescapes

Note: the dataset is about 300GB, so cloning it may take a while.

Option 2. Generating the dataset from raw videos and basic labels.

Recommended if you want to understand how the dataset was created, or if you want to add new videos or representations.

1.2.1 Raw videos

Follow the commands in each directory under raw_data/videos/*/commands.txt if you want to start from the 4K videos.

If you only want the 540p videos as used in the paper, they are already provided in the raw_data/videos/* directories.

1.2.2 Semantic segmentation labels (human annotated)

These were human annotated and then propagated using segprop.

cd raw_data/

tar -xzvf segprop_npz_540.tar.gz

1.2.3 Generate the rest of the representations

We use the video-representations-extractor (VRE) to generate the rest of the labels using pre-trained networks or algorithms.

VRE_DEVICE=cuda CUDA_VISIBLE_DEVICES=0 vre raw_data/videos/atanasie_DJI_0652_full/atanasie_DJI_0652_full_540p.mp4 -o raw_data/npz_540p/atanasie_DJI_0652_full/ --cfg_path scripts/cfg.yaml --batch_size 3 --n_threads_data_storer 4 --output_dir_exist_mode overwrite --representations rgb "opticalflow_rife" "depth_dpt" "edges_dexined" "semantic_mask2former_swin_mapillary"

VRE_DEVICE=cuda CUDA_VISIBLE_DEVICES=1 vre raw_data/videos/barsana_DJI_0500_0501_combined_sliced_2700_14700/barsana_DJI_0500_0501_combined_sliced_2700_14700_540p.mp4 -o raw_data/npz_540p/barsana_DJI_0500_0501_combined_sliced_2700_14700/ --cfg_path scripts/cfg.yaml --batch_size 3 --n_threads_data_storer 4 --output_dir_exist_mode overwrite --representations rgb "opticalflow_rife" "depth_dpt" "edges_dexined" "semantic_mask2former_swin_mapillary"

VRE_DEVICE=cuda CUDA_VISIBLE_DEVICES=2 vre raw_data/videos/comana_DJI_0881_full/comana_DJI_0881_full_540p.mp4 -o raw_data/npz_540p/comana_DJI_0881_full/ --cfg_path scripts/cfg.yaml --batch_size 3 --n_threads_data_storer 4 --output_dir_exist_mode overwrite --representations rgb "opticalflow_rife" "depth_dpt" "edges_dexined" "semantic_mask2former_swin_mapillary"

VRE_DEVICE=cuda CUDA_VISIBLE_DEVICES=3 vre raw_data/videos/gradistei_DJI_0787_0788_0789_combined_sliced_3510_13110/gradistei_DJI_0787_0788_0789_combined_sliced_3510_13110_540p.mp4 -o raw_data/npz_540p/gradistei_DJI_0787_0788_0789_combined_sliced_3510_13110/ --cfg_path scripts/cfg.yaml --batch_size 3 --n_threads_data_storer 4 --output_dir_exist_mode overwrite --representations rgb "opticalflow_rife" "depth_dpt" "edges_dexined" "semantic_mask2former_swin_mapillary"

VRE_DEVICE=cuda CUDA_VISIBLE_DEVICES=4 vre raw_data/videos/herculane_DJI_0021_full/herculane_DJI_0021_full_540p.mp4 -o raw_data/npz_540p/herculane_DJI_0021_full/ --cfg_path scripts/cfg.yaml --batch_size 3 --n_threads_data_storer 4 --output_dir_exist_mode overwrite --representations rgb "opticalflow_rife" "depth_dpt" "edges_dexined" "semantic_mask2former_swin_mapillary"

VRE_DEVICE=cuda CUDA_VISIBLE_DEVICES=5 vre raw_data/videos/jupiter_DJI_0703_0704_0705_combined_sliced_10650_21715/jupiter_DJI_0703_0704_0705_combined_sliced_10650_21715_540p.mp4 -o raw_data/npz_540p/jupiter_DJI_0703_0704_0705_combined_sliced_10650_21715/ --cfg_path scripts/cfg.yaml --batch_size 3 --n_threads_data_storer 4 --output_dir_exist_mode overwrite --representations rgb "opticalflow_rife" "depth_dpt" "edges_dexined" "semantic_mask2former_swin_mapillary"

VRE_DEVICE=cuda CUDA_VISIBLE_DEVICES=6 vre raw_data/videos/norway_210821_DJI_0015_full/norway_210821_DJI_0015_full_540p.mp4 -o raw_data/npz_540p/norway_210821_DJI_0015_full/ --cfg_path scripts/cfg.yaml --batch_size 3 --n_threads_data_storer 4 --output_dir_exist_mode overwrite --representations rgb "opticalflow_rife" "depth_dpt" "edges_dexined" "semantic_mask2former_swin_mapillary"

VRE_DEVICE=cuda CUDA_VISIBLE_DEVICES=7 vre raw_data/videos/olanesti_DJI_0416_full/olanesti_DJI_0416_full_540p.mp4 -o raw_data/npz_540p/olanesti_DJI_0416_full/ --cfg_path scripts/cfg.yaml --batch_size 3 --n_threads_data_storer 4 --output_dir_exist_mode overwrite --representations rgb "opticalflow_rife" "depth_dpt" "edges_dexined" "semantic_mask2former_swin_mapillary"

VRE_DEVICE=cuda CUDA_VISIBLE_DEVICES=0 vre raw_data/videos/petrova_DJI_0525_0526_combined_sliced_2850_11850/petrova_DJI_0525_0526_combined_sliced_2850_11850_540p.mp4 -o raw_data/npz_540p/petrova_DJI_0525_0526_combined_sliced_2850_11850/ --cfg_path scripts/cfg.yaml --batch_size 3 --n_threads_data_storer 4 --output_dir_exist_mode overwrite --representations rgb "opticalflow_rife" "depth_dpt" "edges_dexined" "semantic_mask2former_swin_mapillary"

VRE_DEVICE=cuda CUDA_VISIBLE_DEVICES=1 vre raw_data/videos/slanic_DJI_0956_0957_combined_sliced_780_9780/slanic_DJI_0956_0957_combined_sliced_780_9780_540p.mp4 -o raw_data/npz_540p/slanic_DJI_0956_0957_combined_sliced_780_9780/ --cfg_path scripts/cfg.yaml --batch_size 3 --n_threads_data_storer 4 --output_dir_exist_mode overwrite --representations rgb "opticalflow_rife" "depth_dpt" "edges_dexined" "semantic_mask2former_swin_mapillary"
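The ten commands above differ only in the scene name and the GPU index, so they can be generated rather than typed. The snippet below is a minimal sketch that rebuilds the same command strings; the helper names (`build_vre_command`, `build_all`) and the round-robin GPU assignment over 8 devices are assumptions made for illustration, and the flags are copied from the commands above without being checked against the VRE documentation.

```python
import shlex

# Scene names exactly as they appear in the commands above.
SCENES = [
    "atanasie_DJI_0652_full",
    "barsana_DJI_0500_0501_combined_sliced_2700_14700",
    "comana_DJI_0881_full",
    "gradistei_DJI_0787_0788_0789_combined_sliced_3510_13110",
    "herculane_DJI_0021_full",
    "jupiter_DJI_0703_0704_0705_combined_sliced_10650_21715",
    "norway_210821_DJI_0015_full",
    "olanesti_DJI_0416_full",
    "petrova_DJI_0525_0526_combined_sliced_2850_11850",
    "slanic_DJI_0956_0957_combined_sliced_780_9780",
]
REPRESENTATIONS = [
    "rgb", "opticalflow_rife", "depth_dpt", "edges_dexined",
    "semantic_mask2former_swin_mapillary",
]


def build_vre_command(scene: str, gpu: int) -> str:
    """Return the shell command for one scene (hypothetical helper)."""
    args = [
        "vre", f"raw_data/videos/{scene}/{scene}_540p.mp4",
        "-o", f"raw_data/npz_540p/{scene}/",
        "--cfg_path", "scripts/cfg.yaml",
        "--batch_size", "3",
        "--n_threads_data_storer", "4",
        "--output_dir_exist_mode", "overwrite",
        "--representations", *REPRESENTATIONS,
    ]
    return f"VRE_DEVICE=cuda CUDA_VISIBLE_DEVICES={gpu} " + shlex.join(args)


def build_all(n_gpus: int = 8) -> list[str]:
    """Assign scenes to GPUs round-robin (assumption: n_gpus devices exist)."""
    return [build_vre_command(s, i % n_gpus) for i, s in enumerate(SCENES)]


for command in build_all():
    print(command)
```

The printed lines can be pasted into a shell or written to a script; nothing is executed by the snippet itself.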

1.2.4 Convert Mask2Former from Mapillary classes to segprop8 classes

Since we are using a pre-trained Mask2Former, which outputs either Mapillary or COCO panoptic classes, we need to convert its predictions to the 8 dronescapes-compatible classes.

To do this, we use the scripts/convert_m2f_to_dronescapes.py script:

python scripts/convert_m2f_to_dronescapes.py in_dir out_dir mapillary/coco [--overwrite]

python scripts/convert_m2f_to_dronescapes.py raw_data/npz_540p/atanasie_DJI_0652_full/semantic_mask2former_swin_mapillary raw_data/npz_540p/atanasie_DJI_0652_full/semantic_mask2former_swin_mapillary_converted mapillary

python scripts/convert_m2f_to_dronescapes.py raw_data/npz_540p/barsana_DJI_0500_0501_combined_sliced_2700_14700/semantic_mask2former_swin_mapillary raw_data/npz_540p/barsana_DJI_0500_0501_combined_sliced_2700_14700/semantic_mask2former_swin_mapillary_converted mapillary

python scripts/convert_m2f_to_dronescapes.py raw_data/npz_540p/comana_DJI_0881_full/semantic_mask2former_swin_mapillary raw_data/npz_540p/comana_DJI_0881_full/semantic_mask2former_swin_mapillary_converted mapillary

python scripts/convert_m2f_to_dronescapes.py raw_data/npz_540p/gradistei_DJI_0787_0788_0789_combined_sliced_3510_13110/semantic_mask2former_swin_mapillary raw_data/npz_540p/gradistei_DJI_0787_0788_0789_combined_sliced_3510_13110/semantic_mask2former_swin_mapillary_converted mapillary

python scripts/convert_m2f_to_dronescapes.py raw_data/npz_540p/herculane_DJI_0021_full/semantic_mask2former_swin_mapillary raw_data/npz_540p/herculane_DJI_0021_full/semantic_mask2former_swin_mapillary_converted mapillary

python scripts/convert_m2f_to_dronescapes.py raw_data/npz_540p/jupiter_DJI_0703_0704_0705_combined_sliced_10650_21715/semantic_mask2former_swin_mapillary raw_data/npz_540p/jupiter_DJI_0703_0704_0705_combined_sliced_10650_21715/semantic_mask2former_swin_mapillary_converted mapillary

python scripts/convert_m2f_to_dronescapes.py raw_data/npz_540p/norway_210821_DJI_0015_full/semantic_mask2former_swin_mapillary raw_data/npz_540p/norway_210821_DJI_0015_full/semantic_mask2former_swin_mapillary_converted mapillary

python scripts/convert_m2f_to_dronescapes.py raw_data/npz_540p/olanesti_DJI_0416_full/semantic_mask2former_swin_mapillary raw_data/npz_540p/olanesti_DJI_0416_full/semantic_mask2former_swin_mapillary_converted mapillary

python scripts/convert_m2f_to_dronescapes.py raw_data/npz_540p/petrova_DJI_0525_0526_combined_sliced_2850_11850/semantic_mask2former_swin_mapillary raw_data/npz_540p/petrova_DJI_0525_0526_combined_sliced_2850_11850/semantic_mask2former_swin_mapillary_converted mapillary

python scripts/convert_m2f_to_dronescapes.py raw_data/npz_540p/slanic_DJI_0956_0957_combined_sliced_780_9780/semantic_mask2former_swin_mapillary raw_data/npz_540p/slanic_DJI_0956_0957_combined_sliced_780_9780/semantic_mask2former_swin_mapillary_converted mapillary
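Conceptually, this conversion is a many-to-one remapping of class indices. The snippet below is only a sketch of that idea using a lookup table; it is not the code of scripts/convert_m2f_to_dronescapes.py. The source class names and the mapping are made up for illustration, and only the 8 target class names (in the order used later for evaluation) come from this document.

```python
import numpy as np

# The 8 dronescapes classes, in the order used by the evaluation script.
DRONESCAPES_CLASSES = [
    "land", "forest", "residential", "road",
    "little-objects", "water", "sky", "hill",
]

# HYPOTHETICAL source classes and mapping, for illustration only.
# The real Mapillary/COCO mappings live in the conversion script.
TOY_SOURCE_CLASSES = ["grass", "tree", "building", "car", "lake"]
TOY_MAPPING = {
    "grass": "land",
    "tree": "forest",
    "building": "residential",
    "car": "little-objects",
    "lake": "water",
}


def build_lookup_table(source_classes, mapping, target_classes) -> np.ndarray:
    """lut[source_index] == target_index for every source class."""
    return np.array(
        [target_classes.index(mapping[name]) for name in source_classes],
        dtype=np.uint8,
    )


def remap(mask: np.ndarray, lut: np.ndarray) -> np.ndarray:
    """Remap an integer mask of source indices with one fancy-indexing call."""
    return lut[mask]


lut = build_lookup_table(TOY_SOURCE_CLASSES, TOY_MAPPING, DRONESCAPES_CLASSES)
mask = np.array([[0, 1, 1], [2, 3, 4]])  # toy 2x3 mask of source indices
converted = remap(mask, lut)
print(converted)
```

Indexing the table with the whole mask remaps every pixel at once, which is why a lookup table is a common choice for this kind of conversion.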

1.2.5 Check counts for consistency

Run: bash scripts/count_npz.sh raw_data/npz_540p. At this point it should return:

| scene | rgb | depth_dpt | depth_sfm_manual20.. | edges_dexined | normals_sfm_manual.. | opticalflow_rife | semantic_mask2form.. | semantic_segprop8 |
|---|---|---|---|---|---|---|---|---|
| atanasie | 9021 | 9021 | 9020 | 9021 | 9020 | 9021 | 9021 | 9001 |
| barsana | 12001 | 12001 | 12001 | 12001 | 12001 | 12000 | 12001 | 1573 |
| comana | 9022 | 9022 | 0 | 9022 | 0 | 9022 | 9022 | 1210 |
| gradistei | 9601 | 9601 | 9600 | 9601 | 9600 | 9600 | 9601 | 1210 |
| herculane | 9022 | 9022 | 9021 | 9022 | 9021 | 9022 | 9022 | 1210 |
| jupiter | 11066 | 11066 | 11065 | 11066 | 11065 | 11066 | 11066 | 1452 |
| norway | 2983 | 2983 | 0 | 2983 | 0 | 2983 | 2983 | 2941 |
| olanesti | 9022 | 9022 | 9021 | 9022 | 9021 | 9022 | 9022 | 1210 |
| petrova | 9001 | 9001 | 9001 | 9001 | 9001 | 9000 | 9001 | 1210 |
| slanic | 9001 | 9001 | 9001 | 9001 | 9001 | 9000 | 9001 | 9001 |
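If you prefer to run the same check from Python, the sketch below counts the .npz files per scene and representation. It is not a port of scripts/count_npz.sh; it assumes the layout raw_data/npz_540p/<scene>/<representation>/ implied by the commands above, and it searches each representation directory recursively because the exact nesting of the .npz files is not stated here.

```python
from pathlib import Path


def count_npz(root: str) -> dict[str, dict[str, int]]:
    """Return {scene: {representation: number of .npz files}}."""
    counts = {}
    for scene_dir in sorted(p for p in Path(root).iterdir() if p.is_dir()):
        counts[scene_dir.name] = {
            repr_dir.name: sum(1 for _ in repr_dir.rglob("*.npz"))
            for repr_dir in sorted(scene_dir.iterdir())
            if repr_dir.is_dir()
        }
    return counts


if __name__ == "__main__":
    root = Path("raw_data/npz_540p")
    if root.exists():  # only run when the generated data is present
        for scene, per_repr in count_npz(str(root)).items():
            print(scene, per_repr)
```

Comparing the printed numbers with the table above is a quick way to spot a representation that stopped early.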

1.2.6 Split into train, validation, semisupervised and test

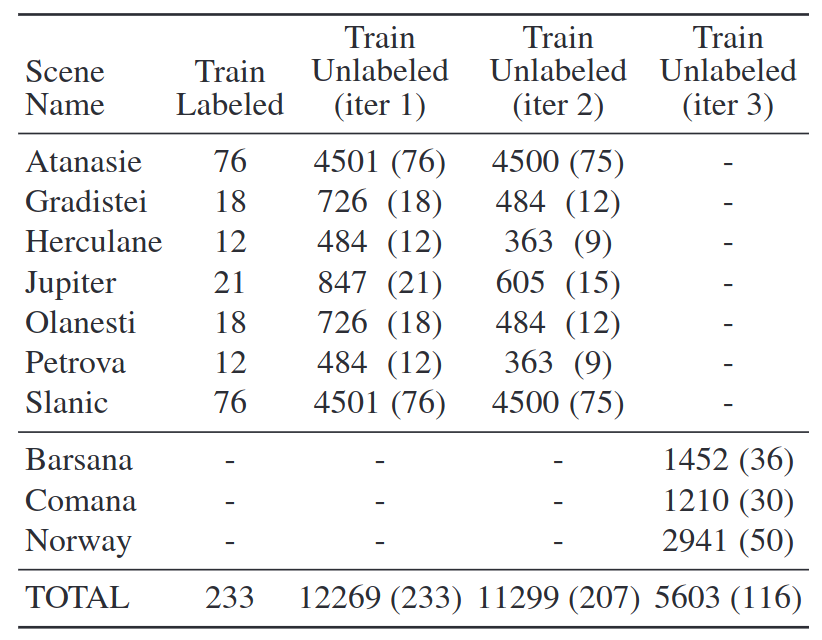

We include 8 splits: 4 using only the manually annotated (GT) semantic data and 4 using all available data (i.e. also the

frames where segprop propagated labels between annotated frames). The indexes are taken from txt_files/*; for example,

txt_files/manually_annotated_files/test_files_116.txt refers to the fact that the test set (norway + petrova + barsana,

unseen at train time) contains 116 manually annotated semantic files. For every split we include all the representations

from above, not just the semantic ones. Adding a new representation is as simple as running VRE on the 540p mp4 file.

python scripts/symlinks_from_txt_list.py raw_data/npz_540p/ --txt_file txt_files/annotated_and_segprop/train_files_11664.txt -o data/train_set --overwrite

python scripts/symlinks_from_txt_list.py raw_data/npz_540p/ --txt_file txt_files/annotated_and_segprop/val_files_605.txt -o data/validation_set --overwrite

python scripts/symlinks_from_txt_list.py raw_data/npz_540p/ --txt_file txt_files/annotated_and_segprop/semisup_files_11299.txt -o data/semisupervised_set --overwrite

python scripts/symlinks_from_txt_list.py raw_data/npz_540p/ --txt_file txt_files/annotated_and_segprop/test_files_5603.txt -o data/test_set --overwrite

python scripts/symlinks_from_txt_list.py raw_data/npz_540p/ --txt_file txt_files/manually_annotated_files/train_files_218.txt -o data/train_set_annotated_only --overwrite

python scripts/symlinks_from_txt_list.py raw_data/npz_540p/ --txt_file txt_files/manually_annotated_files/val_files_15.txt -o data/validation_set_annotated_only --overwrite

python scripts/symlinks_from_txt_list.py raw_data/npz_540p/ --txt_file txt_files/manually_annotated_files/semisup_files_207.txt -o data/semisupervised_set_annotated_only --overwrite

python scripts/symlinks_from_txt_list.py raw_data/npz_540p/ --txt_file txt_files/manually_annotated_files/test_files_116.txt -o data/test_set_annotated_only --overwrite

Note: add --copy_files if you want to make copies instead of using symlinks.

After running these commands, you should see something like this:

user> ls data/*

data/semisupervised_set:

depth_dpt edges_dexined opticalflow_rife semantic_mask2former_swin_mapillary_converted

depth_sfm_manual202204 normals_sfm_manual202204 rgb semantic_segprop8

data/semisupervised_set_annotated_only:

depth_dpt edges_dexined opticalflow_rife semantic_mask2former_swin_mapillary_converted

depth_sfm_manual202204 normals_sfm_manual202204 rgb semantic_segprop8

data/test_set:

depth_dpt edges_dexined opticalflow_rife semantic_mask2former_swin_mapillary_converted

depth_sfm_manual202204 normals_sfm_manual202204 rgb semantic_segprop8

data/test_set_annotated_only:

depth_dpt edges_dexined opticalflow_rife semantic_mask2former_swin_mapillary_converted

depth_sfm_manual202204 normals_sfm_manual202204 rgb semantic_segprop8

data/train_set:

depth_dpt edges_dexined opticalflow_rife semantic_mask2former_swin_mapillary_converted

depth_sfm_manual202204 normals_sfm_manual202204 rgb semantic_segprop8

data/train_set_annotated_only:

depth_dpt edges_dexined opticalflow_rife semantic_mask2former_swin_mapillary_converted

depth_sfm_manual202204 normals_sfm_manual202204 rgb semantic_segprop8

data/validation_set:

depth_dpt edges_dexined opticalflow_rife semantic_mask2former_swin_mapillary_converted

depth_sfm_manual202204 normals_sfm_manual202204 rgb semantic_segprop8

data/validation_set_annotated_only:

depth_dpt edges_dexined opticalflow_rife semantic_mask2former_swin_mapillary_converted

depth_sfm_manual202204 normals_sfm_manual202204 rgb semantic_segprop8

1.2.7 Convert Camera Normals to World Normals

This is an optional step, but for some use cases, it may be better to use world normals instead of camera normals, which

are provided by default in normals_sfm_manual202204. To convert, we provide camera rotation matrices in

raw_data/camera_matrics.tar.gz for all 8 scenes that also have SfM.

In order to convert, use this function (for each npz file):

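import numpy as np

def convert_camera_to_world(normals: np.ndarray, rotation_matrix: np.ndarray) -> np.ndarray:
    # Stored normals are in [0:1]; map them to [-1:1] before rotating.
    camera_normals = (normals.copy() - 0.5) * 2
    # Rotate from the camera frame to the world frame.
    world_normals = camera_normals @ np.linalg.inv(rotation_matrix)
    # Map back from [-1:1] to [0:1], the range used by the stored normals.
    world_normals = (world_normals / 2) + 0.5
    return np.clip(world_normals, 0.0, 1.0)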
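Applying the conversion "for each npz file" could look like the sketch below. It is written under several assumptions that are not confirmed by this document: each .npz file stores a single array (read here as the first key), the rotation matrix for the frame has already been loaded by the caller from the camera matrices archive (whose internal format is not described here), and the result is saved as float16 because that is the dtype the viewer reports for the normals. `convert_file` and `convert_fn` are hypothetical names; `convert_fn` stands for a function with the signature of the conversion function above.

```python
from pathlib import Path
from typing import Callable

import numpy as np


def convert_file(
    in_path: Path,
    out_path: Path,
    rotation_matrix: np.ndarray,
    convert_fn: Callable[[np.ndarray, np.ndarray], np.ndarray],
) -> None:
    """Convert one camera-normals .npz file and write the result to out_path."""
    with np.load(in_path) as data:
        key = data.files[0]  # ASSUMPTION: one array per file
        # Work in float32 to avoid float16 precision issues during the rotation.
        normals = data[key].astype(np.float32)
    world_normals = convert_fn(normals, rotation_matrix)
    out_path.parent.mkdir(parents=True, exist_ok=True)
    # Keep the same key so downstream readers find the array where they expect it.
    np.savez_compressed(out_path, **{key: world_normals.astype(np.float16)})
```

A loop over the files of one scene would then call `convert_file` once per frame with that frame's rotation matrix; writing to a separate output directory keeps the original camera normals intact.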

2. Using the data

As per the split from the paper:

The data is in data/* (see the ls call above, it should match even if you download from huggingface).
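For a quick look at the data without the viewer, the sketch below loads one frame across all representations of a split using only NumPy. It assumes that each representation directory holds one .npz file per frame, that the same file stem is used across representations, and that each file stores a single array; representations that lack the frame are skipped, since not every scene has every representation. `load_item` is a hypothetical helper, not part of the repository.

```python
from pathlib import Path

import numpy as np


def load_item(split_dir: str, stem: str) -> dict[str, np.ndarray]:
    """Load <split_dir>/<representation>/<stem>.npz for every representation."""
    item = {}
    for repr_dir in sorted(p for p in Path(split_dir).iterdir() if p.is_dir()):
        npz_path = repr_dir / f"{stem}.npz"
        if not npz_path.exists():
            continue  # this representation has no file for the requested frame
        with np.load(npz_path) as data:
            item[repr_dir.name] = data[data.files[0]]  # ASSUMPTION: one array per file
    return item
```

For example, `load_item("data/test_set_annotated_only", stem)` would return a dictionary keyed by representation name, where `stem` is the name of any file in one of the representation directories without its extension.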

2.1 Using the provided viewer

Basic usage:

python scripts/dronescapes_viewer.py data/test_set_annotated_only/ # or any of the 8 directories in data/

Expected output

[MultiTaskDataset]

- Path: '/scratch/sdc/datasets/dronescapes/data/test_set_annotated_only'

- Only full data: False

- Representations (8): [NpzRepresentation(depth_dpt), NpzRepresentation(depth_sfm_manual202204), NpzRepresentation(edges_dexined), NpzRepresentation(normals_sfm_manual202204), NpzRepresentation(opticalflow_rife), NpzRepresentation(rgb), NpzRepresentation(semantic_mask2former_swin_mapillary_converted), NpzRepresentation(semantic_segprop8)]

- Length: 116

== Shapes ==

{'depth_dpt': torch.Size([540, 960]),

'depth_sfm_manual202204': torch.Size([540, 960]),

'edges_dexined': torch.Size([540, 960]),

'normals_sfm_manual202204': torch.Size([540, 960, 3]),

'opticalflow_rife': torch.Size([540, 960, 2]),

'rgb': torch.Size([540, 960, 3]),

'semantic_mask2former_swin_mapillary_converted': torch.Size([540, 960]),

'semantic_segprop8': torch.Size([540, 960])}

== Random loaded item ==

/export/home/proiecte/aux/mihai_cristian.pirvu/.conda/envs/ngc/lib/python3.10/site-packages/numpy/core/_methods.py:215: RuntimeWarning: overflow encountered in reduce

arrmean = umr_sum(arr, axis, dtype, keepdims=True, where=where)

{'depth_dpt': tensor[540, 960] x∈[0.031, 1.000] μ=0.060 σ=0.038,

'depth_sfm_manual202204': tensor[540, 960] f16 x∈[0., 1.195e+03] μ=360.250 σ=inf,

'edges_dexined': tensor[540, 960] x∈[0.131, 1.000] μ=0.848 σ=0.188,

'normals_sfm_manual202204': tensor[540, 960, 3] f16 x∈[0.000, 1.000] μ=0.525 σ=inf,

'opticalflow_rife': tensor[540, 960, 2] f16 x∈[-0.000, 0.007] μ=0.002 σ=0.002,

'rgb': tensor[540, 960, 3] u8 x∈[0, 255] μ=68.154 σ=33.902,

'semantic_mask2former_swin_mapillary_converted': tensor[540, 960] u8 x∈[0, 7] μ=3.591 σ=3.058,

'semantic_segprop8': tensor[540, 960] u8 x∈[0, 6] μ=1.057 σ=0.916}

== Random loaded batch ==

{'depth_dpt': torch.Size([5, 540, 960]),

'depth_sfm_manual202204': torch.Size([5, 540, 960]),

'edges_dexined': torch.Size([5, 540, 960]),

'normals_sfm_manual202204': torch.Size([5, 540, 960, 3]),

'opticalflow_rife': torch.Size([5, 540, 960, 2]),

'rgb': torch.Size([5, 540, 960, 3]),

'semantic_mask2former_swin_mapillary_converted': torch.Size([5, 540, 960]),

'semantic_segprop8': torch.Size([5, 540, 960])}

== Random loaded batch using torch DataLoader ==

{'depth_dpt': torch.Size([5, 540, 960]),

'depth_sfm_manual202204': torch.Size([5, 540, 960]),

'edges_dexined': torch.Size([5, 540, 960]),

'normals_sfm_manual202204': torch.Size([5, 540, 960, 3]),

'opticalflow_rife': torch.Size([5, 540, 960, 2]),

'rgb': torch.Size([5, 540, 960, 3]),

'semantic_mask2former_swin_mapillary_converted': torch.Size([5, 540, 960]),

'semantic_segprop8': torch.Size([5, 540, 960])}

3. Evaluation for semantic segmentation

In the paper, we evaluate on the 3 test scenes (unseen at train time) as well as on the semi-supervised scenes (seen, but

with a different split), against the human annotated frames. The general evaluation script is

scripts/evaluate_semantic_segmentation.py.

General usage is:

python scripts/evaluate_semantic_segmentation.py y_dir gt_dir -o results.csv --classes C1 C2 .. Cn

[--class_weights W1 W2 ... Wn] [--scenes s1 s2 ... sm]

Script explanation

The script is a bit convoluted, so let's break it into parts:

- y_dir and gt_dir: Two directories of .npz files in the same format as the dataset (y_dir/1.npz, gt_dir/55.npz etc.)

- classes: A list of classes in the order that they appear in the predictions and gt files.

- class_weights: (optional, but used in the paper) How much to weigh each class. In the paper we compute these weights as the number of pixels in all the dataset (train/val/semisup/test) for each of the 8 classes, resulting in the numbers below.

- scenes: If y_dir and gt_dir contain multiple scenes that you want to evaluate separately, the script allows you to pass the prefix of all the scenes. For example, in data/test_set_annotated_only/semantic_segprop8/ there are actually 3 scenes in the npz files and, in the paper, we evaluate each scene independently. Even though the script outputs one csv file with predictions for each npz file, the scenes are used for proper aggregation at scene level.
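To make the role of classes and class_weights concrete, here is a minimal sketch of class-weighted IoU and F1 for a single prediction/ground-truth pair. It is not the implementation in scripts/evaluate_semantic_segmentation.py; in particular, skipping classes that are absent from both masks and renormalizing the weights are assumptions, and the function names are hypothetical.

```python
import numpy as np


def per_class_iou_f1(y: np.ndarray, gt: np.ndarray, n_classes: int):
    """Per-class IoU and F1 from true/false positive and false negative counts."""
    iou = np.full(n_classes, np.nan)
    f1 = np.full(n_classes, np.nan)
    for c in range(n_classes):
        tp = np.sum((y == c) & (gt == c))
        fp = np.sum((y == c) & (gt != c))
        fn = np.sum((y != c) & (gt == c))
        if tp + fp + fn > 0:  # class present in prediction or ground truth
            iou[c] = tp / (tp + fp + fn)
            f1[c] = 2 * tp / (2 * tp + fp + fn)
    return iou, f1


def weighted_mean(values: np.ndarray, weights) -> float:
    """Weighted mean over the classes that have a defined score (assumption)."""
    valid = ~np.isnan(values)
    w = np.asarray(weights, dtype=float)[valid]
    return float(np.sum(values[valid] * w) / np.sum(w))


# Toy example with 2 classes and equal weights.
y = np.array([[0, 0], [1, 1]])
gt = np.array([[0, 1], [1, 1]])
iou, f1 = per_class_iou_f1(y, gt, n_classes=2)
print(weighted_mean(iou, [0.5, 0.5]), weighted_mean(f1, [0.5, 0.5]))
```

With pixel-frequency weights such as the ones used in the paper, rare classes like little-objects contribute very little to the final score, which is the intended effect of the weighting.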

Reproducing paper results for Mask2Former

# Replace the first directory with your own predictions directory.
python scripts/evaluate_semantic_segmentation.py \
  data/test_set_annotated_only/semantic_mask2former_swin_mapillary_converted/ \
  data/test_set_annotated_only/semantic_segprop8/ \
  -o results.csv \
  --classes land forest residential road little-objects water sky hill \
  --class_weights 0.28172092 0.30589653 0.13341699 0.05937348 0.00474491 0.05987466 0.08660721 0.06836531 \
  --scenes barsana_DJI_0500_0501_combined_sliced_2700_14700 comana_DJI_0881_full norway_210821_DJI_0015_full

Should output:

scene iou f1

barsana_DJI_0500_0501_combined_sliced_2700_14700 63.367 75.327

comana_DJI_0881_full 60.554 73.757

norway_210821_DJI_0015_full 37.998 45.928

overall avg 53.973 65.004

If --scenes is not provided, the metrics are computed over the files of all 3 scenes together (this is not the same as averaging the per-scene metrics):

iou f1

scene

all 60.456 73.261
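The two outputs can be related with a quick check: the "overall avg" row of the per-scene output equals the unweighted mean of the three scene rows, while the "all" row is a different number, which is consistent with the scores being computed over all files together. The snippet only redoes the arithmetic on the numbers printed above.

```python
# (iou, f1) per scene, copied from the per-scene output above.
per_scene = {
    "barsana_DJI_0500_0501_combined_sliced_2700_14700": (63.367, 75.327),
    "comana_DJI_0881_full": (60.554, 73.757),
    "norway_210821_DJI_0015_full": (37.998, 45.928),
}

mean_iou = sum(iou for iou, _ in per_scene.values()) / len(per_scene)
mean_f1 = sum(f1 for _, f1 in per_scene.values()) / len(per_scene)

print(round(mean_iou, 3), round(mean_f1, 3))  # 53.973 65.004
```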
