Merge branch 'next' into main

- README.md +453 -2

- assets/whisper_fr_eval_long_form.png +0 -0

- assets/whisper_fr_eval_short_form.png +0 -0

README.md

CHANGED

|

@@ -1,5 +1,456 @@

|

|

| 1 |

---

|

| 2 |

-

license:


| 3 |

---

|

| 4 |

|

| 5 |

-


---
license: mit
language: fr
library_name: transformers
pipeline_tag: automatic-speech-recognition
thumbnail: null
tags:
- automatic-speech-recognition
- hf-asr-leaderboard
datasets:
- mozilla-foundation/common_voice_13_0
- facebook/multilingual_librispeech
- facebook/voxpopuli
- google/fleurs
- gigant/african_accented_french
metrics:
- wer
model-index:
- name: whisper-large-v3-french
  results:
  - task:
      name: Automatic Speech Recognition
      type: automatic-speech-recognition
    dataset:
      name: Common Voice 13.0
      type: mozilla-foundation/common_voice_13_0
      config: fr
      split: test
      args:
        language: fr
    metrics:
    - name: WER
      type: wer
      value: 7.28
  - task:
      name: Automatic Speech Recognition
      type: automatic-speech-recognition
    dataset:
      name: Multilingual LibriSpeech (MLS)
      type: facebook/multilingual_librispeech
      config: french
      split: test
      args:
        language: fr
    metrics:
    - name: WER
      type: wer
      value: 3.98
  - task:
      name: Automatic Speech Recognition
      type: automatic-speech-recognition
    dataset:
      name: VoxPopuli
      type: facebook/voxpopuli
      config: fr
      split: test
      args:
        language: fr
    metrics:
    - name: WER
      type: wer
      value: 8.91
  - task:
      name: Automatic Speech Recognition
      type: automatic-speech-recognition
    dataset:
      name: Fleurs
      type: google/fleurs
      config: fr_fr
      split: test
      args:
        language: fr
    metrics:
    - name: WER
      type: wer
      value: 4.84
  - task:
      name: Automatic Speech Recognition
      type: automatic-speech-recognition
    dataset:
      name: African Accented French
      type: gigant/african_accented_french
      config: fr
      split: test
      args:
        language: fr
    metrics:
    - name: WER
      type: wer
      value: 4.20
---

|

| 92 |

|

| 93 |

+

# Whisper-Large-V3-French

|

| 94 |

+

|

| 95 |

+

Whisper-Large-V3-French is fine-tuned on `openai/whisper-large-v3` to further enhance its performance on the French language. This model has been trained to predict casing, punctuation, and numbers. While this might slightly sacrifice performance, we believe it allows for broader usage.

|

| 96 |

+

|

| 97 |

+

This model has been converted into various formats, facilitating its usage across different libraries, including transformers, openai-whisper, fasterwhisper, whisper.cpp, candle, mlx, etc.

|

| 98 |

+

|

| 99 |

+

## Table of Contents

|

| 100 |

+

|

| 101 |

+

- [Performance](#performance)

|

| 102 |

+

- [Usage](#usage)

|

| 103 |

+

- [Hugging Face Pipeline](#hugging-face-pipeline)

|

| 104 |

+

- [Hugging Face Low-level APIs](#hugging-face-low-level-apis)

|

| 105 |

+

- [Speculative Decoding](#speculative-decoding)

|

| 106 |

+

- [OpenAI Whisper](#openai-whisper)

|

| 107 |

+

- [Faster Whisper](#faster-whisper)

|

| 108 |

+

- [Whisper.cpp](#whispercpp)

|

| 109 |

+

- [Candle](#candle)

|

| 110 |

+

- [MLX](#mlx)

|

| 111 |

+

- [Training details](#training-details)

|

| 112 |

+

- [Acknowledgements](#acknowledgements)

|

| 113 |

+

|

| 114 |

+

## Performance

|

| 115 |

+

|

| 116 |

+

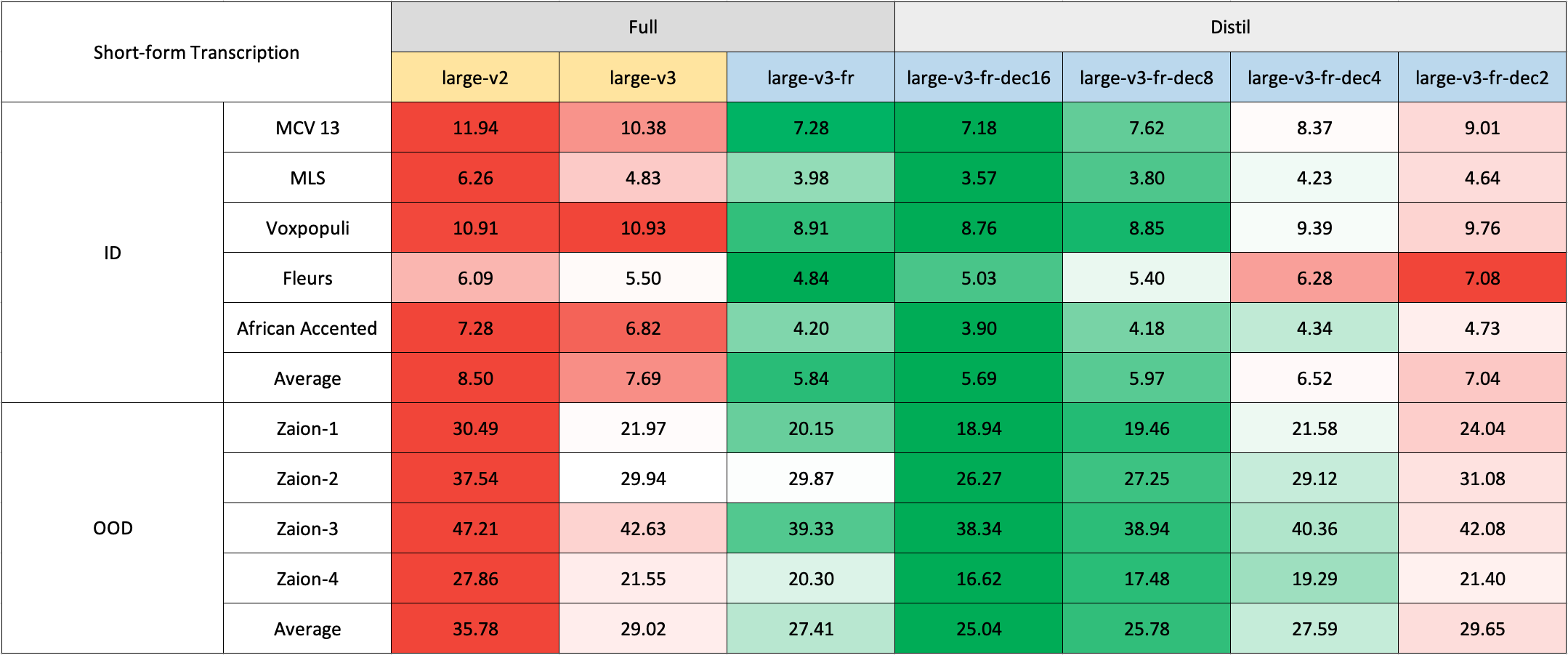

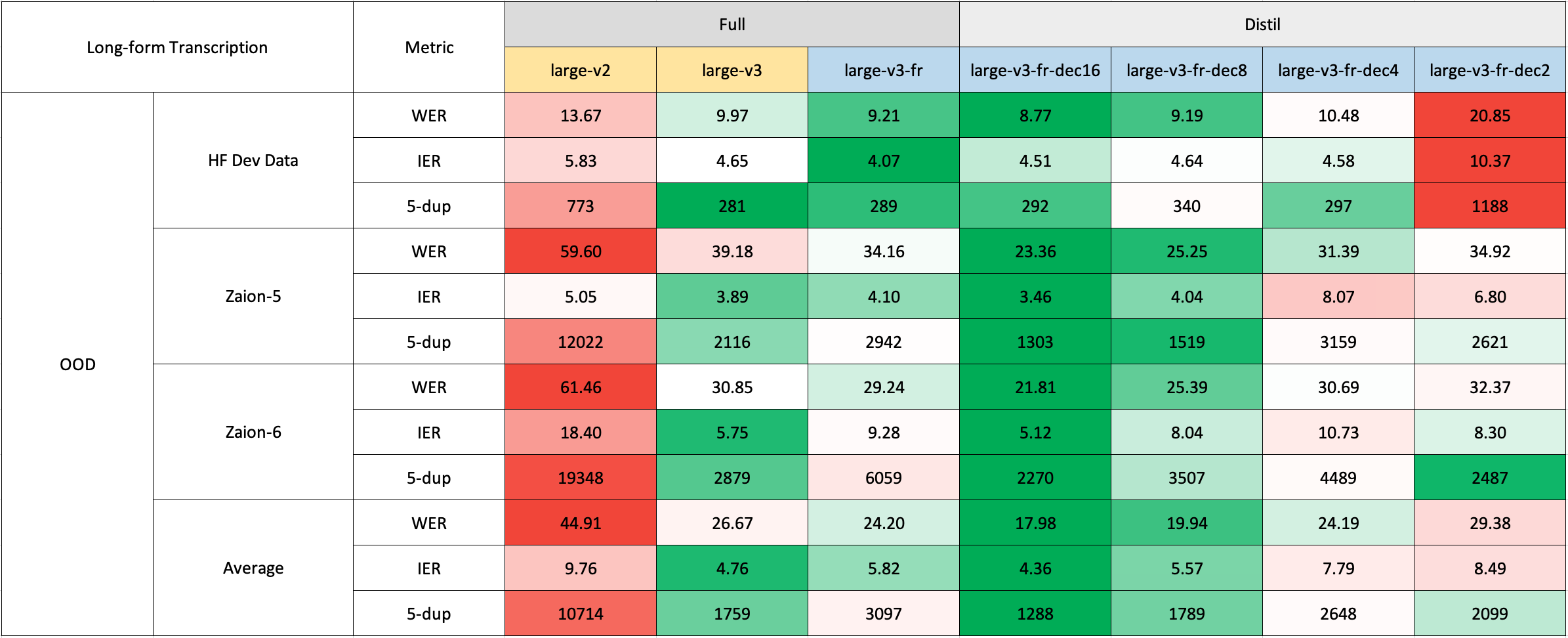

We evaluated our model on both short and long-form transcriptions, and also tested it on both in-distribution and out-of-distribution datasets to conduct a comprehensive analysis assessing its accuracy, generalizability, and robustness.

|

| 117 |

+

|

| 118 |

+

Please note that the reported WER is the result after converting numbers to text, removing punctuation (except for apostrophes and hyphens), and converting all characters to lowercase.
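The normalization described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the evaluation code behind the reported numbers: `normalize` and `wer` are hypothetical helper names, and the number-to-text step is left out because it needs a French number speller.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and strip punctuation, keeping apostrophes and hyphens."""
    text = text.lower().replace("’", "'")  # unify curly and straight apostrophes
    # Replace everything except word characters, whitespace, ' and - with a space.
    text = re.sub(r"[^\w\s'-]", " ", text)
    return " ".join(text.split())


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level edit distance divided by reference length."""
    ref, hyp = normalize(reference).split(), normalize(hypothesis).split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # Levenshtein distance with a single rolling row.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (r != h)))
        prev = curr
    return prev[-1] / len(ref)


print(normalize("Bonjour, aujourd'hui c'est lundi !"))
print(wer("le chat dort", "le chien dort"))
```

Because casing and punctuation are removed before scoring, a model that predicts them is not penalized for doing so.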

All evaluation results on the public datasets can be found [here](https://drive.google.com/drive/folders/1rFIh6yXRVa9RZ0ieZoKiThFZgQ4STPPI?usp=drive_link).

### Short-Form Transcription

Due to the lack of readily available out-of-domain (OOD) and long-form test sets in French, we evaluated using internal test sets from [Zaion Lab](https://zaion.ai/). These sets comprise human-annotated audio-transcription pairs from call center conversations, which are notable for their significant background noise and domain-specific terminology.

### Long-Form Transcription

The long-form transcription was run using the 🤗 Hugging Face pipeline for quicker evaluation. Audio files were segmented into 30-second chunks and processed in parallel.

## Usage

### Hugging Face Pipeline

The model can easily be used with the 🤗 Hugging Face [`pipeline`](https://huggingface.co/docs/transformers/main_classes/pipelines#transformers.AutomaticSpeechRecognitionPipeline) class for audio transcription.

For long-form transcription (> 30 seconds), you can enable chunked processing by passing the `chunk_length_s` argument. This approach splits the audio into smaller overlapping segments, processes them in parallel, and then joins them at the strides by finding the longest common sequence. While this chunked long-form approach may slightly compromise accuracy compared to OpenAI's sequential algorithm, it provides 9x faster inference speed.

```python
import torch
from datasets import load_dataset
from transformers import AutoModelForSpeechSeq2Seq, AutoProcessor, pipeline

device = "cuda:0" if torch.cuda.is_available() else "cpu"
torch_dtype = torch.float16 if torch.cuda.is_available() else torch.float32

# Load model
model_name_or_path = "bofenghuang/whisper-large-v3-french"
processor = AutoProcessor.from_pretrained(model_name_or_path)
model = AutoModelForSpeechSeq2Seq.from_pretrained(
    model_name_or_path,
    torch_dtype=torch_dtype,
    low_cpu_mem_usage=True,
)
model.to(device)

# Init pipeline
pipe = pipeline(
    "automatic-speech-recognition",
    model=model,
    feature_extractor=processor.feature_extractor,
    tokenizer=processor.tokenizer,
    torch_dtype=torch_dtype,
    device=device,
    # chunk_length_s=30,  # for long-form transcription
    max_new_tokens=128,
)

# Example audio
dataset = load_dataset("bofenghuang/asr-dummy", "fr", split="test")
sample = dataset[0]["audio"]

# Run pipeline
result = pipe(sample)
print(result["text"])
```
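To make the chunked long-form approach more concrete, the sketch below computes the overlapping windows that such a scheme would transcribe. It is a simplified illustration, not the pipeline's actual implementation: `chunk_spans` is a hypothetical helper, and the 5-second stride on each side is an assumed value chosen for the example.

```python
from typing import List, Tuple


def chunk_spans(
    total_s: float, chunk_length_s: float = 30.0, stride_s: float = 5.0
) -> List[Tuple[float, float]]:
    """Return (start, end) times in seconds of overlapping chunks covering the audio."""
    if chunk_length_s <= 2 * stride_s:
        raise ValueError("chunk_length_s must be larger than twice the stride")
    # Each chunk overlaps its neighbours by the stride on both sides,
    # so consecutive chunk starts are this far apart.
    step = chunk_length_s - 2 * stride_s
    spans = []
    start = 0.0
    while True:
        end = min(start + chunk_length_s, total_s)
        spans.append((start, end))
        if end >= total_s:
            break
        start += step
    return spans


# A 70-second recording yields three overlapping 30-second windows.
print(chunk_spans(70.0))
```

The chunks are independent of each other, which is what allows them to be batched and decoded in parallel; the overlapping regions are then used to stitch the transcripts together.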

### Hugging Face Low-level APIs

You can also use the 🤗 Hugging Face low-level APIs for transcription, which offer greater control over the process, as demonstrated below:

```python
import torch
from datasets import load_dataset
from transformers import AutoModelForSpeechSeq2Seq, AutoProcessor

device = "cuda:0" if torch.cuda.is_available() else "cpu"
torch_dtype = torch.float16 if torch.cuda.is_available() else torch.float32

# Load model
model_name_or_path = "bofenghuang/whisper-large-v3-french"
processor = AutoProcessor.from_pretrained(model_name_or_path)
model = AutoModelForSpeechSeq2Seq.from_pretrained(
    model_name_or_path,
    torch_dtype=torch_dtype,
    low_cpu_mem_usage=True,
)
model.to(device)

# Example audio
dataset = load_dataset("bofenghuang/asr-dummy", "fr", split="test")
sample = dataset[0]["audio"]

# Extract features
input_features = processor(
    sample["array"], sampling_rate=sample["sampling_rate"], return_tensors="pt"
).input_features


# Generate tokens
predicted_ids = model.generate(
    input_features.to(dtype=torch_dtype).to(device), max_new_tokens=128
)

# Detokenize to text
transcription = processor.batch_decode(predicted_ids, skip_special_tokens=True)[0]
print(transcription)
```

### Speculative Decoding

[Speculative decoding](https://huggingface.co/blog/whisper-speculative-decoding) can be achieved using a draft model, essentially a distilled version of Whisper. This approach guarantees identical outputs to using the main Whisper model alone, offers a 2x faster inference speed, and incurs only a slight increase in memory overhead.

Since the distilled Whisper has the same encoder as the original, only its decoder needs to be loaded, and encoder outputs are shared between the main and draft models during inference.

Using speculative decoding with the Hugging Face pipeline is simple - just specify the `assistant_model` within the generation configurations.

```python
import torch
from datasets import load_dataset
from transformers import (
    AutoModelForCausalLM,
    AutoModelForSpeechSeq2Seq,
    AutoProcessor,
    pipeline,
)

device = "cuda:0" if torch.cuda.is_available() else "cpu"
torch_dtype = torch.float16 if torch.cuda.is_available() else torch.float32

# Load model
model_name_or_path = "bofenghuang/whisper-large-v3-french"
processor = AutoProcessor.from_pretrained(model_name_or_path)
model = AutoModelForSpeechSeq2Seq.from_pretrained(
    model_name_or_path,
    torch_dtype=torch_dtype,
    low_cpu_mem_usage=True,
)
model.to(device)

# Load draft model
assistant_model_name_or_path = "bofenghuang/whisper-large-v3-french-distil-dec2"
assistant_model = AutoModelForCausalLM.from_pretrained(
    assistant_model_name_or_path,
    torch_dtype=torch_dtype,
    low_cpu_mem_usage=True,
)
assistant_model.to(device)

# Init pipeline
pipe = pipeline(
    "automatic-speech-recognition",
    model=model,
    feature_extractor=processor.feature_extractor,
    tokenizer=processor.tokenizer,
    torch_dtype=torch_dtype,
    device=device,
    generate_kwargs={"assistant_model": assistant_model},
    max_new_tokens=128,
)

# Example audio
dataset = load_dataset("bofenghuang/asr-dummy", "fr", split="test")
sample = dataset[0]["audio"]

# Run pipeline
result = pipe(sample)
print(result["text"])
```
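The reason speculative decoding can return the same output as the main model alone is easier to see on a toy example. The sketch below is a simplified greedy variant, not the Transformers implementation: the two "models" are hypothetical functions mapping a token prefix to the next token, and the verification loop stands in for what a real model would do in a single batched forward pass.

```python
from typing import Callable, List

NextToken = Callable[[List[int]], int]  # hypothetical stand-in for a decoder step


def greedy_decode(model: NextToken, prefix: List[int], max_new_tokens: int) -> List[int]:
    tokens = list(prefix)
    for _ in range(max_new_tokens):
        tokens.append(model(tokens))
    return tokens


def speculative_decode(
    main: NextToken, draft: NextToken, prefix: List[int], max_new_tokens: int, num_draft: int = 4
) -> List[int]:
    tokens = list(prefix)
    target_len = len(prefix) + max_new_tokens
    while len(tokens) < target_len:
        # 1. The cheap draft model proposes a few tokens ahead.
        proposal: List[int] = []
        for _ in range(min(num_draft, target_len - len(tokens))):
            proposal.append(draft(tokens + proposal))
        # 2. The main model checks each proposed position in order.
        for i, tok in enumerate(proposal):
            expected = main(tokens + proposal[:i])
            if expected != tok:
                # Keep the agreed prefix and replace the first mismatch
                # with the main model's own token.
                proposal = proposal[:i] + [expected]
                break
        tokens.extend(proposal)
    return tokens


# Toy models: the draft agrees with the main model most of the time.
main_model = lambda t: (sum(t) * 31 + 7) % 50
draft_model = lambda t: main_model(t) if len(t) % 3 else (main_model(t) + 1) % 50

assert speculative_decode(main_model, draft_model, [1, 2], 20) == greedy_decode(main_model, [1, 2], 20)
```

Every token that is kept is one the main model would have produced itself, so the draft model only affects speed, never the result.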

### OpenAI Whisper

You can also employ the sequential long-form decoding algorithm with a sliding window and temperature fallback, as outlined by OpenAI in their original [paper](https://arxiv.org/abs/2212.04356).

First, install the [openai-whisper](https://github.com/openai/whisper) package:

```bash
pip install -U openai-whisper
```

Then, download the converted model:

```bash
python -c "from huggingface_hub import hf_hub_download; hf_hub_download(repo_id='bofenghuang/whisper-large-v3-french', filename='original_model.pt', local_dir='./models/whisper-large-v3-french')"
```

Now, you can transcribe audio files by following the usage instructions provided in the repository:

```python
import whisper
from datasets import load_dataset

# Load model
model = whisper.load_model("./models/whisper-large-v3-french/original_model.pt")

# Example audio
dataset = load_dataset("bofenghuang/asr-dummy", "fr", split="test")
sample = dataset[0]["audio"]["array"].astype("float32")

# Transcribe
result = model.transcribe(sample, language="fr")
print(result["text"])
```
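The temperature fallback mentioned above can be illustrated with a small sketch. It is not the openai-whisper code itself: `decode` is a hypothetical callable standing in for one decoding pass, and the default temperatures and thresholds are believed to match the defaults of `transcribe` but should be checked against the installed version.

```python
import zlib
from typing import Callable, Sequence, Tuple


def compression_ratio(text: str) -> float:
    """Raw size over zlib-compressed size; repetitive text scores high."""
    data = text.encode("utf-8")
    return len(data) / len(zlib.compress(data))


def decode_with_fallback(
    decode: Callable[[float], Tuple[str, float]],  # temperature -> (text, avg_logprob)
    temperatures: Sequence[float] = (0.0, 0.2, 0.4, 0.6, 0.8, 1.0),
    compression_ratio_threshold: float = 2.4,
    logprob_threshold: float = -1.0,
) -> Tuple[str, float]:
    """Retry at increasing temperatures until the output looks acceptable."""
    chosen = None
    for temperature in temperatures:
        text, avg_logprob = decode(temperature)
        chosen = (text, temperature)
        too_repetitive = compression_ratio(text) > compression_ratio_threshold
        too_unlikely = avg_logprob < logprob_threshold
        if not (too_repetitive or too_unlikely):
            break
    if chosen is None:
        raise ValueError("temperatures must not be empty")
    return chosen


# Hypothetical decoder: loops on one word at temperature 0, recovers afterwards.
def fake_decode(temperature: float) -> Tuple[str, float]:
    if temperature == 0.0:
        return ("merci " * 50, -0.2)
    return ("Bonjour, je vous appelle au sujet de ma facture.", -0.3)


print(decode_with_fallback(fake_decode))
```

The compression ratio is a cheap proxy for repetition loops, one of the typical failure modes of greedy decoding on long or noisy audio.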

### Faster Whisper

Faster Whisper is a reimplementation of OpenAI's Whisper models and the sequential long-form decoding algorithm in the [CTranslate2](https://github.com/OpenNMT/CTranslate2) format.

Compared to openai-whisper, it offers up to 4x faster inference speed, while consuming less memory. Additionally, the model can be quantized into int8, further enhancing its efficiency on both CPU and GPU.

First, install the [faster-whisper](https://github.com/SYSTRAN/faster-whisper) package:

```bash
pip install faster-whisper
```

Then, download the model converted to the CTranslate2 format:

```bash
python -c "from huggingface_hub import snapshot_download; snapshot_download(repo_id='bofenghuang/whisper-large-v3-french', local_dir='./models/whisper-large-v3-french', allow_patterns='ctranslate2/*')"
```

Now, you can transcribe audio files by following the usage instructions provided in the repository:

```python
from datasets import load_dataset
from faster_whisper import WhisperModel

# Load model
model = WhisperModel("./models/whisper-large-v3-french/ctranslate2", device="cuda", compute_type="float16")  # Run on GPU with FP16

# Example audio
dataset = load_dataset("bofenghuang/asr-dummy", "fr", split="test")
sample = dataset[0]["audio"]["array"].astype("float32")

segments, info = model.transcribe(sample, beam_size=5, language="fr")

for segment in segments:
    print("[%.2fs -> %.2fs] %s" % (segment.start, segment.end, segment.text))
```

### Whisper.cpp

Whisper.cpp is a reimplementation of OpenAI's Whisper models, crafted in plain C/C++ without any dependencies. It offers compatibility with various backends and platforms.

Additionally, the model can be quantized to either 4-bit or 5-bit integers, further enhancing its efficiency.

First, clone and build the [whisper.cpp](https://github.com/ggerganov/whisper.cpp) repository:

```bash
git clone https://github.com/ggerganov/whisper.cpp.git
cd whisper.cpp

# build the main example
make
```

Next, download the converted ggml weights from the Hugging Face Hub:

```bash
# Download model quantized with Q5_0 method
python -c "from huggingface_hub import hf_hub_download; hf_hub_download(repo_id='bofenghuang/whisper-large-v3-french', filename='ggml-model-q5_0.bin', local_dir='./models/whisper-large-v3-french')"
```

Now, you can transcribe an audio file using the following command:

```bash
./main -m ./models/whisper-large-v3-french/ggml-model-q5_0.bin -l fr -f /path/to/audio/file --print-colors
```

### Candle

[Candle-whisper](https://github.com/huggingface/candle/tree/main/candle-examples/examples/whisper) is a reimplementation of OpenAI's Whisper models in the candle format - a lightweight ML framework built in Rust.

First, clone the [candle](https://github.com/huggingface/candle) repository:

```bash
git clone https://github.com/huggingface/candle.git
cd candle/candle-examples/examples/whisper
```

Transcribe an audio file using the following command:

```bash
cargo run --example whisper --release -- --model large-v3 --model-id bofenghuang/whisper-large-v3-french --language fr --input /path/to/audio/file
```

To use CUDA, add `--features cuda` to the example command line:

```bash
cargo run --example whisper --release --features cuda -- --model large-v3 --model-id bofenghuang/whisper-large-v3-french --language fr --input /path/to/audio/file
```

### MLX

[MLX-Whisper](https://github.com/ml-explore/mlx-examples/tree/main/whisper) is a reimplementation of OpenAI's Whisper models in the [MLX](https://github.com/ml-explore/mlx) format - an ML framework for Apple silicon. It supports features like lazy computation, unified memory management, etc.

First, clone the [MLX Examples](https://github.com/ml-explore/mlx-examples) repository:

```bash
git clone https://github.com/ml-explore/mlx-examples.git
cd mlx-examples/whisper
```

Next, install the dependencies:

```bash
pip install -r requirements.txt
```

Download the PyTorch checkpoint in the original OpenAI format and convert it into MLX format (we haven't included the converted version here since the repository is already heavy and the conversion is very fast):

```bash
# Download
python -c "from huggingface_hub import hf_hub_download; hf_hub_download(repo_id='bofenghuang/whisper-large-v3-french', filename='original_model.pt', local_dir='./models/whisper-large-v3-french')"
# Convert into .npz
python convert.py --torch-name-or-path ./models/whisper-large-v3-french/original_model.pt --mlx-path ./mlx_models/whisper-large-v3-french
```

Now, you can transcribe audio with:

```python
import whisper

result = whisper.transcribe("/path/to/audio/file", path_or_hf_repo="mlx_models/whisper-large-v3-french", language="fr")
print(result["text"])
```

## Training details

We've collected a composite dataset consisting of over 2,500 hours of French speech recognition data, which includes datasets such as [Common Voice 13.0](https://huggingface.co/datasets/mozilla-foundation/common_voice_13_0), [Multilingual LibriSpeech](https://huggingface.co/datasets/facebook/multilingual_librispeech), [VoxPopuli](https://huggingface.co/datasets/facebook/voxpopuli), [Fleurs](https://huggingface.co/datasets/google/fleurs), [Multilingual TEDx](https://www.openslr.org/100/), [MediaSpeech](https://www.openslr.org/108/), [African Accented French](https://huggingface.co/datasets/gigant/african_accented_french), etc.

Given that some datasets, like MLS, only offer text without case or punctuation, we employed a customized version of 🤗 [Speechbox](https://github.com/huggingface/speechbox) to restore case and punctuation from a limited set of symbols using the [bofenghuang/whisper-large-v2-cv11-french](https://huggingface.co/bofenghuang/whisper-large-v2-cv11-french) model.

However, even within these datasets, we observed certain quality issues. These ranged from mismatches between audio and transcription in terms of language or content, to poorly segmented utterances, to missing words in scripted speech. We've built a pipeline to filter out many of these problematic utterances, aiming to enhance the dataset's quality. As a result, we excluded more than 10% of the data, and when we retrained the model, we noticed a significant reduction in hallucinations.

For training, we employed the [script](https://github.com/huggingface/transformers/blob/main/examples/pytorch/speech-recognition/run_speech_recognition_seq2seq.py) available in the 🤗 Transformers repository. The model training took place on the [Jean-Zay supercomputer](http://www.idris.fr/eng/jean-zay/jean-zay-presentation-eng.html) at GENCI, and we extend our gratitude to the IDRIS team for their responsive support throughout the project.

## Acknowledgements

- OpenAI for creating and open-sourcing the [Whisper model](https://arxiv.org/abs/2212.04356)
- 🤗 Hugging Face for integrating the Whisper model and providing the training codebase within the [Transformers](https://github.com/huggingface/transformers) repository
- [Genci](https://genci.fr/) for their generous contribution of GPU hours to this project

assets/whisper_fr_eval_long_form.png

ADDED

|

assets/whisper_fr_eval_short_form.png

ADDED

|