ruDALL-E Malevich (XL)

Generate images from text

"Avocado painting in the style of Malevich"


The model was trained by the Sber AI and SberDevices teams.

- Task: text2image generation

- Type: encoder-decoder

- Num Parameters: 1.3 B

- Training Data Volume: 120 million text-image pairs

Model Description

This is a 1.3-billion-parameter model for Russian that recreates OpenAI's DALL·E, a model capable of generating arbitrary images from a text prompt describing the desired result.

The generation pipeline includes ruDALL-E, ruCLIP for ranking the results, and a super-resolution model. To generate images from prompts written in other languages, you can first translate them into Russian automatically and then pass them to ruDALL-E.
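The three-stage pipeline described above (sample candidate images, rank them against the prompt, upscale the best ones) can be sketched as follows. This is a minimal, hypothetical illustration rather than the ruDALL-E library API: the model calls are passed in as plain Python callables (`generate`, `embed_text`, `embed_image`, `upscale`) that stand in for ruDALL-E, ruCLIP's text and image encoders, and the super-resolution model. Ranking is assumed to use cosine similarity between text and image embeddings, as CLIP-style models typically do.

```python
import math
from typing import Callable, List, Sequence, TypeVar

Image = TypeVar("Image")


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two vectors; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def text_to_image_pipeline(
    prompt: str,
    generate: Callable[[str, int], List[Image]],      # hypothetical stand-in for ruDALL-E
    embed_text: Callable[[str], Sequence[float]],     # hypothetical stand-in for ruCLIP text encoder
    embed_image: Callable[[Image], Sequence[float]],  # hypothetical stand-in for ruCLIP image encoder
    upscale: Callable[[Image], Image],                # hypothetical stand-in for super-resolution
    num_candidates: int = 8,
    top_k: int = 2,
) -> List[Image]:
    # Stage 1: sample several candidate images for the prompt.
    candidates = generate(prompt, num_candidates)
    # Stage 2: rank candidates by how well their embedding matches the prompt's.
    text_emb = embed_text(prompt)
    ranked = sorted(
        candidates,
        key=lambda img: cosine_similarity(text_emb, embed_image(img)),
        reverse=True,
    )
    # Stage 3: spend the (expensive) upscaling step only on the best candidates.
    return [upscale(img) for img in ranked[:top_k]]
```

Ranking before upscaling keeps the costly super-resolution step limited to the `top_k` images a user is likely to keep.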

How to Use

The easiest way to get familiar with the code and the models is to follow the inference notebook we provide in our GitHub repository.

Motivation

One might say that “investigate, master, and train” is our engineering motto. Following it, we have built from scratch a complete pipeline for generating images from descriptive text written in Russian.

Teams at Sber AI, SberDevices, Samara University, AIRI, and SberCloud all actively contributed.

We trained two versions of the model, each a different size, and named them after Russia’s great abstractionists: Vasily Kandinsky and Kazimir Malevich.

- ruDALL-E Kandinsky (XXL), with 12 billion parameters

- ruDALL-E Malevich (XL), having 1.3 billion parameters

Some of our models are already freely available:

- ruDALL-E Malevich (XL) [GitHub, HuggingFace]

- Sber VQ-GAN [GitHub, HuggingFace]

- ruCLIP Small [GitHub, HuggingFace]

- Super Resolution (Real ESRGAN) [GitHub, HuggingFace]

The latter two models are included in the pipeline for generating images from text (as you’ll see later on).

The models ruDALL-E Malevich (XL), ruDALL-E Kandinsky (XXL), ruCLIP Small, ruCLIP Large, and Super Resolution (Real ESRGAN) will also soon be available on DataHub.

Training the ruDALL-E neural networks on the Christofari cluster has become the largest computing task in Russia:

- ruDALL-E Kandinsky (XXL) was trained for 37 days on 512 Tesla V100 GPUs, then for 11 more days on 128 Tesla V100 GPUs, for a total of 20,352 GPU-days;

- ruDALL-E Malevich (XL) was trained for 8 days on 128 Tesla V100 GPUs, then for 15 more days on 192 Tesla V100 GPUs, for a total of 3,904 GPU-days.

Accordingly, training for both models totalled 24,256 GPU-days.
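The GPU-day totals above follow from multiplying each training stage's duration by the number of GPUs used and summing over stages. A small check of that arithmetic (the `gpu_days` helper is purely illustrative):

```python
# (days, number of GPUs) for each training stage, as reported above.
kandinsky_stages = [(37, 512), (11, 128)]
malevich_stages = [(8, 128), (15, 192)]


def gpu_days(stages):
    """Total GPU-days: sum of days * GPUs over all training stages."""
    return sum(days * gpus for days, gpus in stages)


kandinsky = gpu_days(kandinsky_stages)  # 18,944 + 1,408
malevich = gpu_days(malevich_stages)    # 1,024 + 2,880
print(kandinsky, malevich, kandinsky + malevich)  # prints: 20352 3904 24256
```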

Model capabilities

The long-term goal of this research is to create multimodal neural networks: networks that can draw on concepts from several modalities, starting with text and images, in order to better understand the world as a whole.

Image generation might seem like the wrong rabbit hole in our century of big data and search engines. But it actually addresses two important needs that search currently cannot meet:

- Being able to describe in writing exactly what you’re looking for and getting a completely new image created personally for you.

- Being able to create as many license-free illustrations as you want, whenever you want.

"Grand Canyon"

"Salvador Dali picture"

"An eagle sits in a tree, looking to the side"

"Elegant living room with green stuffed chairs"

“Raccoon with a gun”

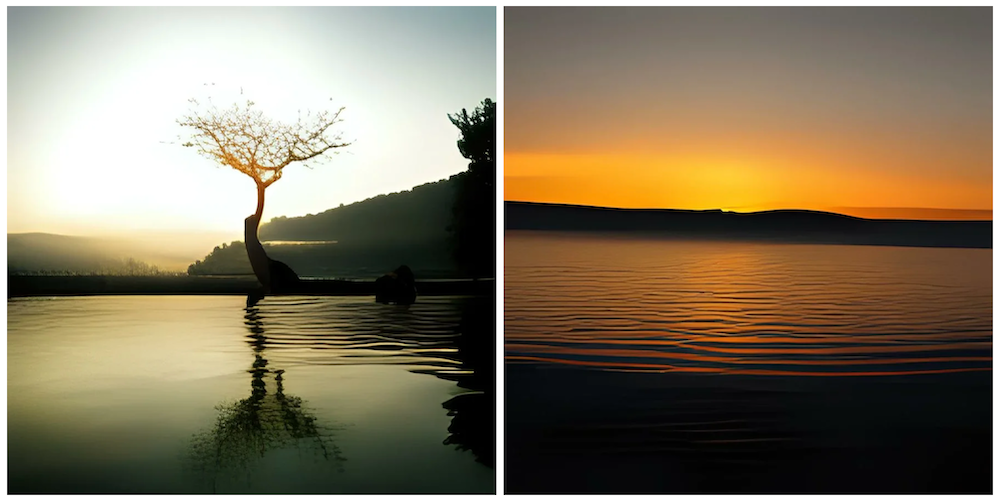

“Pretty lake at sunset”