---
library_name: transformers
tags:
- image-captioning
- visual-question-answering
license: apache-2.0
datasets:
- X2FD/LVIS-Instruct4V
- BAAI/SVIT
- HuggingFaceH4/ultrachat_200k
- MMInstruction/VLFeedback
- zhiqings/LLaVA-Human-Preference-10K
language:
- en
pipeline_tag: image-to-text
widget:
- src: interior.jpg
  example_title: Detailed caption
  output:
    text: >-
      The image shows a serene and well-lit bedroom with a white bed, a black
      bed frame, and a white comforter. There’s a gray armchair with a white
      cushion, a black dresser with a mirror and a vase, and a white rug on
      the floor. The room has a large window with white curtains, and there
      are several decorative items, including a picture frame, a vase with a
      flower, and a lamp. The room is well-organized and has a calming
      atmosphere.
- src: cat.jpg
  example_title: Short caption
  output:
    text: >-
      A white and orange cat stands on its hind legs, reaching towards a
      wooden table with a white teapot and a basket of red raspberries. The
      table is on a small wooden bench, surrounded by orange flowers. The
      cat’s position and action create a serene, playful scene in a garden.
---

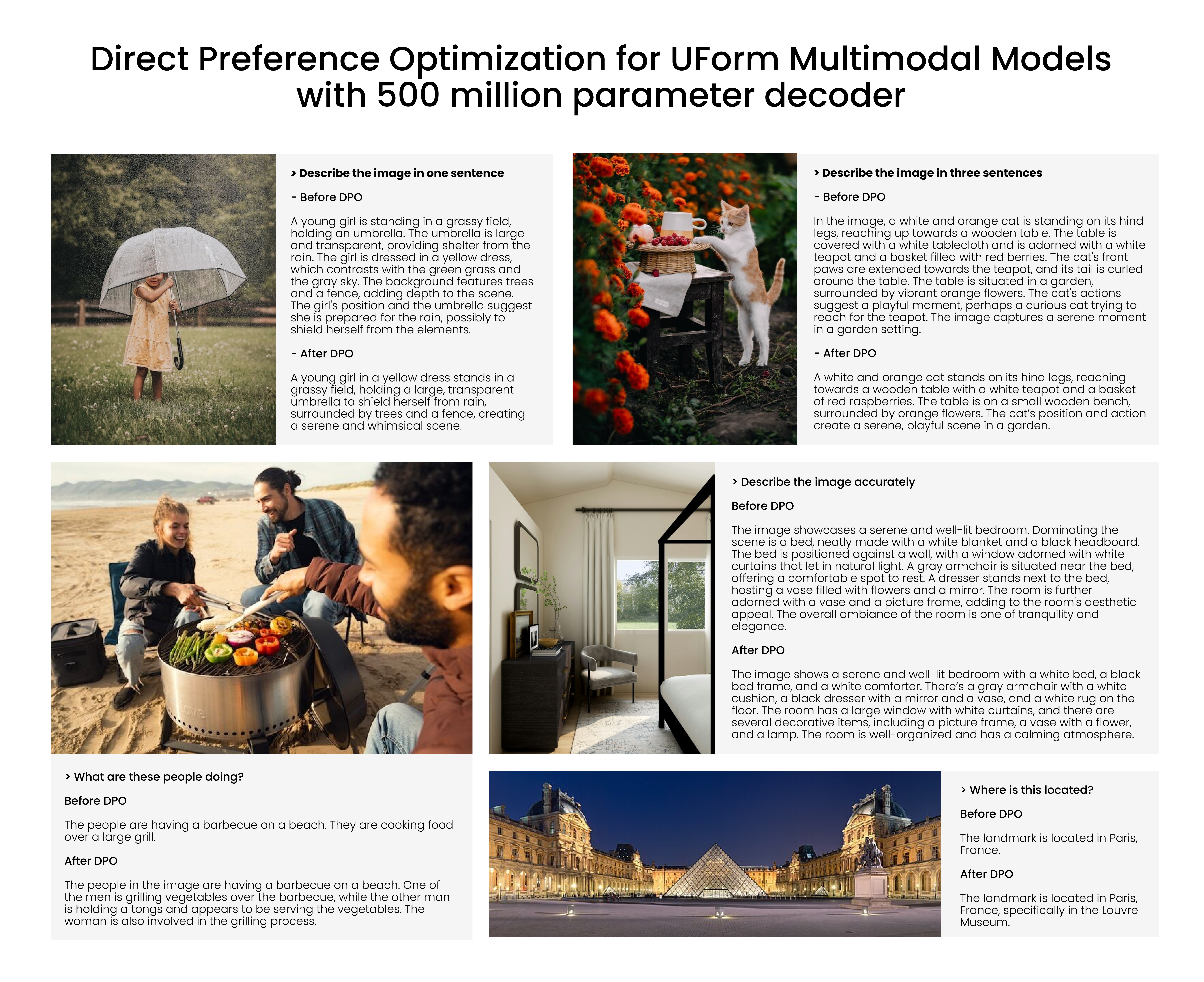

Description

UForm-Gen2-dpo is a small generative vision-language model aligned for Image Captioning and Visual Question Answering. It was aligned with Direct Preference Optimization (DPO) on two preference datasets, VLFeedback and LLaVA-Human-Preference-10K.

The model consists of two parts:

- CLIP-like ViT-H/14

- Qwen1.5-0.5B-Chat

The model took less than one day to train on a DGX-H100 with 8x H100 GPUs. Thanks to Nebius.ai for providing the compute 🤗
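For readers unfamiliar with DPO, the objective mentioned above can be sketched for a single preference pair. This is only an illustration of the standard DPO loss, not the training code used for this model: the function name `dpo_loss`, the toy log-probabilities, and `beta=0.1` are assumptions chosen for the example.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Standard DPO loss for one (chosen, rejected) answer pair.

    Inputs are sequence log-probabilities under the trained policy and
    under the frozen reference model. beta is an assumed value here.
    """
    # Implicit rewards: how far the policy has moved from the reference
    chosen_reward = beta * (policy_chosen_logp - ref_chosen_logp)
    rejected_reward = beta * (policy_rejected_logp - ref_rejected_logp)
    margin = chosen_reward - rejected_reward
    # -log(sigmoid(margin)), computed as a numerically stable softplus(-margin)
    x = -margin
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


# Toy numbers: the policy prefers the chosen answer more than the reference does
print(dpo_loss(-10.0, -12.0, -11.0, -11.0))
```

The loss equals `log(2)` when the policy and the reference agree, and drops below that as the policy assigns relatively more probability to the preferred answer.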

Usage

The generative model can be used to caption images and answer questions about them. It is also suitable for multimodal chat.

import torch
from PIL import Image
from transformers import AutoModel, AutoProcessor

# trust_remote_code=True loads the custom model and processor code from the model repository
model = AutoModel.from_pretrained("unum-cloud/uform-gen2-dpo", trust_remote_code=True)
processor = AutoProcessor.from_pretrained("unum-cloud/uform-gen2-dpo", trust_remote_code=True)

prompt = "Question or Instruction"
image = Image.open("image.jpg")

inputs = processor(text=[prompt], images=[image], return_tensors="pt")

with torch.inference_mode():
    output = model.generate(
        **inputs,
        do_sample=False,  # greedy decoding
        use_cache=True,
        max_new_tokens=256,
        eos_token_id=151645,
        pad_token_id=processor.tokenizer.pad_token_id
    )

# Drop the prompt tokens and decode only the newly generated text
prompt_len = inputs["input_ids"].shape[1]
decoded_text = processor.batch_decode(output[:, prompt_len:])[0]

You can check examples of different prompts in our demo space.
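The last two lines of the usage snippet slice `output[:, prompt_len:]` because the generated sequence starts with the prompt tokens. The same post-processing can be sketched on plain Python lists, with an extra step that cuts each answer at the first end-of-sequence token. The helper name `extract_new_tokens` and the toy token ids are hypothetical; only `eos_token_id=151645` comes from the call above.

```python
def extract_new_tokens(sequences, prompt_len, eos_token_id=151645):
    """Drop the prompt prefix from each sequence and cut at the first EOS token."""
    results = []
    for seq in sequences:
        new_tokens = list(seq[prompt_len:])
        if eos_token_id in new_tokens:
            # Keep only what was generated before the end-of-sequence marker
            new_tokens = new_tokens[:new_tokens.index(eos_token_id)]
        results.append(new_tokens)
    return results


# Toy batch: 3 prompt tokens, 2 generated tokens, EOS, then padding
generated = [[11, 12, 13, 501, 502, 151645, 0, 0]]
print(extract_new_tokens(generated, prompt_len=3))
```

With real model output, convert the tensor to lists first (for example with `output.tolist()`) and pass the remaining ids to the processor's decode method.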

Evaluation


MME Benchmark

| Model | perception | reasoning | OCR | artwork | celebrity | code_reasoning | color | commonsense_reasoning | count | existence | landmark | numerical_calculation | position | posters | scene | text_translation |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| uform-gen2-dpo | 1,048.75 | 224.64 | 72.50 | 97.25 | 62.65 | 67.50 | 123.33 | 57.14 | 136.67 | 195.00 | 104.00 | 50.00 | 51.67 | 59.18 | 146.50 | 50.00 |
| uform-gen2-qwen-500m | 863.40 | 236.43 | 57.50 | 93.00 | 67.06 | 57.50 | 78.33 | 81.43 | 53.33 | 150.00 | 98.00 | 50.00 | 50.00 | 62.93 | 153.25 | 47.50 |
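The `perception` and `reasoning` columns appear to be sums of the per-task columns: ten perception tasks and four reasoning tasks, following the usual MME grouping. The sketch below recomputes both aggregates from the table values and compares the two models. The task grouping and the helper names `aggregate` and `relative_change` are assumptions made for this example.

```python
PERCEPTION = ["existence", "count", "position", "color", "posters",
              "celebrity", "scene", "landmark", "artwork", "OCR"]
REASONING = ["commonsense_reasoning", "numerical_calculation",
             "text_translation", "code_reasoning"]

# Per-task scores copied from the table above
scores = {
    "uform-gen2-dpo": {
        "OCR": 72.50, "artwork": 97.25, "celebrity": 62.65,
        "code_reasoning": 67.50, "color": 123.33,
        "commonsense_reasoning": 57.14, "count": 136.67,
        "existence": 195.00, "landmark": 104.00,
        "numerical_calculation": 50.00, "position": 51.67,
        "posters": 59.18, "scene": 146.50, "text_translation": 50.00,
    },
    "uform-gen2-qwen-500m": {
        "OCR": 57.50, "artwork": 93.00, "celebrity": 67.06,
        "code_reasoning": 57.50, "color": 78.33,
        "commonsense_reasoning": 81.43, "count": 53.33,
        "existence": 150.00, "landmark": 98.00,
        "numerical_calculation": 50.00, "position": 50.00,
        "posters": 62.93, "scene": 153.25, "text_translation": 47.50,
    },
}


def aggregate(model, tasks):
    """Sum the per-task scores of one model over a task group."""
    return round(sum(scores[model][task] for task in tasks), 2)


def relative_change(new, old):
    """Percentage change of `new` relative to `old`."""
    return 100.0 * (new - old) / old


for name in scores:
    print(name, aggregate(name, PERCEPTION), aggregate(name, REASONING))

for label, tasks in (("perception", PERCEPTION), ("reasoning", REASONING)):
    change = relative_change(aggregate("uform-gen2-dpo", tasks),
                             aggregate("uform-gen2-qwen-500m", tasks))
    print(f"{label}: {change:+.1f}%")
```

On these numbers, uform-gen2-dpo scores roughly 21% higher on perception and roughly 5% lower on reasoning than uform-gen2-qwen-500m.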