commit from `quarto publish`

- src/.quarto/idx/index.qmd.json +1 -1

- src/.quarto/idx/notebooks/advanced_rag.qmd.json +0 -1

- src/.quarto/idx/notebooks/automatic_embedding.ipynb.json +0 -1

- src/.quarto/idx/notebooks/faiss.ipynb.json +0 -1

- src/.quarto/idx/notebooks/rag_evaluation.qmd.json +0 -1

- src/.quarto/idx/notebooks/rag_zephyr_langchain.qmd.json +0 -1

- src/.quarto/idx/notebooks/single_gpu.ipynb.json +0 -1

- src/.quarto/idx/presentation.qmd.json +0 -1

- src/_site/index.html +39 -23

- src/_site/media/PMA.png +0 -0

- src/_site/media/PMR.png +0 -0

- src/_site/media/kl_mem.png +0 -0

- src/_site/media/kl_speed.png +0 -0

- src/_site/media/qr.png +0 -0

- src/_site/search.json +6 -6

- src/index.qmd +20 -7

- src/media/PMA.png +0 -0

- src/media/PMR.png +0 -0

- src/media/qr.png +0 -0

src/.quarto/idx/index.qmd.json

CHANGED

|

@@ -1 +1 @@

|

|

| 1 |

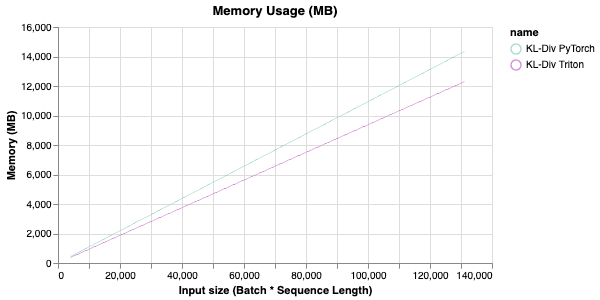

-

{"title":"Optimizing LLM Performance Using Triton","markdown":{"yaml":{"title":"Optimizing LLM Performance Using Triton","format":{"revealjs":{"theme":"dark","transition":"slide","slide-number":true}},"author":"Matej Sirovatka","date":"today"},"headingText":"`whoami`","containsRefs":false,"markdown":"\n\n\n- My name is Matej\n- I'm a Master's student at the Brno University of Technology\n- I currently make GPUs go `brrrrrr` at Hugging Face 🤗\n\n## `What is Triton?`\n\n- NVIDIA's open-source programming language for GPU kernels\n- Designed for AI/ML workloads\n- Simplifies GPU programming compared to CUDA\n\n{.center fig-align=\"center\"}\n\n## `Why Optimize with Triton?`\n\n- Simple yet effective\n- Less headache than CUDA\n- GPUs go `brrrrrrr` 🚀\n- Feel cool when your kernel is faster than PyTorch 😎\n\n## `Example Problem: KL Divergence`\n\n- commonly used in LLMs for knowledge distillation\n- for probability distributions $P$ and $Q$, the Kullback-Leibler divergence is defined as:\n\n$$\nD_{KL}(P \\| Q) = \\sum_{i} P_i \\log\\left(\\frac{P_i}{Q_i}\\right)\n$$\n\n\n```python\nimport torch\nfrom torch.nn.functional import kl_div\n\ndef kl_div_torch(p: torch.Tensor, q: torch.Tensor) -> torch.Tensor:\n return kl_div(p, q, reduction='none')\n```\n\n## `How about Triton?`\n\n```python\nimport triton.language as tl\n\n@triton.jit\ndef kl_div_triton(\n p_ptr, q_ptr, output_ptr, n_elements, BLOCK_SIZE: tl.constexpr\n):\n pid = tl.program_id(0)\n block_start = pid * BLOCK_SIZE\n offsets = block_start + tl.arange(0, BLOCK_SIZE)\n mask = offsets < n_elements\n \n p = tl.load(p_ptr + offsets, mask=mask)\n q = tl.load(q_ptr + offsets, mask=mask)\n \n output = p * (tl.log(p) - tl.log(q))\n \n tl.store(output_ptr + offsets, output, mask=mask)\n```\n\n## `How to integrate with PyTorch?`\n\n- Triton works with pointers\n- How to use our custom kernel with PyTorch autograd?\n\n```python\nimport torch\n\nclass VectorAdd(torch.autograd.Function):\n @staticmethod\n def forward(ctx, p, 
q):\n ctx.save_for_backward(q)\n output = torch.empty_like(p)\n grid = (len(p) + 512 - 1) // 512\n kl_div_triton[grid](p, q, output, len(p), BLOCK_SIZE=512)\n return output\n\n @staticmethod\n def backward(ctx, grad_output):\n q = ctx.saved_tensors[0]\n # Calculate gradients (another triton kernel)\n return ...\n```\n\n## `Some benchmarks`\n\n- A KL Divergence kernel that is currently used in [Liger Kernel](https://github.com/linkedin/liger-kernel) written by @me\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::::\n\n## `Do I have to write everything?`\n\n- TLDR: No\n- Many cool projects already using Triton\n- Better Integration with PyTorch and even Hugging Face 🤗\n- Liger Kernel, Unsloth AI, etc.\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::::\n\n\n## `So how can I use this in my LLM? 
🚀`\n\n- Liger Kernel is a great example, providing examples of how to integrate with Hugging Face 🤗 Trainer\n\n```diff\n- from transformers import AutoModelForCausalLM\n+ from liger_kernel.transformers import AutoLigerKernelForCausalLM\n\nmodel_path = \"meta-llama/Meta-Llama-3-8B-Instruct\"\n\n- model = AutoModelForCausalLM.from_pretrained(model_path)\n+ model = AutoLigerKernelForCausalLM.from_pretrained(model_path)\n\n# training/inference logic...\n```\n## `Key Optimization Techniques adapted by Liger Kernel`\n\n- Kernel Fusion\n- Domain-specific optimizations\n- Memory Access Patterns\n- Preemptive memory freeing\n \n\n## `Aaand some more benchmarks 🚀`\n\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{fig-align=\"center\"}\n\n:::\n\n::::\n\n## `Last benchmark I promise...`\n\n\n{height=\"50%\" width=\"50%\" }\n\n::: {.incremental}\n*Attention is all you need, so I thank you for yours!* 🤗\n:::\n\n","srcMarkdownNoYaml":"\n\n## `whoami`\n\n- My name is Matej\n- I'm a Master's student at the Brno University of Technology\n- I currently make GPUs go `brrrrrr` at Hugging Face 🤗\n\n## `What is Triton?`\n\n- NVIDIA's open-source programming language for GPU kernels\n- Designed for AI/ML workloads\n- Simplifies GPU programming compared to CUDA\n\n{.center fig-align=\"center\"}\n\n## `Why Optimize with Triton?`\n\n- Simple yet effective\n- Less headache than CUDA\n- GPUs go `brrrrrrr` 🚀\n- Feel cool when your kernel is faster than PyTorch 😎\n\n## `Example Problem: KL Divergence`\n\n- commonly used in LLMs for knowledge distillation\n- for probability distributions $P$ and $Q$, the Kullback-Leibler divergence is defined as:\n\n$$\nD_{KL}(P \\| Q) = \\sum_{i} P_i \\log\\left(\\frac{P_i}{Q_i}\\right)\n$$\n\n\n```python\nimport torch\nfrom torch.nn.functional import kl_div\n\ndef kl_div_torch(p: torch.Tensor, q: torch.Tensor) -> torch.Tensor:\n return kl_div(p, q, reduction='none')\n```\n\n## `How about 
Triton?`\n\n```python\nimport triton.language as tl\n\n@triton.jit\ndef kl_div_triton(\n p_ptr, q_ptr, output_ptr, n_elements, BLOCK_SIZE: tl.constexpr\n):\n pid = tl.program_id(0)\n block_start = pid * BLOCK_SIZE\n offsets = block_start + tl.arange(0, BLOCK_SIZE)\n mask = offsets < n_elements\n \n p = tl.load(p_ptr + offsets, mask=mask)\n q = tl.load(q_ptr + offsets, mask=mask)\n \n output = p * (tl.log(p) - tl.log(q))\n \n tl.store(output_ptr + offsets, output, mask=mask)\n```\n\n## `How to integrate with PyTorch?`\n\n- Triton works with pointers\n- How to use our custom kernel with PyTorch autograd?\n\n```python\nimport torch\n\nclass VectorAdd(torch.autograd.Function):\n @staticmethod\n def forward(ctx, p, q):\n ctx.save_for_backward(q)\n output = torch.empty_like(p)\n grid = (len(p) + 512 - 1) // 512\n kl_div_triton[grid](p, q, output, len(p), BLOCK_SIZE=512)\n return output\n\n @staticmethod\n def backward(ctx, grad_output):\n q = ctx.saved_tensors[0]\n # Calculate gradients (another triton kernel)\n return ...\n```\n\n## `Some benchmarks`\n\n- A KL Divergence kernel that is currently used in [Liger Kernel](https://github.com/linkedin/liger-kernel) written by @me\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::::\n\n## `Do I have to write everything?`\n\n- TLDR: No\n- Many cool projects already using Triton\n- Better Integration with PyTorch and even Hugging Face 🤗\n- Liger Kernel, Unsloth AI, etc.\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::::\n\n\n## `So how can I use this in my LLM? 
🚀`\n\n- Liger Kernel is a great example, providing examples of how to integrate with Hugging Face 🤗 Trainer\n\n```diff\n- from transformers import AutoModelForCausalLM\n+ from liger_kernel.transformers import AutoLigerKernelForCausalLM\n\nmodel_path = \"meta-llama/Meta-Llama-3-8B-Instruct\"\n\n- model = AutoModelForCausalLM.from_pretrained(model_path)\n+ model = AutoLigerKernelForCausalLM.from_pretrained(model_path)\n\n# training/inference logic...\n```\n## `Key Optimization Techniques adapted by Liger Kernel`\n\n- Kernel Fusion\n- Domain-specific optimizations\n- Memory Access Patterns\n- Preemptive memory freeing\n \n\n## `Aaand some more benchmarks 🚀`\n\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{fig-align=\"center\"}\n\n:::\n\n::::\n\n## `Last benchmark I promise...`\n\n\n{height=\"50%\" width=\"50%\" }\n\n::: {.incremental}\n*Attention is all you need, so I thank you for yours!* 🤗\n:::\n\n"},"formats":{"revealjs":{"identifier":{"display-name":"RevealJS","target-format":"revealjs","base-format":"revealjs"},"execute":{"fig-width":10,"fig-height":5,"fig-format":"retina","fig-dpi":96,"df-print":"default","error":false,"eval":true,"cache":null,"freeze":false,"echo":false,"output":true,"warning":false,"include":true,"keep-md":false,"keep-ipynb":false,"ipynb":null,"enabled":null,"daemon":null,"daemon-restart":false,"debug":false,"ipynb-filters":[],"ipynb-shell-interactivity":null,"plotly-connected":true,"engine":"markdown"},"render":{"keep-tex":false,"keep-typ":false,"keep-source":false,"keep-hidden":false,"prefer-html":false,"output-divs":true,"output-ext":"html","fig-align":"default","fig-pos":null,"fig-env":null,"code-fold":"none","code-overflow":"scroll","code-link":false,"code-line-numbers":true,"code-tools":false,"tbl-colwidths":"auto","merge-includes":true,"inline-includes":false,"preserve-yaml":false,"latex-auto-mk":true,"latex-auto-install":true,"latex-clean":true,"latex-min-runs":1,"la
tex-max-runs":10,"latex-makeindex":"makeindex","latex-makeindex-opts":[],"latex-tlmgr-opts":[],"latex-input-paths":[],"latex-output-dir":null,"link-external-icon":false,"link-external-newwindow":false,"self-contained-math":false,"format-resources":[]},"pandoc":{"standalone":true,"wrap":"none","default-image-extension":"png","html-math-method":{"method":"mathjax","url":"https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.9/MathJax.js?config=TeX-AMS_HTML-full"},"slide-level":2,"to":"revealjs","output-file":"index.html"},"language":{"toc-title-document":"Table of contents","toc-title-website":"On this page","related-formats-title":"Other Formats","related-notebooks-title":"Notebooks","source-notebooks-prefix":"Source","other-links-title":"Other Links","code-links-title":"Code Links","launch-dev-container-title":"Launch Dev Container","launch-binder-title":"Launch Binder","article-notebook-label":"Article Notebook","notebook-preview-download":"Download Notebook","notebook-preview-download-src":"Download Source","notebook-preview-back":"Back to Article","manuscript-meca-bundle":"MECA Bundle","section-title-abstract":"Abstract","section-title-appendices":"Appendices","section-title-footnotes":"Footnotes","section-title-references":"References","section-title-reuse":"Reuse","section-title-copyright":"Copyright","section-title-citation":"Citation","appendix-attribution-cite-as":"For attribution, please cite this work as:","appendix-attribution-bibtex":"BibTeX citation:","appendix-view-license":"View 
License","title-block-author-single":"Author","title-block-author-plural":"Authors","title-block-affiliation-single":"Affiliation","title-block-affiliation-plural":"Affiliations","title-block-published":"Published","title-block-modified":"Modified","title-block-keywords":"Keywords","callout-tip-title":"Tip","callout-note-title":"Note","callout-warning-title":"Warning","callout-important-title":"Important","callout-caution-title":"Caution","code-summary":"Code","code-tools-menu-caption":"Code","code-tools-show-all-code":"Show All Code","code-tools-hide-all-code":"Hide All Code","code-tools-view-source":"View Source","code-tools-source-code":"Source Code","tools-share":"Share","tools-download":"Download","code-line":"Line","code-lines":"Lines","copy-button-tooltip":"Copy to Clipboard","copy-button-tooltip-success":"Copied!","repo-action-links-edit":"Edit this page","repo-action-links-source":"View source","repo-action-links-issue":"Report an issue","back-to-top":"Back to top","search-no-results-text":"No results","search-matching-documents-text":"matching documents","search-copy-link-title":"Copy link to search","search-hide-matches-text":"Hide additional matches","search-more-match-text":"more match in this document","search-more-matches-text":"more matches in this document","search-clear-button-title":"Clear","search-text-placeholder":"","search-detached-cancel-button-title":"Cancel","search-submit-button-title":"Submit","search-label":"Search","toggle-section":"Toggle section","toggle-sidebar":"Toggle sidebar navigation","toggle-dark-mode":"Toggle dark mode","toggle-reader-mode":"Toggle reader mode","toggle-navigation":"Toggle 
navigation","crossref-fig-title":"Figure","crossref-tbl-title":"Table","crossref-lst-title":"Listing","crossref-thm-title":"Theorem","crossref-lem-title":"Lemma","crossref-cor-title":"Corollary","crossref-prp-title":"Proposition","crossref-cnj-title":"Conjecture","crossref-def-title":"Definition","crossref-exm-title":"Example","crossref-exr-title":"Exercise","crossref-ch-prefix":"Chapter","crossref-apx-prefix":"Appendix","crossref-sec-prefix":"Section","crossref-eq-prefix":"Equation","crossref-lof-title":"List of Figures","crossref-lot-title":"List of Tables","crossref-lol-title":"List of Listings","environment-proof-title":"Proof","environment-remark-title":"Remark","environment-solution-title":"Solution","listing-page-order-by":"Order By","listing-page-order-by-default":"Default","listing-page-order-by-date-asc":"Oldest","listing-page-order-by-date-desc":"Newest","listing-page-order-by-number-desc":"High to Low","listing-page-order-by-number-asc":"Low to High","listing-page-field-date":"Date","listing-page-field-title":"Title","listing-page-field-description":"Description","listing-page-field-author":"Author","listing-page-field-filename":"File Name","listing-page-field-filemodified":"Modified","listing-page-field-subtitle":"Subtitle","listing-page-field-readingtime":"Reading Time","listing-page-field-wordcount":"Word Count","listing-page-field-categories":"Categories","listing-page-minutes-compact":"{0} min","listing-page-category-all":"All","listing-page-no-matches":"No matching items","listing-page-words":"{0} words","listing-page-filter":"Filter","draft":"Draft"},"metadata":{"lang":"en","fig-responsive":false,"quarto-version":"1.6.40","auto-stretch":true,"title":"Optimizing LLM Performance Using Triton","author":"Matej Sirovatka","date":"today","theme":"dark","transition":"slide","slideNumber":true}}},"projectFormats":["html"]}
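Both versions of the KL divergence slide define $D_{KL}(P \| Q) = \sum_i P_i \log(P_i / Q_i)$ and then call `torch.nn.functional.kl_div(p, q)`. PyTorch documents `kl_div(input, target)` as expecting `input` in log space and computing `target * (log(target) - input)` pointwise, so $D_{KL}(P \| Q)$ corresponds to `kl_div(q.log(), p, reduction="sum")` rather than `kl_div(p, q)`. The dependency-free sketch below checks that correspondence on plain Python lists; `kl_div_reference` and `torch_style_kl_div` are hypothetical helper names that only mirror the documented pointwise formula, not PyTorch's implementation.

```python
import math
from typing import List, Sequence


def kl_div_reference(p: Sequence[float], q: Sequence[float]) -> float:
    """D_KL(P || Q) = sum_i P_i * log(P_i / Q_i), with the convention 0 * log 0 = 0."""
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    total = 0.0
    for p_i, q_i in zip(p, q):
        if p_i == 0.0:
            continue  # zero-probability terms contribute nothing
        total += p_i * math.log(p_i / q_i)
    return total


def torch_style_kl_div(log_input: Sequence[float], target: Sequence[float]) -> List[float]:
    """Pointwise target * (log(target) - input): the formula PyTorch documents for
    torch.nn.functional.kl_div with reduction='none', where input is in log space."""
    return [0.0 if t == 0.0 else t * (math.log(t) - x) for x, t in zip(log_input, target)]


p = [0.5, 0.25, 0.25]
q = [0.25, 0.5, 0.25]

direct = kl_div_reference(p, q)
# D_KL(P || Q) via the PyTorch convention: input = log(Q), target = P, then sum.
via_torch_formula = sum(torch_style_kl_div([math.log(v) for v in q], p))
print(round(direct, 6), round(via_torch_formula, 6))  # → 0.173287 0.173287
```

The explicit `p_i == 0` branch is the design choice worth noting: the slide's Triton kernel evaluates `p * (tl.log(p) - tl.log(q))` directly, which produces NaN for zero entries of `p` (0 * -inf).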

|

|

|

|

| 1 |

+

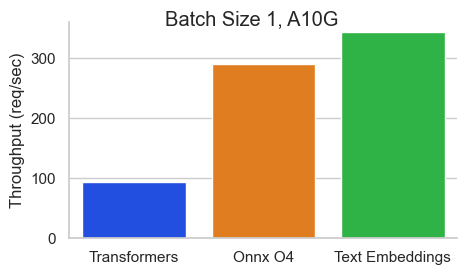

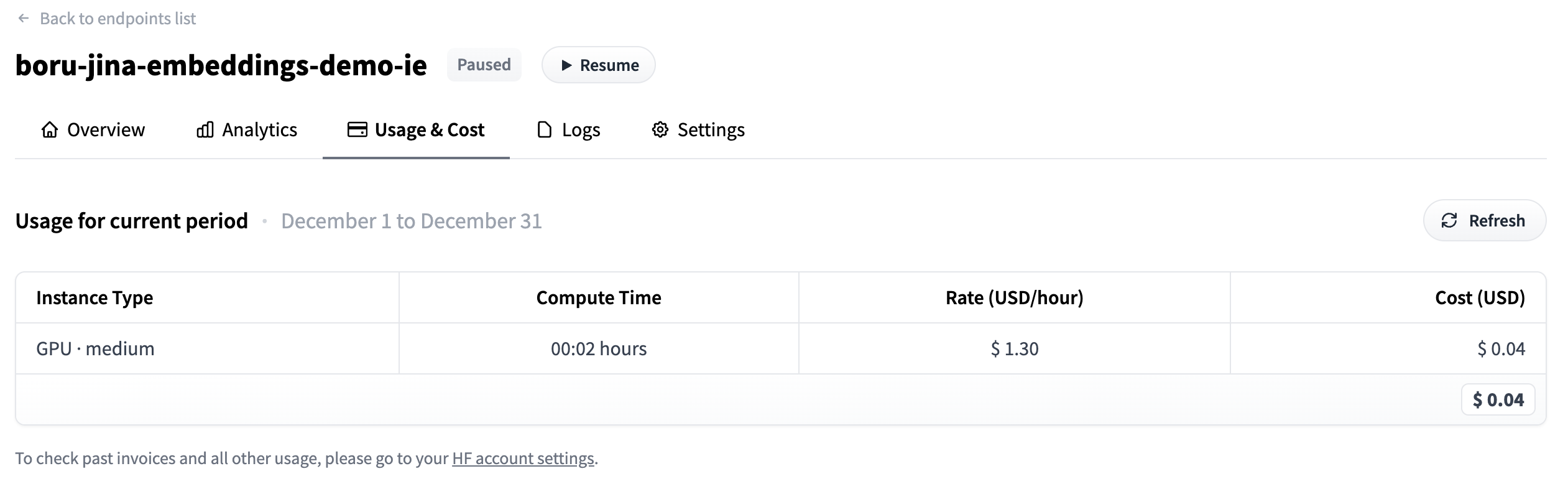

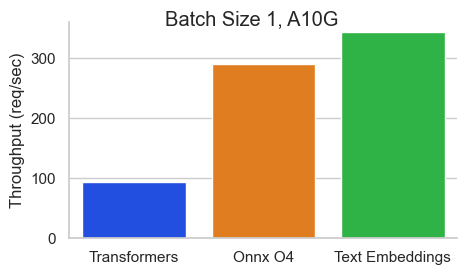

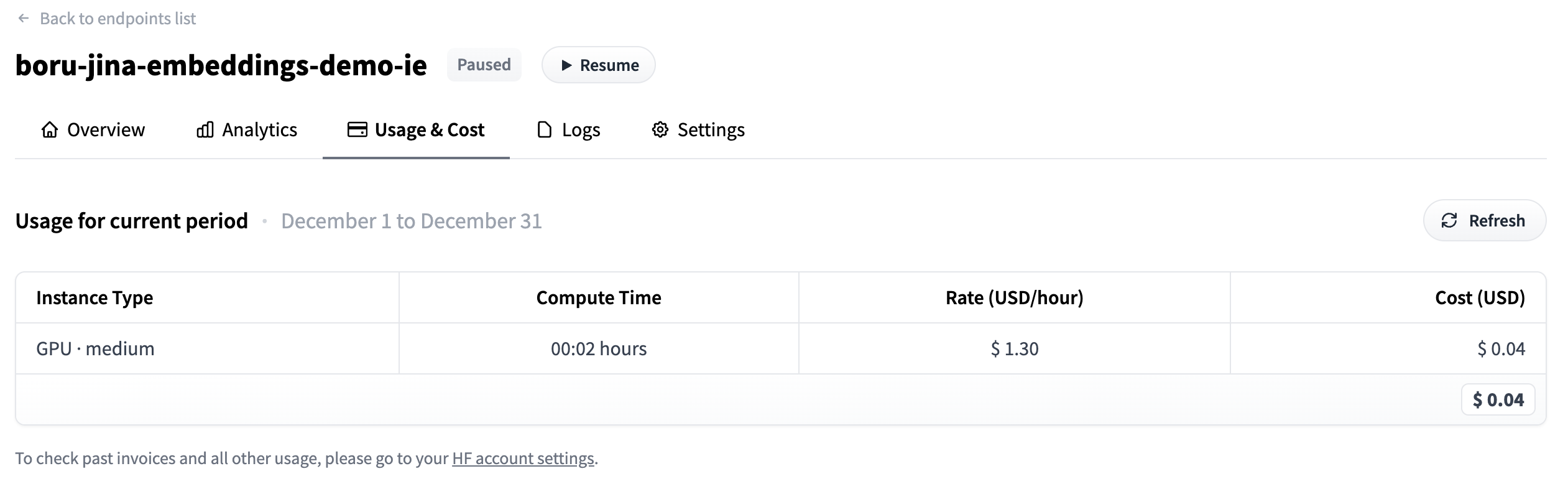

{"title":"Optimizing LLM Performance Using Triton","markdown":{"yaml":{"title":"Optimizing LLM Performance Using Triton","format":{"revealjs":{"theme":"dark","transition":"slide","slide-number":true}},"author":"Matej Sirovatka","date":"2025-02-22"},"headingText":"`whoami`","containsRefs":false,"markdown":"\n\n\n- My name is Matej\n- I'm a Master's student at the Brno University of Technology\n- I'm currently working on distributed training at Hugging Face 🤗\n\n## `What is Triton?`\n\n- NVIDIA's open-source programming language for GPU kernels\n- Designed for AI/ML workloads\n- Simplifies GPU programming compared to CUDA\n\n{.center fig-align=\"center\"}\n\n## `Why Optimize with Triton?`\n\n- Simple yet effective\n- Less headache than CUDA\n- GPUs go `brrrrrrr` 🚀\n- Feel cool when your kernel is faster than PyTorch 😎\n\n## `Example Problem: KL Divergence`\n\n- commonly used in LLMs for knowledge distillation\n- for probability distributions $P$ and $Q$, the Kullback-Leibler divergence is defined as:\n\n$$\nD_{KL}(P \\| Q) = \\sum_{i} P_i \\log\\left(\\frac{P_i}{Q_i}\\right)\n$$\n\n\n```python\nimport torch\nfrom torch.nn.functional import kl_div\n\ndef kl_div_torch(p: torch.Tensor, q: torch.Tensor) -> torch.Tensor:\n return kl_div(p, q)\n```\n\n## `How about Triton?`\n\n```python\nimport triton\nimport triton.language as tl\n\n@triton.jit\ndef kl_div_triton(\n p_ptr, q_ptr, output_ptr, n_elements, BLOCK_SIZE: tl.constexpr\n):\n pid = tl.program_id(0)\n block_start = pid * BLOCK_SIZE\n offsets = block_start + tl.arange(0, BLOCK_SIZE)\n mask = offsets < n_elements\n \n p = tl.load(p_ptr + offsets, mask=mask)\n q = tl.load(q_ptr + offsets, mask=mask)\n \n output = p * (tl.log(p) - tl.log(q))\n tl.store(output_ptr + offsets, output, mask=mask)\n```\n\n## `How to integrate with PyTorch?`\n\n- How to use our custom kernel with PyTorch autograd?\n\n```python\nimport torch\n\nclass VectorAdd(torch.autograd.Function):\n @staticmethod\n def forward(ctx, p, q):\n 
ctx.save_for_backward(q)\n output = torch.empty_like(p)\n grid = (len(p) + 512 - 1) // 512\n kl_div_triton[grid](p, q, output, len(p), BLOCK_SIZE=512)\n return output\n\n @staticmethod\n def backward(ctx, grad_output):\n q = ctx.saved_tensors[0]\n # Calculate gradients (another triton kernel)\n return ...\n```\n\n## `Some benchmarks`\n\n- A KL Divergence kernel that is currently used in [Liger Kernel](https://github.com/linkedin/liger-kernel) written by @me\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::::\n\n## `Do I have to write everything?`\n\n- TLDR: No\n- Many cool projects already using Triton\n- Better Integration with PyTorch and even Hugging Face 🤗\n- Liger Kernel, Unsloth AI, etc.\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::::\n\n\n## `So how can I use this in my LLM? 🚀`\n\n- Liger Kernel is a great example, providing examples of how to integrate with Hugging Face 🤗 Trainer\n\n```diff\n- from transformers import AutoModelForCausalLM\n+ from liger_kernel.transformers import AutoLigerKernelForCausalLM\n\nmodel_path = \"meta-llama/Meta-Llama-3-8B-Instruct\"\n\n- model = AutoModelForCausalLM.from_pretrained(model_path)\n+ model = AutoLigerKernelForCausalLM.from_pretrained(model_path)\n\n# training/inference logic...\n```\n## `Key Optimization Techniques adapted by Liger Kernel`\n\n- Kernel Fusion\n- Domain-specific optimizations\n- Memory Access Patterns\n- Preemptive memory freeing\n \n\n## `Aaand some more benchmarks 🚀`\n\n- Saving memory is key to run bigger batch size on smaller GPUs\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{fig-align=\"center\"}\n\n:::\n\n::::\n\n## `Last benchmark I promise...`\n\n- But is it faster? 
Yes, it is!\n\n{fig-align=\"center\" height=50% width=50%}\n\n:::: {.columns}\n\n::: {.column width=\"60%\"}\n\n*Attention is all you need, so I thank you for yours!* 🤗\n\n:::\n\n::: {.column width=\"40%\"}\n\n{height=25% width=25% fig-align=\"center\"}\n\n:::\n\n::::\n\n","srcMarkdownNoYaml":"\n\n## `whoami`\n\n- My name is Matej\n- I'm a Master's student at the Brno University of Technology\n- I'm currently working on distributed training at Hugging Face 🤗\n\n## `What is Triton?`\n\n- NVIDIA's open-source programming language for GPU kernels\n- Designed for AI/ML workloads\n- Simplifies GPU programming compared to CUDA\n\n{.center fig-align=\"center\"}\n\n## `Why Optimize with Triton?`\n\n- Simple yet effective\n- Less headache than CUDA\n- GPUs go `brrrrrrr` 🚀\n- Feel cool when your kernel is faster than PyTorch 😎\n\n## `Example Problem: KL Divergence`\n\n- commonly used in LLMs for knowledge distillation\n- for probability distributions $P$ and $Q$, the Kullback-Leibler divergence is defined as:\n\n$$\nD_{KL}(P \\| Q) = \\sum_{i} P_i \\log\\left(\\frac{P_i}{Q_i}\\right)\n$$\n\n\n```python\nimport torch\nfrom torch.nn.functional import kl_div\n\ndef kl_div_torch(p: torch.Tensor, q: torch.Tensor) -> torch.Tensor:\n return kl_div(p, q)\n```\n\n## `How about Triton?`\n\n```python\nimport triton\nimport triton.language as tl\n\n@triton.jit\ndef kl_div_triton(\n p_ptr, q_ptr, output_ptr, n_elements, BLOCK_SIZE: tl.constexpr\n):\n pid = tl.program_id(0)\n block_start = pid * BLOCK_SIZE\n offsets = block_start + tl.arange(0, BLOCK_SIZE)\n mask = offsets < n_elements\n \n p = tl.load(p_ptr + offsets, mask=mask)\n q = tl.load(q_ptr + offsets, mask=mask)\n \n output = p * (tl.log(p) - tl.log(q))\n tl.store(output_ptr + offsets, output, mask=mask)\n```\n\n## `How to integrate with PyTorch?`\n\n- How to use our custom kernel with PyTorch autograd?\n\n```python\nimport torch\n\nclass VectorAdd(torch.autograd.Function):\n @staticmethod\n def forward(ctx, p, q):\n 
ctx.save_for_backward(q)\n output = torch.empty_like(p)\n grid = (len(p) + 512 - 1) // 512\n kl_div_triton[grid](p, q, output, len(p), BLOCK_SIZE=512)\n return output\n\n @staticmethod\n def backward(ctx, grad_output):\n q = ctx.saved_tensors[0]\n # Calculate gradients (another triton kernel)\n return ...\n```\n\n## `Some benchmarks`\n\n- A KL Divergence kernel that is currently used in [Liger Kernel](https://github.com/linkedin/liger-kernel) written by @me\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::::\n\n## `Do I have to write everything?`\n\n- TLDR: No\n- Many cool projects already using Triton\n- Better Integration with PyTorch and even Hugging Face 🤗\n- Liger Kernel, Unsloth AI, etc.\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{.center fig-align=\"center\"}\n\n:::\n\n::::\n\n\n## `So how can I use this in my LLM? 🚀`\n\n- Liger Kernel is a great example, providing examples of how to integrate with Hugging Face 🤗 Trainer\n\n```diff\n- from transformers import AutoModelForCausalLM\n+ from liger_kernel.transformers import AutoLigerKernelForCausalLM\n\nmodel_path = \"meta-llama/Meta-Llama-3-8B-Instruct\"\n\n- model = AutoModelForCausalLM.from_pretrained(model_path)\n+ model = AutoLigerKernelForCausalLM.from_pretrained(model_path)\n\n# training/inference logic...\n```\n## `Key Optimization Techniques adapted by Liger Kernel`\n\n- Kernel Fusion\n- Domain-specific optimizations\n- Memory Access Patterns\n- Preemptive memory freeing\n \n\n## `Aaand some more benchmarks 🚀`\n\n- Saving memory is key to run bigger batch size on smaller GPUs\n\n:::: {.columns}\n\n::: {.column width=\"50%\"}\n\n{fig-align=\"center\"}\n\n:::\n\n::: {.column width=\"50%\"}\n\n{fig-align=\"center\"}\n\n:::\n\n::::\n\n## `Last benchmark I promise...`\n\n- But is it faster? 
Yes, it is!\n\n{fig-align=\"center\" height=50% width=50%}\n\n:::: {.columns}\n\n::: {.column width=\"60%\"}\n\n*Attention is all you need, so I thank you for yours!* 🤗\n\n:::\n\n::: {.column width=\"40%\"}\n\n{height=25% width=25% fig-align=\"center\"}\n\n:::\n\n::::\n\n"},"formats":{"revealjs":{"identifier":{"display-name":"RevealJS","target-format":"revealjs","base-format":"revealjs"},"execute":{"fig-width":10,"fig-height":5,"fig-format":"retina","fig-dpi":96,"df-print":"default","error":false,"eval":true,"cache":null,"freeze":false,"echo":false,"output":true,"warning":false,"include":true,"keep-md":false,"keep-ipynb":false,"ipynb":null,"enabled":null,"daemon":null,"daemon-restart":false,"debug":false,"ipynb-filters":[],"ipynb-shell-interactivity":null,"plotly-connected":true,"engine":"markdown"},"render":{"keep-tex":false,"keep-typ":false,"keep-source":false,"keep-hidden":false,"prefer-html":false,"output-divs":true,"output-ext":"html","fig-align":"default","fig-pos":null,"fig-env":null,"code-fold":"none","code-overflow":"scroll","code-link":false,"code-line-numbers":true,"code-tools":false,"tbl-colwidths":"auto","merge-includes":true,"inline-includes":false,"preserve-yaml":false,"latex-auto-mk":true,"latex-auto-install":true,"latex-clean":true,"latex-min-runs":1,"latex-max-runs":10,"latex-makeindex":"makeindex","latex-makeindex-opts":[],"latex-tlmgr-opts":[],"latex-input-paths":[],"latex-output-dir":null,"link-external-icon":false,"link-external-newwindow":false,"self-contained-math":false,"format-resources":[]},"pandoc":{"standalone":true,"wrap":"none","default-image-extension":"png","html-math-method":{"method":"mathjax","url":"https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.9/MathJax.js?config=TeX-AMS_HTML-full"},"slide-level":2,"to":"revealjs","output-file":"index.html"},"language":{"toc-title-document":"Table of contents","toc-title-website":"On this page","related-formats-title":"Other 
Formats","related-notebooks-title":"Notebooks","source-notebooks-prefix":"Source","other-links-title":"Other Links","code-links-title":"Code Links","launch-dev-container-title":"Launch Dev Container","launch-binder-title":"Launch Binder","article-notebook-label":"Article Notebook","notebook-preview-download":"Download Notebook","notebook-preview-download-src":"Download Source","notebook-preview-back":"Back to Article","manuscript-meca-bundle":"MECA Bundle","section-title-abstract":"Abstract","section-title-appendices":"Appendices","section-title-footnotes":"Footnotes","section-title-references":"References","section-title-reuse":"Reuse","section-title-copyright":"Copyright","section-title-citation":"Citation","appendix-attribution-cite-as":"For attribution, please cite this work as:","appendix-attribution-bibtex":"BibTeX citation:","appendix-view-license":"View License","title-block-author-single":"Author","title-block-author-plural":"Authors","title-block-affiliation-single":"Affiliation","title-block-affiliation-plural":"Affiliations","title-block-published":"Published","title-block-modified":"Modified","title-block-keywords":"Keywords","callout-tip-title":"Tip","callout-note-title":"Note","callout-warning-title":"Warning","callout-important-title":"Important","callout-caution-title":"Caution","code-summary":"Code","code-tools-menu-caption":"Code","code-tools-show-all-code":"Show All Code","code-tools-hide-all-code":"Hide All Code","code-tools-view-source":"View Source","code-tools-source-code":"Source Code","tools-share":"Share","tools-download":"Download","code-line":"Line","code-lines":"Lines","copy-button-tooltip":"Copy to Clipboard","copy-button-tooltip-success":"Copied!","repo-action-links-edit":"Edit this page","repo-action-links-source":"View source","repo-action-links-issue":"Report an issue","back-to-top":"Back to top","search-no-results-text":"No results","search-matching-documents-text":"matching documents","search-copy-link-title":"Copy link to 
search","search-hide-matches-text":"Hide additional matches","search-more-match-text":"more match in this document","search-more-matches-text":"more matches in this document","search-clear-button-title":"Clear","search-text-placeholder":"","search-detached-cancel-button-title":"Cancel","search-submit-button-title":"Submit","search-label":"Search","toggle-section":"Toggle section","toggle-sidebar":"Toggle sidebar navigation","toggle-dark-mode":"Toggle dark mode","toggle-reader-mode":"Toggle reader mode","toggle-navigation":"Toggle navigation","crossref-fig-title":"Figure","crossref-tbl-title":"Table","crossref-lst-title":"Listing","crossref-thm-title":"Theorem","crossref-lem-title":"Lemma","crossref-cor-title":"Corollary","crossref-prp-title":"Proposition","crossref-cnj-title":"Conjecture","crossref-def-title":"Definition","crossref-exm-title":"Example","crossref-exr-title":"Exercise","crossref-ch-prefix":"Chapter","crossref-apx-prefix":"Appendix","crossref-sec-prefix":"Section","crossref-eq-prefix":"Equation","crossref-lof-title":"List of Figures","crossref-lot-title":"List of Tables","crossref-lol-title":"List of Listings","environment-proof-title":"Proof","environment-remark-title":"Remark","environment-solution-title":"Solution","listing-page-order-by":"Order By","listing-page-order-by-default":"Default","listing-page-order-by-date-asc":"Oldest","listing-page-order-by-date-desc":"Newest","listing-page-order-by-number-desc":"High to Low","listing-page-order-by-number-asc":"Low to High","listing-page-field-date":"Date","listing-page-field-title":"Title","listing-page-field-description":"Description","listing-page-field-author":"Author","listing-page-field-filename":"File Name","listing-page-field-filemodified":"Modified","listing-page-field-subtitle":"Subtitle","listing-page-field-readingtime":"Reading Time","listing-page-field-wordcount":"Word Count","listing-page-field-categories":"Categories","listing-page-minutes-compact":"{0} 
min","listing-page-category-all":"All","listing-page-no-matches":"No matching items","listing-page-words":"{0} words","listing-page-filter":"Filter","draft":"Draft"},"metadata":{"lang":"en","fig-responsive":false,"quarto-version":"1.6.40","auto-stretch":true,"title":"Optimizing LLM Performance Using Triton","author":"Matej Sirovatka","date":"2025-02-22","theme":"dark","transition":"slide","slideNumber":true}}},"projectFormats":["html"]}
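The wrapper on the `How to integrate with PyTorch?` slide launches `(len(p) + 512 - 1) // 512` programs, and each program handles `BLOCK_SIZE` consecutive offsets guarded by `mask = offsets < n_elements`. The pure-Python simulation below (no GPU or Triton required) reproduces only that index arithmetic, assuming a 1-D contiguous input; `cdiv` and `simulate_blocked_launch` are hypothetical helper names. One deliberate difference from the slide: Triton's own tutorials pass the launch grid as a tuple, e.g. `(triton.cdiv(n_elements, BLOCK_SIZE),)`, rather than a bare integer, so the sketch builds a tuple too.

```python
from typing import List


def cdiv(n: int, d: int) -> int:
    """Ceiling division, the same arithmetic as (n + d - 1) // d on the slide."""
    return (n + d - 1) // d


def simulate_blocked_launch(n_elements: int, block_size: int) -> List[List[int]]:
    """Return, for each program id, the element offsets it would actually touch.

    Mirrors the kernel body: offsets = pid * BLOCK_SIZE + arange(BLOCK_SIZE),
    masked by offsets < n_elements so the last block never reads past the end.
    """
    grid = (cdiv(n_elements, block_size),)  # 1-D launch grid expressed as a tuple
    touched = []
    for pid in range(grid[0]):
        block_start = pid * block_size
        offsets = [block_start + i for i in range(block_size)]
        mask = [off < n_elements for off in offsets]
        touched.append([off for off, keep in zip(offsets, mask) if keep])
    return touched


blocks = simulate_blocked_launch(n_elements=1100, block_size=512)
print(len(blocks), [len(b) for b in blocks])  # → 3 [512, 512, 76]
```

With 1,100 elements and `BLOCK_SIZE=512`, three programs run and the mask trims the last one to 76 valid offsets, which is why every `tl.load` and `tl.store` in the kernel takes `mask=mask`.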

src/.quarto/idx/notebooks/advanced_rag.qmd.json

DELETED

@@ -1 +0,0 @@

-