Dacho688

committed on

Commit

•

e89ef0e

1

Parent(s):

49099ea

App Updates

- improve base prompt

- include an example

- __pycache__/streaming.cpython-312.pyc +0 -0

- __pycache__/test_streaming.cpython-312.pyc +0 -0

- __pycache__/test_streaming.cpython-39.pyc +0 -0

- app.py +38 -20

- figures/classification_report.png +0 -0

- figures/confusion_matrix.png +0 -0

- figures/fare_sex_boxplot.png +0 -0

- requirements.txt +1 -1

- test_app.py +0 -134

- test_streaming.py +0 -64

__pycache__/streaming.cpython-312.pyc

ADDED

|

Binary file (3.43 kB). View file

|

|

|

__pycache__/test_streaming.cpython-312.pyc

ADDED

|

Binary file (3.43 kB). View file

|

|

|

__pycache__/test_streaming.cpython-39.pyc

ADDED

|

Binary file (2.1 kB). View file

|

|

|

app.py

CHANGED

|

@@ -16,7 +16,7 @@ llm_engine = HfEngine("meta-llama/Meta-Llama-3.1-70B-Instruct")

|

|

| 16 |

agent = ReactCodeAgent(

|

| 17 |

tools=[],

|

| 18 |

llm_engine=llm_engine,

|

| 19 |

-

additional_authorized_imports=["numpy", "pandas", "matplotlib", "seaborn","scipy"],

|

| 20 |

max_iterations=10,

|

| 21 |

)

|

| 22 |

|

|

@@ -24,13 +24,19 @@ base_prompt = """You are an expert full stack data analyst.

|

|

| 24 |

You are given a data file and the data structure below.

|

| 25 |

The data file is passed to you as the variable data_file, it is a pandas dataframe, you can use it directly.

|

| 26 |

DO NOT try to load data_file, it is already a dataframe pre-loaded in your python interpreter!

|

| 27 |

-

When plotting using matplotlib/seaborn save the figures to the (already existing) folder'./figures/': take care to clear

|

| 28 |

-

|

| 29 |

-

When

|

| 30 |

-

For example: from matplotlib import pyplot as plt

|

| 31 |

-

Not: import matplotlib.pyplot as plt

|

| 32 |

|

| 33 |

-

|


| 34 |

|

| 35 |

Structure of the data:

|

| 36 |

{structure_notes}

|

|

@@ -39,7 +45,7 @@ Question/Problem:

|

|

| 39 |

"""

|

| 40 |

|

| 41 |

example_notes="""This data is about the Titanic wreck in 1912.

|

| 42 |

-

The target

|

| 43 |

pclass: A proxy for socio-economic status (SES)

|

| 44 |

1st = Upper

|

| 45 |

2nd = Middle

|

|

@@ -51,7 +57,9 @@ Spouse = husband, wife (mistresses and fiancés were ignored)

|

|

| 51 |

parch: The dataset defines family relations in this way...

|

| 52 |

Parent = mother, father

|

| 53 |

Child = daughter, son, stepdaughter, stepson

|

| 54 |

-

Some children travelled only with a nanny, therefore parch=0 for them.

|

|

|

|

|

|

|

| 55 |

|

| 56 |

def get_images_in_directory(directory):

|

| 57 |

image_extensions = {'.png', '.jpg', '.jpeg', '.gif', '.bmp', '.tiff'}

|

|

@@ -106,13 +114,22 @@ with gr.Blocks(

|

|

| 106 |

secondary_hue=gr.themes.colors.yellow,

|

| 107 |

)

|

| 108 |

) as demo:

|

| 109 |

-

gr.Markdown("""#

|

| 110 |

-

|

| 111 |

-

|

| 112 |

-

|

| 113 |

-

|


| 114 |

text_input = gr.Textbox(

|

| 115 |

-

label="Ask a question

|

| 116 |

)

|

| 117 |

submit = gr.Button("Run", variant="primary")

|

| 118 |

chatbot = gr.Chatbot(

|

|

@@ -123,11 +140,12 @@ Drop a `.csv` file below and ask a question about your data.

|

|

| 123 |

"https://em-content.zobj.net/source/twitter/53/robot-face_1f916.png",

|

| 124 |

),

|

| 125 |

)

|

| 126 |

-

|

| 127 |

-

|

| 128 |

-

|

| 129 |

-

|

| 130 |

-

|

|

|

|

| 131 |

|

| 132 |

submit.click(interact_with_agent, [file_input, text_input], [chatbot])

|

| 133 |

|

|

|

|

| 16 |

agent = ReactCodeAgent(

|

| 17 |

tools=[],

|

| 18 |

llm_engine=llm_engine,

|

| 19 |

+

additional_authorized_imports=["numpy", "pandas", "matplotlib", "seaborn","scipy","sklearn"],

|

| 20 |

max_iterations=10,

|

| 21 |

)

|

| 22 |

|

|

|

|

| 24 |

You are given a data file and the data structure below.

|

| 25 |

The data file is passed to you as the variable data_file, it is a pandas dataframe, you can use it directly.

|

| 26 |

DO NOT try to load data_file, it is already a dataframe pre-loaded in your python interpreter!

|

| 27 |

+

When plotting using matplotlib/seaborn save the figures to the (already existing) folder './figures/': take care to clear

|

| 28 |

+

each figure with plt.clf() before doing another plot.

|

| 29 |

+

When plotting make the plots as pretty as possible given your tools. Same with tables, charts, or anything else.

|

|

|

|

|

|

|

| 30 |

|

| 31 |

+

In your final answer: summarize your findings and steps taken.

|

| 32 |

+

After each number, derive real-world insights, for instance: "Correlation between is_december and boredness is 1.3453, which suggests people are more bored in winter".

|

| 33 |

+

Your final answer should be a long string with at least 4 numbered and detailed parts:

|

| 34 |

+

1. Summary of Question/Problem

|

| 35 |

+

2. Summary of Actions

|

| 36 |

+

3. Summary of Findings

|

| 37 |

+

4. Potential Next Steps

|

| 38 |

+

|

| 39 |

+

Use the data file to answer the question or perform a task below.

|

| 40 |

|

| 41 |

Structure of the data:

|

| 42 |

{structure_notes}

|

|

|

|

| 45 |

"""

|

| 46 |

|

| 47 |

example_notes="""This data is about the Titanic wreck in 1912.

|

| 48 |

+

The target variable is the survival of passengers, noted by 'Survived'

|

| 49 |

pclass: A proxy for socio-economic status (SES)

|

| 50 |

1st = Upper

|

| 51 |

2nd = Middle

|

|

|

|

| 57 |

parch: The dataset defines family relations in this way...

|

| 58 |

Parent = mother, father

|

| 59 |

Child = daughter, son, stepdaughter, stepson

|

| 60 |

+

Some children travelled only with a nanny, therefore parch=0 for them.

|

| 61 |

+

|

| 62 |

+

Run a logistic regression."""

|

| 63 |

|

| 64 |

def get_images_in_directory(directory):

|

| 65 |

image_extensions = {'.png', '.jpg', '.jpeg', '.gif', '.bmp', '.tiff'}

|

|

|

|

| 114 |

secondary_hue=gr.themes.colors.yellow,

|

| 115 |

)

|

| 116 |

) as demo:

|

| 117 |

+

gr.Markdown("""# Data Analyst (ReAct Code Agent) 📊🤔

|

| 118 |

+

|

| 119 |

+

**Who am I?**

|

| 120 |

+

I'm your personal Data Analyst built on top of Llama-3.1-70B and the ReAct agent framework.

|

| 121 |

+

I break down your task step-by-step until I reach an answer/solution.

|

| 122 |

+

Along the way I share my thoughts, actions (Python code blobs), and observations.

|

| 123 |

+

I come packed with pandas, numpy, sklearn, matplotlib, seaborn, and more!

|

| 124 |

+

|

| 125 |

+

**Instructions**

|

| 126 |

+

1. Drop or upload a `.csv` file below.

|

| 127 |

+

2. Ask a question or give it a task.

|

| 128 |

+

3. **Watch Llama-3.1-70B think, act, and observe until the final answer.**

|

| 129 |

+

\n**For an example, click on the example at the bottom of the page to auto-populate.**""")

|

| 130 |

+

file_input = gr.File(label="Drop/upload a .csv file to analyze")

|

| 131 |

text_input = gr.Textbox(

|

| 132 |

+

label="Ask a question or give it a task."

|

| 133 |

)

|

| 134 |

submit = gr.Button("Run", variant="primary")

|

| 135 |

chatbot = gr.Chatbot(

|

|

|

|

| 140 |

"https://em-content.zobj.net/source/twitter/53/robot-face_1f916.png",

|

| 141 |

),

|

| 142 |

)

|

| 143 |

+

gr.Examples(

|

| 144 |

+

examples=[["./example/titanic.csv", example_notes]],

|

| 145 |

+

inputs=[file_input, text_input],

|

| 146 |

+

cache_examples=False,

|

| 147 |

+

label='Click anywhere below to try this example.'

|

| 148 |

+

)

|

| 149 |

|

| 150 |

submit.click(interact_with_agent, [file_input, text_input], [chatbot])

|

| 151 |

|
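The `base_prompt` in this diff is a template with a single `{structure_notes}` placeholder, and the user's question is appended after the trailing `Question/Problem:` line. A minimal sketch of that assembly step follows. `BASE_PROMPT` is a shortened, hypothetical stand-in for the real prompt, and `build_prompt` is an illustrative helper name that does not appear in the commit.

```python
# Shortened, hypothetical stand-in for the base_prompt shown in the diff.
BASE_PROMPT = """You are an expert full stack data analyst.
Use the data file to answer the question or perform a task below.

Structure of the data:
{structure_notes}

Question/Problem:
"""


def build_prompt(structure_notes: str, additional_notes: str = "") -> str:
    """Fill the template, then append the user's question if one was given."""
    # str.format only parses the template, so braces inside
    # structure_notes itself are inserted verbatim.
    prompt = BASE_PROMPT.format(structure_notes=structure_notes)
    if additional_notes:
        prompt += additional_notes
    return prompt


if __name__ == "__main__":
    notes = "- Columns with dtypes:\nage     float64\nfare    float64"
    print(build_prompt(notes, "Run a logistic regression."))
```

Because the template goes through `str.format`, any literal braces added to the prompt text later would need to be doubled (`{{` and `}}`).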

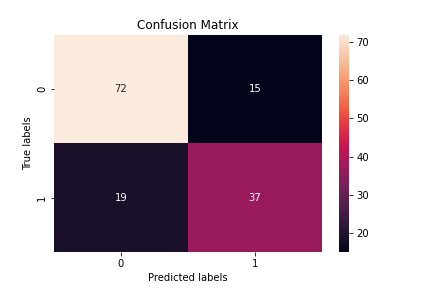

figures/classification_report.png

ADDED

|

figures/confusion_matrix.png

ADDED

|

figures/fare_sex_boxplot.png

DELETED

|

Binary file (9.84 kB)

|

|

|

requirements.txt

CHANGED

|

@@ -1,5 +1,5 @@

|

|

| 1 |

git+https://github.com/huggingface/transformers.git#egg=transformers[agents]

|

| 2 |

matplotlib

|

| 3 |

seaborn

|

| 4 |

-

|

| 5 |

scipy

|

|

|

|

| 1 |

git+https://github.com/huggingface/transformers.git#egg=transformers[agents]

|

| 2 |

matplotlib

|

| 3 |

seaborn

|

| 4 |

+

scikit-learn

|

| 5 |

scipy

|
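One point worth keeping in mind with this change: the names passed to `additional_authorized_imports` in `app.py` are import names, while `requirements.txt` lists distribution names, and for scikit-learn the two differ (it is imported as `sklearn` but published as `scikit-learn`). The sketch below illustrates the distinction; the lookup table and the `requirement_for` helper are hypothetical and not part of the commit.

```python
# Hypothetical lookup table: import name -> distribution name for requirements.txt.
# Only entries where the two differ need to be listed.
IMPORT_TO_DISTRIBUTION = {
    "sklearn": "scikit-learn",
}


def requirement_for(import_name: str) -> str:
    """Return the distribution name to install for a given import name."""
    return IMPORT_TO_DISTRIBUTION.get(import_name, import_name)


# The import names authorized for the agent in app.py after this commit.
authorized_imports = ["numpy", "pandas", "matplotlib", "seaborn", "scipy", "sklearn"]
requirements = sorted({requirement_for(name) for name in authorized_imports})

if __name__ == "__main__":
    print("\n".join(requirements))
```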

test_app.py

DELETED

@@ -1,134 +0,0 @@
-import os
-import shutil
-import gradio as gr
-from transformers import ReactCodeAgent, HfEngine, Tool
-import pandas as pd
-
-from gradio import Chatbot
-from test_streaming import stream_to_gradio
-from huggingface_hub import login
-from gradio.data_classes import FileData
-
-#login(os.getenv("HUGGINGFACEHUB_API_TOKEN"))
-
-llm_engine = HfEngine("meta-llama/Meta-Llama-3.1-70B-Instruct")
-
-agent = ReactCodeAgent(
-    tools=[],
-    llm_engine=llm_engine,
-    additional_authorized_imports=["numpy", "pandas", "matplotlib", "seaborn","scipy"],
-    max_iterations=10,
-)
-base_prompt = """You are an expert full stack data analyst.
-You are given a data file and the data structure below.
-The data file is passed to you as the variable data_file, it is a pandas dataframe, you can use it directly.
-DO NOT try to load data_file, it is already a dataframe pre-loaded in your python interpreter!
-When plotting using matplotlib/seaborn save the figures to the (already existing) folder'./figures/': take care to clear each figure with plt.clf() before doing another plot.
-When filtering pandas dataframe use the iloc.
-When importing packages use this format: from package import module
-For example: from matplotlib import pyplot as plt
-Not: import matplotlib.pyplot as plt
-
-Use the data file to answer the question or solve a problem given below.
-
-Structure of the data:
-{structure_notes}
-
-Question/Problem:
-"""
-
-example_notes="""This data is about the Titanic wreck in 1912.
-The target figure is the survival of passengers, notes by 'Survived'
-pclass: A proxy for socio-economic status (SES)
-1st = Upper
-2nd = Middle
-3rd = Lower
-age: Age is fractional if less than 1. If the age is estimated, is it in the form of xx.5
-sibsp: The dataset defines family relations in this way...
-Sibling = brother, sister, stepbrother, stepsister
-Spouse = husband, wife (mistresses and fiancés were ignored)
-parch: The dataset defines family relations in this way...
-Parent = mother, father
-Child = daughter, son, stepdaughter, stepson
-Some children travelled only with a nanny, therefore parch=0 for them."""
-
-def get_images_in_directory(directory):
-    image_extensions = {'.png', '.jpg', '.jpeg', '.gif', '.bmp', '.tiff'}
-
-    image_files = []
-    for root, dirs, files in os.walk(directory):
-        for file in files:
-            if os.path.splitext(file)[1].lower() in image_extensions:
-                image_files.append(os.path.join(root, file))
-    return image_files
-
-def interact_with_agent(file_input, additional_notes):
-    shutil.rmtree("./figures")
-    os.makedirs("./figures")
-
-    data_file = pd.read_csv(file_input)
-    data_structure_notes = f"""- Description (output of .describe()):
-{data_file.describe()}
-- Columns with dtypes:
-{data_file.dtypes}"""
-
-    prompt = base_prompt.format(structure_notes=data_structure_notes)
-
-    if additional_notes and len(additional_notes) > 0:
-        prompt += additional_notes
-
-    messages = [gr.ChatMessage(role="user", content=additional_notes)]
-    yield messages + [
-        gr.ChatMessage(role="assistant", content="⏳ _Starting task..._")
-    ]
-
-    plot_image_paths = {}
-    for msg in stream_to_gradio(agent, prompt, data_file=data_file):
-        messages.append(msg)
-        for image_path in get_images_in_directory("./figures"):
-            if image_path not in plot_image_paths:
-                image_message = gr.ChatMessage(
-                    role="assistant",
-                    content=FileData(path=image_path, mime_type="image/png"),
-                )
-                plot_image_paths[image_path] = True
-                messages.append(image_message)
-        yield messages + [
-            gr.ChatMessage(role="assistant", content="⏳ _Still processing..._")
-        ]
-    yield messages
-
-
-with gr.Blocks(
-    theme=gr.themes.Soft(
-        primary_hue=gr.themes.colors.blue,
-        secondary_hue=gr.themes.colors.yellow,
-    )
-) as demo:
-    gr.Markdown("""# Llama-3.1 Data analyst 📊🤔
-
-Drop a `.csv` file below and ask a question about your data.
-**Llama-3.1-70B will analyze and answer.**""")
-    file_input = gr.File(label="Your file to analyze")
-    text_input = gr.Textbox(
-        label="Ask a question about your data?"
-    )
-    submit = gr.Button("Run", variant="primary")
-    chatbot = gr.Chatbot(
-        label="Data Analyst Agent",
-        type="messages",
-        avatar_images=(
-            None,
-            "https://em-content.zobj.net/source/twitter/53/robot-face_1f916.png",
-        ),
-    )
-    # gr.Examples(
-    # examples=[["./example/titanic.csv", example_notes]],
-    # inputs=[file_input, text_input],
-    # cache_examples=False
-    # )
-
-    submit.click(interact_with_agent, [file_input, text_input], [chatbot])
-
-if __name__ == "__main__":
-    demo.launch(server_port=7860)
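The deleted `interact_with_agent` rescans `./figures` after every streamed agent message and only posts images it has not shown before. The sketch below isolates that "scan, then report only new files" pattern using the standard library alone. It tracks seen paths in a `set` rather than the `plot_image_paths` dict used in the file, and `new_images` and `demo` are hypothetical helper names introduced for illustration.

```python
import os
import tempfile

IMAGE_EXTENSIONS = {".png", ".jpg", ".jpeg", ".gif", ".bmp", ".tiff"}


def get_images_in_directory(directory):
    """Recursively collect paths whose extension looks like an image."""
    image_files = []
    for root, _dirs, files in os.walk(directory):
        for file in files:
            if os.path.splitext(file)[1].lower() in IMAGE_EXTENSIONS:
                image_files.append(os.path.join(root, file))
    return image_files


def new_images(directory, seen):
    """Return images not reported before and record them in `seen`."""
    fresh = [p for p in sorted(get_images_in_directory(directory)) if p not in seen]
    seen.update(fresh)
    return fresh


def demo():
    """Simulate two agent steps that each save one figure."""
    with tempfile.TemporaryDirectory() as figures:
        seen = set()
        open(os.path.join(figures, "a.png"), "wb").close()
        open(os.path.join(figures, "notes.txt"), "w").close()  # ignored: not an image
        first = new_images(figures, seen)
        open(os.path.join(figures, "b.PNG"), "wb").close()  # extension match is case-insensitive
        second = new_images(figures, seen)
        return ([os.path.basename(p) for p in first],
                [os.path.basename(p) for p in second])


if __name__ == "__main__":
    print(demo())
```

Keeping the "seen" state outside the scan function means each yielded chat update can append only the figures produced since the previous step.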


test_streaming.py

DELETED

@@ -1,64 +0,0 @@
-from transformers.agents.agent_types import AgentAudio, AgentImage, AgentText, AgentType
-from transformers.agents import ReactAgent
-
-
-def pull_message(step_log: dict):
-    try:
-        from gradio import ChatMessage
-    except ImportError:
-        raise ImportError("Gradio should be installed in order to launch a gradio demo.")
-
-    if step_log.get("rationale"):
-        yield ChatMessage(role="assistant", content=step_log["rationale"])
-    if step_log.get("tool_call"):
-        used_code = step_log["tool_call"]["tool_name"] == "code interpreter"
-        content = step_log["tool_call"]["tool_arguments"]
-        if used_code:
-            content = f"```py\n{content}\n```"
-        yield ChatMessage(
-            role="assistant",
-            metadata={"title": f"🛠️ Used tool {step_log['tool_call']['tool_name']}"},
-            content=content,
-        )
-    if step_log.get("observation"):
-        yield ChatMessage(role="assistant", content=f"```\n{step_log['observation']}\n```")
-    if step_log.get("error"):
-        yield ChatMessage(
-            role="assistant",
-            content=str(step_log["error"]),
-            metadata={"title": "💥 Error"},
-        )
-
-
-def stream_to_gradio(agent: ReactAgent, task: str, **kwargs):
-    """Runs an agent with the given task and streams the messages from the agent as gradio ChatMessages."""
-
-    try:
-        from gradio import ChatMessage
-    except ImportError:
-        raise ImportError("Gradio should be installed in order to launch a gradio demo.")
-
-    class Output:
-        output: AgentType | str = None
-
-    for step_log in agent.run(task, stream=True, **kwargs):
-        if isinstance(step_log, dict):
-            for message in pull_message(step_log):
-                print("message", message)
-                yield message
-
-    Output.output = step_log
-    if isinstance(Output.output, AgentText):
-        yield ChatMessage(role="assistant", content=f"**Final answer:**\n```\n{Output.output.to_string()}\n```")
-    elif isinstance(Output.output, AgentImage):
-        yield ChatMessage(
-            role="assistant",
-            content={"path": Output.output.to_string(), "mime_type": "image/png"},
-        )
-    elif isinstance(Output.output, AgentAudio):
-        yield ChatMessage(
-            role="assistant",
-            content={"path": Output.output.to_string(), "mime_type": "audio/wav"},
-        )
-    else:
-        yield ChatMessage(role="assistant", content=Output.output)
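The deleted `pull_message` turns one agent step log into up to four chat messages: rationale, tool call, observation, and error. The sketch below shows the same mapping with plain dictionaries in place of `gradio.ChatMessage`, so it runs without Gradio installed. The dictionary message shape and the name `pull_messages` are assumptions made for illustration; the step-log keys are the ones used in the deleted file.

```python
FENCE = "`" * 3  # Markdown code fence, built here to avoid a literal fence in this example


def pull_messages(step_log: dict):
    """Yield plain-dict chat messages for one agent step log."""
    if step_log.get("rationale"):
        yield {"role": "assistant", "content": step_log["rationale"]}
    if step_log.get("tool_call"):
        call = step_log["tool_call"]
        content = call["tool_arguments"]
        # Code sent to the interpreter is wrapped in a Python code fence.
        if call["tool_name"] == "code interpreter":
            content = f"{FENCE}py\n{content}\n{FENCE}"
        yield {
            "role": "assistant",
            "title": f"Used tool {call['tool_name']}",
            "content": content,
        }
    if step_log.get("observation"):
        yield {
            "role": "assistant",
            "content": f"{FENCE}\n{step_log['observation']}\n{FENCE}",
        }
    if step_log.get("error"):
        yield {"role": "assistant", "title": "Error", "content": str(step_log["error"])}


if __name__ == "__main__":
    step = {
        "rationale": "I will count the rows first.",
        "tool_call": {"tool_name": "code interpreter", "tool_arguments": "print(len(data_file))"},
        "observation": "891",
    }
    for message in pull_messages(step):
        print(message)
```

Since every branch is guarded by `step_log.get(...)`, a step log missing any of these keys simply produces fewer messages.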
