To use Tsinghua ChatGLM2 / Fudan MOSS / RWKV as a backend, click here to expand

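-[Optional step] To use Tsinghua ChatGLM / Fudan MOSS as a backend, you need to install extra dependencies (prerequisites: familiar with Python, have used PyTorch, and a sufficiently powerful machine):

+[Optional step] To use Tsinghua ChatGLM2 / Fudan MOSS as a backend, you need to install extra dependencies (prerequisites: familiar with Python, have used PyTorch, and a sufficiently powerful machine):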

```sh

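-# [Optional step I] Support Tsinghua ChatGLM. Note on ChatGLM: if you get the error "Call ChatGLM fail 不能正常加载ChatGLM的参数" (ChatGLM parameters could not be loaded), try the following: 1) the default install above is the CPU build of torch; to use CUDA, uninstall torch and reinstall a torch+cuda build; 2) if your machine is not powerful enough to load the model, lower the model precision in request_llm/bridge_chatglm.py by changing every AutoTokenizer.from_pretrained("THUDM/chatglm-6b", trust_remote_code=True) to AutoTokenizer.from_pretrained("THUDM/chatglm-6b-int4", trust_remote_code=True)

+# [Optional step I] Support Tsinghua ChatGLM2. Note on ChatGLM: if you get the error "Call ChatGLM fail 不能正常加载ChatGLM的参数" (ChatGLM parameters could not be loaded), try the following: 1) the default install above is the CPU build of torch; to use CUDA, uninstall torch and reinstall a torch+cuda build; 2) if your machine is not powerful enough to load the model, lower the model precision in request_llm/bridge_chatglm.py by changing every AutoTokenizer.from_pretrained("THUDM/chatglm-6b", trust_remote_code=True) to AutoTokenizer.from_pretrained("THUDM/chatglm-6b-int4", trust_remote_code=True)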

python -m pip install -r request_llm/requirements_chatglm.txt

# [Optional step II] Support Fudan MOSS

python -m pip install -r request_llm/requirements_moss.txt

-git clone https://github.com/OpenLMLab/MOSS.git request_llm/moss # Note: this line must be run from the project root

+git clone --depth=1 https://github.com/OpenLMLab/MOSS.git request_llm/moss # Note: this line must be run from the project root

+

+# [Optional step III] Support RWKV Runner

+# See the wiki: https://github.com/binary-husky/gpt_academic/wiki/%E9%80%82%E9%85%8DRWKV-Runner

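-# [Optional step III] Make sure AVAIL_LLM_MODELS in config.py contains the models you want. All currently supported models are listed below (the jittorllms series currently supports only the Docker deployment):

+# [Optional step IV] Make sure AVAIL_LLM_MODELS in config.py contains the models you want. All currently supported models are listed below (the jittorllms series currently supports only the Docker deployment):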

AVAIL_LLM_MODELS = ["gpt-3.5-turbo", "api2d-gpt-3.5-turbo", "gpt-4", "api2d-gpt-4", "chatglm", "newbing", "moss"] # + ["jittorllms_rwkv", "jittorllms_pangualpha", "jittorllms_llama"]

```
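As a rough illustration of the last step above, the hypothetical helper below (not part of gpt_academic) checks that the default `LLM_MODEL` appears in `AVAIL_LLM_MODELS` and, following the note that the jittorllms series is Docker-only, flags `jittorllms_*` entries outside Docker. The `running_in_docker` flag is an assumption; the project may detect its environment differently.

```python
def check_model_config(llm_model, avail_llm_models, running_in_docker=False):
    """Return a list of problems found in the model settings (empty list = OK).

    Hypothetical helper: it mirrors the README advice, not gpt_academic's own code.
    """
    problems = []
    # The default model must be one of the models offered in the drop-down.
    if llm_model not in avail_llm_models:
        problems.append(f"LLM_MODEL {llm_model!r} is not listed in AVAIL_LLM_MODELS")
    # Per the README, the jittorllms series currently works only under Docker.
    if not running_in_docker:
        for name in avail_llm_models:
            if name.startswith("jittorllms_"):
                problems.append(f"{name!r} is only supported in the Docker deployment")
    return problems


if __name__ == "__main__":
    avail = ["gpt-3.5-turbo", "api2d-gpt-3.5-turbo", "gpt-4", "chatglm", "moss"]
    for problem in check_model_config("gpt-3.5-turbo", avail) or ["config looks fine"]:
        print(problem)
```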

@@ -152,24 +158,28 @@ AVAIL_LLM_MODELS = ["gpt-3.5-turbo", "api2d-gpt-3.5-turbo", "gpt-4", "api2d-gpt-

python main.py

```

-## Installation - Method 2: Using Docker

+### Installation Method II: Using Docker

1. ChatGPT only (recommended for most users; equivalent to docker-compose option 1)

+[](https://github.com/binary-husky/gpt_academic/actions/workflows/build-without-local-llms.yml)

+[](https://github.com/binary-husky/gpt_academic/actions/workflows/build-with-latex.yml)

+[](https://github.com/binary-husky/gpt_academic/actions/workflows/build-with-audio-assistant.yml)

``` sh

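-git clone https://github.com/binary-husky/gpt_academic.git # download the project

+git clone --depth=1 https://github.com/binary-husky/gpt_academic.git # download the project

cd gpt_academic # enter the directory

nano config.py # edit config.py with any text editor to set "Proxy", "API_KEY", "WEB_PORT" (e.g. 50923), etc.

docker build -t gpt-academic . # install (build the image)

-# (last step, option 1) On Linux, `--net=host` is the quickest and most convenient

+# (last step, Linux) `--net=host` is the quickest and most convenient

docker run --rm -it --net=host gpt-academic

-# (last step, option 2) On macOS/Windows, you can only use the -p option to expose the container's port (e.g. 50923) on a host port

+# (last step, macOS/Windows) you can only use the -p option to expose the container's port (e.g. 50923) on a host port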

docker run --rm -it -e WEB_PORT=50923 -p 50923:50923 gpt-academic

```

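P.S. For plugin features that depend on LaTeX, see the Wiki. Alternatively, you can get LaTeX support directly with docker-compose (edit docker-compose.yml, keep option 4, and delete the other options).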

-2. ChatGPT + ChatGLM + MOSS (requires familiarity with Docker)

+2. ChatGPT + ChatGLM2 + MOSS (requires familiarity with Docker)

+[](https://github.com/binary-husky/gpt_academic/actions/workflows/build-with-chatglm.yml)

``` sh

# Edit docker-compose.yml: keep option 2 and delete the other options, then adjust option 2's configuration following the comments in the file

@@ -177,13 +187,15 @@ docker-compose up

```

3. ChatGPT + LLAMA + PanGu + RWKV (requires familiarity with Docker)

+[](https://github.com/binary-husky/gpt_academic/actions/workflows/build-with-jittorllms.yml)

+

``` sh

# Edit docker-compose.yml: keep option 3 and delete the other options, then adjust option 3's configuration following the comments in the file

docker-compose up

```

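-## Installation - Method 3: Other Deployment Options

+### Installation Method III: Other Deployment Options

1. One-click run script.

Windows users who are completely unfamiliar with Python environments can download the one-click run script published under [Release](https://github.com/binary-husky/gpt_academic/releases) to install the version without local models.

The script is based on [oobabooga](https://github.com/oobabooga/one-click-installers).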

@@ -200,17 +212,17 @@ docker-compose up

5. Remote cloud server deployment (requires knowledge of and experience with cloud servers).

See [Deployment wiki-1](https://github.com/binary-husky/gpt_academic/wiki/%E4%BA%91%E6%9C%8D%E5%8A%A1%E5%99%A8%E8%BF%9C%E7%A8%8B%E9%83%A8%E7%BD%B2%E6%8C%87%E5%8D%97)

-6. Use WSL2 (Windows Subsystem for Linux).

+6. Use Sealos [one-click deployment](https://github.com/binary-husky/gpt_academic/issues/993).

+

+7. Use WSL2 (Windows Subsystem for Linux).

See [Deployment wiki-2](https://github.com/binary-husky/gpt_academic/wiki/%E4%BD%BF%E7%94%A8WSL2%EF%BC%88Windows-Subsystem-for-Linux-%E5%AD%90%E7%B3%BB%E7%BB%9F%EF%BC%89%E9%83%A8%E7%BD%B2)

-7. How to run under a sub-path URL (e.g. `http://localhost/subpath`).

+8. How to run under a sub-path URL (e.g. `http://localhost/subpath`).

See the [FastAPI run instructions](docs/WithFastapi.md)

----

-# Advanced Usage

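-## Custom Shortcut Buttons / Custom Function Plugins

-1. Custom shortcut buttons (academic shortcuts)

+# Advanced Usage

+### I: Custom shortcut buttons (academic shortcuts)

Open `core_functional.py` in any text editor, add an entry like the one below, and restart the program. (Once a button has been added and is visible, both its prefix and suffix can be hot-edited and take effect without restarting.)

For example: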

```

@@ -226,15 +238,15 @@ docker-compose up

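-2. Custom function plugins

+### II: Custom function plugins

Write powerful function plugins to perform any task you can think of, and some you can't.

Writing and debugging plugins for this project is easy: with basic Python knowledge, you can implement your own plugin by following the templates we provide.

For details, see the [Function Plugin Guide](https://github.com/binary-husky/gpt_academic/wiki/%E5%87%BD%E6%95%B0%E6%8F%92%E4%BB%B6%E6%8C%87%E5%8D%97).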
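To make the plugin shape concrete, here is a minimal sketch modelled on the plugin signature that appears later in this diff (`txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, web_port`): a plugin is a generator that appends to `chatbot` and yields UI refreshes. The `update_ui` below is a hypothetical stand-in for `toolbox.update_ui` so the sketch runs without Gradio, and `word_count_plugin` is an invented example. Real plugins are also wrapped with `@CatchException` and registered in the plugin list, which is not shown here.

```python
def update_ui(chatbot, history, msg="normal"):
    """Hypothetical stand-in for toolbox.update_ui: yield a snapshot instead of refreshing Gradio."""
    yield list(chatbot), list(history), msg


def word_count_plugin(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, web_port):
    """Invented example plugin: count the whitespace-separated words in the input box."""
    chatbot.append((txt, "[Local Message] Counting words..."))
    yield from update_ui(chatbot=chatbot, history=history)  # show progress immediately
    n_words = len(txt.split())
    chatbot[-1] = (txt, f"[Local Message] {n_words} words")
    history.extend([txt, chatbot[-1][1]])  # keep the exchange as context for later turns
    yield from update_ui(chatbot=chatbot, history=history)  # final refresh


if __name__ == "__main__":
    chatbot, history = [], []
    for _ in word_count_plugin("hello plugin world", {}, {}, chatbot, history, "", 50923):
        pass  # in the app, each yielded frame would refresh the web page
    print(chatbot[-1][1])  # prints: [Local Message] 3 words
```

Writing the plugin as a generator is what lets the UI show intermediate progress before the final answer arrives.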

----

+

# Latest Update

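-## New feature updates

+### I: New feature updates

1. Conversation saving. Call `保存当前的对话` (save the current conversation) in the function plugin area to save the current conversation as a readable and restorable HTML file.

In addition, call `载入对话历史存档` (load conversation history archive) in the function plugin area (drop-down menu) to restore a previous session.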

@@ -293,10 +305,17 @@ Tip:不指定文件直接点击 `载入对话历史存档` 可以查看历史h

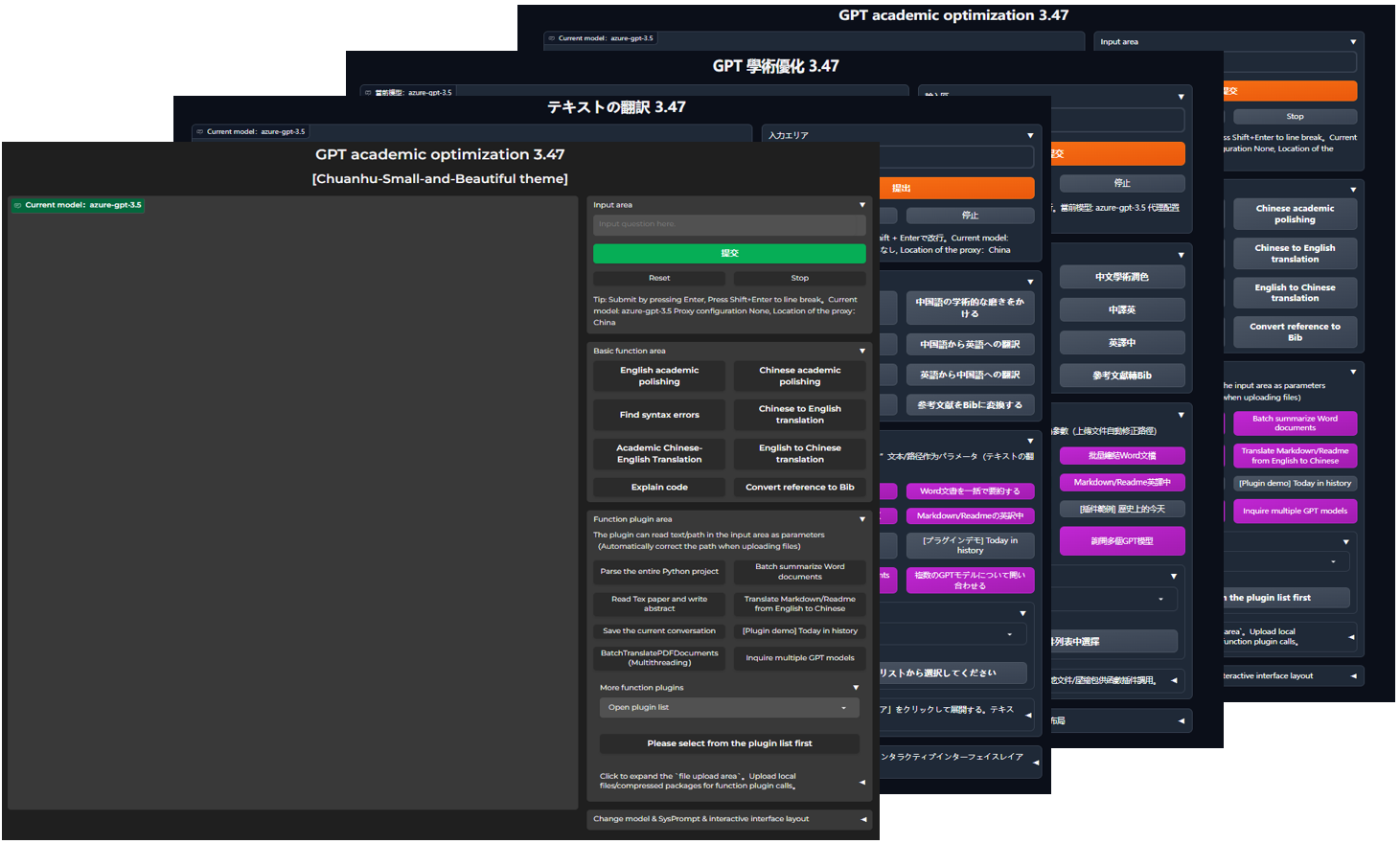

+11. Language and theme switching

+

+

+

+

ChatGPT 学术优化 {get_current_version()}

"

+ title_html = f"GPT 学术优化 {get_current_version()}

{theme_declaration}"

description = """代码开源和更新[地址🚀](https://github.com/binary-husky/chatgpt_academic),感谢热情的[开发者们❤️](https://github.com/binary-husky/chatgpt_academic/graphs/contributors)"""

# Query logs; Python 3.9+ is recommended (the newer the better)

- import logging

+ import logging, uuid

os.makedirs("gpt_log", exist_ok=True)

- try:logging.basicConfig(filename="gpt_log/chat_secrets.log", level=logging.INFO, encoding="utf-8")

- except:logging.basicConfig(filename="gpt_log/chat_secrets.log", level=logging.INFO)

+ try:logging.basicConfig(filename="gpt_log/chat_secrets.log", level=logging.INFO, encoding="utf-8", format="%(asctime)s %(levelname)-8s %(message)s", datefmt="%Y-%m-%d %H:%M:%S")

+ except:logging.basicConfig(filename="gpt_log/chat_secrets.log", level=logging.INFO, format="%(asctime)s %(levelname)-8s %(message)s", datefmt="%Y-%m-%d %H:%M:%S")

+ # Disable logging output from the 'httpx' logger

+ logging.getLogger("httpx").setLevel(logging.WARNING)

print("所有问询记录将自动保存在本地目录./gpt_log/chat_secrets.log, 请注意自我隐私保护哦!")

# Some common function modules
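The logging change above adds a timestamp and level name to each line of `gpt_log/chat_secrets.log` and raises the `httpx` logger to WARNING, which keeps its per-request INFO lines out of the log. The `try/except` is likely there because `logging.basicConfig` only accepts `encoding=` from Python 3.9 onward. A sketch of the same format on a dedicated logger, so it can be tried without touching the root logger; the logger name and the directory passed in are assumptions:

```python
import logging
import os

LOG_FORMAT = "%(asctime)s %(levelname)-8s %(message)s"
DATE_FORMAT = "%Y-%m-%d %H:%M:%S"


def make_chat_logger(log_dir, name="chat_secrets_demo"):
    """Create a file logger that uses the same format/datefmt as the diff."""
    os.makedirs(log_dir, exist_ok=True)
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep records out of the root logger
    for old in list(logger.handlers):  # make repeated calls idempotent
        logger.removeHandler(old)
        old.close()
    handler = logging.FileHandler(os.path.join(log_dir, "chat_secrets.log"), encoding="utf-8")
    handler.setFormatter(logging.Formatter(LOG_FORMAT, datefmt=DATE_FORMAT))
    logger.addHandler(handler)
    # Same idea as the diff: only WARNING and above from httpx get through.
    logging.getLogger("httpx").setLevel(logging.WARNING)
    return logger
```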

@@ -39,7 +42,6 @@ def main():

gr.Chatbot.postprocess = format_io

# Make some adjustments to appearance and colors

- from theme import adjust_theme, advanced_css

set_theme = adjust_theme()

# Proxy and auto-update

@@ -47,24 +49,24 @@ def main():

proxy_info = check_proxy(proxies)

gr_L1 = lambda: gr.Row().style()

- gr_L2 = lambda scale: gr.Column(scale=scale)

+ gr_L2 = lambda scale, elem_id: gr.Column(scale=scale, elem_id=elem_id)

if LAYOUT == "TOP-DOWN":

gr_L1 = lambda: DummyWith()

- gr_L2 = lambda scale: gr.Row()

+ gr_L2 = lambda scale, elem_id: gr.Row()

CHATBOT_HEIGHT /= 2

cancel_handles = []

- with gr.Blocks(title="ChatGPT 学术优化", theme=set_theme, analytics_enabled=False, css=advanced_css) as demo:

+ with gr.Blocks(title="GPT 学术优化", theme=set_theme, analytics_enabled=False, css=advanced_css) as demo:

gr.HTML(title_html)

gr.HTML(''' 请您打开此页面后务必点击上方的“复制空间”(Duplicate Space)按钮!使用时,先在输入框填入API-KEY然后回车。

请您打开此页面后务必点击上方的“复制空间”(Duplicate Space)按钮!使用时,先在输入框填入API-KEY然后回车。

切忌在“复制空间”(Duplicate Space)之前填入API_KEY或进行提问,否则您的API_KEY将极可能被空间所有者攫取!

支持任意数量的OpenAI的密钥和API2D的密钥共存,例如输入"OpenAI密钥1,API2D密钥2",然后提交,即可同时使用两种模型接口。''')

- cookies = gr.State({'api_key': API_KEY, 'llm_model': LLM_MODEL})

+ cookies = gr.State(load_chat_cookies())

with gr_L1():

- with gr_L2(scale=2):

- chatbot = gr.Chatbot(label=f"当前模型:{LLM_MODEL}")

- chatbot.style(height=CHATBOT_HEIGHT)

+ with gr_L2(scale=2, elem_id="gpt-chat"):

+ chatbot = gr.Chatbot(label=f"当前模型:{LLM_MODEL}", elem_id="gpt-chatbot")

+ if LAYOUT == "TOP-DOWN": chatbot.style(height=CHATBOT_HEIGHT)

history = gr.State([])

- with gr_L2(scale=1):

- with gr.Accordion("输入区", open=True) as area_input_primary:

+ with gr_L2(scale=1, elem_id="gpt-panel"):

+ with gr.Accordion("输入区", open=True, elem_id="input-panel") as area_input_primary:

with gr.Row():

txt = gr.Textbox(show_label=False, lines=2, placeholder="输入问题或API密钥,输入多个密钥时,用英文逗号间隔。支持OpenAI密钥和API2D密钥共存。").style(container=False)

with gr.Row():

@@ -73,17 +75,20 @@ def main():

resetBtn = gr.Button("重置", variant="secondary"); resetBtn.style(size="sm")

stopBtn = gr.Button("停止", variant="secondary"); stopBtn.style(size="sm")

clearBtn = gr.Button("清除", variant="secondary", visible=False); clearBtn.style(size="sm")

+ if ENABLE_AUDIO:

+ with gr.Row():

+ audio_mic = gr.Audio(source="microphone", type="numpy", streaming=True, show_label=False).style(container=False)

with gr.Row():

- status = gr.Markdown(f"Tip: 按Enter提交, 按Shift+Enter换行。当前模型: {LLM_MODEL} \n {proxy_info}")

- with gr.Accordion("基础功能区", open=True) as area_basic_fn:

+ status = gr.Markdown(f"Tip: 按Enter提交, 按Shift+Enter换行。当前模型: {LLM_MODEL} \n {proxy_info}", elem_id="state-panel")

+ with gr.Accordion("基础功能区", open=True, elem_id="basic-panel") as area_basic_fn:

with gr.Row():

for k in functional:

if ("Visible" in functional[k]) and (not functional[k]["Visible"]): continue

variant = functional[k]["Color"] if "Color" in functional[k] else "secondary"

functional[k]["Button"] = gr.Button(k, variant=variant)

- with gr.Accordion("函数插件区", open=True) as area_crazy_fn:

+ with gr.Accordion("函数插件区", open=True, elem_id="plugin-panel") as area_crazy_fn:

with gr.Row():

- gr.Markdown("注意:以下“红颜色”标识的函数插件需从输入区读取路径作为参数.")

+ gr.Markdown("插件可读取“输入区”文本/路径作为参数(上传文件自动修正路径)")

with gr.Row():

for k in crazy_fns:

if not crazy_fns[k].get("AsButton", True): continue

@@ -94,25 +99,25 @@ def main():

with gr.Accordion("更多函数插件", open=True):

dropdown_fn_list = [k for k in crazy_fns.keys() if not crazy_fns[k].get("AsButton", True)]

with gr.Row():

- dropdown = gr.Dropdown(dropdown_fn_list, value=r"打开插件列表", label="").style(container=False)

+ dropdown = gr.Dropdown(dropdown_fn_list, value=r"打开插件列表", label="", show_label=False).style(container=False)

with gr.Row():

plugin_advanced_arg = gr.Textbox(show_label=True, label="高级参数输入区", visible=False,

placeholder="这里是特殊函数插件的高级参数输入区").style(container=False)

with gr.Row():

switchy_bt = gr.Button(r"请先从插件列表中选择", variant="secondary")

with gr.Row():

- with gr.Accordion("点击展开“文件上传区”。上传本地文件可供红色函数插件调用。", open=False) as area_file_up:

+ with gr.Accordion("点击展开“文件上传区”。上传本地文件/压缩包供函数插件调用。", open=False) as area_file_up:

file_upload = gr.Files(label="任何文件, 但推荐上传压缩文件(zip, tar)", file_count="multiple")

- with gr.Accordion("更换模型 & SysPrompt & 交互界面布局", open=(LAYOUT == "TOP-DOWN")):

+ with gr.Accordion("更换模型 & SysPrompt & 交互界面布局", open=(LAYOUT == "TOP-DOWN"), elem_id="interact-panel"):

system_prompt = gr.Textbox(show_label=True, placeholder=f"System Prompt", label="System prompt", value=initial_prompt)

top_p = gr.Slider(minimum=-0, maximum=1.0, value=1.0, step=0.01,interactive=True, label="Top-p (nucleus sampling)",)

temperature = gr.Slider(minimum=-0, maximum=2.0, value=1.0, step=0.01, interactive=True, label="Temperature",)

- max_length_sl = gr.Slider(minimum=256, maximum=4096, value=512, step=1, interactive=True, label="Local LLM MaxLength",)

+ max_length_sl = gr.Slider(minimum=256, maximum=8192, value=4096, step=1, interactive=True, label="Local LLM MaxLength",)

checkboxes = gr.CheckboxGroup(["基础功能区", "函数插件区", "底部输入区", "输入清除键", "插件参数区"], value=["基础功能区", "函数插件区"], label="显示/隐藏功能区")

md_dropdown = gr.Dropdown(AVAIL_LLM_MODELS, value=LLM_MODEL, label="更换LLM模型/请求源").style(container=False)

gr.Markdown(description)

- with gr.Accordion("备选输入区", open=True, visible=False) as area_input_secondary:

+ with gr.Accordion("备选输入区", open=True, visible=False, elem_id="input-panel2") as area_input_secondary:

with gr.Row():

txt2 = gr.Textbox(show_label=False, placeholder="Input question here.", label="输入区2").style(container=False)

with gr.Row():

@@ -147,6 +152,11 @@ def main():

resetBtn2.click(lambda: ([], [], "已重置"), None, [chatbot, history, status])

clearBtn.click(lambda: ("",""), None, [txt, txt2])

clearBtn2.click(lambda: ("",""), None, [txt, txt2])

+ if AUTO_CLEAR_TXT:

+ submitBtn.click(lambda: ("",""), None, [txt, txt2])

+ submitBtn2.click(lambda: ("",""), None, [txt, txt2])

+ txt.submit(lambda: ("",""), None, [txt, txt2])

+ txt2.submit(lambda: ("",""), None, [txt, txt2])

# Register callbacks for the basic function area

for k in functional:

if ("Visible" in functional[k]) and (not functional[k]["Visible"]): continue

@@ -174,16 +184,29 @@ def main():

return {chatbot: gr.update(label="当前模型:"+k)}

md_dropdown.select(on_md_dropdown_changed, [md_dropdown], [chatbot] )

# Register callbacks for the switchable plugin button

- def route(k, *args, **kwargs):

+ def route(request: gr.Request, k, *args, **kwargs):

if k in [r"打开插件列表", r"请先从插件列表中选择"]: return

- yield from ArgsGeneralWrapper(crazy_fns[k]["Function"])(*args, **kwargs)

+ yield from ArgsGeneralWrapper(crazy_fns[k]["Function"])(request, *args, **kwargs)

click_handle = switchy_bt.click(route,[switchy_bt, *input_combo, gr.State(PORT)], output_combo)

click_handle.then(on_report_generated, [cookies, file_upload, chatbot], [cookies, file_upload, chatbot])

cancel_handles.append(click_handle)

# Register callbacks for the stop buttons

stopBtn.click(fn=None, inputs=None, outputs=None, cancels=cancel_handles)

stopBtn2.click(fn=None, inputs=None, outputs=None, cancels=cancel_handles)

-

+ if ENABLE_AUDIO:

+ from crazy_functions.live_audio.audio_io import RealtimeAudioDistribution

+ rad = RealtimeAudioDistribution()

+ def deal_audio(audio, cookies):

+ rad.feed(cookies['uuid'].hex, audio)

+ audio_mic.stream(deal_audio, inputs=[audio_mic, cookies])

+

+ def init_cookie(cookies, chatbot):

+ # Assign a unique UUID to every visiting user

+ cookies.update({'uuid': uuid.uuid4()})

+ return cookies

+ demo.load(init_cookie, inputs=[cookies, chatbot], outputs=[cookies])

+ demo.load(lambda: 0, inputs=None, outputs=None, _js='()=>{ChatBotHeight();}')

+

# gradio's inbrowser option is not very reliable, so revert to the original browser-opening function

def auto_opentab_delay():

import threading, webbrowser, time
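The `init_cookie` / `deal_audio` pair above gives each browser session its own UUID and uses `cookies['uuid'].hex` as the key when feeding microphone chunks, so concurrent users' audio streams stay separate. A Gradio-free sketch of that idea; `PerUserBuffer` is a hypothetical stand-in, since the internals of `RealtimeAudioDistribution` are not shown in this diff:

```python
import uuid
from collections import defaultdict


def init_cookie(cookies):
    """Attach a fresh random UUID to this session's cookie dict (as in the diff)."""
    cookies.update({"uuid": uuid.uuid4()})
    return cookies


class PerUserBuffer:
    """Hypothetical per-user chunk store keyed by the session UUID's hex string."""

    def __init__(self):
        self._chunks = defaultdict(list)

    def feed(self, user_id, chunk):
        self._chunks[user_id].append(chunk)

    def read(self, user_id):
        # Hand back everything buffered for this user and clear it.
        return self._chunks.pop(user_id, [])
```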

diff --git a/check_proxy.py b/check_proxy.py

index 977802db49babe079a191dbda6815c216e156548..474988c129d51e3f1a4fa59634f073cbda553c09 100644

--- a/check_proxy.py

+++ b/check_proxy.py

@@ -3,15 +3,20 @@ def check_proxy(proxies):

import requests

proxies_https = proxies['https'] if proxies is not None else '无'

try:

- response = requests.get("https://ipapi.co/json/",

- proxies=proxies, timeout=4)

+ response = requests.get("https://ipapi.co/json/", proxies=proxies, timeout=4)

data = response.json()

print(f'查询代理的地理位置,返回的结果是{data}')

if 'country_name' in data:

country = data['country_name']

result = f"代理配置 {proxies_https}, 代理所在地:{country}"

elif 'error' in data:

- result = f"代理配置 {proxies_https}, 代理所在地:未知,IP查询频率受限"

+ alternative = _check_with_backup_source(proxies)

+ if alternative is None:

+ result = f"代理配置 {proxies_https}, 代理所在地:未知,IP查询频率受限"

+ else:

+ result = f"代理配置 {proxies_https}, 代理所在地:{alternative}"

+ else:

+ result = f"代理配置 {proxies_https}, 代理数据解析失败:{data}"

print(result)

return result

except:

@@ -19,6 +24,11 @@ def check_proxy(proxies):

print(result)

return result

+def _check_with_backup_source(proxies):

+ import random, string, requests

+ random_string = ''.join(random.choices(string.ascii_letters + string.digits, k=32))

+ try: return requests.get(f"http://{random_string}.edns.ip-api.com/json", proxies=proxies, timeout=4).json()['dns']['geo']

+ except: return None

def backup_and_download(current_version, remote_version):

"""

@@ -115,7 +125,7 @@ def auto_update(raise_error=False):

with open('./version', 'r', encoding='utf8') as f:

current_version = f.read()

current_version = json.loads(current_version)['version']

- if (remote_version - current_version) >= 0.01:

+ if (remote_version - current_version) >= 0.01-1e-5:

from colorful import print亮黄

print亮黄(

f'\n新版本可用。新版本:{remote_version},当前版本:{current_version}。{new_feature}')
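The version check now compares against `0.01-1e-5` instead of `0.01`. Versions are stored as floats (e.g. 3.47), and the difference of two such floats is not always exactly 0.01 in binary floating point, so a genuine one-step bump can come out slightly below 0.01 and be missed; the small tolerance absorbs that rounding error. A standalone sketch (function names are hypothetical); the `Decimal` variant is a swapped-in alternative, not what the project does:

```python
from decimal import Decimal


def is_newer(remote_version: float, current_version: float,
             step: float = 0.01, tol: float = 1e-5) -> bool:
    """True if remote_version is at least one release step ahead (float check, as in the diff)."""
    # remote - current can land a hair below `step` after float rounding,
    # so compare against step - tol rather than step itself.
    return (remote_version - current_version) >= step - tol


def is_newer_exact(remote_version: str, current_version: str, step: str = "0.01") -> bool:
    """Alternative: parse the version strings as Decimal so no rounding occurs."""
    return Decimal(remote_version) - Decimal(current_version) >= Decimal(step)
```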

@@ -137,7 +147,7 @@ def auto_update(raise_error=False):

else:

return

except:

- msg = '自动更新程序:已禁用'

+ msg = '自动更新程序:已禁用。建议排查:代理网络配置。'

if raise_error:

from toolbox import trimmed_format_exc

msg += trimmed_format_exc()
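`check_proxy` now falls back to `_check_with_backup_source` when ipapi.co answers with an error payload (typically rate limiting). The backup queries `http://<32 random characters>.edns.ip-api.com/json`; the random subdomain appears to be there so every lookup forces a fresh DNS resolution, and the response's `dns.geo` field describes where the resolver sits. The decision logic can be separated from the HTTP calls, as in this sketch where both lookups are injected as callables (an assumption made so it runs offline; the message wording is illustrative):

```python
from typing import Callable, Optional


def describe_proxy_location(proxies_https: str,
                            primary_lookup: Callable[[], dict],
                            backup_lookup: Callable[[], Optional[str]]) -> str:
    """Mirror check_proxy's branching: primary source first, backup on an error payload."""
    data = primary_lookup()
    if "country_name" in data:
        return f"proxy {proxies_https}, located in: {data['country_name']}"
    if "error" in data:
        alternative = backup_lookup()  # None means the backup lookup failed too
        if alternative is None:
            return f"proxy {proxies_https}, location unknown (IP lookup rate-limited)"
        return f"proxy {proxies_https}, located in: {alternative}"
    return f"proxy {proxies_https}, could not parse lookup result: {data}"
```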

diff --git a/config.py b/config.py

index 350a99b7a405f663d9a633616adb880e9f45fd1e..21e77d50793a6a208ad952118648578d75625dae 100644

--- a/config.py

+++ b/config.py

@@ -1,17 +1,27 @@

-# [step 1]>> e.g. API_KEY = "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" (this key is invalid)

-API_KEY = "sk-此处填API密钥" # Multiple API keys can be filled in at once, separated by ASCII commas, e.g. API_KEY = "sk-openaikey1,sk-openaikey2,fkxxxx-api2dkey1,fkxxxx-api2dkey2"

+"""

+ 以下所有配置也都支持利用环境变量覆写,环境变量配置格式见docker-compose.yml。

+ 读取优先级:环境变量 > config_private.py > config.py

+ --- --- --- --- --- --- --- --- --- --- --- --- --- --- --- --- --- --- --- --- ---

+ All the following configurations also support using environment variables to override,

+ and the environment variable configuration format can be seen in docker-compose.yml.

+ Configuration reading priority: environment variable > config_private.py > config.py

+"""

+

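+# [step 1]>> API_KEY = "sk-123456789xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx123456789". In rare cases you also need to fill in an organization ID (in the form org-123456789abcdefghijklmno); scroll down to the API_ORG setting

+API_KEY = "此处填API密钥" # Multiple API keys can be filled in at once, separated by ASCII commas, e.g. API_KEY = "sk-openaikey1,sk-openaikey2,fkxxxx-api2dkey3,azure-apikey4"

# [step 2]>> Set to True to use a proxy; leave unchanged if deploying directly on an overseas server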

USE_PROXY = False

if USE_PROXY:

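- # Format: [protocol]://[address]:[port]. Don't forget to set USE_PROXY to True before filling this in; leave unchanged if deploying directly on an overseas server

- # e.g. "socks5h://localhost:11284"

- # [protocol] Common protocols are just socks5h/http; e.g. the default local protocol of v2**y and ss* is socks5h, while cl**h defaults to http

- # [address] If unsure, localhost or 127.0.0.1 is always a safe choice (localhost means the proxy software runs on this machine)

- # [port] Look it up in the proxy software's settings. Interfaces differ between programs, but the port number is usually displayed prominently

-

- # Proxy address: open your proxy software to check the protocol (socks5/http), address (localhost) and port (11284)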

+ """

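+ Format: [protocol]://[address]:[port]. Don't forget to set USE_PROXY to True before filling this in; leave unchanged if deploying directly on an overseas server

+ <configuration tutorial & video tutorial> https://github.com/binary-husky/gpt_academic/issues/1>

+ [protocol] Common protocols are just socks5h/http; e.g. the default local protocol of v2**y and ss* is socks5h, while cl**h defaults to http

+ [address] If unsure, localhost or 127.0.0.1 is always a safe choice (localhost means the proxy software runs on this machine)

+ [port] Look it up in the proxy software's settings. Interfaces differ between programs, but the port number is usually displayed prominently

+ """

+ # Proxy address: open your proxy software to check the protocol (socks5h / http), address (localhost) and port (11284)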

proxies = {

# [protocol]://[address]:[port]

"http": "socks5h://localhost:11284", # another example: "http": "http://127.0.0.1:7890",

@@ -20,28 +30,40 @@ if USE_PROXY:

else:

proxies = None

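-# [step 3]>> Default number of threads that multi-threaded function plugins may use to access OpenAI concurrently. Free trial users are limited to 3 requests per minute, pay-as-you-go users to 3500 per minute

-# In short: free users should use 3; users who have linked a credit card to OpenAI can use 16 or higher. To raise the limit, see: https://platform.openai.com/docs/guides/rate-limits/overview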

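+# ------------------------------------ The settings below can improve the experience, but in most cases do not need to be changed ------------------------------------

+

+# URL redirection, to replace API_URL (high-risk setting! Do not change it under normal circumstances! By changing this setting, you fully expose your API key and conversations to the intermediary you configure!)

+# Format: API_URL_REDIRECT = {"https://api.openai.com/v1/chat/completions": "enter the redirect URL for api.openai.com here"}

+# Example: API_URL_REDIRECT = {"https://api.openai.com/v1/chat/completions": "https://reverse-proxy-url/v1/chat/completions"}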

+API_URL_REDIRECT = {}

+

+

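+# Default number of threads that multi-threaded function plugins may use to access OpenAI concurrently. Free trial users are limited to 3 requests per minute, pay-as-you-go users to 3500 per minute

+# In short: free-tier ($5 credit) users should use 3; users who have linked a credit card to OpenAI can use 16 or higher. To raise the limit, see: https://platform.openai.com/docs/guides/rate-limits/overview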

DEFAULT_WORKER_NUM = 3

-# [step 4]>> The settings below can improve the experience, but in most cases do not need to be changed

# Height of the chat window

CHATBOT_HEIGHT = 1115

+

# Code highlighting

CODE_HIGHLIGHT = True

+

# Window layout

-LAYOUT = "LEFT-RIGHT" # "LEFT-RIGHT" (side-by-side layout) # "TOP-DOWN" (stacked layout)

-DARK_MODE = True # "LEFT-RIGHT" (side-by-side layout) # "TOP-DOWN" (stacked layout)

+LAYOUT = "LEFT-RIGHT" # "LEFT-RIGHT" (side-by-side layout) # "TOP-DOWN" (stacked layout)

+DARK_MODE = True # dark mode / light mode

+

# How long to wait after sending a request to OpenAI before treating it as timed out

TIMEOUT_SECONDS = 30

+

# Port for the web page; -1 means a random port

WEB_PORT = -1

+

# Maximum number of retries if OpenAI does not respond (network lag, proxy failure, invalid key)

MAX_RETRY = 2

@@ -49,34 +71,43 @@ MAX_RETRY = 2

LLM_MODEL = "gpt-3.5-turbo" # alternatively "chatglm"

AVAIL_LLM_MODELS = ["newbing-free", "gpt-3.5-turbo", "gpt-4", "api2d-gpt-4", "api2d-gpt-3.5-turbo"]

+# ChatGLM(2) fine-tuned model path (to use a ChatGLM2 fine-tuned model, add "chatglmft" to AVAIL_LLM_MODELS)

+ChatGLM_PTUNING_CHECKPOINT = "" # e.g. "/home/hmp/ChatGLM2-6B/ptuning/output/6b-pt-128-1e-2/checkpoint-100"

+

+

# Where local LLMs such as ChatGLM run: CPU/GPU

LOCAL_MODEL_DEVICE = "cpu" # alternatively "cuda"

+LOCAL_MODEL_QUANT = "FP16" # default "FP16"; "INT4" enables the INT4-quantized version; "INT8" enables the INT8-quantized version

+

# Number of parallel gradio threads (no need to change)

CONCURRENT_COUNT = 100

+

+# Whether to automatically clear the input box on submit

+AUTO_CLEAR_TXT = False

+

+

+# Color theme, options: ["Default", "Chuanhu-Small-and-Beautiful"]

+THEME = "Default"

+

+

# Add a live2d decoration

ADD_WAIFU = False

+

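# Set usernames and passwords (no need to change) (this feature is unstable and depends on both the gradio version and the network; not recommended for local use)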

# [("username", "password"), ("username2", "password2"), ...]

AUTHENTICATION = []

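-# URL redirection, to replace API_URL (do not change under normal circumstances!!)

-# (High-risk setting! By changing this setting, you fully expose your API key and conversations to the intermediary you configure!)

-# Format {"https://api.openai.com/v1/chat/completions": "enter the redirect URL for api.openai.com here"}

-# e.g. API_URL_REDIRECT = {"https://api.openai.com/v1/chat/completions": "https://ai.open.com/api/conversation"}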

-API_URL_REDIRECT = {}

# To run under a sub-path (do not change under normal circumstances!!) (only takes effect together with changes to main.py!)

CUSTOM_PATH = "/"

-# To use newbing, put newbing's long cookie here

-NEWBING_STYLE = "creative" # ["creative", "balanced", "precise"]

-# From now on, if you call the "newbing-free" model, you do not need to fill in NEWBING_COOKIES

-NEWBING_COOKIES = """

-your bing cookies here

-"""

+

+# In rare cases, an official OpenAI key must be used together with an organization ID (in the form org-xxxxxxxxxxxxxxxxxxxxxxxx)

+API_ORG = ""

+

# To use Slack Claude, see request_llm/README.md for the tutorial

SLACK_CLAUDE_BOT_ID = ''

@@ -84,7 +115,35 @@ SLACK_CLAUDE_USER_TOKEN = ''

# To use AZURE, see the additional documentation docs\use_azure.md

-AZURE_ENDPOINT = "https://你的api名称.openai.azure.com/"

-AZURE_API_KEY = "填入azure openai api的密钥"

-AZURE_API_VERSION = "填入api版本"

-AZURE_ENGINE = "填入ENGINE"

+AZURE_ENDPOINT = "https://你亲手写的api名称.openai.azure.com/"

+AZURE_API_KEY = "填入azure openai api的密钥" # Recommended: put this key in API_KEY directly; this option will soon be deprecated

+AZURE_ENGINE = "填入你亲手写的部署名" # see docs\use_azure.md

+

+

+# Use Newbing

+NEWBING_STYLE = "creative" # ["creative", "balanced", "precise"]

+NEWBING_COOKIES = """

+put your new bing cookies here

+"""

+

+

+# Aliyun real-time speech recognition: hard to configure, recommended only for advanced users. See https://github.com/binary-husky/gpt_academic/blob/master/docs/use_audio.md

+ENABLE_AUDIO = False

+ALIYUN_TOKEN="" # e.g. f37f30e0f9934c34a992f6f64f7eba4f

+ALIYUN_APPKEY="" # e.g. RoPlZrM88DnAFkZK

+ALIYUN_ACCESSKEY="" # (no need to fill in)

+ALIYUN_SECRET="" # (no need to fill in)

+

+

+# Connect to the iFlytek Spark LLM https://console.xfyun.cn/services/iat

+XFYUN_APPID = "00000000"

+XFYUN_API_SECRET = "bbbbbbbbbbbbbbbbbbbbbbbbbbbbbbbb"

+XFYUN_API_KEY = "aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa"

+

+

+# Claude API KEY

+ANTHROPIC_API_KEY = ""

+

+

+# Custom API key format

+CUSTOM_API_KEY_PATTERN = ""
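The new header of `config.py` states the lookup order: environment variable > `config_private.py` > `config.py`. A minimal sketch of that precedence for a single setting follows. How gpt_academic actually parses environment values is not shown in this diff, so the type coercion here (booleans from "true"/"1"/"yes", JSON for lists and dicts, otherwise the type of the default) is an assumption, and `read_conf` is a hypothetical name:

```python
import json
import os


def read_conf(name, default, private_conf=None, environ=None):
    """Resolve one setting: environment variable > config_private.py > config.py default."""
    environ = os.environ if environ is None else environ
    private_conf = {} if private_conf is None else private_conf
    if name in environ:
        raw = environ[name]
        # Environment values are always strings; coerce using the default's type.
        if isinstance(default, bool):  # check bool before int (bool subclasses int)
            return raw.strip().lower() in ("1", "true", "yes")
        if isinstance(default, int):
            return int(raw)
        if isinstance(default, float):
            return float(raw)
        if isinstance(default, (list, dict)):
            return json.loads(raw)
        return raw
    if name in private_conf:
        return private_conf[name]
    return default
```

With this shape, `docker run -e WEB_PORT=50923 ...` (as in the Docker instructions) would win over any value in `config_private.py` or `config.py`.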

diff --git a/core_functional.py b/core_functional.py

index e126b5733a26b2c06668755fc44763efe3d30bac..b04e1e0df07ce20a9700307bc898934c3f1d90cc 100644

--- a/core_functional.py

+++ b/core_functional.py

@@ -1,20 +1,25 @@

# The 'primary' color corresponds to primary_hue in theme.py

# The 'secondary' color corresponds to neutral_hue in theme.py

# The 'stop' color corresponds to color_er in theme.py

-# The default button color is secondary

+import importlib

from toolbox import clear_line_break

def get_core_functions():

return {

"英语学术润色": {

- # Preface

+ # Prefix, added before your input. For example, use it to describe your request, such as translating, explaining code, or polishing

"Prefix": r"Below is a paragraph from an academic paper. Polish the writing to meet the academic style, " +

r"improve the spelling, grammar, clarity, concision and overall readability. When necessary, rewrite the whole sentence. " +

r"Furthermore, list all modification and explain the reasons to do so in markdown table." + "\n\n",

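- # Epilogue

+ # Suffix, added after your input. For example, together with the prefix it can wrap your input in quotation marks

"Suffix": r"",

- "Color": r"secondary", # button color

+ # Button color (default secondary)

+ "Color": r"secondary",

+ # Whether the button is visible (default True, i.e. visible)

+ "Visible": True,

+ # Whether to clear the history when triggered (default False, i.e. the previous conversation history is left untouched)

+ "AutoClearHistory": False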

},

"中文学术润色": {

"Prefix": r"作为一名中文学术论文写作改进助理,你的任务是改进所提供文本的拼写、语法、清晰、简洁和整体可读性," +

@@ -63,6 +68,7 @@ def get_core_functions():

"Prefix": r"我需要你找一张网络图片。使用Unsplash API(https://source.unsplash.com/960x640/?<英语关键词>)获取图片URL," +

r"然后请使用Markdown格式封装,并且不要有反斜线,不要用代码块。现在,请按以下描述给我发送图片:" + "\n\n",

"Suffix": r"",

+ "Visible": False,

},

"解释代码": {

"Prefix": r"请解释以下代码:" + "\n```\n",

@@ -73,6 +79,16 @@ def get_core_functions():

r"Note that, reference styles maybe more than one kind, you should transform each item correctly." +

r"Items need to be transformed:",

"Suffix": r"",

- "Visible": False,

}

}

+

+

+def handle_core_functionality(additional_fn, inputs, history, chatbot):

+ import core_functional

+ importlib.reload(core_functional) # hot-reload prompts

+ core_functional = core_functional.get_core_functions()

+ if "PreProcess" in core_functional[additional_fn]: inputs = core_functional[additional_fn]["PreProcess"](inputs) # apply the preprocessing function (if any)

+ inputs = core_functional[additional_fn]["Prefix"] + inputs + core_functional[additional_fn]["Suffix"]

+ if core_functional[additional_fn].get("AutoClearHistory", False):

+ history = []

+ return inputs, history
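`handle_core_functionality` centralises what happens when a shortcut button is pressed: an optional `PreProcess` hook, then `Prefix + input + Suffix`, then clearing the history if `AutoClearHistory` is set. The sketch below applies the same steps to a single entry dict without the hot reload (`importlib.reload`); `translate_entry` is made up for illustration and is not one of the project's buttons:

```python
def apply_core_function(entry, inputs, history):
    """Apply one core_functional-style entry to the user's input (no hot reload)."""
    if "PreProcess" in entry:
        inputs = entry["PreProcess"](inputs)  # optional preprocessing hook
    inputs = entry["Prefix"] + inputs + entry["Suffix"]
    if entry.get("AutoClearHistory", False):
        history = []  # send this request without prior context
    return inputs, history


# Hypothetical entry using the keys documented in core_functional.py.
translate_entry = {
    "Prefix": "Translate the following text into English:\n\n",
    "Suffix": "",
    "Color": "secondary",
    "Visible": True,
    "PreProcess": str.strip,
    "AutoClearHistory": True,
}
```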

diff --git "a/crazy_functions/Langchain\347\237\245\350\257\206\345\272\223.py" "b/crazy_functions/Langchain\347\237\245\350\257\206\345\272\223.py"

index 31c459aa1fe5ba35efb85988ff18528d4851f2e5..12735dfd733c345ebba80dc3481734c4282abb15 100644

--- "a/crazy_functions/Langchain\347\237\245\350\257\206\345\272\223.py"

+++ "b/crazy_functions/Langchain\347\237\245\350\257\206\345\272\223.py"

@@ -30,7 +30,7 @@ def 知识库问答(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_pro

)

yield from update_ui(chatbot=chatbot, history=history) # 刷新界面

from .crazy_utils import try_install_deps

- try_install_deps(['zh_langchain==0.2.1'])

+ try_install_deps(['zh_langchain==0.2.1', 'pypinyin'])

# < -------------------- read arguments --------------- >

if ("advanced_arg" in plugin_kwargs) and (plugin_kwargs["advanced_arg"] == ""): plugin_kwargs.pop("advanced_arg")

diff --git "a/crazy_functions/Latex\350\276\223\345\207\272PDF\347\273\223\346\236\234.py" "b/crazy_functions/Latex\350\276\223\345\207\272PDF\347\273\223\346\236\234.py"

index 810d80247c60bc2a7ca8eb8d9943677092872561..e79cf8223826e74c31b4cfb0220a395f38b335b2 100644

--- "a/crazy_functions/Latex\350\276\223\345\207\272PDF\347\273\223\346\236\234.py"

+++ "b/crazy_functions/Latex\350\276\223\345\207\272PDF\347\273\223\346\236\234.py"

@@ -157,7 +157,7 @@ def Latex英文纠错加PDF对比(txt, llm_kwargs, plugin_kwargs, chatbot, histo

try:

import glob, os, time, subprocess

subprocess.Popen(['pdflatex', '-version'])

- from .latex_utils import Latex精细分解与转化, 编译Latex

+ from .latex_fns.latex_actions import Latex精细分解与转化, 编译Latex

except Exception as e:

chatbot.append([ f"解析项目: {txt}",

f"尝试执行Latex指令失败。Latex没有安装, 或者不在环境变量PATH中。安装方法https://tug.org/texlive/。报错信息\n\n```\n\n{trimmed_format_exc()}\n\n```\n\n"])

@@ -234,7 +234,7 @@ def Latex翻译中文并重新编译PDF(txt, llm_kwargs, plugin_kwargs, chatbot,

try:

import glob, os, time, subprocess

subprocess.Popen(['pdflatex', '-version'])

- from .latex_utils import Latex精细分解与转化, 编译Latex

+ from .latex_fns.latex_actions import Latex精细分解与转化, 编译Latex

except Exception as e:

chatbot.append([ f"解析项目: {txt}",

f"尝试执行Latex指令失败。Latex没有安装, 或者不在环境变量PATH中。安装方法https://tug.org/texlive/。报错信息\n\n```\n\n{trimmed_format_exc()}\n\n```\n\n"])
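Both LaTeX plugins probe for a TeX installation with `subprocess.Popen(['pdflatex', '-version'])` inside a `try`, relying on `Popen` raising (e.g. `FileNotFoundError`) when the binary is not on `PATH`. `Popen` does not wait for the child, so the version banner may still be printing while the plugin continues. A side-effect-free alternative, offered as a sketch rather than the project's method, is to look the executable up with `shutil.which`:

```python
import shutil


def find_latex_tools(tools=("pdflatex",)):
    """Map each tool name to its full path on PATH, or None if it is missing."""
    return {tool: shutil.which(tool) for tool in tools}


def latex_available(tools=("pdflatex",)):
    """True only if every requested tool can be found on PATH."""
    return all(path is not None for path in find_latex_tools(tools).values())


if __name__ == "__main__":
    missing = [t for t, p in find_latex_tools().items() if p is None]
    print("LaTeX tools missing:", missing or "none")
```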

diff --git "a/crazy_functions/chatglm\345\276\256\350\260\203\345\267\245\345\205\267.py" "b/crazy_functions/chatglm\345\276\256\350\260\203\345\267\245\345\205\267.py"

new file mode 100644

index 0000000000000000000000000000000000000000..336d7cfc85ac159841758123fa057bd20a0bbbec

--- /dev/null

+++ "b/crazy_functions/chatglm\345\276\256\350\260\203\345\267\245\345\205\267.py"

@@ -0,0 +1,141 @@

+from toolbox import CatchException, update_ui, promote_file_to_downloadzone

+from .crazy_utils import request_gpt_model_multi_threads_with_very_awesome_ui_and_high_efficiency

+import datetime, json

+

+def fetch_items(list_of_items, batch_size):

+ for i in range(0, len(list_of_items), batch_size):

+ yield list_of_items[i:i + batch_size]

+

+def string_to_options(arguments):

+ import argparse

+ import shlex

+

+ # Create an argparse.ArgumentParser instance

+ parser = argparse.ArgumentParser()

+

+ # Add command-line arguments

+ parser.add_argument("--llm_to_learn", type=str, help="LLM model to learn", default="gpt-3.5-turbo")

+ parser.add_argument("--prompt_prefix", type=str, help="Prompt prefix", default='')

+ parser.add_argument("--system_prompt", type=str, help="System prompt", default='')

+ parser.add_argument("--batch", type=int, help="Batch size", default=50)

+ parser.add_argument("--pre_seq_len", type=int, help="pre_seq_len", default=50)

+ parser.add_argument("--learning_rate", type=float, help="learning_rate", default=2e-2)

+ parser.add_argument("--num_gpus", type=int, help="num_gpus", default=1)

+ parser.add_argument("--json_dataset", type=str, help="json_dataset", default="")

+ parser.add_argument("--ptuning_directory", type=str, help="ptuning_directory", default="")

+

+

+

+ # Parse the arguments

+ args = parser.parse_args(shlex.split(arguments))

+

+ return args

+

+@CatchException

+def 微调数据集生成(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, web_port):

+ """

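+ txt text typed by the user in the input box, e.g. a passage to translate, or a path containing files to process

+ llm_kwargs GPT model parameters such as temperature and top_p; usually just pass them through unchanged

+ plugin_kwargs plugin parameters

+ chatbot handle of the chat display box, used to show output to the user

+ history chat history, i.e. the prior context

+ system_prompt silent system prompt given to GPT

+ web_port port number the software is currently running on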

+ """

+ history = [] # clear the history to avoid input overflow

+ chatbot.append(("这是什么功能?", "[Local Message] 微调数据集生成"))

+ if ("advanced_arg" in plugin_kwargs) and (plugin_kwargs["advanced_arg"] == ""): plugin_kwargs.pop("advanced_arg")

+ args = plugin_kwargs.get("advanced_arg", None)

+ if args is None:

+ chatbot.append(("没给定指令", "退出"))

+ yield from update_ui(chatbot=chatbot, history=history); return

+ else:

+ arguments = string_to_options(arguments=args)

+

+ dat = []

+ with open(txt, 'r', encoding='utf8') as f:

+ for line in f.readlines():

+ json_dat = json.loads(line)

+ dat.append(json_dat["content"])

+

+ llm_kwargs['llm_model'] = arguments.llm_to_learn

+ for batch in fetch_items(dat, arguments.batch):

+ res = yield from request_gpt_model_multi_threads_with_very_awesome_ui_and_high_efficiency(

+ inputs_array=[f"{arguments.prompt_prefix}\n\n{b}" for b in (batch)],

+ inputs_show_user_array=[f"Show Nothing" for _ in (batch)],

+ llm_kwargs=llm_kwargs,

+ chatbot=chatbot,

+ history_array=[[] for _ in (batch)],

+ sys_prompt_array=[arguments.system_prompt for _ in (batch)],

+ max_workers=10 # maximum parallelism allowed by OpenAI

+ )

+

+ with open(txt+'.generated.json', 'a+', encoding='utf8') as f:

+ for b, r in zip(batch, res[1::2]):

+ f.write(json.dumps({"content":b, "summary":r}, ensure_ascii=False)+'\n')

+

+ promote_file_to_downloadzone(txt+'.generated.json', rename_file='generated.json', chatbot=chatbot)

+ return

+

+

+

+@CatchException

+def 启动微调(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, web_port):

+ """

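+ txt text typed by the user in the input box, e.g. a passage to translate, or a path containing files to process

+ llm_kwargs GPT model parameters such as temperature and top_p; usually just pass them through unchanged

+ plugin_kwargs plugin parameters

+ chatbot handle of the chat display box, used to show output to the user

+ history chat history, i.e. the prior context

+ system_prompt silent system prompt given to GPT

+ web_port port number the software is currently running on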

+ """

+ import subprocess

+ history = [] # clear the history to avoid input overflow

+ chatbot.append(("这是什么功能?", "[Local Message] 启动微调"))

+ if ("advanced_arg" in plugin_kwargs) and (plugin_kwargs["advanced_arg"] == ""): plugin_kwargs.pop("advanced_arg")

+ args = plugin_kwargs.get("advanced_arg", None)

+ if args is None:

+ chatbot.append(("没给定指令", "退出"))

+ yield from update_ui(chatbot=chatbot, history=history); return

+ else:

+ arguments = string_to_options(arguments=args)

+

+

+

+ pre_seq_len = arguments.pre_seq_len # 128

+ learning_rate = arguments.learning_rate # 2e-2

+ num_gpus = arguments.num_gpus # 1

+ json_dataset = arguments.json_dataset # 't_code.json'

+ ptuning_directory = arguments.ptuning_directory # '/home/hmp/ChatGLM2-6B/ptuning'

+

+ command = f"torchrun --standalone --nnodes=1 --nproc-per-node={num_gpus} main.py \

+ --do_train \

+ --train_file AdvertiseGen/{json_dataset} \

+ --validation_file AdvertiseGen/{json_dataset} \

+ --preprocessing_num_workers 20 \

+ --prompt_column content \

+ --response_column summary \

+ --overwrite_cache \

+ --model_name_or_path THUDM/chatglm2-6b \

+ --output_dir output/clothgen-chatglm2-6b-pt-{pre_seq_len}-{learning_rate} \

+ --overwrite_output_dir \

+ --max_source_length 256 \

+ --max_target_length 256 \

+ --per_device_train_batch_size 1 \

+ --per_device_eval_batch_size 1 \

+ --gradient_accumulation_steps 16 \

+ --predict_with_generate \

+ --max_steps 100 \

+ --logging_steps 10 \

+ --save_steps 20 \

+ --learning_rate {learning_rate} \

+ --pre_seq_len {pre_seq_len} \

+ --quantization_bit 4"

+

+ process = subprocess.Popen(command, shell=True, cwd=ptuning_directory)

+ try:

+ process.communicate(timeout=3600*24)

+ except subprocess.TimeoutExpired:

+ process.kill()

+ return
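The dataset-generation branch above writes one JSON object per line with `content` and `summary` keys, which are the column names handed to the ChatGLM2 P-Tuning script through `--prompt_column content --response_column summary`. Below is a minimal sketch of that JSONL round trip. `batched` is a hypothetical stand-in for the project's `fetch_items`, whose definition is not part of this diff, and the other helper names are illustrative only.

```python
import json
from typing import Iterable, Iterator, List, Sequence, TypeVar

T = TypeVar("T")


def batched(items: Sequence[T], size: int) -> Iterator[List[T]]:
    """Yield consecutive chunks of at most `size` items (hypothetical stand-in for fetch_items)."""
    if size <= 0:
        raise ValueError("size must be positive")
    for start in range(0, len(items), size):
        yield list(items[start:start + size])


def append_pairs(path: str, contents: Iterable[str], summaries: Iterable[str]) -> None:
    """Append one {"content": ..., "summary": ...} record per line, keeping non-ASCII text readable."""
    with open(path, "a", encoding="utf8") as f:
        for content, summary in zip(contents, summaries):
            f.write(json.dumps({"content": content, "summary": summary}, ensure_ascii=False) + "\n")


def read_field(path: str, field: str = "content") -> List[str]:
    """Return `field` from every non-blank JSONL line."""
    with open(path, "r", encoding="utf8") as f:
        return [json.loads(line)[field] for line in f if line.strip()]


# The multithreaded request helper returns [input0, output0, input1, output1, ...],
# which is why the plugin slices its result with [1::2] to keep only the model outputs.
responses = ["q1", "a1", "q2", "a2"]
outputs = responses[1::2]
```

Opening the file in append mode mirrors the plugin's `'a+'`: rerunning it adds records to an existing `.generated.json` instead of overwriting it.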

diff --git a/crazy_functions/crazy_utils.py b/crazy_functions/crazy_utils.py

index a1b1493ce7b196094b6b8ffdf08273bf392a4e30..ffe95e2be56e969c787f5d2895fa502540501660 100644

--- a/crazy_functions/crazy_utils.py

+++ b/crazy_functions/crazy_utils.py

@@ -130,6 +130,11 @@ def request_gpt_model_in_new_thread_with_ui_alive(

yield from update_ui(chatbot=chatbot, history=[]) # if it eventually succeeded, remove the error message

return final_result

+def can_multi_process(llm):

+ if llm.startswith('gpt-'): return True

+ if llm.startswith('api2d-'): return True

+ if llm.startswith('azure-'): return True

+ return False

def request_gpt_model_multi_threads_with_very_awesome_ui_and_high_efficiency(

inputs_array, inputs_show_user_array, llm_kwargs,

@@ -175,7 +180,7 @@ def request_gpt_model_multi_threads_with_very_awesome_ui_and_high_efficiency(

except: max_workers = 8

if max_workers <= 0: max_workers = 3

# disable multithreading for chatglm (and other local models); it may cause severe stalls

- if not (llm_kwargs['llm_model'].startswith('gpt-') or llm_kwargs['llm_model'].startswith('api2d-')):

+ if not can_multi_process(llm_kwargs['llm_model']):

max_workers = 1

executor = ThreadPoolExecutor(max_workers=max_workers)
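`can_multi_process` whitelists API-backed models by name prefix; any other model (for example a local ChatGLM) is forced down to a single worker. Because `str.startswith` accepts a tuple of prefixes, the same check fits in one call. The sketch below also folds in the clamping visible in this hunk. `pick_max_workers` is a hypothetical helper, and since the `try` body that reads the configured worker count is not shown in the diff, the value is simply passed in as an argument here.

```python
MULTI_PROCESS_PREFIXES = ("gpt-", "api2d-", "azure-")  # same prefixes as in the diff


def can_multi_process(llm: str) -> bool:
    # str.startswith returns True if the name starts with any prefix in the tuple.
    return llm.startswith(MULTI_PROCESS_PREFIXES)


def pick_max_workers(configured, llm: str) -> int:
    # Hypothetical helper mirroring the hunk: fall back to 8 when the configured value
    # is unusable, use 3 for non-positive values, and force 1 for local models.
    try:
        max_workers = int(configured)
    except (TypeError, ValueError):
        max_workers = 8
    if max_workers <= 0:
        max_workers = 3
    if not can_multi_process(llm):
        max_workers = 1
    return max_workers
```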

diff --git a/crazy_functions/latex_fns/latex_actions.py b/crazy_functions/latex_fns/latex_actions.py

new file mode 100644

index 0000000000000000000000000000000000000000..c59bc31d4b445f69ac58b1e84db789cc4bbe4b43

--- /dev/null

+++ b/crazy_functions/latex_fns/latex_actions.py

@@ -0,0 +1,447 @@

+from toolbox import update_ui, update_ui_lastest_msg # refresh the Gradio front end

+from toolbox import zip_folder, objdump, objload, promote_file_to_downloadzone

+from .latex_toolbox import PRESERVE, TRANSFORM

+from .latex_toolbox import set_forbidden_text, set_forbidden_text_begin_end, set_forbidden_text_careful_brace

+from .latex_toolbox import reverse_forbidden_text_careful_brace, reverse_forbidden_text, convert_to_linklist, post_process

+from .latex_toolbox import fix_content, find_main_tex_file, merge_tex_files, compile_latex_with_timeout

+

+import os, shutil

+import re

+import numpy as np

+

+pj = os.path.join

+

+

+def split_subprocess(txt, project_folder, return_dict, opts):

+ """

+ break down a latex file into a linked list;

+ each node uses a preserve flag to indicate whether it should

+ be processed by GPT.

+ """

+ text = txt

+ mask = np.zeros(len(txt), dtype=np.uint8) + TRANSFORM

+

+ # absorb everything above the title and author block

+ text, mask = set_forbidden_text(text, mask, r"^(.*?)\\maketitle", re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, r"^(.*?)\\begin{document}", re.DOTALL)

+ # absorb \iffalse ... \fi comments

+ text, mask = set_forbidden_text(text, mask, r"\\iffalse(.*?)\\fi", re.DOTALL)

+ # absorb begin-end blocks spanning fewer than 42 lines

+ text, mask = set_forbidden_text_begin_end(text, mask, r"\\begin\{([a-z\*]*)\}(.*?)\\end\{\1\}", re.DOTALL, limit_n_lines=42)

+ # absorb unnumbered display equations ($$...$$ and \[...\])

+ text, mask = set_forbidden_text(text, mask, [ r"\$\$([^$]+)\$\$", r"\\\[.*?\\\]" ], re.DOTALL)

+ # absorb other miscellaneous commands and environments

+ text, mask = set_forbidden_text(text, mask, [ r"\\section\{(.*?)\}", r"\\section\*\{(.*?)\}", r"\\subsection\{(.*?)\}", r"\\subsubsection\{(.*?)\}" ])

+ text, mask = set_forbidden_text(text, mask, [ r"\\bibliography\{(.*?)\}", r"\\bibliographystyle\{(.*?)\}" ])

+ text, mask = set_forbidden_text(text, mask, r"\\begin\{thebibliography\}.*?\\end\{thebibliography\}", re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, r"\\begin\{lstlisting\}(.*?)\\end\{lstlisting\}", re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, r"\\begin\{wraptable\}(.*?)\\end\{wraptable\}", re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, r"\\begin\{algorithm\}(.*?)\\end\{algorithm\}", re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, [r"\\begin\{wrapfigure\}(.*?)\\end\{wrapfigure\}", r"\\begin\{wrapfigure\*\}(.*?)\\end\{wrapfigure\*\}"], re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, [r"\\begin\{figure\}(.*?)\\end\{figure\}", r"\\begin\{figure\*\}(.*?)\\end\{figure\*\}"], re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, [r"\\begin\{multline\}(.*?)\\end\{multline\}", r"\\begin\{multline\*\}(.*?)\\end\{multline\*\}"], re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, [r"\\begin\{table\}(.*?)\\end\{table\}", r"\\begin\{table\*\}(.*?)\\end\{table\*\}"], re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, [r"\\begin\{minipage\}(.*?)\\end\{minipage\}", r"\\begin\{minipage\*\}(.*?)\\end\{minipage\*\}"], re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, [r"\\begin\{align\*\}(.*?)\\end\{align\*\}", r"\\begin\{align\}(.*?)\\end\{align\}"], re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, [r"\\begin\{equation\}(.*?)\\end\{equation\}", r"\\begin\{equation\*\}(.*?)\\end\{equation\*\}"], re.DOTALL)

+ text, mask = set_forbidden_text(text, mask, [r"\\includepdf\[(.*?)\]\{(.*?)\}", r"\\clearpage", r"\\newpage", r"\\appendix", r"\\tableofcontents", r"\\include\{(.*?)\}"])

+ text, mask = set_forbidden_text(text, mask, [r"\\vspace\{(.*?)\}", r"\\hspace\{(.*?)\}", r"\\label\{(.*?)\}", r"\\begin\{(.*?)\}", r"\\end\{(.*?)\}", r"\\item "])

+ text, mask = set_forbidden_text_careful_brace(text, mask, r"\\hl\{(.*?)\}", re.DOTALL)

+ # the reverse operations must come last

+ text, mask = reverse_forbidden_text_careful_brace(text, mask, r"\\caption\{(.*?)\}", re.DOTALL, forbid_wrapper=True)

+ text, mask = reverse_forbidden_text_careful_brace(text, mask, r"\\abstract\{(.*?)\}", re.DOTALL, forbid_wrapper=True)

+ text, mask = reverse_forbidden_text(text, mask, r"\\begin\{abstract\}(.*?)\\end\{abstract\}", re.DOTALL, forbid_wrapper=True)

+ root = convert_to_linklist(text, mask)

+

+ # final pass to improve robustness

+ root = post_process(root)

+

+ # write an HTML debug file: preserved regions (PRESERVE) in red, regions to transform (TRANSFORM) in black

+ with open(pj(project_folder, 'debug_log.html'), 'w', encoding='utf8') as f:

+ segment_parts_for_gpt = []

+ nodes = []

+ node = root

+ while True:

+ nodes.append(node)

+ show_html = node.string.replace('\n','<br/>')

+ if not node.preserve:

+ segment_parts_for_gpt.append(node.string)

+ f.write(f'<p style="color:black;">#{node.range}{show_html}#</p>')

+ else:

+ f.write(f'<p style="color:red;">{show_html}</p>')

+ node = node.next

+ if node is None: break

+

+ for n in nodes: n.next = None # unlink the nodes so the Manager dict can pickle them without deep recursion

+ return_dict['nodes'] = nodes

+ return_dict['segment_parts_for_gpt'] = segment_parts_for_gpt

+ return return_dict

+

+class LatexPaperSplit():

+ """

+ break down a latex file into a linked list;

+ each node uses a preserve flag to indicate whether it should

+ be processed by GPT.

+ """

+ def __init__(self) -> None:

+ self.nodes = None

+ self.msg = "*{\\scriptsize\\textbf{警告:该PDF由GPT-Academic开源项目调用大语言模型+Latex翻译插件一键生成," + \

+ "版权归原文作者所有。翻译内容可靠性无保障,请仔细鉴别并以原文为准。" + \

+ "项目Github地址 \\url{https://github.com/binary-husky/gpt_academic/}。"

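+ # Please do not delete or modify this warning unless you are the original author of the paper (if you are, feel free to contact the developers via the QQ contact listed in the README)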

+ self.msg_declare = "为了防止大语言模型的意外谬误产生扩散影响,禁止移除或修改此警告。}}\\\\"

+

+

+ def merge_result(self, arr, mode, msg, buggy_lines=[], buggy_line_surgery_n_lines=10):

+ """

+ Merge the result after the GPT process completed

+ """

+ result_string = ""

+ node_cnt = 0

+ line_cnt = 0

+

+ for node in self.nodes:

+ if node.preserve:

+ line_cnt += node.string.count('\n')

+ result_string += node.string

+ else:

+ translated_txt = fix_content(arr[node_cnt], node.string)

+ begin_line = line_cnt

+ end_line = line_cnt + translated_txt.count('\n')

+

+ # revert to the original text if a reported compile error falls within this segment's line range

+ if any([begin_line-buggy_line_surgery_n_lines <= b_line <= end_line+buggy_line_surgery_n_lines for b_line in buggy_lines]):

+ translated_txt = node.string

+

+ result_string += translated_txt

+ node_cnt += 1

+ line_cnt += translated_txt.count('\n')

+

+ if mode == 'translate_zh':

+ pattern = re.compile(r'\\begin\{abstract\}.*\n')

+ match = pattern.search(result_string)

+ if not match:

+ # match \abstract{xxxx}

+ pattern_compile = re.compile(r"\\abstract\{(.*?)\}", flags=re.DOTALL)

+ match = pattern_compile.search(result_string)

+ position = match.regs[1][0]

+ else:

+ # match \begin{abstract}xxxx\end{abstract}

+ position = match.end()

+ result_string = result_string[:position] + self.msg + msg + self.msg_declare + result_string[position:]

+ return result_string

+

+

+ def split(self, txt, project_folder, opts):

+ """

+ break down a latex file into a linked list;

+ each node uses a preserve flag to indicate whether it should

+ be processed by GPT.

+ P.S. use multiprocessing to avoid timeout error

+ """

+ import multiprocessing

+ manager = multiprocessing.Manager()

+ return_dict = manager.dict()

+ p = multiprocessing.Process(

+ target=split_subprocess,

+ args=(txt, project_folder, return_dict, opts))

+ p.start()

+ p.join()

+ p.close()

+ self.nodes = return_dict['nodes']

+ self.sp = return_dict['segment_parts_for_gpt']

+ return self.sp

+

+

+class LatexPaperFileGroup():

+ """

+ use tokenizer to break down text according to max_token_limit

+ """

+ def __init__(self):

+ self.file_paths = []

+ self.file_contents = []

+ self.sp_file_contents = []

+ self.sp_file_index = []

+ self.sp_file_tag = []

+

+ # count_token

+ from request_llm.bridge_all import model_info

+ enc = model_info["gpt-3.5-turbo"]['tokenizer']

+ def get_token_num(txt): return len(enc.encode(txt, disallowed_special=()))

+ self.get_token_num = get_token_num

+

+ def run_file_split(self, max_token_limit=1900):

+ """

+ use tokenizer to break down text according to max_token_limit

+ """

+ for index, file_content in enumerate(self.file_contents):

+ if self.get_token_num(file_content) < max_token_limit:

+ self.sp_file_contents.append(file_content)

+ self.sp_file_index.append(index)

+ self.sp_file_tag.append(self.file_paths[index])

+ else:

+ from ..crazy_utils import breakdown_txt_to_satisfy_token_limit_for_pdf

+ segments = breakdown_txt_to_satisfy_token_limit_for_pdf(file_content, self.get_token_num, max_token_limit)

+ for j, segment in enumerate(segments):

+ self.sp_file_contents.append(segment)

+ self.sp_file_index.append(index)

+ self.sp_file_tag.append(self.file_paths[index] + f".part-{j}.tex")

+ print('Segmentation: done')

+

+ def merge_result(self):

+ self.file_result = ["" for _ in range(len(self.file_paths))]

+ for r, k in zip(self.sp_file_result, self.sp_file_index):

+ self.file_result[k] += r

+

+ def write_result(self):

+ manifest = []

+ for path, res in zip(self.file_paths, self.file_result):

+ with open(path + '.polish.tex', 'w', encoding='utf8') as f:

+ manifest.append(path + '.polish.tex')

+ f.write(res)

+ return manifest

+

+

+def Latex精细分解与转化(file_manifest, project_folder, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, mode='proofread', switch_prompt=None, opts=[]):

+ import time, os, re

+ from ..crazy_utils import request_gpt_model_multi_threads_with_very_awesome_ui_and_high_efficiency

+ from .latex_actions import LatexPaperFileGroup, LatexPaperSplit

+

+ # <-------- locate the main tex file ---------->

+ maintex = find_main_tex_file(file_manifest, mode)

+ chatbot.append((f"定位主Latex文件", f'[Local Message] 分析结果:该项目的Latex主文件是{maintex}, 如果分析错误, 请立即终止程序, 删除或修改歧义文件, 然后重试。主程序即将开始, 请稍候。'))

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+ time.sleep(3)

+

+ # <-------- read the Latex files and merge a multi-file tex project into one large tex file ---------->

+ main_tex_basename = os.path.basename(maintex)

+ assert main_tex_basename.endswith('.tex')

+ main_tex_basename_bare = main_tex_basename[:-4]

+ may_exist_bbl = pj(project_folder, f'{main_tex_basename_bare}.bbl')

+ if os.path.exists(may_exist_bbl):

+ shutil.copyfile(may_exist_bbl, pj(project_folder, f'merge.bbl'))

+ shutil.copyfile(may_exist_bbl, pj(project_folder, f'merge_{mode}.bbl'))

+ shutil.copyfile(may_exist_bbl, pj(project_folder, f'merge_diff.bbl'))

+

+ with open(maintex, 'r', encoding='utf-8', errors='replace') as f:

+ content = f.read()

+ merged_content = merge_tex_files(project_folder, content, mode)

+

+ with open(project_folder + '/merge.tex', 'w', encoding='utf-8', errors='replace') as f:

+ f.write(merged_content)

+

+ # <-------- split the latex file at fine granularity ---------->

+ chatbot.append((f"Latex文件融合完成", f'[Local Message] 正在精细切分latex文件,这需要一段时间计算,文档越长耗时越长,请耐心等待。'))

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+ lps = LatexPaperSplit()

+ res = lps.split(merged_content, project_folder, opts) # time-consuming call

+

+ # <-------- split latex segments that are too long ---------->

+ pfg = LatexPaperFileGroup()

+ for index, r in enumerate(res):

+ pfg.file_paths.append('segment-' + str(index))

+ pfg.file_contents.append(r)

+

+ pfg.run_file_split(max_token_limit=1024)

+ n_split = len(pfg.sp_file_contents)

+

+ # <-------- switch the prompt according to the mode ---------->

+ inputs_array, sys_prompt_array = switch_prompt(pfg, mode)

+ inputs_show_user_array = [f"{mode} {f}" for f in pfg.sp_file_tag]

+

+ if os.path.exists(pj(project_folder,'temp.pkl')):

+

+ # <-------- [debug only] if a debug cache file exists, skip the GPT request stage ---------->

+ pfg = objload(file=pj(project_folder,'temp.pkl'))

+

+ else:

+ # <-------- multithreaded GPT requests ---------->

+ gpt_response_collection = yield from request_gpt_model_multi_threads_with_very_awesome_ui_and_high_efficiency(

+ inputs_array=inputs_array,

+ inputs_show_user_array=inputs_show_user_array,

+ llm_kwargs=llm_kwargs,

+ chatbot=chatbot,

+ history_array=[[""] for _ in range(n_split)],

+ sys_prompt_array=sys_prompt_array,

+ # max_workers=5, # limit on parallel tasks: at most 5 run at once, the rest wait in a queue

+ scroller_max_len = 40

+ )

+

+ # <-------- reassemble the text fragments into complete tex segments ---------->

+ pfg.sp_file_result = []

+ for i_say, gpt_say, orig_content in zip(gpt_response_collection[0::2], gpt_response_collection[1::2], pfg.sp_file_contents):

+ pfg.sp_file_result.append(gpt_say)

+ pfg.merge_result()

+

+ # <-------- store intermediate results for debugging ---------->

+ pfg.get_token_num = None

+ objdump(pfg, file=pj(project_folder,'temp.pkl'))

+

+ write_html(pfg.sp_file_contents, pfg.sp_file_result, chatbot=chatbot, project_folder=project_folder)

+

+ # <-------- write the output file ---------->

+ msg = f"当前大语言模型: {llm_kwargs['llm_model']},当前语言模型温度设定: {llm_kwargs['temperature']}。"

+ final_tex = lps.merge_result(pfg.file_result, mode, msg)

+ objdump((lps, pfg.file_result, mode, msg), file=pj(project_folder,'merge_result.pkl'))

+

+ with open(project_folder + f'/merge_{mode}.tex', 'w', encoding='utf-8', errors='replace') as f:

+ if mode != 'translate_zh' or "binary" in final_tex: f.write(final_tex)

+

+

+ # <-------- wrap up and exit ---------->

+ chatbot.append((f"完成了吗?", 'GPT结果已输出, 即将编译PDF'))

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+

+ # <-------- return ---------->

+ return project_folder + f'/merge_{mode}.tex'

+

+

+def remove_buggy_lines(file_path, log_path, tex_name, tex_name_pure, n_fix, work_folder_modified, fixed_line=[]):

+ try:

+ with open(log_path, 'r', encoding='utf-8', errors='replace') as f:

+ log = f.read()

+ import re

+ buggy_lines = re.findall(tex_name+':([0-9]{1,5}):', log)

+ buggy_lines = [int(l) for l in buggy_lines]

+ buggy_lines = sorted(buggy_lines)

+ buggy_line = buggy_lines[0]-1

+ print("reversing tex line that has errors", buggy_line)

+

+ # rebuild the document, reverting the segments that caused errors

+ if buggy_line not in fixed_line:

+ fixed_line.append(buggy_line)

+

+ lps, file_result, mode, msg = objload(file=pj(work_folder_modified,'merge_result.pkl'))

+ final_tex = lps.merge_result(file_result, mode, msg, buggy_lines=fixed_line, buggy_line_surgery_n_lines=5*n_fix)

+

+ with open(pj(work_folder_modified, f"{tex_name_pure}_fix_{n_fix}.tex"), 'w', encoding='utf-8', errors='replace') as f:

+ f.write(final_tex)

+

+ return True, f"{tex_name_pure}_fix_{n_fix}", buggy_lines

+ except:

+ print("Fatal error occurred, but we cannot identify error, please download zip, read latex log, and compile manually.")

+ return False, -1, [-1]
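Because pdflatex runs with `-file-line-error`, each error in the log is reported as `file.tex:LINE: message`, which is what the `tex_name+':([0-9]{1,5}):'` pattern picks up; `merge_result` then keeps the original text for any segment whose line span, widened by `buggy_line_surgery_n_lines`, contains a reported line. A sketch of both steps follows. The helper names are hypothetical, and `re.escape` is added here (the original does not use it) so that the `.` in the file name is matched literally.

```python
import re
from typing import Iterable, List


def error_lines(log: str, tex_name: str) -> List[int]:
    """Collect the line numbers of `tex_name` reported in a -file-line-error style log, sorted."""
    found = re.findall(re.escape(tex_name) + r":([0-9]{1,5}):", log)
    return sorted(int(n) for n in found)


def should_revert(begin_line: int, end_line: int, buggy_lines: Iterable[int], margin: int) -> bool:
    """True if any reported line falls inside [begin_line - margin, end_line + margin]."""
    return any(begin_line - margin <= b <= end_line + margin for b in buggy_lines)
```

In the diff the margin is `5*n_fix`, so each retry reverts a wider neighbourhood around the reported error.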

+

+

+def 编译Latex(chatbot, history, main_file_original, main_file_modified, work_folder_original, work_folder_modified, work_folder, mode='default'):

+ import os, time

+ n_fix = 1

+ fixed_line = []

+ max_try = 32

+ chatbot.append([f"正在编译PDF文档", f'编译已经开始。当前工作路径为{work_folder},如果程序停顿5分钟以上,请直接去该路径下取回翻译结果,或者重启之后再度尝试 ...']); yield from update_ui(chatbot=chatbot, history=history)

+ chatbot.append([f"正在编译PDF文档", '...']); yield from update_ui(chatbot=chatbot, history=history); time.sleep(1); chatbot[-1] = list(chatbot[-1]) # refresh the UI

+ yield from update_ui_lastest_msg('编译已经开始...', chatbot, history) # refresh the Gradio front end

+

+ while True:

+ import os

+ may_exist_bbl = pj(work_folder_modified, f'merge.bbl')

+ target_bbl = pj(work_folder_modified, f'{main_file_modified}.bbl')

+ if os.path.exists(may_exist_bbl) and not os.path.exists(target_bbl):

+ shutil.copyfile(may_exist_bbl, target_bbl)

+

+ # https://stackoverflow.com/questions/738755/dont-make-me-manually-abort-a-latex-compile-when-theres-an-error

+ yield from update_ui_lastest_msg(f'尝试第 {n_fix}/{max_try} 次编译, 编译原始PDF ...', chatbot, history) # refresh the Gradio front end

+ ok = compile_latex_with_timeout(f'pdflatex -interaction=batchmode -file-line-error {main_file_original}.tex', work_folder_original)

+

+ yield from update_ui_lastest_msg(f'尝试第 {n_fix}/{max_try} 次编译, 编译转化后的PDF ...', chatbot, history) # refresh the Gradio front end

+ ok = compile_latex_with_timeout(f'pdflatex -interaction=batchmode -file-line-error {main_file_modified}.tex', work_folder_modified)

+

+ if ok and os.path.exists(pj(work_folder_modified, f'{main_file_modified}.pdf')):

+ # only continue with the following steps if step two (compiling the converted PDF) succeeded

+ yield from update_ui_lastest_msg(f'尝试第 {n_fix}/{max_try} 次编译, 编译BibTex ...', chatbot, history) # refresh the Gradio front end

+ if not os.path.exists(pj(work_folder_original, f'{main_file_original}.bbl')):

+ ok = compile_latex_with_timeout(f'bibtex {main_file_original}.aux', work_folder_original)

+ if not os.path.exists(pj(work_folder_modified, f'{main_file_modified}.bbl')):

+ ok = compile_latex_with_timeout(f'bibtex {main_file_modified}.aux', work_folder_modified)

+

+ yield from update_ui_lastest_msg(f'尝试第 {n_fix}/{max_try} 次编译, 编译文献交叉引用 ...', chatbot, history) # refresh the Gradio front end

+ ok = compile_latex_with_timeout(f'pdflatex -interaction=batchmode -file-line-error {main_file_original}.tex', work_folder_original)

+ ok = compile_latex_with_timeout(f'pdflatex -interaction=batchmode -file-line-error {main_file_modified}.tex', work_folder_modified)

+ ok = compile_latex_with_timeout(f'pdflatex -interaction=batchmode -file-line-error {main_file_original}.tex', work_folder_original)

+ ok = compile_latex_with_timeout(f'pdflatex -interaction=batchmode -file-line-error {main_file_modified}.tex', work_folder_modified)

+

+ if mode!='translate_zh':

+ yield from update_ui_lastest_msg(f'尝试第 {n_fix}/{max_try} 次编译, 使用latexdiff生成论文转化前后对比 ...', chatbot, history) # refresh the Gradio front end

+ print( f'latexdiff --encoding=utf8 --append-safecmd=subfile {work_folder_original}/{main_file_original}.tex {work_folder_modified}/{main_file_modified}.tex --flatten > {work_folder}/merge_diff.tex')

+ ok = compile_latex_with_timeout(f'latexdiff --encoding=utf8 --append-safecmd=subfile {work_folder_original}/{main_file_original}.tex {work_folder_modified}/{main_file_modified}.tex --flatten > {work_folder}/merge_diff.tex')

+

+ yield from update_ui_lastest_msg(f'尝试第 {n_fix}/{max_try} 次编译, 正在编译对比PDF ...', chatbot, history) # refresh the Gradio front end

+ ok = compile_latex_with_timeout(f'pdflatex -interaction=batchmode -file-line-error merge_diff.tex', work_folder)

+ ok = compile_latex_with_timeout(f'bibtex merge_diff.aux', work_folder)

+ ok = compile_latex_with_timeout(f'pdflatex -interaction=batchmode -file-line-error merge_diff.tex', work_folder)

+ ok = compile_latex_with_timeout(f'pdflatex -interaction=batchmode -file-line-error merge_diff.tex', work_folder)

+

+ # <---------- check the results ----------->

+ results_ = ""

+ original_pdf_success = os.path.exists(pj(work_folder_original, f'{main_file_original}.pdf'))

+ modified_pdf_success = os.path.exists(pj(work_folder_modified, f'{main_file_modified}.pdf'))

+ diff_pdf_success = os.path.exists(pj(work_folder, f'merge_diff.pdf'))

+ results_ += f"原始PDF编译是否成功: {original_pdf_success};"

+ results_ += f"转化PDF编译是否成功: {modified_pdf_success};"

+ results_ += f"对比PDF编译是否成功: {diff_pdf_success};"

+ yield from update_ui_lastest_msg(f'第{n_fix}编译结束:<br/>{results_}...', chatbot, history) # refresh the Gradio front end

+

+ if diff_pdf_success:

+ result_pdf = pj(work_folder, 'merge_diff.pdf') # get pdf path (merge_diff.tex is compiled in work_folder)

+ promote_file_to_downloadzone(result_pdf, rename_file=None, chatbot=chatbot) # promote file to web UI

+ if modified_pdf_success:

+ yield from update_ui_lastest_msg(f'转化PDF编译已经成功, 即将退出 ...', chatbot, history) # refresh the Gradio front end

+ result_pdf = pj(work_folder_modified, f'{main_file_modified}.pdf') # get pdf path

+ origin_pdf = pj(work_folder_original, f'{main_file_original}.pdf') # get pdf path

+ if os.path.exists(pj(work_folder, '..', 'translation')):

+ shutil.copyfile(result_pdf, pj(work_folder, '..', 'translation', 'translate_zh.pdf'))

+ promote_file_to_downloadzone(result_pdf, rename_file=None, chatbot=chatbot) # promote file to web UI

+ # concatenate the original and translated PDFs

+ if original_pdf_success:

+ try:

+ from .latex_toolbox import merge_pdfs

+ concat_pdf = pj(work_folder_modified, f'comparison.pdf')

+ merge_pdfs(origin_pdf, result_pdf, concat_pdf)

+ promote_file_to_downloadzone(concat_pdf, rename_file=None, chatbot=chatbot) # promote file to web UI

+ except Exception as e:

+ pass

+ return True # success

+ else:

+ if n_fix>=max_try: break

+ n_fix += 1

+ can_retry, main_file_modified, buggy_lines = remove_buggy_lines(

+ file_path=pj(work_folder_modified, f'{main_file_modified}.tex'),

+ log_path=pj(work_folder_modified, f'{main_file_modified}.log'),

+ tex_name=f'{main_file_modified}.tex',

+ tex_name_pure=f'{main_file_modified}',

+ n_fix=n_fix,

+ work_folder_modified=work_folder_modified,

+ fixed_line=fixed_line

+ )

+ yield from update_ui_lastest_msg(f'由于最为关键的转化PDF编译失败, 将根据报错信息修正tex源文件并重试, 当前报错的latex代码处于第{buggy_lines}行 ...', chatbot, history) # refresh the Gradio front end

+ if not can_retry: break

+

+ return False # failure

+

+

+def write_html(sp_file_contents, sp_file_result, chatbot, project_folder):

+ # write html

+ try:

+ import shutil

+ from ..crazy_utils import construct_html

+ from toolbox import gen_time_str

+ ch = construct_html()

+ orig = ""

+ trans = ""

+ final = []

+ for c,r in zip(sp_file_contents, sp_file_result):

+ final.append(c)

+ final.append(r)

+ for i, k in enumerate(final):

+ if i%2==0:

+ orig = k

+ if i%2==1:

+ trans = k

+ ch.add_row(a=orig, b=trans)

+ create_report_file_name = f"{gen_time_str()}.trans.html"

+ ch.save_file(create_report_file_name)

+ shutil.copyfile(pj('./gpt_log/', create_report_file_name), pj(project_folder, create_report_file_name))

+ promote_file_to_downloadzone(file=f'./gpt_log/{create_report_file_name}', chatbot=chatbot)

+ except:

+ from toolbox import trimmed_format_exc

+ print('writing html result failed:', trimmed_format_exc())

diff --git a/crazy_functions/latex_fns/latex_toolbox.py b/crazy_functions/latex_fns/latex_toolbox.py

new file mode 100644

index 0000000000000000000000000000000000000000..a0c889a80867903efab84498fea71b4c7120fd21

--- /dev/null

+++ b/crazy_functions/latex_fns/latex_toolbox.py

@@ -0,0 +1,456 @@

+import os, shutil

+import re

+import numpy as np

+PRESERVE = 0

+TRANSFORM = 1

+

+pj = os.path.join

+

+class LinkedListNode():

+ """

+ Linked List Node

+ """

+ def __init__(self, string, preserve=True) -> None:

+ self.string = string

+ self.preserve = preserve

+ self.next = None

+ self.range = None

+ # self.begin_line = 0

+ # self.begin_char = 0

+

+def convert_to_linklist(text, mask):

+ root = LinkedListNode("", preserve=True)

+ current_node = root

+ for c, m, i in zip(text, mask, range(len(text))):

+ if (m==PRESERVE and current_node.preserve) \

+ or (m==TRANSFORM and not current_node.preserve):

+ # add

+ current_node.string += c

+ else:

+ current_node.next = LinkedListNode(c, preserve=(m==PRESERVE))

+ current_node = current_node.next

+ return root
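`convert_to_linklist` walks the text once and starts a new node whenever the per-character mask flips between PRESERVE and TRANSFORM. The same run-length grouping can be expressed with `itertools.groupby`. This sketch returns a plain list of `(preserve, string)` tuples instead of `LinkedListNode` objects and, unlike the original, does not prepend an empty preserve root node.

```python
from itertools import groupby
from typing import List, Sequence, Tuple

PRESERVE, TRANSFORM = 0, 1  # same convention as latex_toolbox


def to_segments(text: str, mask: Sequence[int]) -> List[Tuple[bool, str]]:
    """Group consecutive characters sharing a mask value into (preserve, string) segments."""
    segments = []
    for value, run in groupby(zip(text, mask), key=lambda pair: pair[1]):
        # bool() keeps the flag a plain Python bool even when mask is a numpy array.
        segments.append((bool(value == PRESERVE), "".join(char for char, _ in run)))
    return segments
```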

+

+def post_process(root):

+ # fix unbalanced braces

+ node = root

+ while True:

+ string = node.string

+ if node.preserve:

+ node = node.next

+ if node is None: break

+ continue

+ def break_check(string):

+ str_stack = [""] # (lv, index)

+ for i, c in enumerate(string):

+ if c == '{':

+ str_stack.append('{')

+ elif c == '}':

+ if len(str_stack) == 1:

+ print('stack fix')

+ return i

+ str_stack.pop(-1)

+ else:

+ str_stack[-1] += c

+ return -1

+ bp = break_check(string)

+

+ if bp == -1:

+ pass

+ elif bp == 0:

+ node.string = string[:1]

+ q = LinkedListNode(string[1:], False)

+ q.next = node.next

+ node.next = q

+ else:

+ node.string = string[:bp]

+ q = LinkedListNode(string[bp:], False)

+ q.next = node.next

+ node.next = q

+

+ node = node.next

+ if node is None: break

+

+ # preserve (do not send to GPT) empty lines and segments that are too short

+ node = root

+ while True:

+ if len(node.string.strip())==0: node.preserve = True

+ if len(node.string.strip())<42: node.preserve = True

+ node = node.next

+ if node is None: break

+ node = root

+ while True:

+ if node.next and node.preserve and node.next.preserve:

+ node.string += node.next.string

+ node.next = node.next.next

+ node = node.next

+ if node is None: break

+

+ # move leading and trailing line breaks out of transform nodes into the neighbouring preserve nodes

+ node = root

+ prev_node = None

+ while True:

+ if not node.preserve:

+ lstriped_ = node.string.lstrip().lstrip('\n')

+ if (prev_node is not None) and (prev_node.preserve) and (len(lstriped_)!=len(node.string)):

+ prev_node.string += node.string[:-len(lstriped_)]

+ node.string = lstriped_

+ rstriped_ = node.string.rstrip().rstrip('\n')

+ if (node.next is not None) and (node.next.preserve) and (len(rstriped_)!=len(node.string)):

+ node.next.string = node.string[len(rstriped_):] + node.next.string

+ node.string = rstriped_

+ # =====

+ prev_node = node

+ node = node.next

+ if node is None: break

+

+ # annotate each node with its line range

+ node = root

+ n_line = 0

+ expansion = 2

+ while True:

+ n_l = node.string.count('\n')

+ node.range = [n_line-expansion, n_line+n_l+expansion] # range to revert if compilation fails

+ n_line = n_line+n_l

+ node = node.next

+ if node is None: break

+ return root

+

+

+"""

+=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=

+Latex segmentation with a binary mask (PRESERVE=0, TRANSFORM=1)

+=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=

+"""

+

+

+def set_forbidden_text(text, mask, pattern, flags=0):

+ r"""

+ Add a preserve text area in this paper

+ e.g. with pattern = r"\\begin\{algorithm\}(.*?)\\end\{algorithm\}"

+ you can mask out (mask = PRESERVE so that the text becomes untouchable for GPT)

+ everything between "\begin{algorithm}" and "\end{algorithm}"

+ """

+ if isinstance(pattern, list): pattern = '|'.join(pattern)

+ pattern_compile = re.compile(pattern, flags)

+ for res in pattern_compile.finditer(text):

+ mask[res.span()[0]:res.span()[1]] = PRESERVE

+ return text, mask
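`set_forbidden_text` works on a per-character `uint8` mask: every character covered by a regex match is set to PRESERVE, and a list of patterns is joined with `|` into a single alternation. A small self-contained sketch of that masking step follows; the function name `mark_preserved` and the sample text are illustrative, not part of latex_toolbox.

```python
import re
from typing import List, Union

import numpy as np

PRESERVE, TRANSFORM = 0, 1  # same convention as latex_toolbox


def mark_preserved(text: str, mask: np.ndarray, pattern: Union[str, List[str]], flags: int = 0) -> np.ndarray:
    """Set mask to PRESERVE over every regex match; other characters keep their current state."""
    if isinstance(pattern, list):
        pattern = "|".join(pattern)
    for match in re.finditer(pattern, text, flags):
        mask[match.start():match.end()] = PRESERVE
    return mask


text = r"Intro text \begin{equation}E=mc^2\end{equation} more text"
mask = np.full(len(text), TRANSFORM, dtype=np.uint8)
mask = mark_preserved(text, mask, r"\\begin\{equation\}(.*?)\\end\{equation\}", re.DOTALL)
# Characters still marked TRANSFORM are the ones that would be sent to the model.
editable = "".join(ch for ch, m in zip(text, mask) if m == TRANSFORM)
```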

+

+def reverse_forbidden_text(text, mask, pattern, flags=0, forbid_wrapper=True):

+ r"""

+ Move an area out of the preserve area (make the text editable for GPT).

+ With forbid_wrapper=True only the first capture group becomes TRANSFORM and the wrapper stays PRESERVE; otherwise the whole match becomes TRANSFORM.

+ e.g.

+ \begin{abstract} blablablablablabla. \end{abstract}

+ """

+ if isinstance(pattern, list): pattern = '|'.join(pattern)

+ pattern_compile = re.compile(pattern, flags)

+ for res in pattern_compile.finditer(text):

+ if not forbid_wrapper:

+ mask[res.span()[0]:res.span()[1]] = TRANSFORM

+ else:

+ mask[res.regs[0][0]: res.regs[1][0]] = PRESERVE # '\\begin{abstract}'

+ mask[res.regs[1][0]: res.regs[1][1]] = TRANSFORM # abstract

+ mask[res.regs[1][1]: res.regs[0][1]] = PRESERVE # '\\end{abstract}'

+ return text, mask

+

+def set_forbidden_text_careful_brace(text, mask, pattern, flags=0):

+ r"""

+ Add a preserve text area in this paper (the text becomes untouchable for GPT).

+ Count the braces so as to catch the complete text area.

+ e.g.

+ \caption{blablablablabla\textbf{blablabla}blablabla.}

+ """

+ pattern_compile = re.compile(pattern, flags)

+ for res in pattern_compile.finditer(text):

+ brace_level = -1

+ p = begin = end = res.regs[0][0]

+ for _ in range(1024*16):

+ if text[p] == '}' and brace_level == 0: break

+ elif text[p] == '}': brace_level -= 1

+ elif text[p] == '{': brace_level += 1

+ p += 1

+ end = p+1

+ mask[begin:end] = PRESERVE

+ return text, mask

+

+def reverse_forbidden_text_careful_brace(text, mask, pattern, flags=0, forbid_wrapper=True):

+ r"""

+ Move an area out of the preserve area (make the text editable for GPT).

+ Count the braces so as to catch the complete text area.

+ e.g.

+ \caption{blablablablabla\textbf{blablabla}blablabla.}

+ """

+ pattern_compile = re.compile(pattern, flags)

+ for res in pattern_compile.finditer(text):

+ brace_level = 0

+ p = begin = end = res.regs[1][0]

+ for _ in range(1024*16):

+ if text[p] == '}' and brace_level == 0: break

+ elif text[p] == '}': brace_level -= 1

+ elif text[p] == '{': brace_level += 1

+ p += 1

+ end = p

+ mask[begin:end] = TRANSFORM

+ if forbid_wrapper:

+ mask[res.regs[0][0]:begin] = PRESERVE

+ mask[end:res.regs[0][1]] = PRESERVE

+ return text, mask
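The two `*_careful_brace` helpers exist because a non-greedy pattern such as `\\caption\{(.*?)\}` stops at the first closing brace, which truncates arguments that contain nested commands. They use the regex only to find where the command starts, then walk forward counting `{` and `}`. A minimal sketch of that brace walk; `braced_argument_span` is a hypothetical helper, not part of latex_toolbox.

```python
import re
from typing import Optional, Tuple


def braced_argument_span(text: str, command: str) -> Optional[Tuple[int, int]]:
    """Return the (start, end) span of the first `\\command{...}` argument, honouring nested braces."""
    m = re.search(re.escape("\\" + command) + r"\{", text)
    if m is None:
        return None
    depth = 0
    for p in range(m.end(), len(text)):
        if text[p] == "{":
            depth += 1
        elif text[p] == "}":
            if depth == 0:
                return m.end(), p  # p is the brace that closes the argument
            depth -= 1
    return None  # unbalanced braces
```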

+

+def set_forbidden_text_begin_end(text, mask, pattern, flags=0, limit_n_lines=42):

+ r"""

+ Find all \begin{} ... \end{} text blocks with fewer than limit_n_lines lines

+ and add them to the preserve area (whitelisted environments and longer blocks are searched recursively instead).

+ """

+ pattern_compile = re.compile(pattern, flags)

+ def search_with_line_limit(text, mask):

+ for res in pattern_compile.finditer(text):

+ cmd = res.group(1) # begin{what}

+ this = res.group(2) # content between begin and end

+ this_mask = mask[res.regs[2][0]:res.regs[2][1]]

+ white_list = ['document', 'abstract', 'lemma', 'definition', 'sproof',

+ 'em', 'emph', 'textit', 'textbf', 'itemize', 'enumerate']

+ if (cmd in white_list) or this.count('\n') >= limit_n_lines: # use a magical number 42

+ this, this_mask = search_with_line_limit(this, this_mask)

+ mask[res.regs[2][0]:res.regs[2][1]] = this_mask

+ else:

+ mask[res.regs[0][0]:res.regs[0][1]] = PRESERVE

+ return text, mask

+ return search_with_line_limit(text, mask)

+

+

+

+"""

+=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=

+Latex Merge File

+=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=

+"""

+

+def find_main_tex_file(file_manifest, mode):

+ """

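+ Find the main file among multiple tex files. It must contain the documentclass keyword; if several files qualify, they are scored and the best one is returned.

+ P.S. Hopefully nobody passes a LaTeX template in here (code to detect LaTeX templates added on 6.25).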

+ """

+ canidates = []

+ for texf in file_manifest:

+ if os.path.basename(texf).startswith('merge'):

+ continue

+ with open(texf, 'r', encoding='utf8', errors='ignore') as f:

+ file_content = f.read()

+ if r'\documentclass' in file_content:

+ canidates.append(texf)

+ else:

+ continue

+

+ if len(canidates) == 0:

+ raise RuntimeError('无法找到一个主Tex文件(包含documentclass关键字)')

+ elif len(canidates) == 1:

+ return canidates[0]

+ else: # if len(canidates) >= 2: penalize each latex source for words common in Latex templates (but rare in paper bodies), and return the highest-scoring file

+ canidates_score = []

+ # words that suggest a template document count as penalties

+ unexpected_words = [r'\LaTeX', 'manuscript', 'Guidelines', 'font', 'citations', 'rejected', 'blind review', 'reviewers']

+ expected_words = [r'\input', r'\ref', r'\cite']

+ for texf in canidates:

+ canidates_score.append(0)

+ with open(texf, 'r', encoding='utf8', errors='ignore') as f:

+ file_content = f.read()

+ for uw in unexpected_words:

+ if uw in file_content:

+ canidates_score[-1] -= 1

+ for uw in expected_words:

+ if uw in file_content:

+ canidates_score[-1] += 1

+ select = np.argmax(canidates_score) # return the highest-scoring candidate (the first one on ties)

+ return canidates[select]

+

+def rm_comments(main_file):

+ new_file_remove_comment_lines = []

+ for l in main_file.splitlines():

+ # Drop lines that consist entirely of a comment

+ if l.lstrip().startswith("%"):

+ pass

+ else:

+ new_file_remove_comment_lines.append(l)

+ main_file = '\n'.join(new_file_remove_comment_lines)

+ # main_file = re.sub(r"\\include{(.*?)}", r"\\input{\1}", main_file) # convert \include commands to \input commands

+ main_file = re.sub(r'(? 0 and node_string.count('\_') > final_tex.count('\_'):

+ # walk and replace any _ without \

+ final_tex = re.sub(r"(?{}".format(args))

+ pass

+

+ def test_on_close(self, *args):

+ self.aliyun_service_ok = False

+ pass

+

+ def test_on_result_chg(self, message, *args):

+ # print("test_on_chg:{}".format(message))

+ message = json.loads(message)

+ self.parsed_text = message['payload']['result']

+ self.event_on_result_chg.set()

+

+ def test_on_completed(self, message, *args):

+ # print("on_completed:args=>{} message=>{}".format(args, message))

+ pass

+

+

+ def audio_convertion_thread(self, uuid):

+ # Capture audio in an asynchronous thread

+ import nls # pip install git+https://github.com/aliyun/alibabacloud-nls-python-sdk.git

+ import tempfile

+ from scipy import io

+ from toolbox import get_conf

+ from .audio_io import change_sample_rate

+ from .audio_io import RealtimeAudioDistribution

+ NEW_SAMPLERATE = 16000

+ rad = RealtimeAudioDistribution()

+ rad.clean_up()

+ temp_folder = tempfile.gettempdir()

+ TOKEN, APPKEY = get_conf('ALIYUN_TOKEN', 'ALIYUN_APPKEY')

+ if len(TOKEN) == 0:

+ TOKEN = self.get_token()

+ self.aliyun_service_ok = True

+ URL="wss://nls-gateway.aliyuncs.com/ws/v1"

+ sr = nls.NlsSpeechTranscriber(

+ url=URL,

+ token=TOKEN,

+ appkey=APPKEY,

+ on_sentence_begin=self.test_on_sentence_begin,

+ on_sentence_end=self.test_on_sentence_end,

+ on_start=self.test_on_start,

+ on_result_changed=self.test_on_result_chg,

+ on_completed=self.test_on_completed,

+ on_error=self.test_on_error,

+ on_close=self.test_on_close,

+ callback_args=[uuid.hex]

+ )

+

+ r = sr.start(aformat="pcm",

+ enable_intermediate_result=True,

+ enable_punctuation_prediction=True,

+ enable_inverse_text_normalization=True)

+

+ while not self.stop:

+ # time.sleep(self.capture_interval)

+ audio = rad.read(uuid.hex)

+ if audio is not None:

+ # resample to 16 kHz and send the raw 16-bit PCM bytes directly;

+ # a file written by io.wavfile.write would carry a WAV header that would be sent as audio

+ dsdata = change_sample_rate(audio, rad.rate, NEW_SAMPLERATE) # 48000 --> 16000

+ data = dsdata.tobytes()

+ # print('audio len:', len(audio), '\t ds len:', len(dsdata), '\t need n send:', len(data)//640)

+ slices = zip(*(iter(data),) * 640) # groups of 640 bytes; a trailing partial group is dropped

+ for i in slices: sr.send_audio(bytes(i))

+ else:

+ time.sleep(0.1)

+

+ if not self.aliyun_service_ok:

+ self.stop = True

+ self.stop_msg = 'Aliyun音频服务异常,请检查ALIYUN_TOKEN和ALIYUN_APPKEY是否过期。'

+ r = sr.stop()

+

+ def get_token(self):

+ from toolbox import get_conf

+ import json

+ from aliyunsdkcore.request import CommonRequest

+ from aliyunsdkcore.client import AcsClient

+ AccessKey_ID, AccessKey_secret = get_conf('ALIYUN_ACCESSKEY', 'ALIYUN_SECRET')

+

+ # Create the AcsClient instance

+ client = AcsClient(

+ AccessKey_ID,

+ AccessKey_secret,

+ "cn-shanghai"

+ )

+

+ # Create the request and set its parameters

+ request = CommonRequest()

+ request.set_method('POST')

+ request.set_domain('nls-meta.cn-shanghai.aliyuncs.com')

+ request.set_version('2019-02-28')

+ request.set_action_name('CreateToken')

+

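+ token = None # avoid UnboundLocalError at `return token` if the request fails or the response lacks a token

+ try: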

+ response = client.do_action_with_exception(request)

+ print(response)

+ jss = json.loads(response)

+ if 'Token' in jss and 'Id' in jss['Token']:

+ token = jss['Token']['Id']

+ expireTime = jss['Token']['ExpireTime']

+ print("token = " + token)

+ print("expireTime = " + str(expireTime))

+ except Exception as e:

+ print(e)

+

+ return token
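The transcription loop in `audio_convertion_thread` above hands audio to `sr.send_audio` in 640-byte slices built with `zip(*(iter(data),) * 640)`. Assuming mono 16-bit samples, 640 bytes is 320 samples, i.e. 20 ms at 16 kHz. The sketch below isolates that slicing step; `pcm_frames` is a hypothetical helper, not part of the project, and the mono int16 input is an assumption. It also makes explicit that the `zip` idiom silently discards a final partial frame.

```python
import numpy as np

FRAME_BYTES = 640  # 640 bytes = 320 int16 samples = 20 ms of mono audio at 16 kHz


def pcm_frames(samples, frame_bytes=FRAME_BYTES, keep_tail=False):
    """Split int16 samples into headerless PCM byte frames (hypothetical helper)."""
    # '<i2' pins 16-bit little-endian output regardless of the host's byte order
    data = np.asarray(samples).astype('<i2').tobytes()
    # Same result as zip(*(iter(data),) * frame_bytes): full frames only
    n_full = len(data) // frame_bytes
    frames = [data[i * frame_bytes:(i + 1) * frame_bytes] for i in range(n_full)]
    tail = data[n_full * frame_bytes:]
    if keep_tail and tail:
        # Optionally keep the short final frame that the zip idiom discards
        frames.append(tail)
    return frames


if __name__ == "__main__":
    one_second = np.zeros(16000, dtype=np.int16)  # 1 s of silence at 16 kHz
    frames = pcm_frames(one_second)
    print(len(frames), len(frames[0]))  # 50 640
```

One second at 16 kHz is 32000 bytes, which divides evenly into 50 frames; buffers whose byte length is not a multiple of 640 lose their tail unless it is carried over to the next read.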

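`set_forbidden_text_begin_end` near the top of this section walks the `\begin{...} ... \end{...}` matches and either marks the whole block as preserved or, for whitelisted environments and blocks of `limit_n_lines` (42) lines or more, recurses into the body. The sketch below reproduces that control flow on a NumPy character mask. The regex, the `PRESERVE`/`TRANSFORM` values, the shortened whitelist and the name `protect_short_envs` are assumptions for illustration; the project's real pattern is supplied by the caller and is not shown in this hunk.

```python
import re
import numpy as np

# Assumed mask values; the project defines its own PRESERVE/TRANSFORM constants
PRESERVE, TRANSFORM = 0, 1

# Assumed pattern: group(1) is the environment name, group(2) its body
BEGIN_END = re.compile(r"\\begin\{([a-zA-Z\*]+)\}(.*?)\\end\{\1\}", re.DOTALL)
WHITE_LIST = {'document', 'abstract', 'itemize', 'enumerate'}


def protect_short_envs(text, mask, limit_n_lines=42):
    """Mark short \\begin...\\end blocks as PRESERVE; recurse into long or whitelisted ones."""
    for m in BEGIN_END.finditer(text):
        env, body = m.group(1), m.group(2)
        b0, b1 = m.span(2)
        if env in WHITE_LIST or body.count('\n') >= limit_n_lines:
            # Container to be translated: look for short environments nested inside it.
            # mask[b0:b1] is a NumPy view, so the recursive call writes into `mask` directly.
            protect_short_envs(body, mask[b0:b1], limit_n_lines)
        else:
            # Short block (e.g. a small equation or table): keep it verbatim
            mask[m.start():m.end()] = PRESERVE
    return mask


if __name__ == "__main__":
    doc = ("\\begin{document}\nIntro text.\n"
           "\\begin{equation}\nE = mc^2\n\\end{equation}\n"
           "More text.\n\\end{document}")
    mask = np.full(len(doc), TRANSFORM, dtype=np.uint8)
    protect_short_envs(doc, mask)
    print(''.join(c for c, flag in zip(doc, mask) if flag == PRESERVE))
```

Only the nested `equation` block ends up preserved, because `document` is whitelisted and is searched rather than frozen. Assuming `mask` is a NumPy array, the original's extra step of assigning the returned slice back into `mask` has the same effect as writing through the view.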
diff --git a/crazy_functions/live_audio/audio_io.py b/crazy_functions/live_audio/audio_io.py

new file mode 100644

index 0000000000000000000000000000000000000000..3ff83a66e8d9f0bb15250f1c3c2b5ea36745ff55

--- /dev/null

+++ b/crazy_functions/live_audio/audio_io.py

@@ -0,0 +1,51 @@

+import numpy as np

+from scipy import interpolate

+

+def Singleton(cls):

+ _instance = {}

+

+ def _singleton(*args, **kargs):

+ if cls not in _instance:

+ _instance[cls] = cls(*args, **kargs)

+ return _instance[cls]

+

+ return _singleton

+

+

+@Singleton

+class RealtimeAudioDistribution():

+ def __init__(self) -> None:

+ self.data = {}

+ self.max_len = 1024*1024

+ self.rate = 48000 # read-only; samples per second

+

+ def clean_up(self):

+ self.data = {}

+

+ def feed(self, uuid, audio):

+ self.rate, audio_ = audio

+ # print('feed', len(audio_), audio_[-25:])

+ if uuid not in self.data:

+ self.data[uuid] = audio_

+ else:

+ new_arr = np.concatenate((self.data[uuid], audio_))

+ if len(new_arr) > self.max_len: new_arr = new_arr[-self.max_len:]

+ self.data[uuid] = new_arr

+

+ def read(self, uuid):

+ if uuid in self.data:

+ res = self.data.pop(uuid)

+ print('\r read-', len(res), '-', max(res), end='', flush=True)

+ else:

+ res = None

+ return res

+

+def change_sample_rate(audio, old_sr, new_sr):

+ duration = audio.shape[0] / old_sr

+

+ time_old = np.linspace(0, duration, audio.shape[0])

+ time_new = np.linspace(0, duration, int(audio.shape[0] * new_sr / old_sr))

+

+ interpolator = interpolate.interp1d(time_old, audio.T)

+ new_audio = interpolator(time_new).T

+ return new_audio.astype(np.int16)

\ No newline at end of file
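`change_sample_rate` above resamples by linear interpolation: it lays the old and new sample positions over the same duration with `np.linspace` and evaluates `interp1d` on the new grid, so a 48 kHz buffer of n samples becomes `int(n * 16000 / 48000)` samples. The sketch below reproduces that for a mono buffer using `numpy.interp` instead of SciPy, a substitution made for brevity rather than the project's code; `linear_resample` is a hypothetical name.

```python
import numpy as np


def linear_resample(audio, old_sr, new_sr):
    """Mono linear-interpolation resampler mirroring change_sample_rate (sketch)."""
    n_old = audio.shape[0]
    n_new = int(n_old * new_sr / old_sr)
    duration = n_old / old_sr
    # Both grids span [0, duration], exactly as the np.linspace calls above do
    t_old = np.linspace(0, duration, n_old)
    t_new = np.linspace(0, duration, n_new)
    resampled = np.interp(t_new, t_old, audio.astype(np.float64))
    # Like the original, cast back to int16 (truncates toward zero)
    return resampled.astype(np.int16)


if __name__ == "__main__":
    sr_in, sr_out = 48000, 16000
    t = np.arange(sr_in) / sr_in  # 1 s at 48 kHz
    tone = (10000 * np.sin(2 * np.pi * 440 * t)).astype(np.int16)
    out = linear_resample(tone, sr_in, sr_out)
    print(out.shape, out.dtype)  # (16000,) int16
```

Plain linear interpolation applies no low-pass filter, so content above the new Nyquist frequency (8 kHz here) can alias; `scipy.signal.resample_poly` is a common alternative when that matters.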

diff --git "a/crazy_functions/\344\270\213\350\275\275arxiv\350\256\272\346\226\207\347\277\273\350\257\221\346\221\230\350\246\201.py" "b/crazy_functions/\344\270\213\350\275\275arxiv\350\256\272\346\226\207\347\277\273\350\257\221\346\221\230\350\246\201.py"

index 3da831fd07e361a532777c83bb02cff265b94abd..46275bf4fed59ca5692581ac9b354c4d4ad91d7c 100644

--- "a/crazy_functions/\344\270\213\350\275\275arxiv\350\256\272\346\226\207\347\277\273\350\257\221\346\221\230\350\246\201.py"

+++ "b/crazy_functions/\344\270\213\350\275\275arxiv\350\256\272\346\226\207\347\277\273\350\257\221\346\221\230\350\246\201.py"

@@ -144,11 +144,11 @@ def 下载arxiv论文并翻译摘要(txt, llm_kwargs, plugin_kwargs, chatbot, hi

# 尝试导入依赖,如果缺少依赖,则给出安装建议

try:

- import pdfminer, bs4

+ import bs4

except:

report_execption(chatbot, history,

a = f"解析项目: {txt}",

- b = f"导入软件依赖失败。使用该模块需要额外依赖,安装方法```pip install --upgrade pdfminer beautifulsoup4```。")

+ b = f"导入软件依赖失败。使用该模块需要额外依赖,安装方法```pip install --upgrade beautifulsoup4```。")

yield from update_ui(chatbot=chatbot, history=history) # 刷新界面

return