import os

from PIL import Image

import torch

import gradio as gr

os.system("git clone https://github.com/mchong6/JoJoGAN.git")

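import sys

os.chdir("JoJoGAN")

# When this file runs as a script, sys.path does not follow os.chdir,
# so add the cloned repo explicitly before importing util, model, etc.
sys.path.insert(0, os.getcwd())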

torch.backends.cudnn.benchmark = True

from torchvision import transforms, utils

from util import *

import math

import random

import numpy as np

from torch import nn, autograd, optim

from torch.nn import functional as F

from tqdm import tqdm

import lpips

from model import *

from e4e_projection import projection as e4e_projection

from copy import deepcopy

os.makedirs('inversion_codes', exist_ok=True)

os.makedirs('style_images', exist_ok=True)

os.makedirs('style_images_aligned', exist_ok=True)

os.makedirs('models', exist_ok=True)

os.system("wget http://dlib.net/files/shape_predictor_68_face_landmarks.dat.bz2")

os.system("bzip2 -dk shape_predictor_68_face_landmarks.dat.bz2")

os.system("mv shape_predictor_68_face_landmarks.dat models/dlibshape_predictor_68_face_landmarks.dat")

device = 'cpu'

download_with_pydrive = True #@param {type:"boolean"}

drive_ids = {
    "stylegan2-ffhq-config-f.pt": "1Yr7KuD959btpmcKGAUsbAk5rPjX2MytK",
    "e4e_ffhq_encode.pt": "1o6ijA3PkcewZvwJJ73dJ0fxhndn0nnh7",
    "restyle_psp_ffhq_encode.pt": "1nbxCIVw9H3YnQsoIPykNEFwWJnHVHlVd",
    "arcane_caitlyn.pt": "1gOsDTiTPcENiFOrhmkkxJcTURykW1dRc",
    "arcane_caitlyn_preserve_color.pt": "1cUTyjU-q98P75a8THCaO545RTwpVV-aH",
    "arcane_jinx_preserve_color.pt": "1jElwHxaYPod5Itdy18izJk49K1nl4ney",
    "arcane_jinx.pt": "1quQ8vPjYpUiXM4k1_KIwP4EccOefPpG_",
    "disney.pt": "1zbE2upakFUAx8ximYnLofFwfT8MilqJA",
    "disney_preserve_color.pt": "1Bnh02DjfvN_Wm8c4JdOiNV4q9J7Z_tsi",
    "jojo.pt": "13cR2xjIBj8Ga5jMO7gtxzIJj2PDsBYK4",
    "jojo_preserve_color.pt": "1ZRwYLRytCEKi__eT2Zxv1IlV6BGVQ_K2",
    "jojo_yasuho.pt": "1grZT3Gz1DLzFoJchAmoj3LoM9ew9ROX_",
    "jojo_yasuho_preserve_color.pt": "1SKBu1h0iRNyeKBnya_3BBmLr4pkPeg_L",
    "supergirl.pt": "1L0y9IYgzLNzB-33xTpXpecsKU-t9DpVC",
    "supergirl_preserve_color.pt": "1VmKGuvThWHym7YuayXxjv0fSn32lfDpE",
}

os.system("gdown https://drive.google.com/uc?id=1Yr7KuD959btpmcKGAUsbAk5rPjX2MytK")

os.system("mv stylegan2-ffhq-config-f.pt models/stylegan2-ffhq-config-f.pt")

latent_dim = 512

# Load original generator

original_generator = Generator(1024, latent_dim, 8, 2).to(device)

# Load the StyleGAN2 FFHQ weights downloaded above
ckpt = torch.load(os.path.join('models', 'stylegan2-ffhq-config-f.pt'), map_location=lambda storage, loc: storage)

original_generator.load_state_dict(ckpt["g_ema"], strict=False)

mean_latent = original_generator.mean_latent(10000)

# to be finetuned generator

generator = deepcopy(original_generator)

transform = transforms.Compose(
    [
        transforms.Resize((1024, 1024)),
        transforms.ToTensor(),
        transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)),
    ]
)

plt.rcParams['figure.dpi'] = 150

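# `filename` (an image inside test_input/) is not defined in this file
# and must be set before this point.
filepath = f'test_input/{filename}'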

name = strip_path_extension(filepath)+'.pt'

aligned_face = align_face(filepath)

os.system("gdown https://drive.google.com/uc?id=1o6ijA3PkcewZvwJJ73dJ0fxhndn0nnh7")

os.system("mv e4e_ffhq_encode.pt models/e4e_ffhq_encode.pt")

my_w = e4e_projection(aligned_face, name, device).unsqueeze(0)

pretrained = 'jojo' #@param ['supergirl', 'arcane_jinx', 'arcane_caitlyn', 'jojo_yasuho', 'jojo', 'disney']

#@markdown Preserve color tries to keep the colors of the original image by limiting the family of allowable transformations. Otherwise, the stylized image inherits the colors of the reference images, leading to heavier stylization.

preserve_color = False #@param{type:"boolean"}

if preserve_color:
    ckpt = f'{pretrained}_preserve_color.pt'
else:
    ckpt = f'{pretrained}.pt'

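# Fetch the fine-tuned checkpoint with gdown, as for the StyleGAN2 weights above.
# Assumes the file on Drive is named like its key in drive_ids.
os.system(f"gdown https://drive.google.com/uc?id={drive_ids[ckpt]}")
os.system(f"mv {ckpt} models/{ckpt}")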

ckpt = torch.load(os.path.join('models', ckpt), map_location=lambda storage, loc: storage)

generator.load_state_dict(ckpt["g"], strict=False)

#@title Generate results

n_sample = 1#@param {type:"number"}

seed = 3000 #@param {type:"number"}

torch.manual_seed(seed)

with torch.no_grad():
    generator.eval()
    z = torch.randn(n_sample, latent_dim, device=device)

    original_sample = original_generator([z], truncation=0.7, truncation_latent=mean_latent)
    sample = generator([z], truncation=0.7, truncation_latent=mean_latent)

    original_my_sample = original_generator(my_w, input_is_latent=True)
    my_sample = generator(my_w, input_is_latent=True)

# display reference images

style_path = f'style_images_aligned/{pretrained}.png'

style_image = transform(Image.open(style_path)).unsqueeze(0).to(device)

face = transform(aligned_face).unsqueeze(0).to(device)

my_output = torch.cat([style_image, face, my_sample], 0)

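# Recent torchvision releases take `value_range` here; the older `range` keyword was deprecated.
display_image(utils.make_grid(my_output, normalize=True, value_range=(-1, 1)), title='My sample')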

output = torch.cat([original_sample, sample], 0)

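# Recent torchvision releases take `value_range` here; the older `range` keyword was deprecated.
display_image(utils.make_grid(output, normalize=True, value_range=(-1, 1), nrow=n_sample), title='Random samples')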

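def inference(img, ver):
    # face2paint, model1 and model2 are not defined in this file;
    # they must be loaded before the interface is launched.
    if ver == 'version 2 (🔺 robustness,🔻 stylization)':
        out = face2paint(model2, img)
    else:
        out = face2paint(model1, img)
    return out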

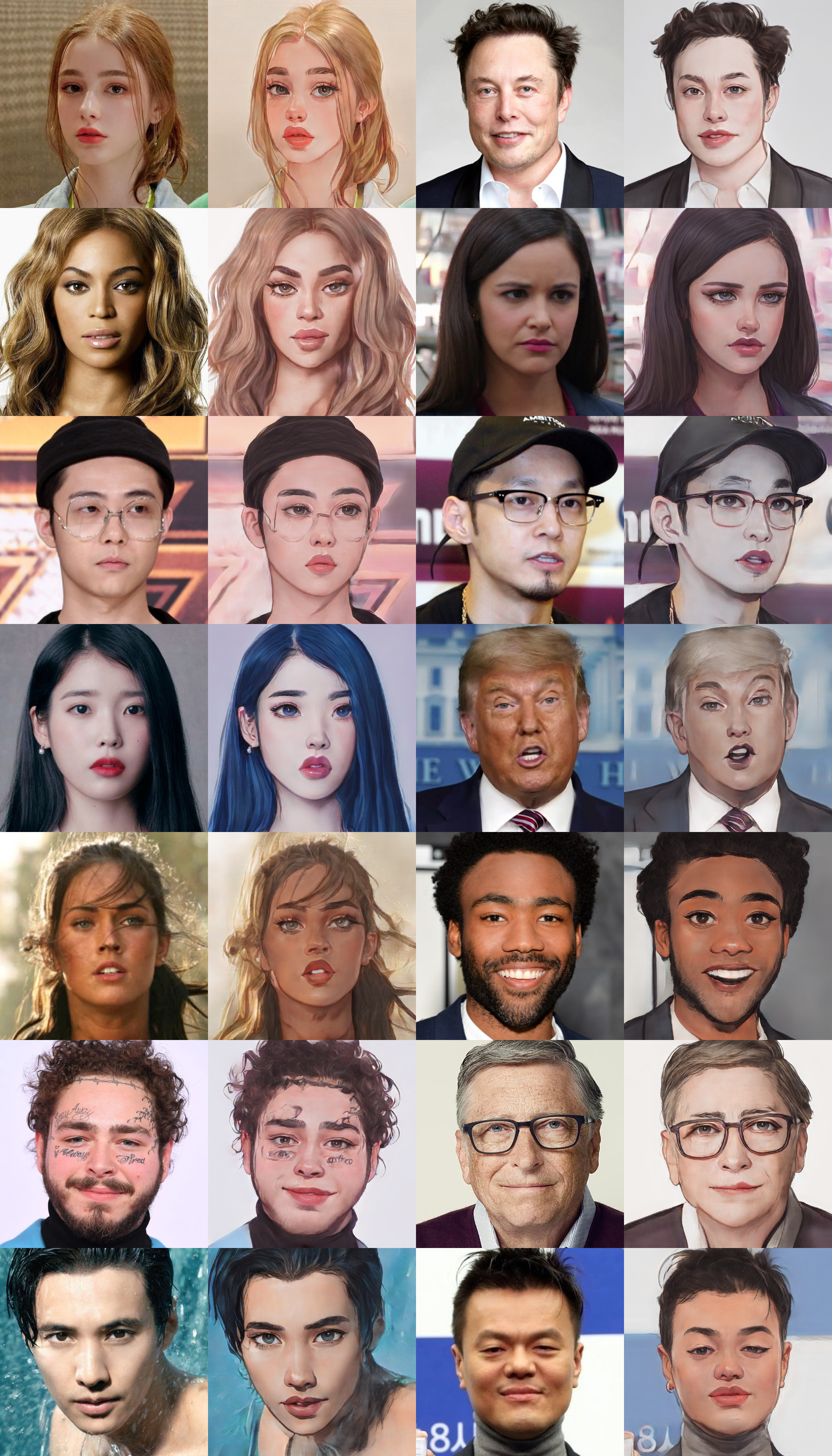

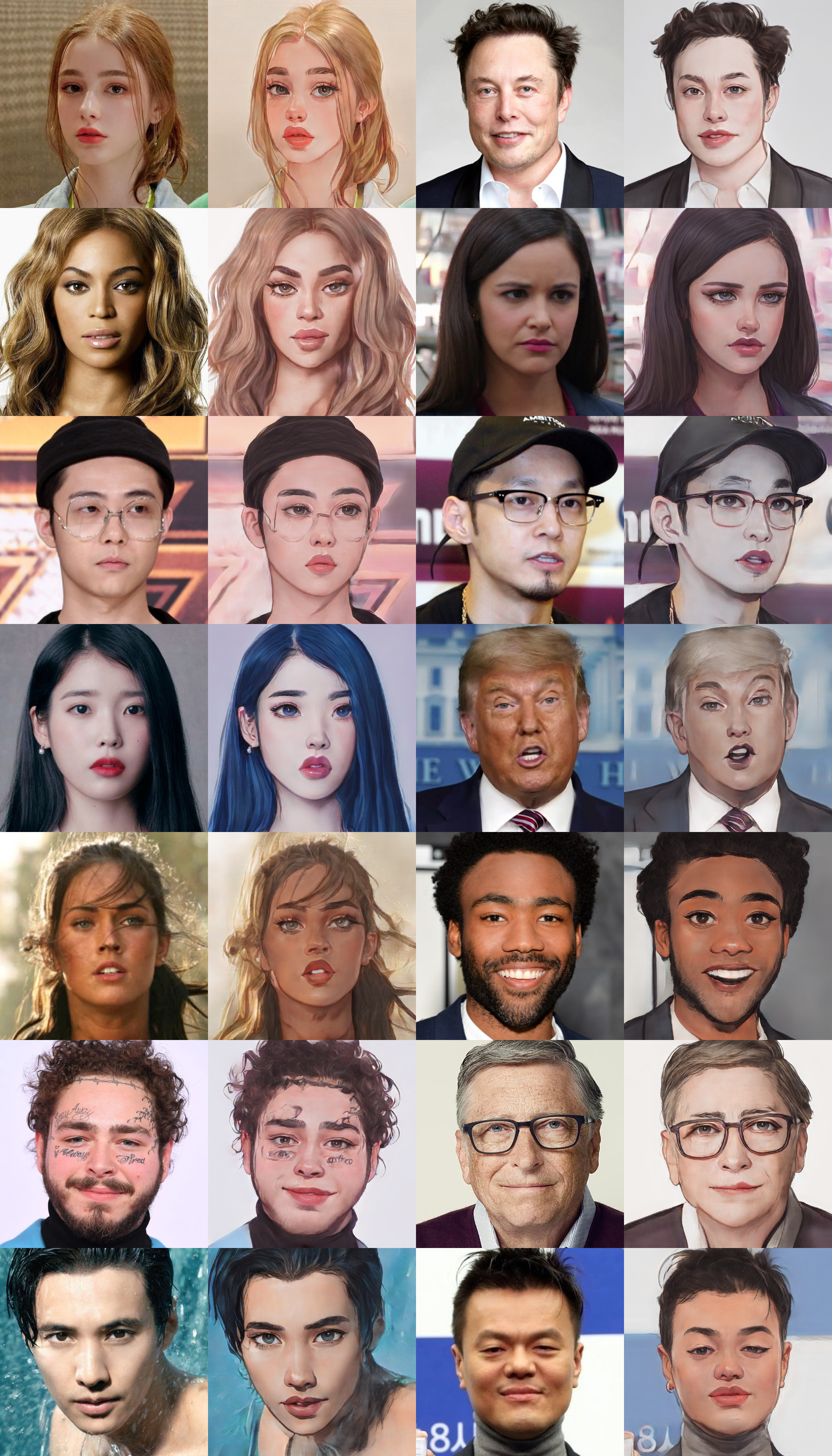

title = "AnimeGANv2"

description = "Gradio demo for AnimeGANv2 Face Portrait. To use it, upload an image or click one of the examples to load it. Read more at the links below. For best results, use a cropped portrait picture similar to the examples below."

article = """Github Repo Pytorch

samples from repo:
"""

examples = [
    ['groot.jpeg', 'version 2 (🔺 robustness,🔻 stylization)'],
    ['bill.png', 'version 1 (🔺 stylization, 🔻 robustness)'],
    ['tony.png', 'version 1 (🔺 stylization, 🔻 robustness)'],
    ['elon.png', 'version 2 (🔺 robustness,🔻 stylization)'],
    ['IU.png', 'version 1 (🔺 stylization, 🔻 robustness)'],
    ['billie.png', 'version 2 (🔺 robustness,🔻 stylization)'],
    ['will.png', 'version 2 (🔺 robustness,🔻 stylization)'],
    ['beyonce.png', 'version 1 (🔺 stylization, 🔻 robustness)'],
    ['gongyoo.jpeg', 'version 1 (🔺 stylization, 🔻 robustness)'],
]

gr.Interface(
    inference,
    [
        gr.inputs.Image(type="pil"),
        gr.inputs.Radio(
            ['version 1 (🔺 stylization, 🔻 robustness)', 'version 2 (🔺 robustness,🔻 stylization)'],
            type="value",
            default='version 2 (🔺 robustness,🔻 stylization)',
            label='version',
        ),
    ],
    gr.outputs.Image(type="pil"),
    title=title,
    description=description,
    article=article,
    enable_queue=True,
    examples=examples,
    allow_flagging=False,
).launch()