# 🔉👄 Wav2Lip STUDIO


https://user-images.githubusercontent.com/800903/262435301-af205a91-30d7-43f2-afcc-05980d581fe0.mp4

## 💡 Description

This repository contains the standalone version of Wav2Lip Studio.

It's an all-in-one solution: choose a video and a speech file (WAV or MP3), and the tool generates a lip-sync video. It also handles face swap, voice cloning, and video translation with a cloned voice (similar to HeyGen).

It improves the quality of the lip-sync videos generated by the [Wav2Lip tool](https://github.com/Rudrabha/Wav2Lip) by applying specific post-processing techniques.

## 📖 Quick Index

* [🚀 Updates](#-updates)

* [🔗 Requirements](#-requirements-windows-linux-macos)

* [💻 Installation](#-installation)

* [🐍 Tutorial](#tutorial)

* [🐍 Usage](#-usage)

* [👄 Keyframes Manager](#keyframes-manager)

* [👄 Input Video](#input-video)

* [📺 Examples](#-examples)

* [📖 Behind the scenes](#-behind-the-scenes)

* [💪 Quality tips](#-quality-tips)

* [⚠️ Noted Constraints](#-noted-constraints)

* [📝 To do](#-to-do)

* [😎 Contributing](#-contributing)

* [🙏 Appreciation](#-appreciation)

* [📝 Citation](#-citation)

* [📜 License](#-license)

* [☕ Support Wav2lip Studio](#-support-wav2lip-studio)

## 🚀 Updates

**2024.10.13 Add avatar for driving video**

- 💪 Add 10 new avatars for the driving video; you can now choose an avatar before generating the driving video.

- 📺 Add an option to close the mouth (or not) before generating the lip-sync video.

- 🐛 Easy Docker installation; follow the instructions below.

- ♻ Better macOS integration; follow the instructions below.

- 🚀 In the ComfyUI panel, you can now regenerate the mask and keyframes after modifying your video, for a better mouth mask.

**2024.09.03 ComfyUI Integration in Lip Sync Studio**

- 💪 Manage and chain your ComfyUI workflows end to end.

**2024.08.07 Major Update (Standalone version only)**

- 📺 "Add Driving Video feature": generate a driving video to get better lip sync.

**2024.05.06 Major Update (Standalone version only)**

- 🐛 "Data Structure": I had to restructure the files to improve output video quality. The previous version did everything in RAM at the expense of quality, and each pass degraded the video: for example, face swap + Wav2Lip meant a first pass for Wav2Lip and a second for the face swap, each losing quality. Each project now has a "data" directory containing all the files the tool needs, which preserves quality across passes (quality above all).

- ♻ "Wav2Lip Video Outputs": generated Wav2Lip videos are now numbered in the output directory. Clicking "video quality" loads the latest video of the selected quality.

- 👄 "Zero Mouth": closes a person's mouth before lip-syncing. Sometimes it has little effect or adds some flickering, but I have had good results in some cases. Technically, it takes two passes to close the mouth; the frames used by the tool are in "data\zero".

- 👬"Clone Voice": the interface has been revised.

- 💪 "High Quality Vs Best Quality": in this version, there is not much difference between High and Best. Best is meant for videos where faces are large on screen, such as 4K videos. Behind the scenes, it simply uses different GFPGAN models and a different face alignment.

- ▶ "Show Frame Number": In Low Quality only, the frame number appears in the top left corner. This helps to identify the frame where you want to make modifications.

- 📺"Show Wav2Lip Output": this feature allows you to see the Wav2Lip output taking into account the input audio.

- "New Face Alignment": The face alignment has been reviewed.

- 🔑 "Zoom In, Zoom Out, Move Right,...": these controls show why results are sometimes strange (deformed lips, broken teeth, or other odd artifacts). I recommend the video tutorial here: https://www.patreon.com/posts/key-feature-103716855

**2024.02.09 Speed-Up Update (Standalone version only)**

- 👬 Clone voice: Add controls to manage the voice clone (See Usage section)

- 🎏 Translate video: Add features to the translation panel to manage translations (See Usage section)

- 📺 Add Trim feature: Add a feature to trim the video.

- 🔑 Automatic mask: Add a feature to automatically calculate the mask parameters (padding, dilate...). You can change parameters if needed.

- 🚀 Speed up processes: all processes are now faster (analysis, face swap, generation in High quality).

- 💪 Less disk space used: temporary files are removed after generation and only the necessary data is kept, which greatly reduces disk usage.

**2024.01.20 Major Update (Standalone version only)**

- ♻ Manage project: Add a feature to manage multiple projects

- 👪 Introduced multiple face swap: can now swap multiple faces in one shot (See Usage section)

- ⛔ Visible face restriction: the whole process can now run even if no face is detected in a frame!

- 📺 Video Size: works with high-resolution video input (tested with 1920x1080; should work with 4K, but slowly)

- 🔑 Keyframe manager: Add a keyframe manager for better control of the video generation

- 🍪 coqui TTS integration: Remove bark integration, use coqui TTS instead (See Usage section)

- 💬 Conversation: Add a conversation feature with multiple people (See Usage section)

- 🔈 Record your own voice: Add a feature to record your own voice (See Usage section)

- 👬 Clone voice: Add a feature to clone voice from video (See Usage section)

- 🎏 translate video: Add a feature to translate video with voice clone (See Usage section)

- 🔉 Volume amplifier for wav2lip: Add a feature to amplify the volume of the wav2lip output (See Usage section)

- 🕡 Add a delay before the speech starts

- 🚀 Speed up process: Speed up the process

**2023.09.13**

- 👪 Introduced face swap: facefusion integration (See Usage section), **this feature is experimental**.

**2023.08.22**

- 👄 Introduced [bark](https://github.com/suno-ai/bark/) (See Usage section), **this feature is experimental**.

**2023.08.20**

- 🚢 Introduced the GFPGAN model as an option.

- ▶ Added the feature to resume generation.

- 📏 Optimized to release memory post-generation.

**2023.08.17**

- 🐛 Fixed purple lips bug

**2023.08.16**

- ⚡ Added Wav2lip and enhanced video output, with the option to download the one that's best for you, likely the "generated video".

- 🚢 Updated User Interface: Introduced control over CodeFormer Fidelity.

- 👄 Removed image as input, [SadTalker](https://github.com/OpenTalker/SadTalker) is better suited for this.

- 🐛 Fixed a bug regarding the discrepancy between input and output video that incorrectly positioned the mask.

- 💪 Refined the quality process for greater efficiency.

- 🚫 Interrupting the process now still generates a video if frames have already been created

**2023.08.13**

- ⚡ Speed-up computation

- 🚢 Change User Interface: Add controls on hidden parameters

- 👄 Only Track mouth if needed

- 📰 Control debug

- 🐛 Fix resize factor bug

# 💻 Installation

## 🔗 Requirements (windows, linux, macos)

1. FFmpeg: download it from the [official FFmpeg site](https://ffmpeg.org/download.html) and follow the instructions for your operating system. FFmpeg must be accessible from the command line.

- Make sure ffmpeg is in your PATH environment variable; if it is not, add it.

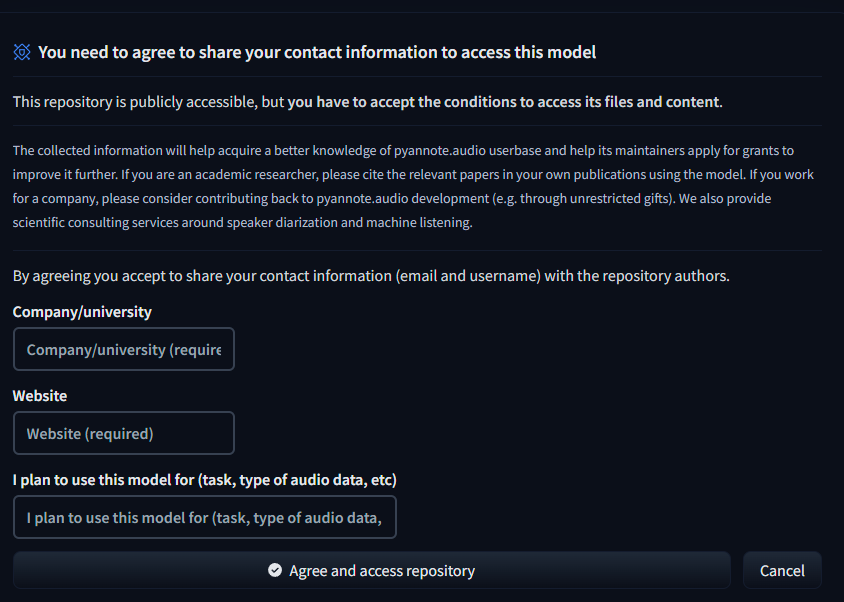

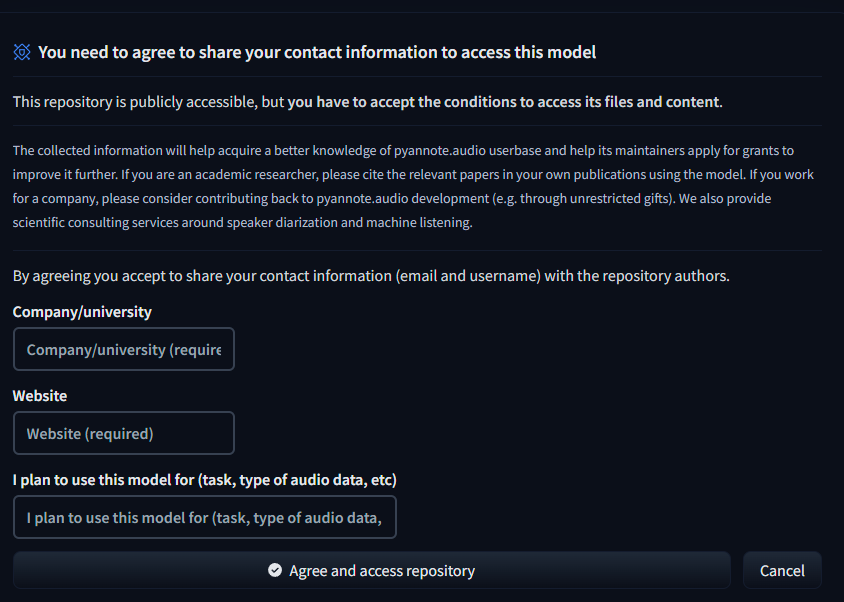

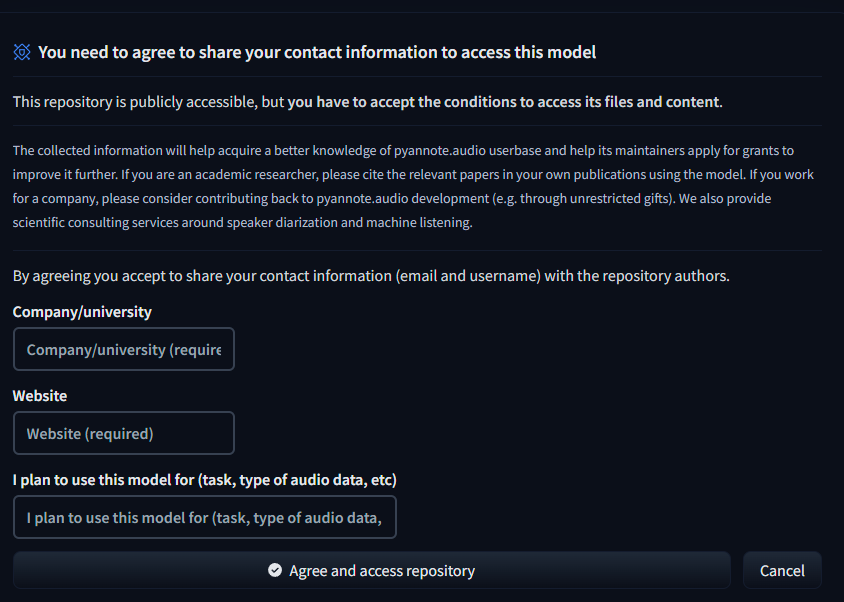

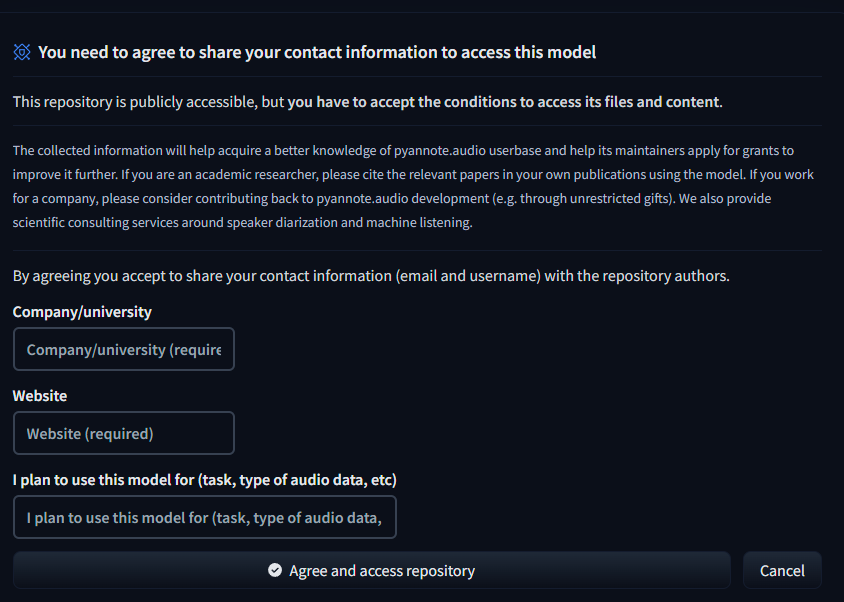

2. pyannote.audio: you need to agree to share your contact information to access the pyannote models.

To do so, visit both links:

- [pyannote diarization-3.1 huggingface repository](https://huggingface.co/pyannote/speaker-diarization-3.1)

- [pyannote segmentation-3.0 huggingface repository](https://huggingface.co/pyannote/segmentation-3.0)

Fill in each field and click "Agree and access repository".

3. Create a Hugging Face access token:

1. Log in to your account.

2. Go to [Access Tokens](https://huggingface.co/settings/tokens) in your settings.

3. Create a new token with read access.

4. Copy the token.

5. Paste it into the `api_keys.json` file:

```json
{
  "huggingface_token": "your token"
}
```

4. Install [Python 3.10.11](https://www.python.org/downloads/release/python-31011/) (macOS users: follow the instructions below).

5. Install [git](https://git-scm.com/downloads).

6. Check the FFmpeg, Python, CUDA, and git installations:

```bash

python --version

git --version

ffmpeg -version

nvcc --version  # only if you have an NVIDIA GPU (not on macOS)

```

The output should look something like this:

```text

Python 3.10.11

git version 2.35.1.windows.2

ffmpeg version N-110509-g722ff74055-20230506 Copyright (c) 2000-2023 the FFmpeg developers built with gcc 12.2.0 (crosstool-NG 1.25.0.152_89671bf) ...

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2022 NVIDIA Corporation

Built on Wed_Sep_21_10:41:10_Pacific_Daylight_Time_2022

Cuda compilation tools, release 11.8, V11.8.89

Build cuda_11.8.r11.8/compiler.31833905_0

```
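If you want to run the checks above in one go, the following Python script is a minimal sketch; it is not shipped with Wav2Lip Studio, and all function names are hypothetical. It assumes `api_keys.json` sits in the current working directory and treats the placeholder value `"your token"` as missing. It checks for Python 3.10 as required on Windows and Linux; macOS users following the instructions below use Python 3.9 instead.

```python
"""Hypothetical pre-flight check for the requirements above (not part of Wav2Lip Studio)."""
import json
import shutil
import sys
from pathlib import Path

REQUIRED_TOOLS = ["git", "ffmpeg"]
OPTIONAL_TOOLS = ["nvcc"]  # only relevant with an NVIDIA GPU (not on macOS)


def python_ok(expected=(3, 10)):
    """Return True if the running interpreter matches the expected (major, minor) version."""
    return sys.version_info[:2] == tuple(expected)


def missing_tools(tools):
    """Return the tools from `tools` that cannot be found on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


def read_hf_token(path="api_keys.json"):
    """Return the Hugging Face token, or None if the file is missing or still holds the placeholder."""
    file = Path(path)
    if not file.is_file():
        return None
    token = json.loads(file.read_text(encoding="utf-8")).get("huggingface_token")
    if not token or token == "your token":
        return None
    return token


if __name__ == "__main__":
    print("Python 3.10:", "ok" if python_ok() else f"found {sys.version.split()[0]}")
    print("Missing required tools:", missing_tools(REQUIRED_TOOLS) or "none")
    print("Missing optional tools:", missing_tools(OPTIONAL_TOOLS) or "none")
    print("Hugging Face token:", "set" if read_hf_token() else "missing")
```

`shutil.which` looks tools up on PATH the same way a shell does, so a missing entry here usually means the PATH step above was skipped.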

## Linux Users

1. Make sure git-lfs is installed:

```bash

sudo apt-get install git-lfs

```

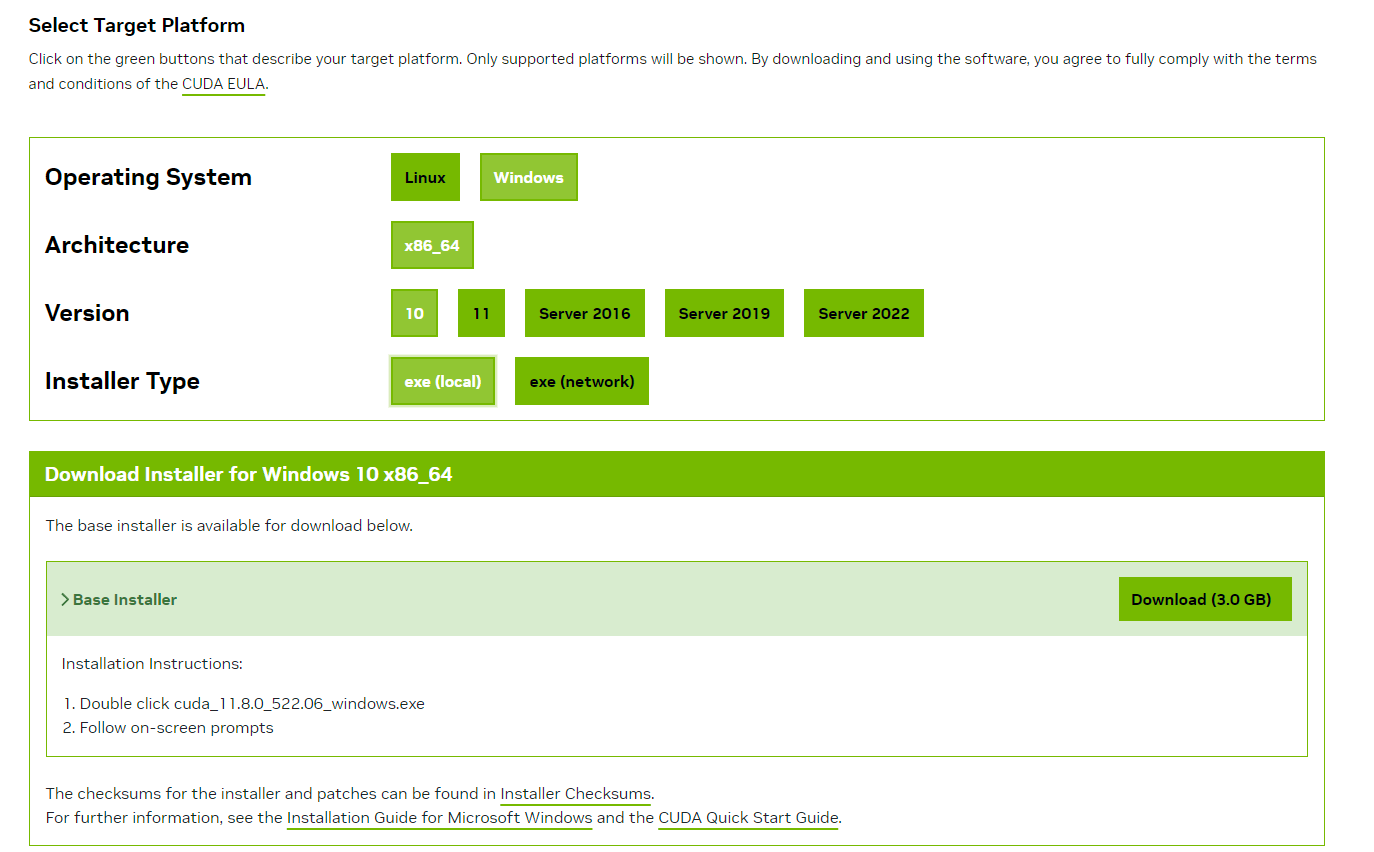

## Windows Users

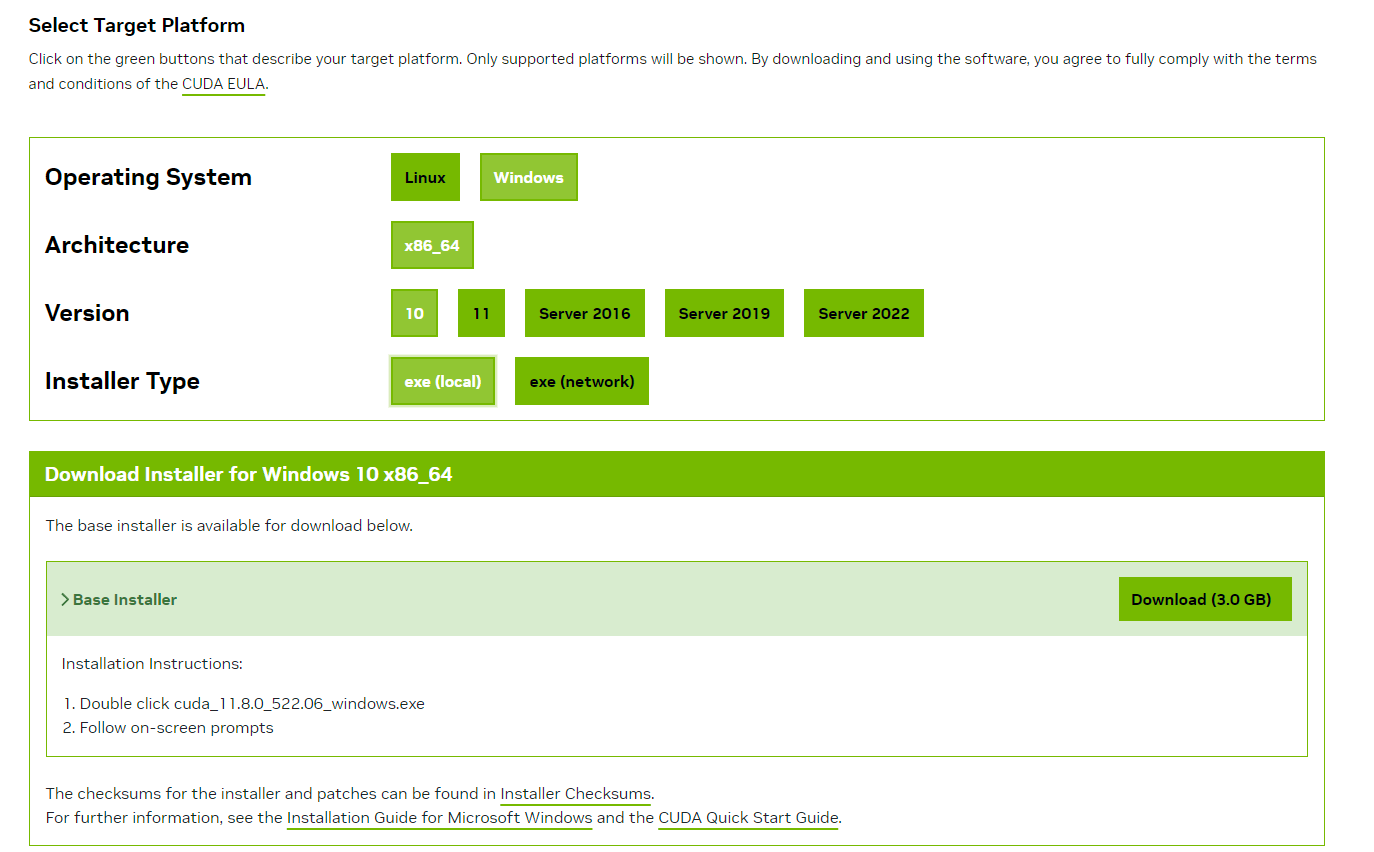

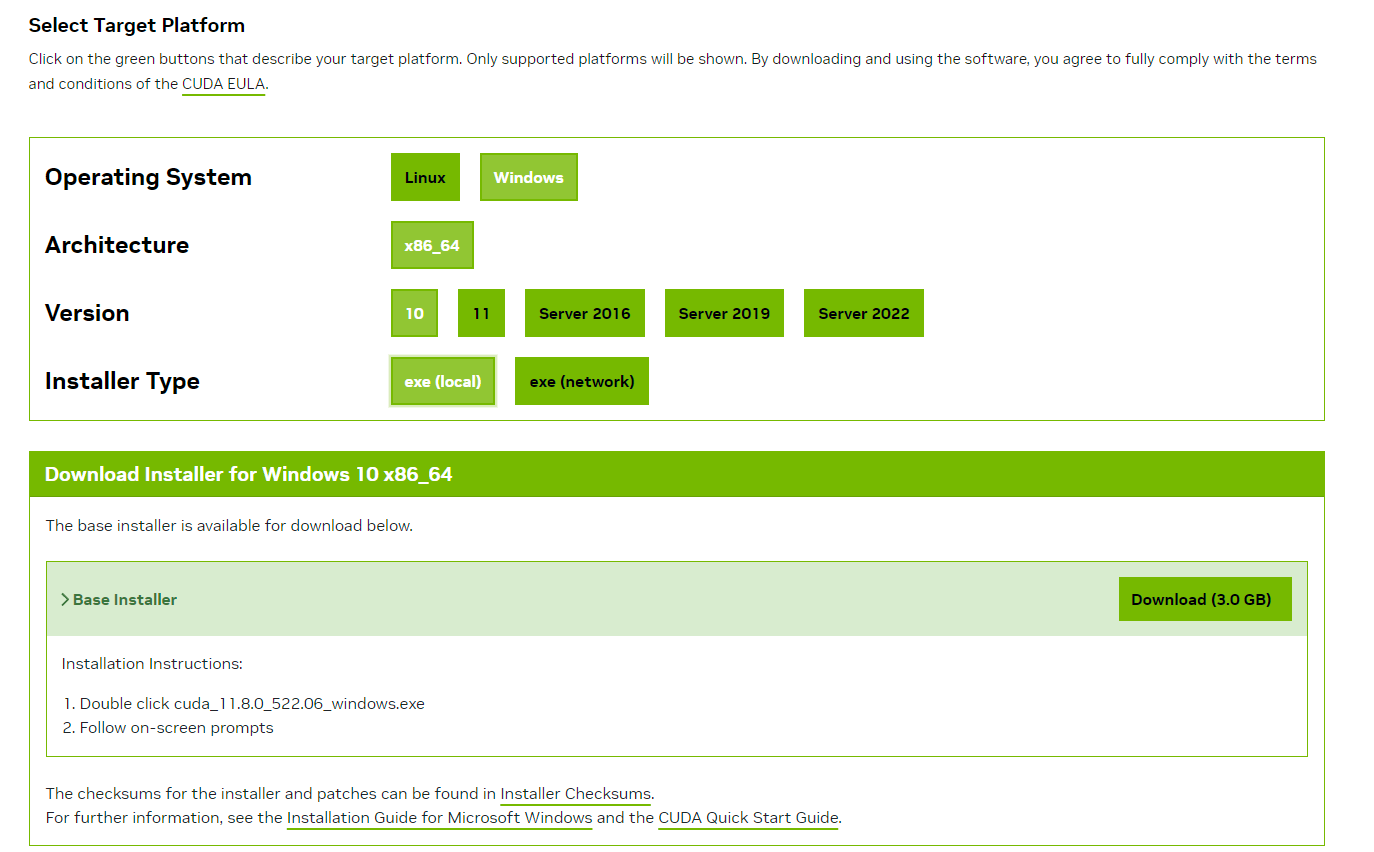

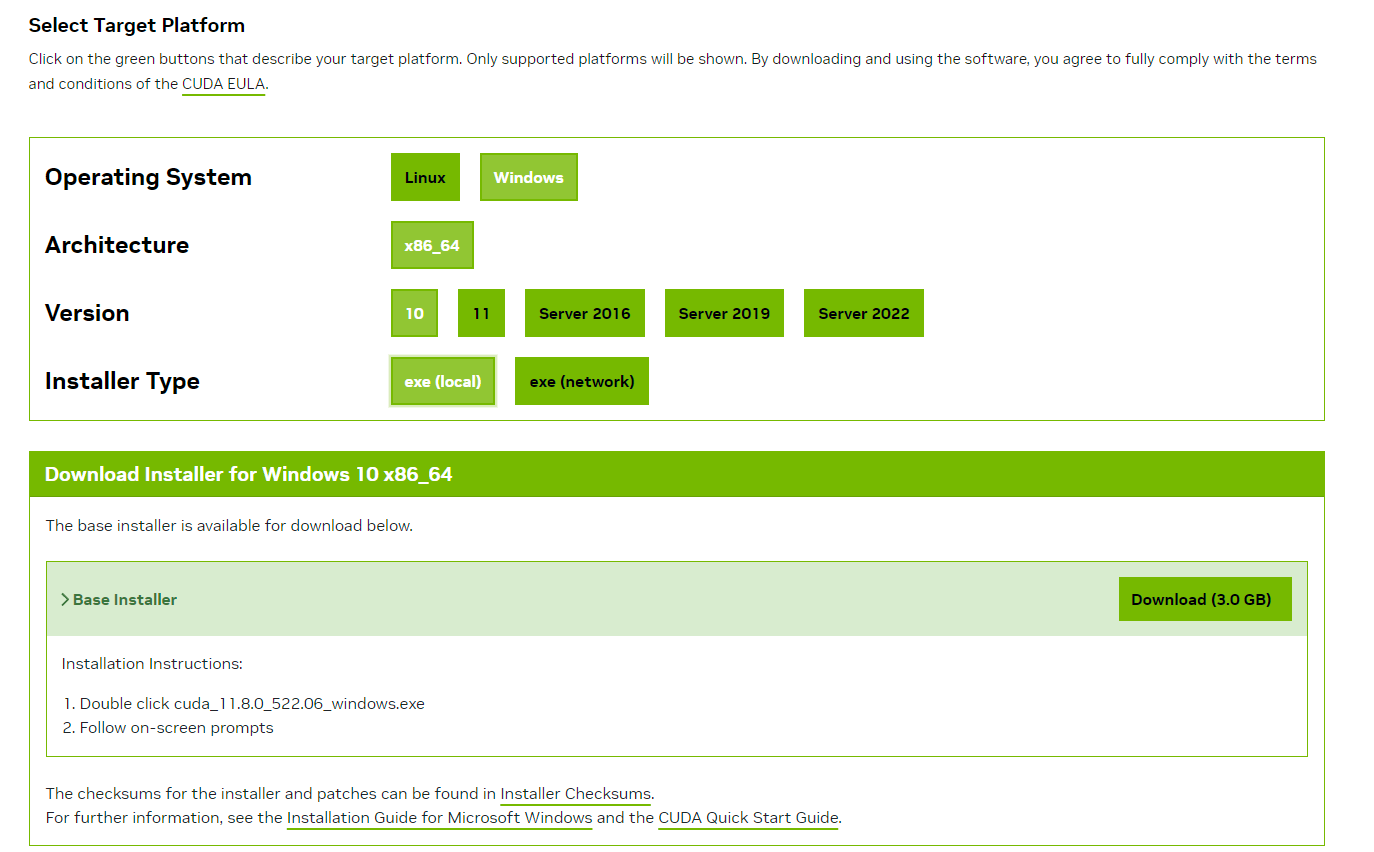

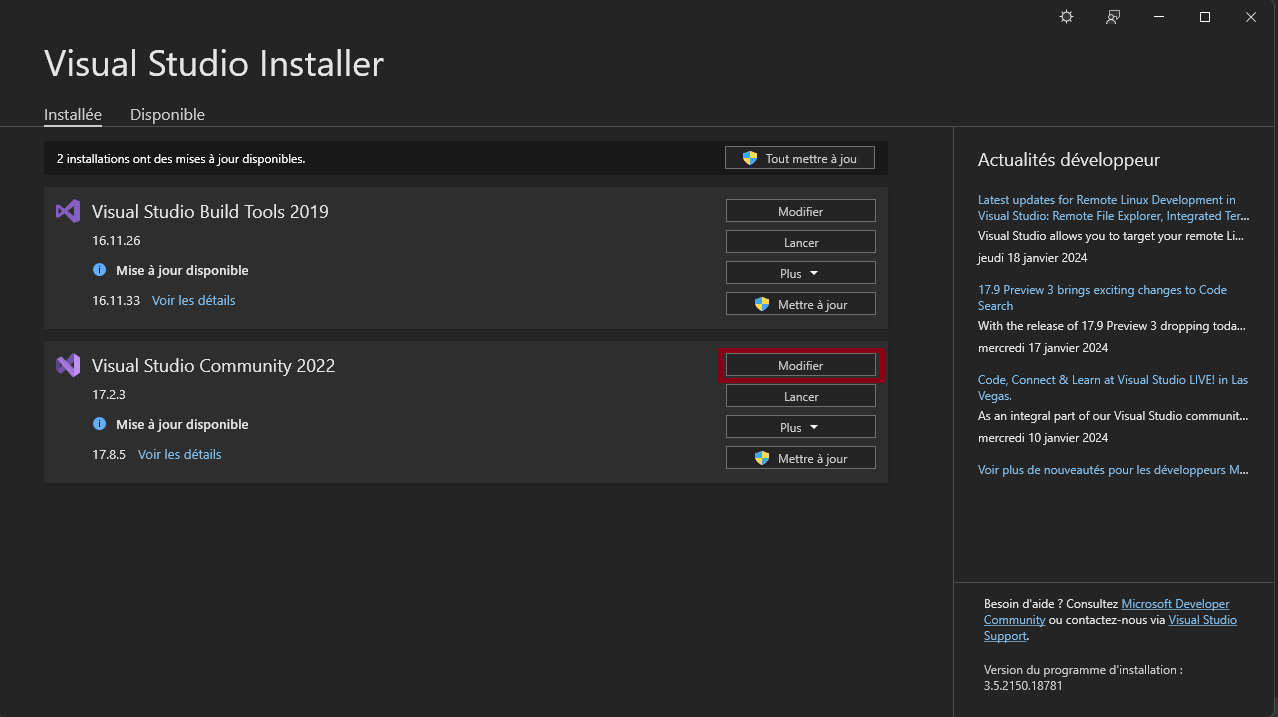

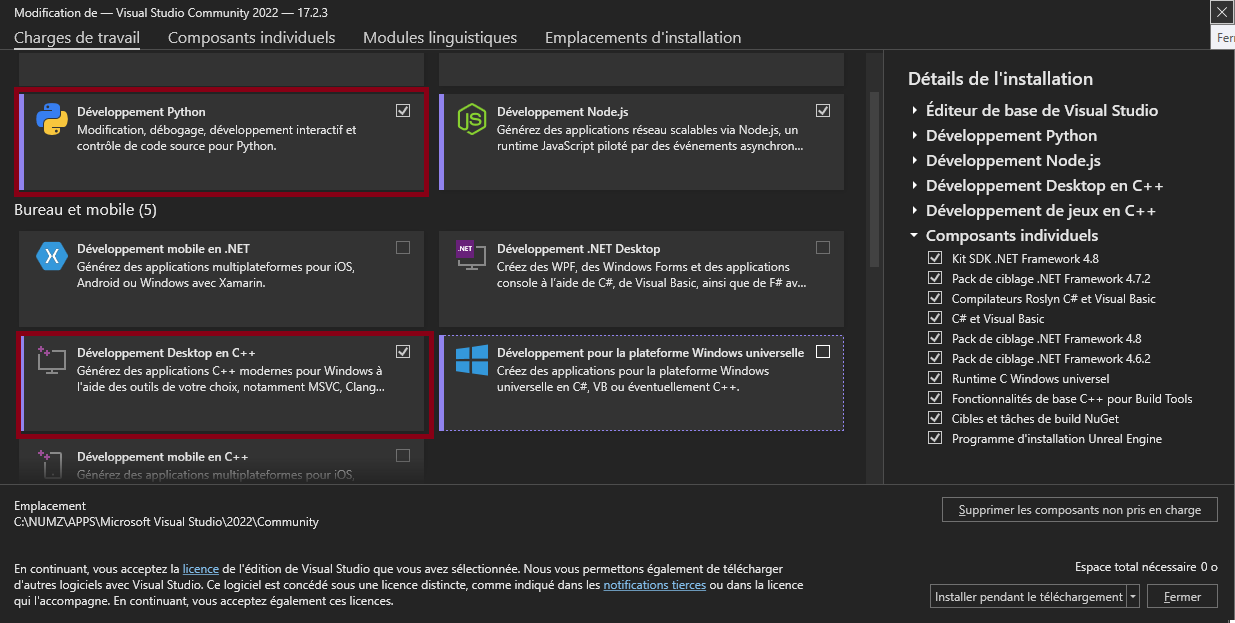

1. Install [CUDA 11.8](https://developer.nvidia.com/cuda-11-8-0-download-archive) if you have not already done so.

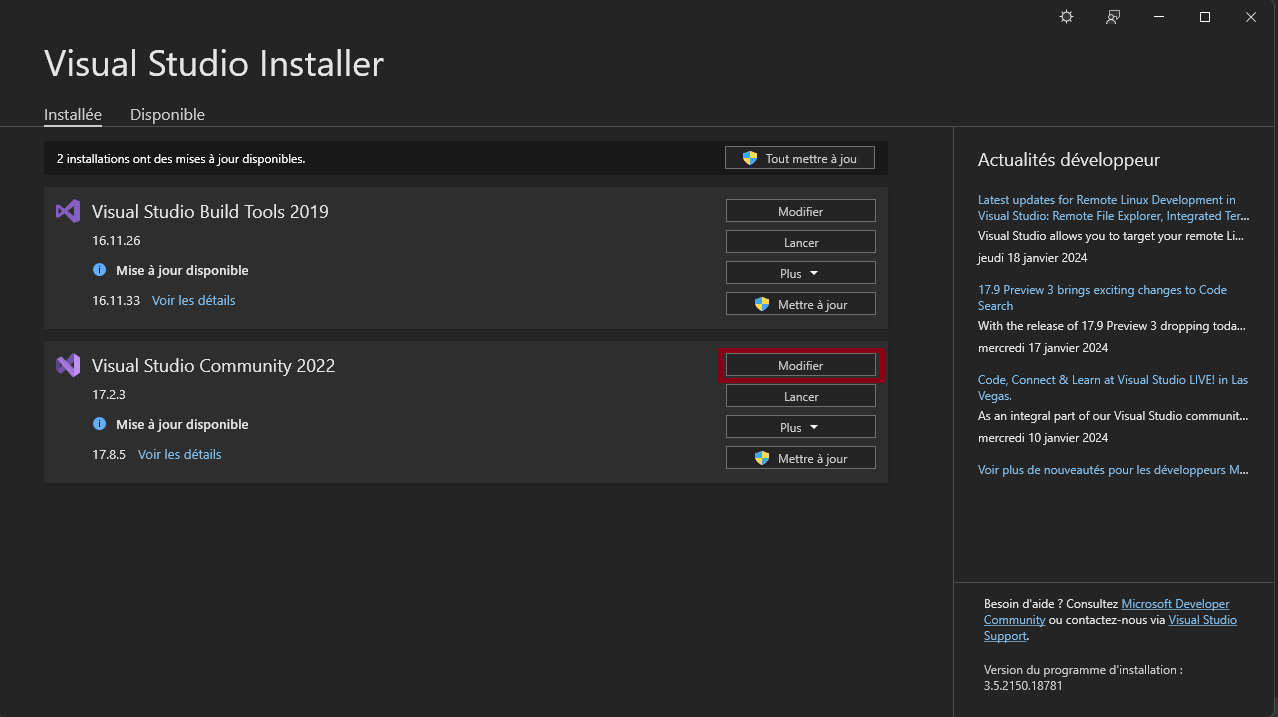

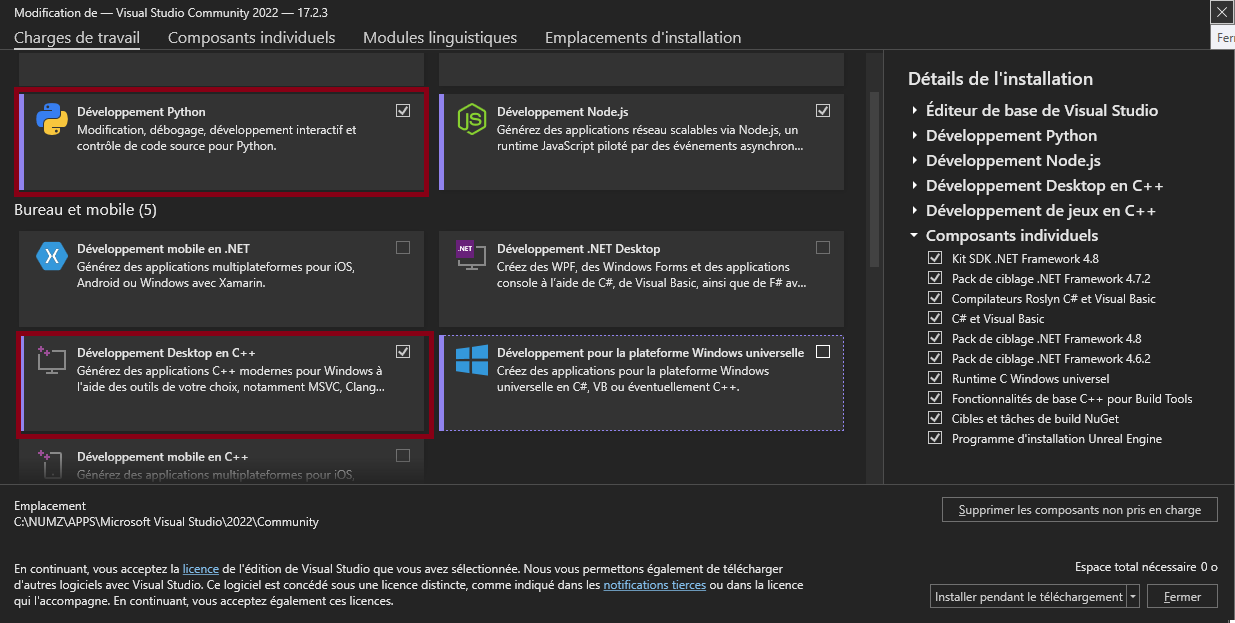

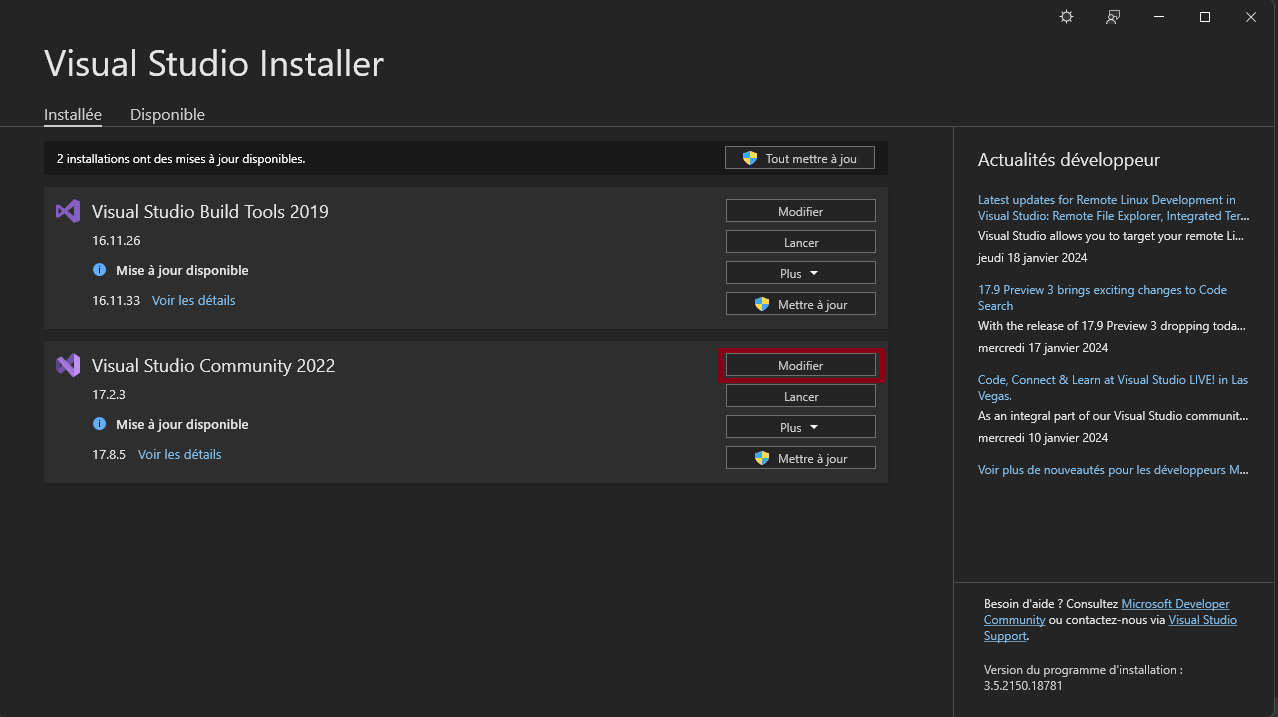

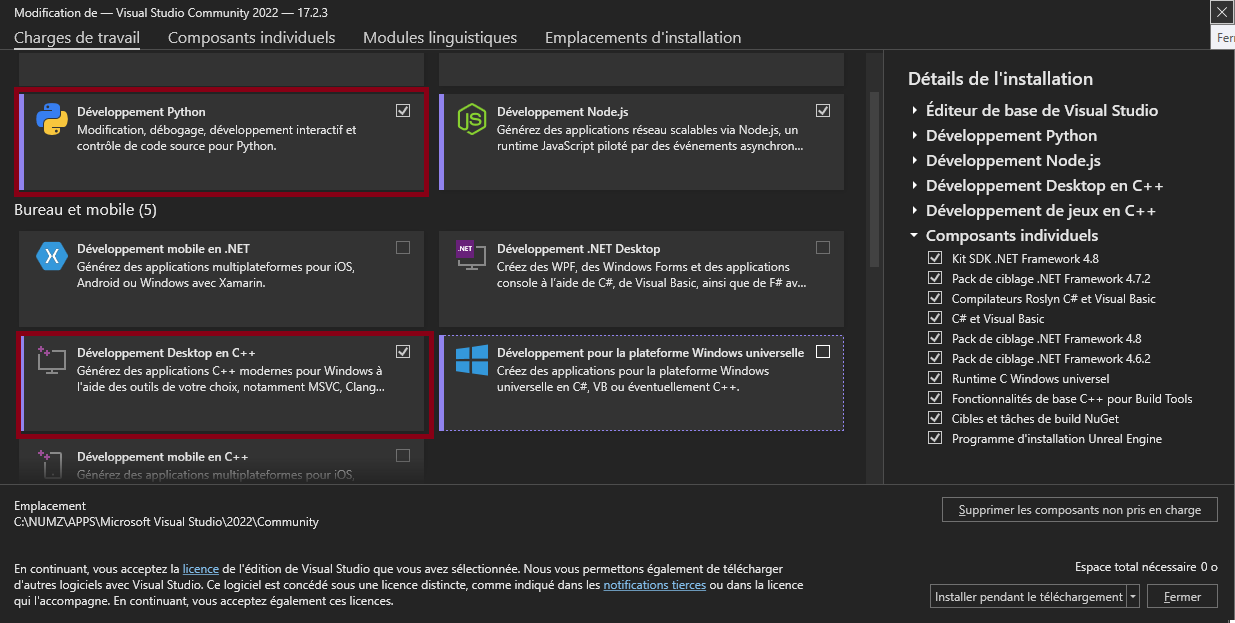

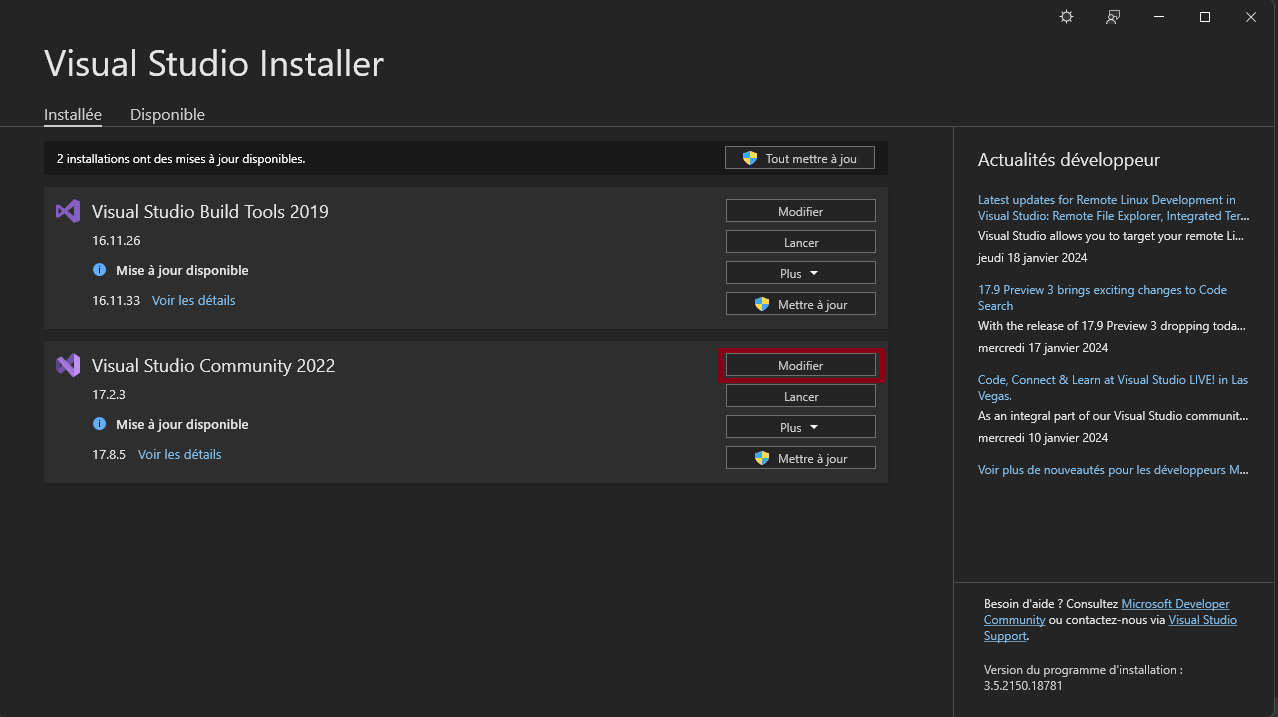

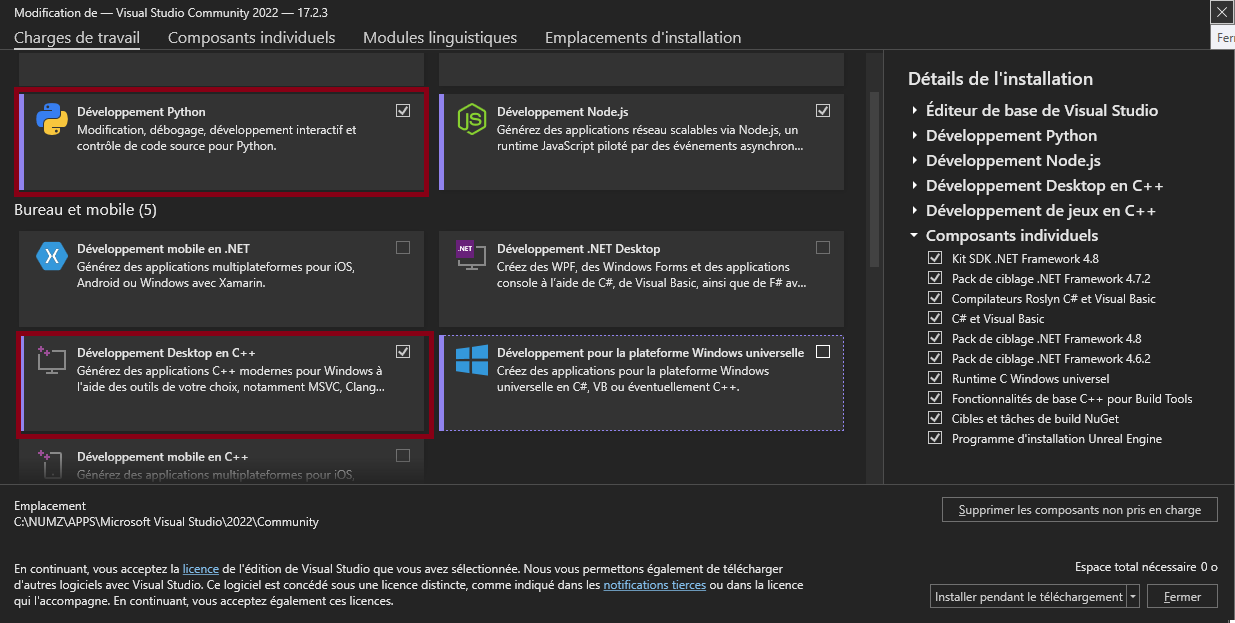

2. Install [Visual Studio](https://visualstudio.microsoft.com/fr/downloads/). During installation, make sure to select the Python and C++ packages in the Visual Studio Installer.

3. If you have multiple Python versions on your computer, edit launch.py and change the following line:

```bat
REM set PYTHON="your python.exe path"
```

to:

```bat
set PYTHON="your python.exe path"
```

4. Double-click wav2lip-studio.bat; it installs the requirements and downloads the models.

## macOS Users

1. Install Python 3.9 and the other dependencies:

```

brew update

brew install python@3.9

brew install ffmpeg

brew install git-lfs

git-lfs install

xcode-select --install

```

2. Unzip wav2lip-studio.zip into a folder:

```

unzip wav2lip-studio.zip

```

3. Create the virtual environment and install the requirements:

```

cd /YourWav2lipStudioFolder

/opt/homebrew/bin/python3.9 -m venv venv

./venv/bin/python3.9 -m pip install inaSpeechSegmenter

./venv/bin/python3.9 -m pip install tyro==0.8.5 pykalman==0.9.7

./venv/bin/python3.9 -m pip install transformers==4.33.2

./venv/bin/python3.9 -m pip install spacy==3.7.4

./venv/bin/python3.9 -m pip install TTS==0.21.2

./venv/bin/python3.9 -m pip install gradio==4.14.0 imutils==0.5.4 moviepy websocket-client requests_toolbelt filetype numpy opencv-python==4.8.0.76 scipy==1.11.2 requests==2.28.1 pillow==9.3.0 librosa==0.10.0 opencv-contrib-python==4.8.0.76 huggingface_hub==0.20.2 tqdm==4.66.1 cutlet==0.3.0 numba==0.57.1 imageio_ffmpeg==0.4.9 insightface==0.7.3 unidic==1.1.0 onnx==1.14.1 onnxruntime==1.16.0 psutil==5.9.5 lpips==0.1.4 GitPython==3.1.36 facexlib==0.3.0 gfpgan==1.3.8 gdown==4.7.1 pyannote.audio==3.1.1 openai-whisper==20231117 resampy==0.4.0 scenedetect==0.6.2 uvicorn==0.23.2 starlette==0.35.1 fastapi==0.109.0

./venv/bin/python3.9 -m pip install torch==2.1.2 torchvision==0.16.2 torchaudio==2.1.2

./venv/bin/python3.9 -m pip install numpy==1.24.4

```

3.1. On Apple Silicon, one more step is needed:

```

./venv/bin/python3.9 -m pip uninstall torch torchvision torchaudio

./venv/bin/python3.9 -m pip install --pre torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/nightly/cpu

sed -i '' 's/from torchvision.transforms.functional_tensor import rgb_to_grayscale/from torchvision.transforms.functional import rgb_to_grayscale/' venv/lib/python3.9/site-packages/basicsr/data/degradations.py

```

4. Download the models:

```

git clone https://huggingface.co/numz/wav2lip_studio-0.2 models

git clone https://huggingface.co/KwaiVGI/LivePortrait models/pretrained_weights

```

5. Launch the UI:

```

mkdir projects

export PYTORCH_ENABLE_MPS_FALLBACK=1

./venv/bin/python3.9 wav2lip_studio.py

```
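The `sed -i ''` form in step 3.1 is BSD/macOS syntax (GNU sed takes `-i` without the empty string). It rewrites an import of `torchvision.transforms.functional_tensor`, a module that newer torchvision releases no longer provide, in basicsr's `degradations.py`. A minimal Python equivalent, assuming the same venv path as above (adjust it if yours differs); `patch_basicsr` is a hypothetical helper, not part of Wav2Lip Studio.

```python
"""Cross-platform equivalent of the `sed` patch in step 3.1 (a sketch, not shipped with Wav2Lip Studio)."""
from pathlib import Path

OLD_IMPORT = "from torchvision.transforms.functional_tensor import rgb_to_grayscale"
NEW_IMPORT = "from torchvision.transforms.functional import rgb_to_grayscale"
# Path used by the macOS instructions; adjust it to your own venv layout.
DEFAULT_TARGET = Path("venv/lib/python3.9/site-packages/basicsr/data/degradations.py")


def patch_basicsr(target=DEFAULT_TARGET):
    """Replace the removed torchvision import in basicsr; return True if the file was changed."""
    target = Path(target)
    source = target.read_text(encoding="utf-8")
    if OLD_IMPORT not in source:
        return False  # already patched, or a basicsr version without this import
    target.write_text(source.replace(OLD_IMPORT, NEW_IMPORT), encoding="utf-8")
    return True


if __name__ == "__main__":
    if DEFAULT_TARGET.is_file():
        print("patched" if patch_basicsr() else "nothing to patch")
    else:
        print(f"{DEFAULT_TARGET} not found")
```

Checking for the old import first makes the patch safe to run more than once.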

# Tutorial

- [FR version](https://youtu.be/43Q8YASkcUA)

- [EN Version](https://youtu.be/B84A5alpPDc)

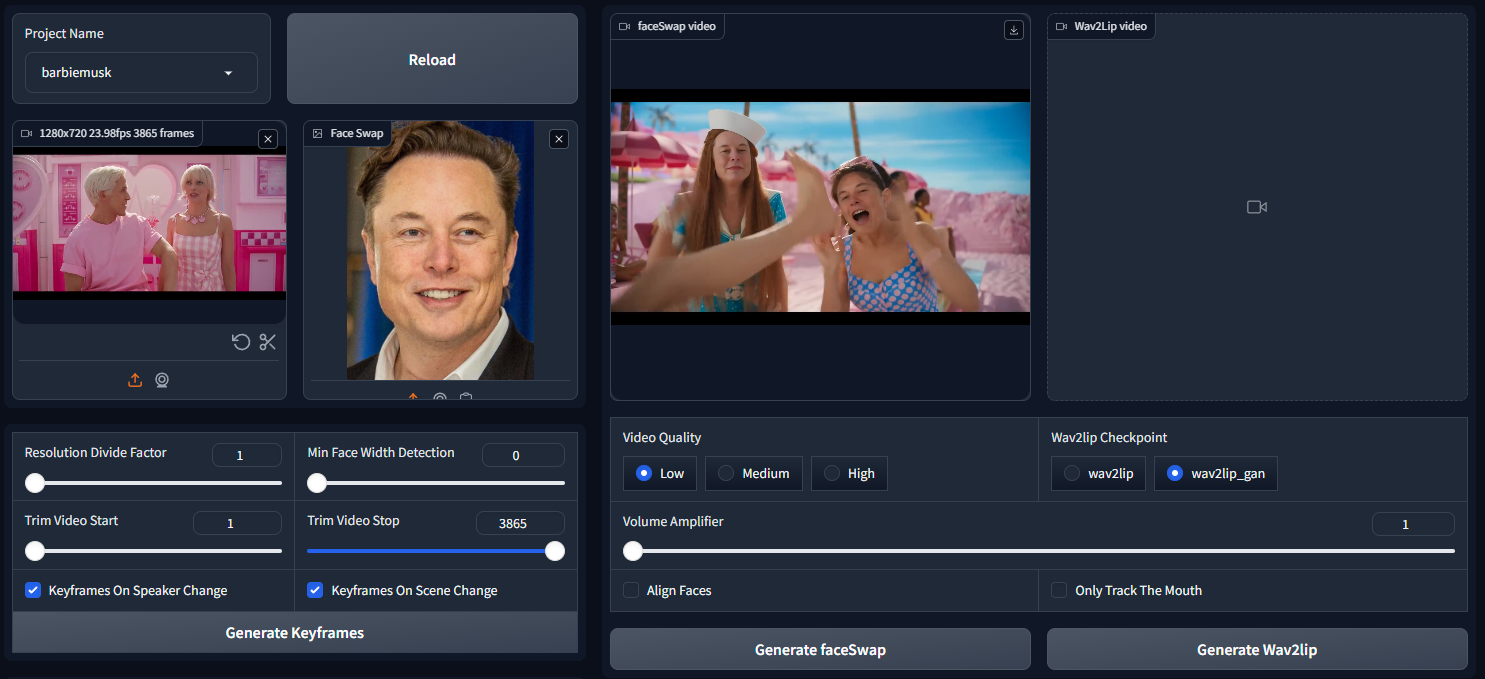

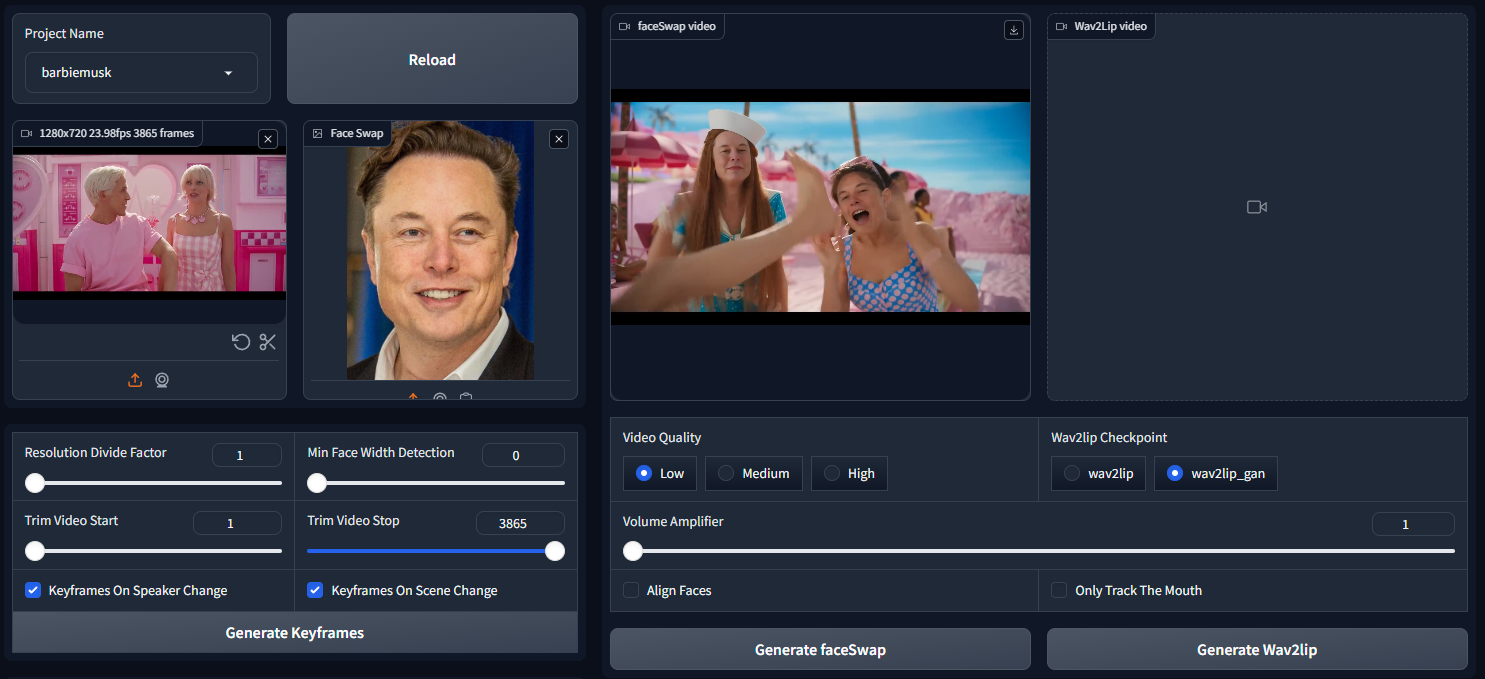

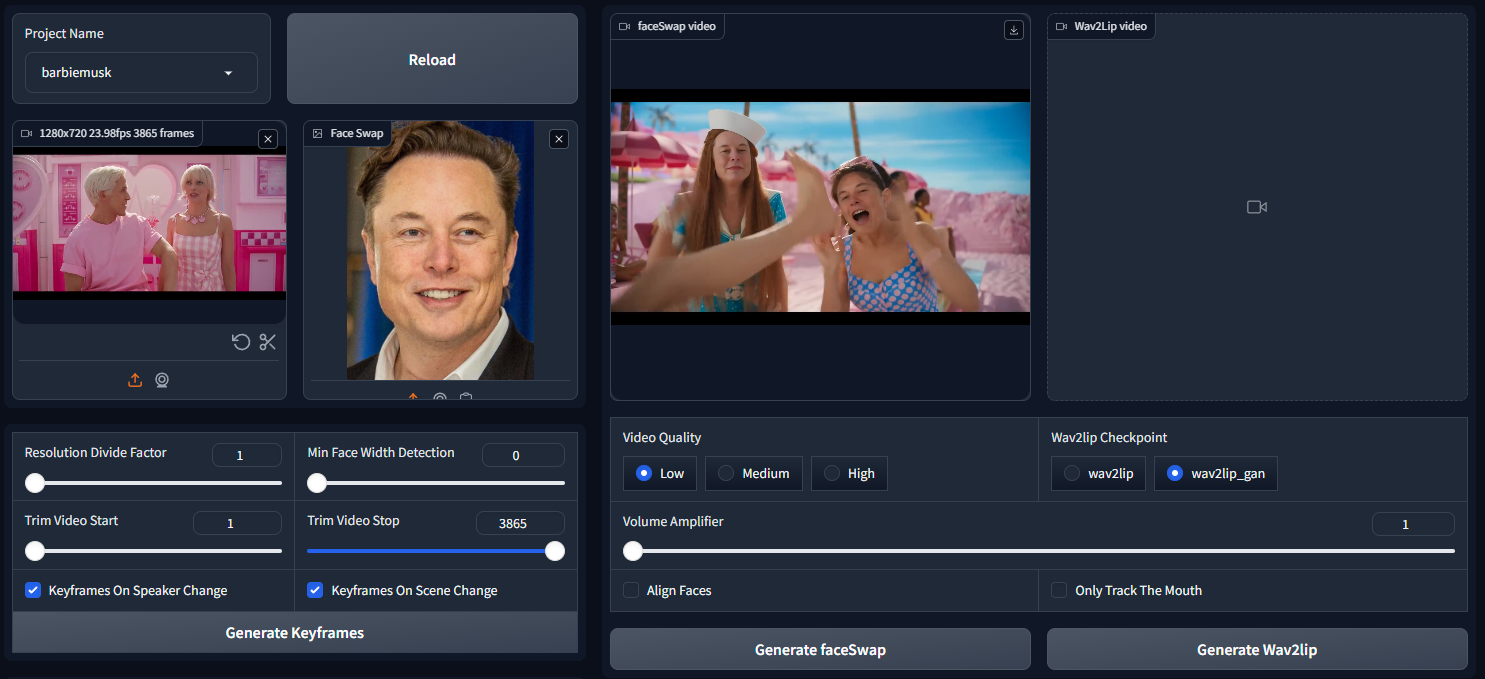

# 🐍 Usage

## PARAMETERS

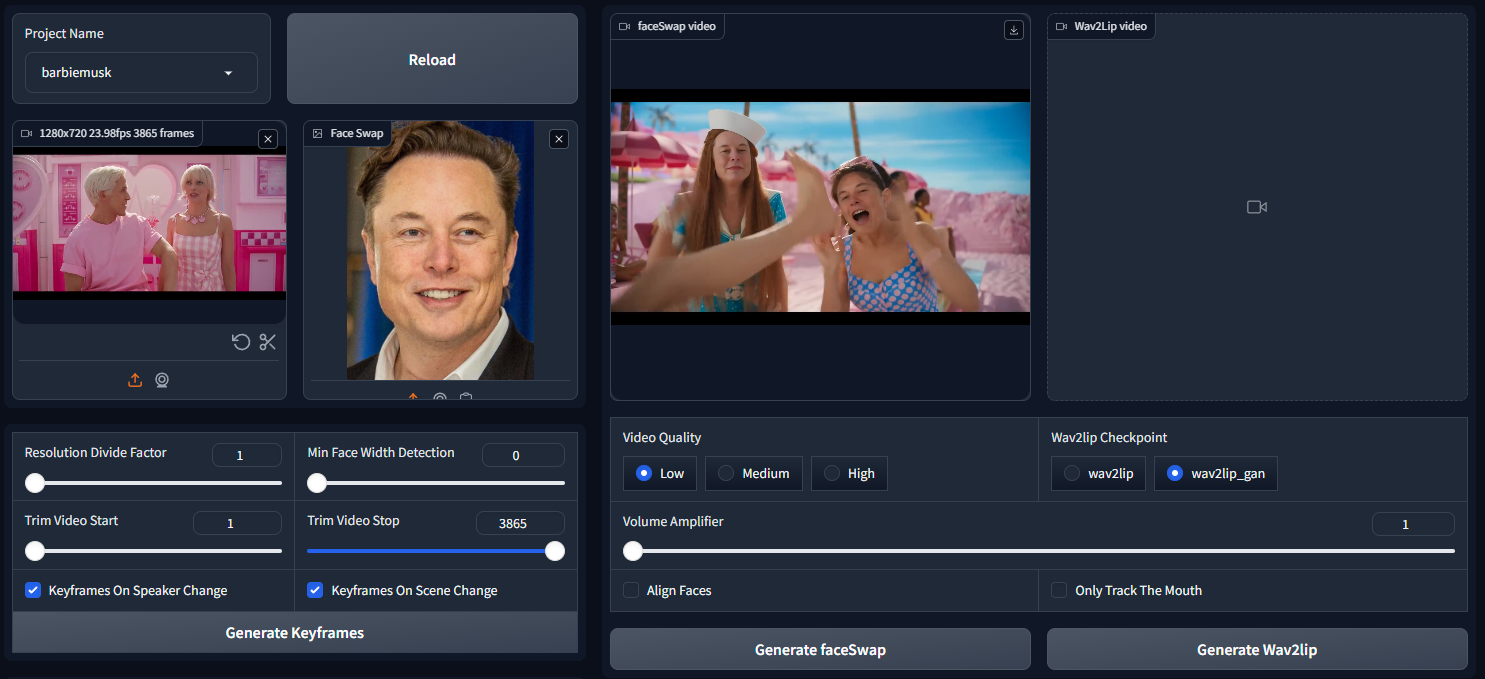

1. Enter a project name and press Enter.

2. Choose a video (AVI or MP4 format). Note that AVI files will not appear in the Video input, but processing still works.

3. Face Swap (this takes time, so be patient):

- **Face Swap**: choose an image of the face(s) to swap with the faces in the video (multiple faces are now supported); the leftmost face is id 0.

4. **Resolution Divide Factor**: The resolution of the video will be divided by this factor. The higher the factor, the faster the process, but the lower the resolution of the output video.

5. **Min Face Width Detection**: the minimum width of a face to detect. Use it to ignore small faces in the video.

6. **Align Faces**: allows for straightening the head before sending it for Wav2Lip processing.

7. **Keyframes On Speaker Change**: Allows you to generate a keyframe when the speaker changes. This allows you to better control the video generation.

8. **Keyframes On scene Change**: Allows you to generate a keyframe when the scene changes. This allows you to better control the video generation.

9. Once the parameters above are set, click **Generate Keyframes**. See the [Keyframes Manager](#keyframes-manager) section for more details.

10. Audio (3 options):

1. Put an audio file in the "Speech" input, or record one with the "Record" button.

2. Generate Audio with the text to speech [coqui TTS](https://github.com/coqui-ai/TTS) integration.

1. Choose the language

2. Choose the Voice

3. Write your speech in the "Prompt" text area, in text or JSON format:

1. Text format:

```text

Hello, my name is John. I am 25 years old.

```

2. JSON format (you can ask ChatGPT to generate a dialogue for you):

```json

[

{

"start": 0.0,

"end": 3.0,

"text": "Hello, my name is John. I am 25 years old.",

"speaker": "arnold"

},

{

"start": 3.0,

"end": 4.0,

"text": "Oh really?",

"speaker": "female_01"

},

...

]

```

3. Input Video: use the audio from the input video, with voice cloning and translation. See the [Input Video](#input-video) section for more details.

11. **Driving Video**: Choose an avatar to generate a driving video.

- **Avatars**: choose one of 10 avatars for the driving video; each gives a different result in the lip-sync output video.

- **Close Mouth**: Close the mouth of the avatar before generating the driving video.

- **Generate Driving Video**: Generate the driving video.

12. **Video Quality**:

- **Low**: Original Wav2Lip quality, fast but not very good.

- **Medium**: Better quality by applying post-processing to the mouth; slower.

- **High**: Better quality by applying post-processing and upscaling the mouth; slower.

13. **Wav2lip Checkpoint**: Choose between 2 Wav2Lip models:

- **Wav2lip**: Original Wav2Lip model, fast but not very good.

- **Wav2lip GAN**: Wav2Lip trained with an additional GAN discriminator; better visual quality, slightly less accurate lip sync.

14. **Face Restoration Model**: Choose between 2 face restoration models:

- **Code Former**:

- A value of 0 offers higher quality but may significantly alter the person's facial appearance and cause noticeable flickering between frames.

- A value of 1 provides lower quality but maintains the person's face more consistently and reduces frame flickering.

- Values below 0.5 are not advised. Start with 0.75 and adjust this setting to get the best results.

- **GFPGAN**: Usually better quality.

15. **Volume Amplifier**: does not amplify the output audio; it amplifies the audio sent to Wav2Lip, which gives you better control over lip movement.
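To make the Volume Amplifier behaviour concrete: the louder signal is only what Wav2Lip analyses, while the video keeps the original audio. A minimal sketch, assuming float samples in [-1.0, 1.0] and a simple linear gain with clipping; the function name and the `gain` parameter are hypothetical, and the tool's real scaling may differ.

```python
"""Illustrative gain stage, assuming float samples in [-1.0, 1.0]; not Wav2Lip Studio's actual code."""
import numpy as np


def amplify_for_wav2lip(samples: np.ndarray, gain: float) -> np.ndarray:
    """Return a louder copy of `samples` for the lip-sync model only.

    The input array is left untouched, so the audio muxed into the final
    video keeps its original volume. Values are clipped to stay in range.
    """
    if gain < 0:
        raise ValueError("gain must be non-negative")
    return np.clip(samples.astype(np.float32) * gain, -1.0, 1.0)


speech = np.array([0.1, -0.2, 0.6], dtype=np.float32)
louder = amplify_for_wav2lip(speech, 2.0)  # fed to Wav2Lip; `speech` is kept for the output video
```

Clipping keeps loud peaks from overflowing, at the cost of flattening them; a gentler limiter would be another reasonable choice.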

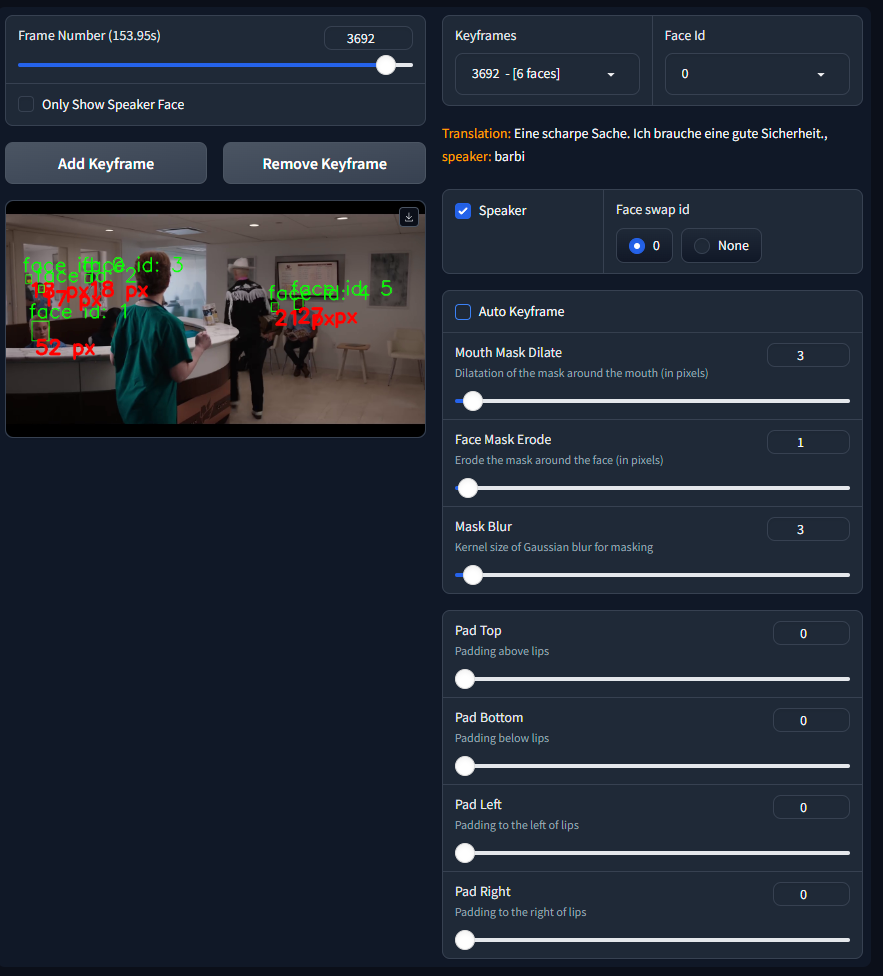

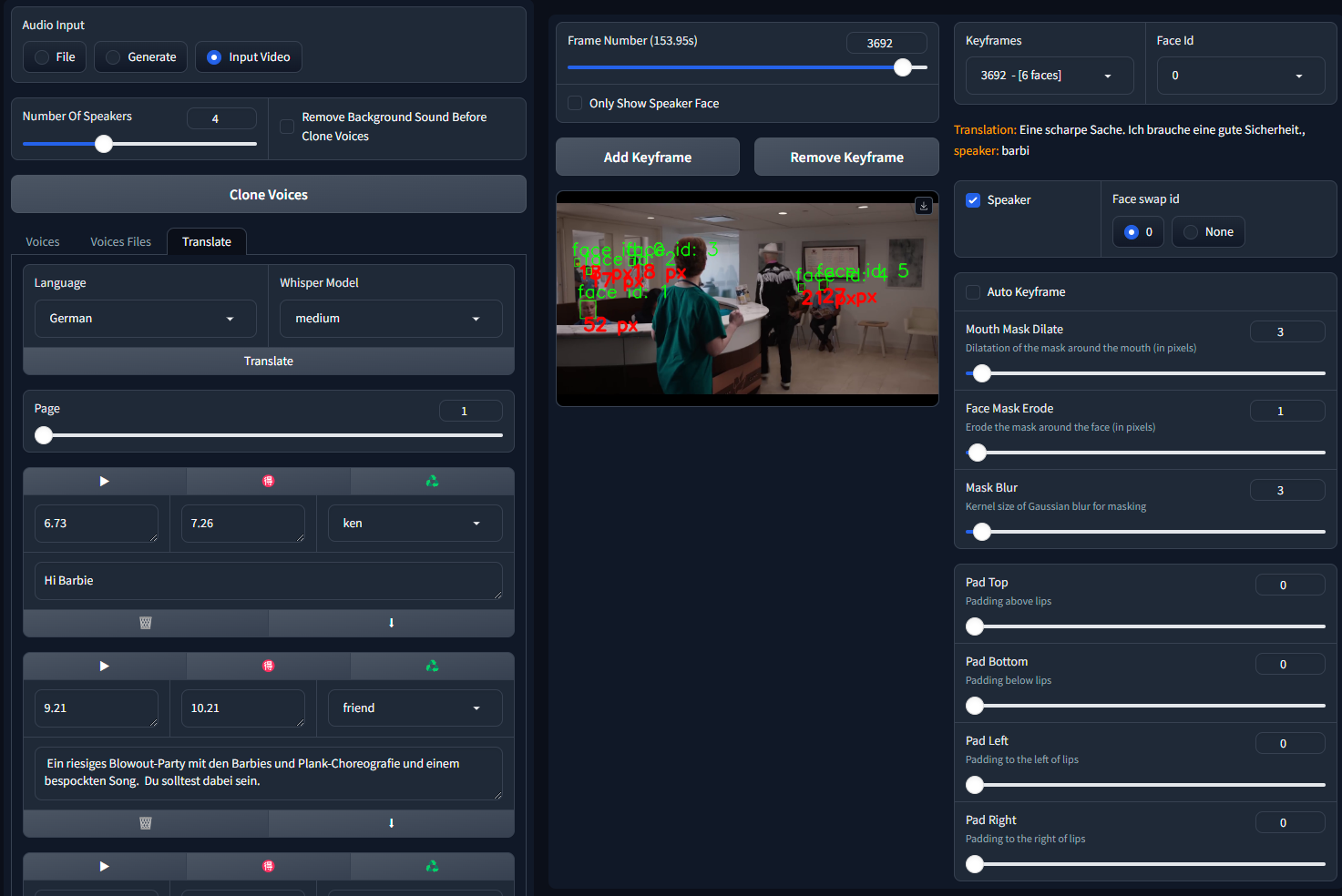

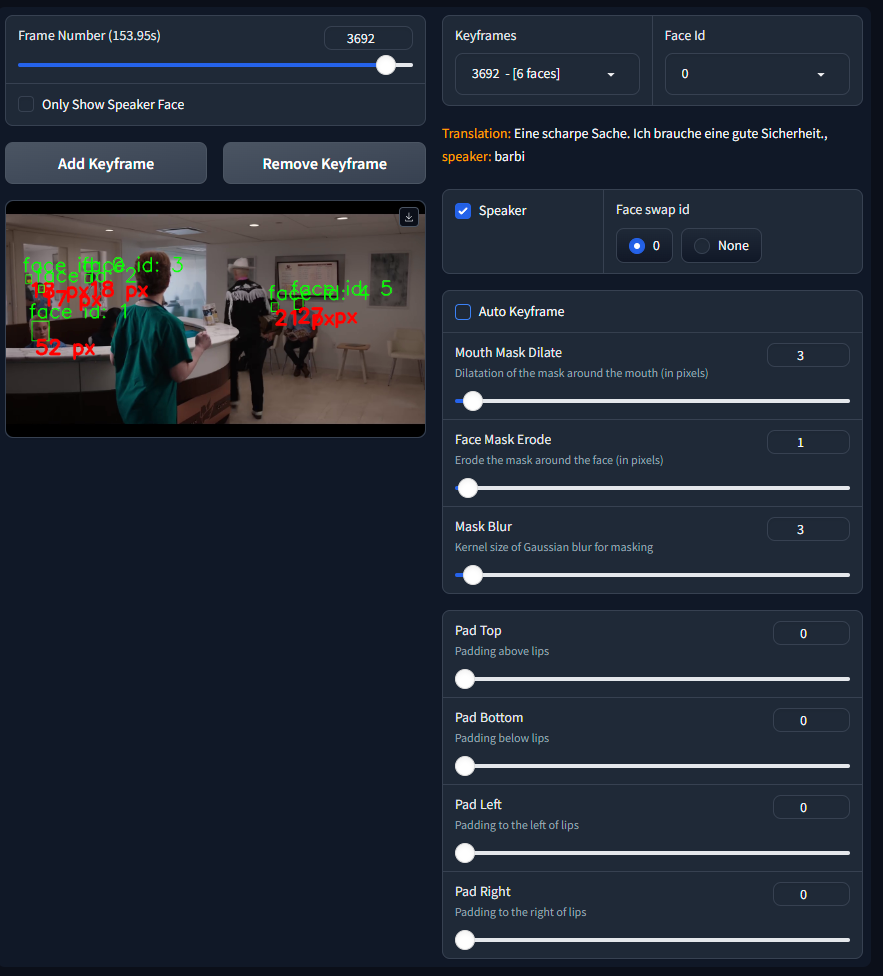

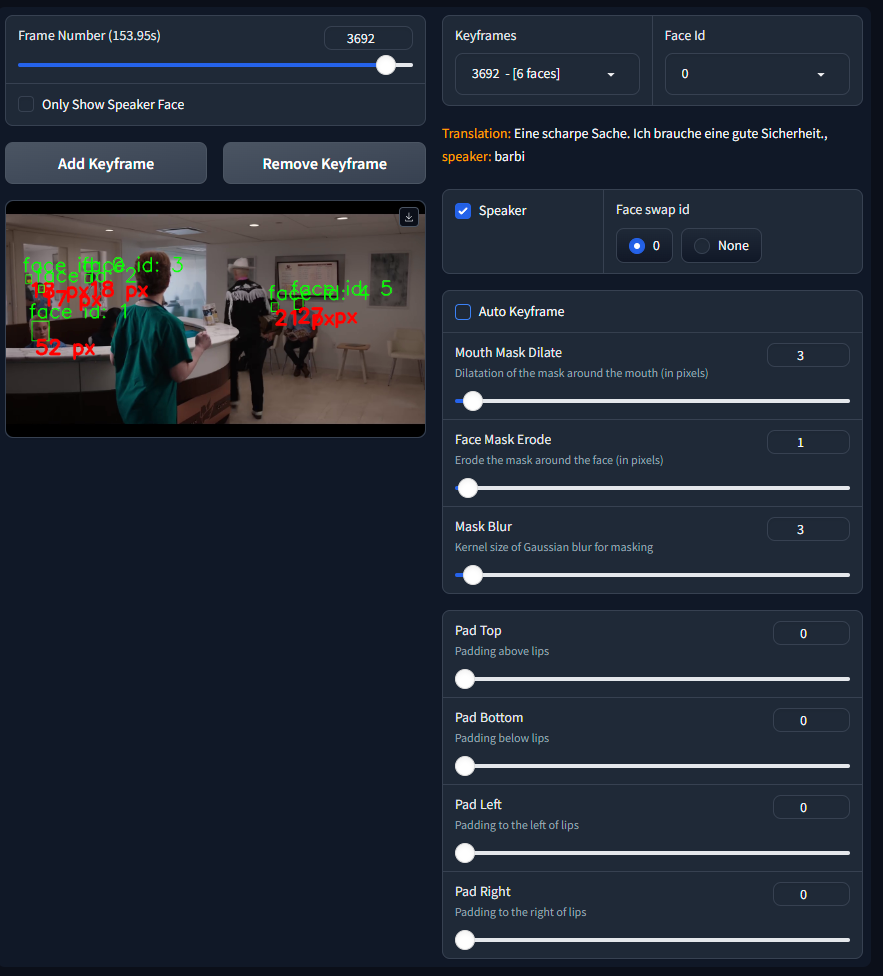

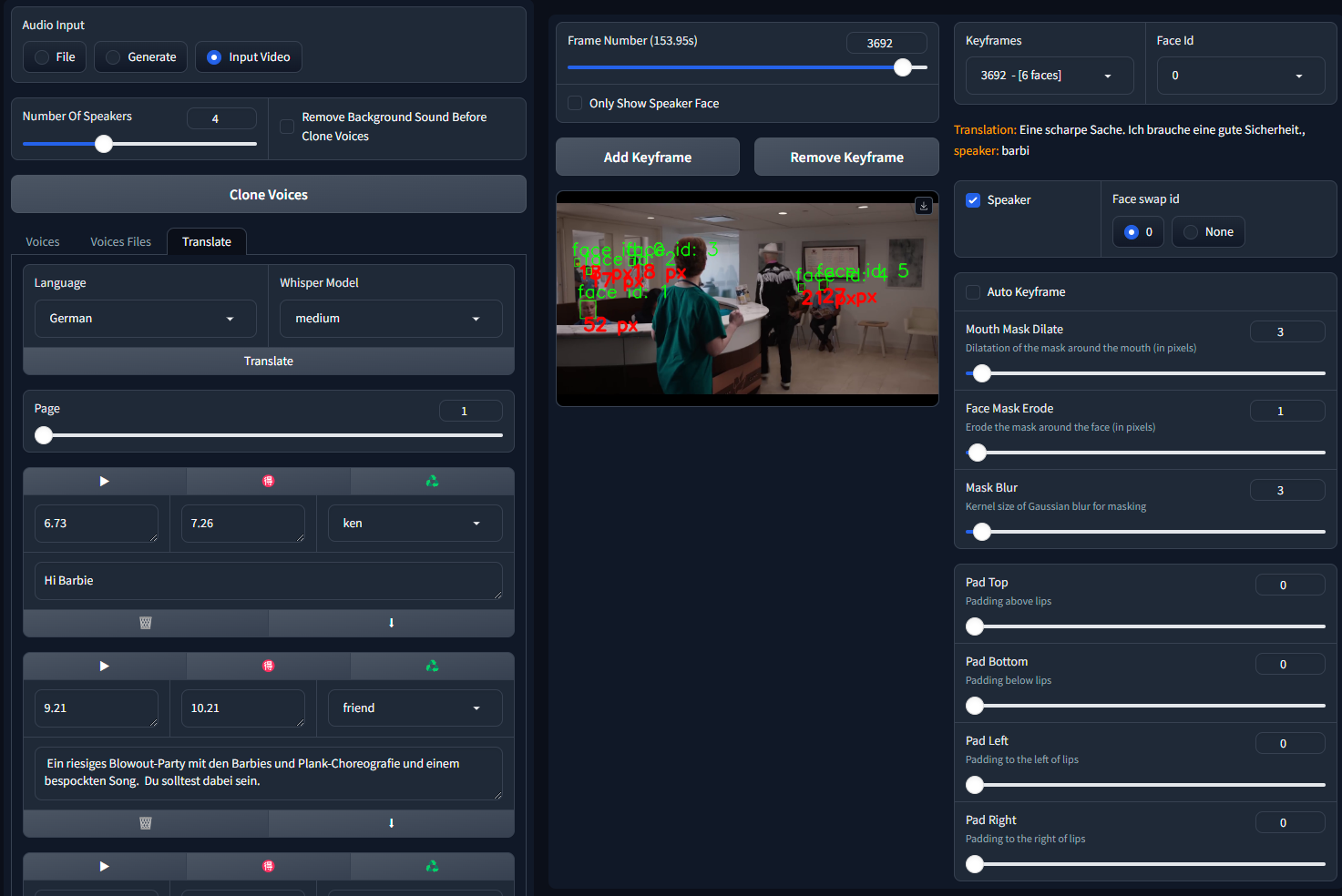

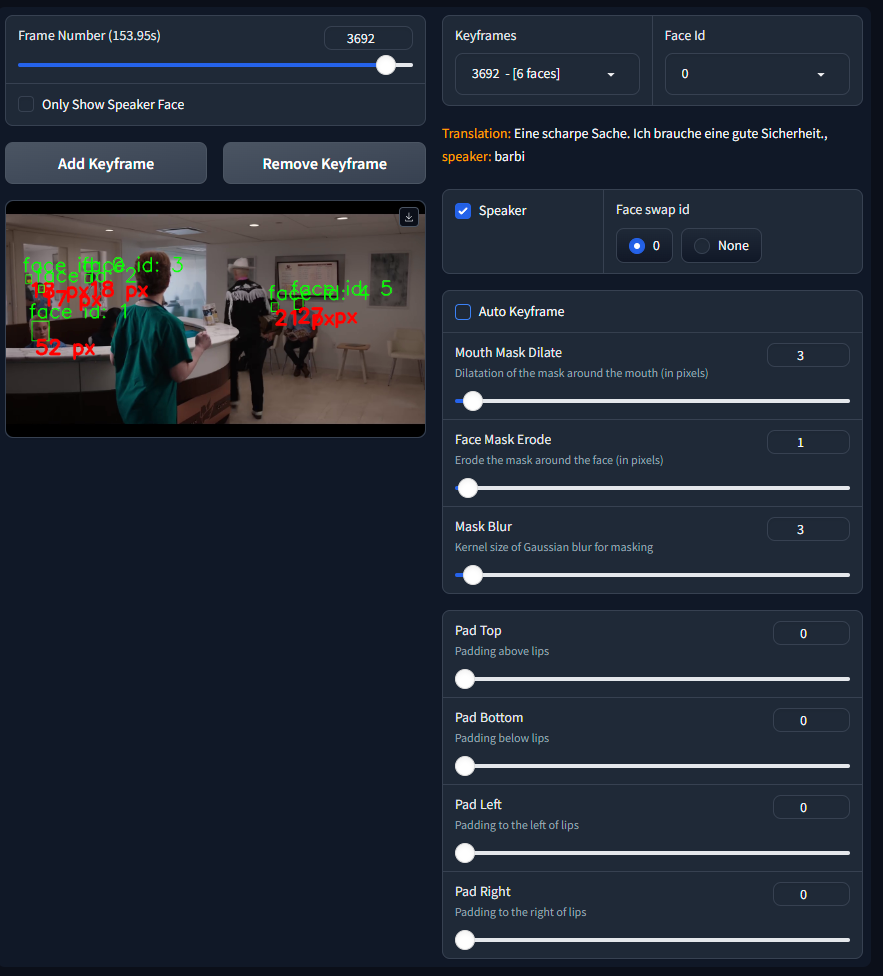
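Before pasting a JSON prompt (step 10 above), you can sanity-check it locally. This is a hypothetical helper, not part of Wav2Lip Studio: the four keys come from the example above, while the rules that segments must be in order and must not overlap are assumptions that the tool itself may not enforce.

```python
"""Hypothetical checker for the JSON prompt format shown in the Usage section."""
import json

REQUIRED_KEYS = {"start", "end", "text", "speaker"}


def validate_conversation(prompt: str) -> list:
    """Parse a JSON prompt and return its segments, raising ValueError on obvious mistakes."""
    segments = json.loads(prompt)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(segments, list):
        raise ValueError("the prompt must be a JSON list of segments")
    previous_end = 0.0
    for i, seg in enumerate(segments):
        if not isinstance(seg, dict):
            raise ValueError(f"segment {i} is not a JSON object")
        missing = REQUIRED_KEYS - set(seg)
        if missing:
            raise ValueError(f"segment {i} is missing {sorted(missing)}")
        if seg["end"] <= seg["start"]:
            raise ValueError(f"segment {i} ends before it starts")
        if seg["start"] < previous_end:
            raise ValueError(f"segment {i} overlaps the previous one")
        previous_end = seg["end"]
    return segments


prompt = """[
  {"start": 0.0, "end": 3.0, "text": "Hello, my name is John. I am 25 years old.", "speaker": "arnold"},
  {"start": 3.0, "end": 4.0, "text": "Oh really?", "speaker": "female_01"}
]"""
print(len(validate_conversation(prompt)))  # 2
```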

## KEYFRAMES MANAGER

### Global parameters:

1. **Only show Speaker Face**: shows only the speaker's face; the other faces are hidden.

2. **Frame Number**: A slider that allows you to move between the frames of the video.

3. **Add Keyframe**: Allows you to add a keyframe at the current Frame Number.

4. **Remove Keyframe**: Allows you to remove a keyframe at the current Frame Number.

5. **Keyframes**: A list of all the keyframes.

### For each face in a keyframe:

1. **Face Id**: list of all the faces in the current keyframe.

2. **translation info**: if a translation is associated with the project, it is shown here along with the speaker, which helps you select the right speaker for this keyframe.

3. **Speaker**: Checkbox to set the speaker on the current Face Id of the current keyframe.

4. **Face Swap Id**: Checkbox to set the face swap id of the current keyframe on the current Face Id.

5. **Automatic Mask**: default True; if False, you can draw the mask manually.

6. **Mouth Mask Dilate**: dilates the mouth mask to cover more area around the mouth; the right value depends on the mouth size.

7. **Face Mask Erode**: erodes the face mask to remove some area around the face; the right value depends on the face size.

8. **Mask Blur**: blurs the mask to smooth it; try to keep it less than or equal to **Mouth Mask Dilate**.

9. **Padding sliders**: add padding around the head to avoid cutting it off in the video.
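The three mask controls above correspond to standard morphological operations. The NumPy sketch below assumes square kernels, a box blur, and radii in pixels; Wav2Lip Studio's own kernel shapes and blur type may differ, and all names are hypothetical. It also shows one way to read the Mask Blur advice: when the blur radius is no larger than the dilation radius, every pixel of the original mouth keeps a mask value of 1.0.

```python
"""Minimal NumPy sketch of the three mask controls (dilate, erode, blur)."""
import numpy as np


def _windows(mask: np.ndarray, radius: int) -> np.ndarray:
    """Stack every shift of `mask` inside a (2r+1) x (2r+1) window, using edge padding."""
    padded = np.pad(mask, radius, mode="edge")
    h, w = mask.shape
    size = 2 * radius + 1
    return np.stack([padded[dy:dy + h, dx:dx + w] for dy in range(size) for dx in range(size)])


def dilate(mask, radius):
    """Grow the white area of a mask (like Mouth Mask Dilate)."""
    return mask if radius == 0 else _windows(mask, radius).max(axis=0)


def erode(mask, radius):
    """Shrink the white area of a mask (like Face Mask Erode)."""
    return mask if radius == 0 else _windows(mask, radius).min(axis=0)


def box_blur(mask, radius):
    """Soften mask edges with a box filter (like Mask Blur); values stay in [0, 1]."""
    mask = mask.astype(np.float32)
    return mask if radius == 0 else _windows(mask, radius).mean(axis=0)


mouth = np.zeros((9, 9), dtype=np.float32)
mouth[4, 4] = 1.0                     # a one-pixel "mouth"
soft = box_blur(dilate(mouth, 1), 1)  # dilate by 1, then blur by 1: soft[4, 4] stays 1.0
```

Stacking shifted copies is simple but memory-hungry; for real frames, OpenCV's `cv2.dilate`, `cv2.erode`, and `cv2.blur` are the usual tools.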

When you configure a keyframe, its settings apply until the next keyframe, so intermediate frames are generated with the same configuration.

Note that this configuration is not shown in the UI for intermediate frames.
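The keyframe rule above (a keyframe's settings apply until the next keyframe) amounts to looking up the last keyframe at or before a given frame. A minimal sketch with a hypothetical data layout: a dict from frame number to settings, where the `mouth_mask_dilate` key is purely illustrative.

```python
"""Sketch of "a keyframe's settings apply until the next keyframe" (hypothetical data layout)."""
from bisect import bisect_right


def settings_for_frame(keyframes: dict, frame: int):
    """Return the settings of the last keyframe at or before `frame`.

    `keyframes` maps frame numbers to settings dicts. Frames before the first
    keyframe get None here; the real tool may fall back to defaults instead.
    """
    frames = sorted(keyframes)
    index = bisect_right(frames, frame) - 1
    return None if index < 0 else keyframes[frames[index]]


keyframes = {0: {"mouth_mask_dilate": 15}, 120: {"mouth_mask_dilate": 25}}
print(settings_for_frame(keyframes, 119))  # {'mouth_mask_dilate': 15}
print(settings_for_frame(keyframes, 120))  # {'mouth_mask_dilate': 25}
```

Using `bisect_right` makes a keyframe take effect on its own frame, which matches "until the next keyframe".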

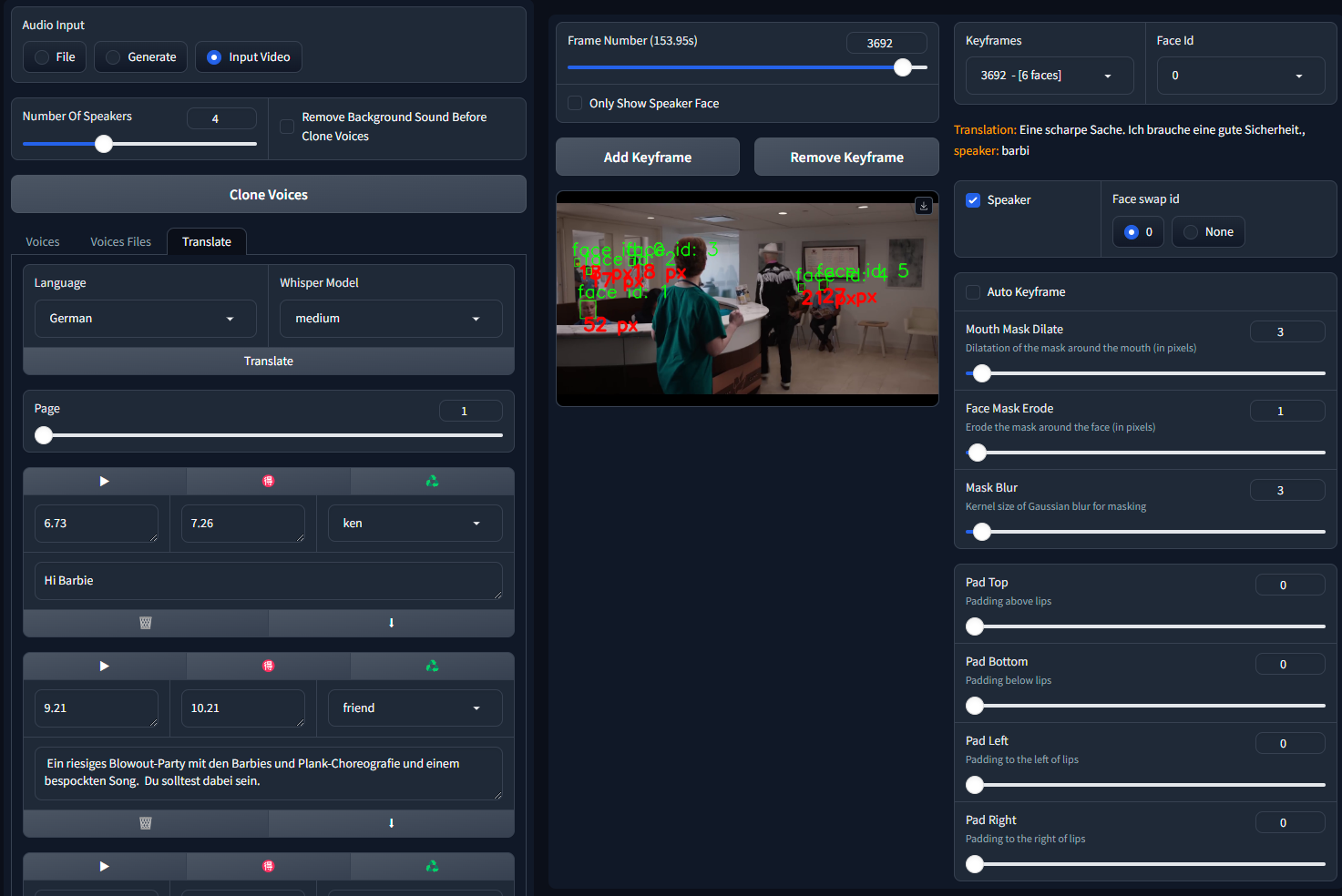

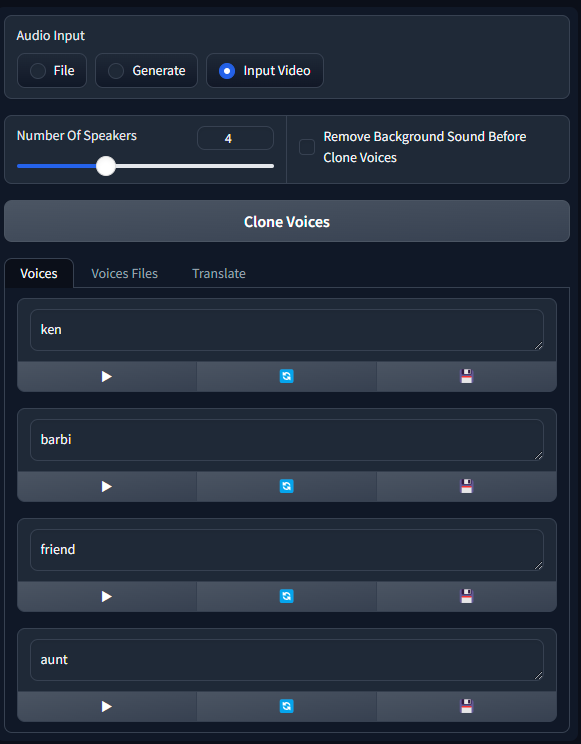

## Input Video

If there is no translated audio, the audio from the input video is used. This can be useful if the input video has bad lip sync.

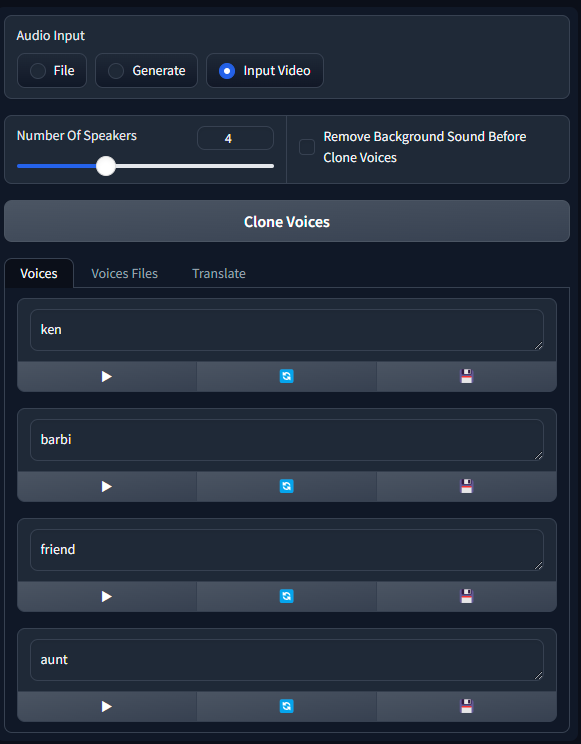

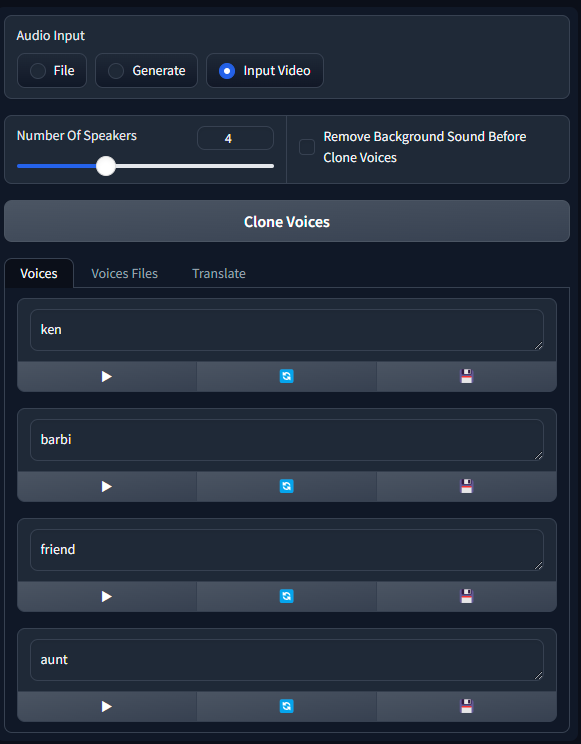

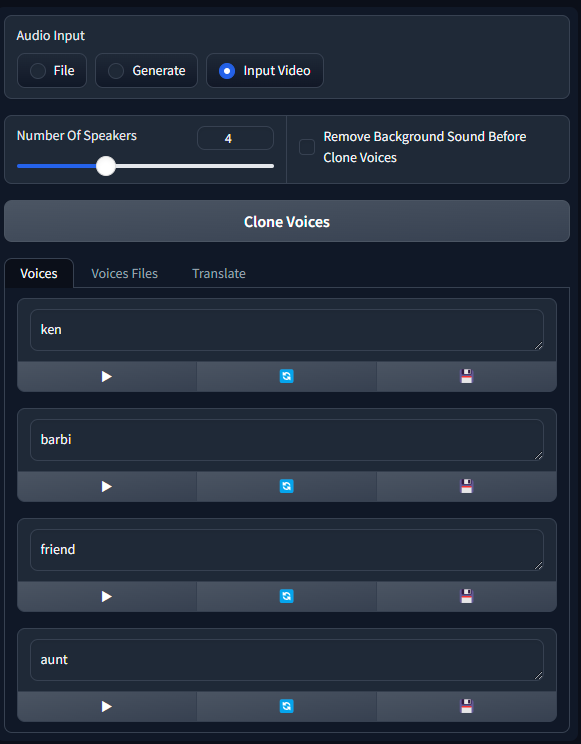

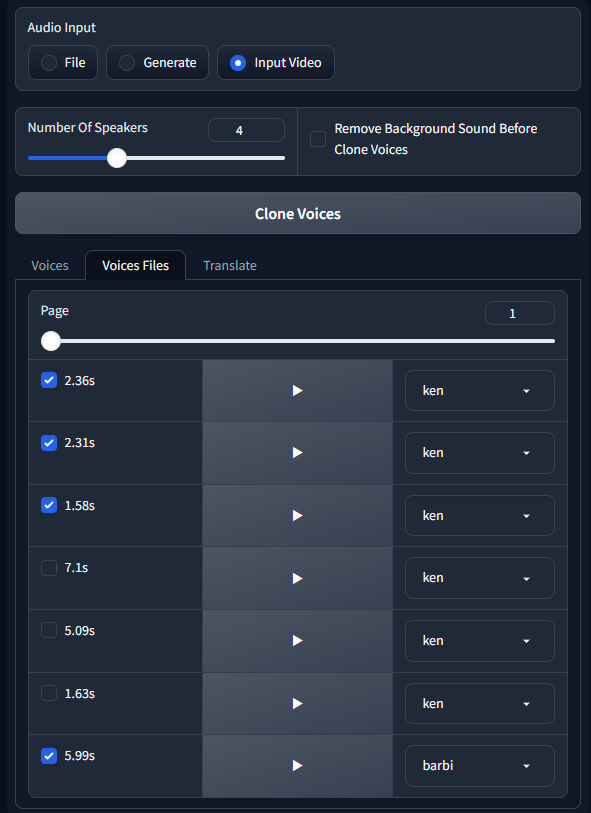

### Clone Voices:

1. **Number Of Speakers**: the number of speakers in the video, so the cloner knows how many voices to clone.

2. **Remove Background Sound Before Clone**: removes background noise and music before cloning.

3. **Clone Voices**: Clone voices from the input video.

4. **Voices**: list of the cloned voices. You can rename voices to identify them in the translation.

For each voice, you can:

- **Play**: listen to the voice.

- **regen sentence**: Regenerate the sentence sample.

- **save voice**: Save the voice to your voices library.

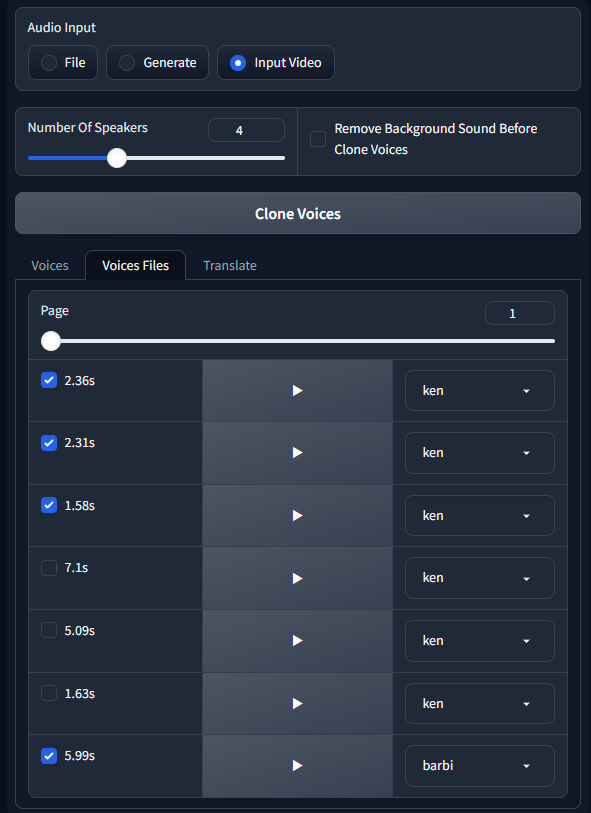

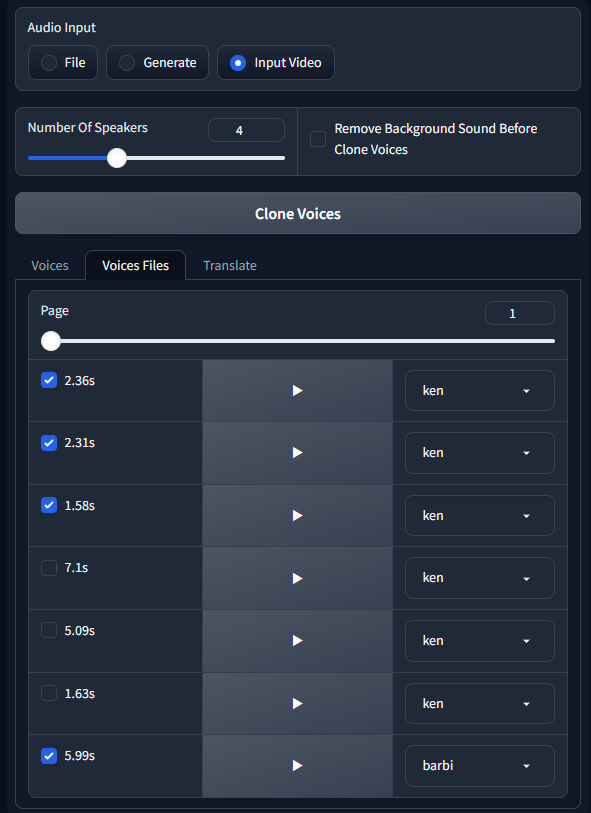

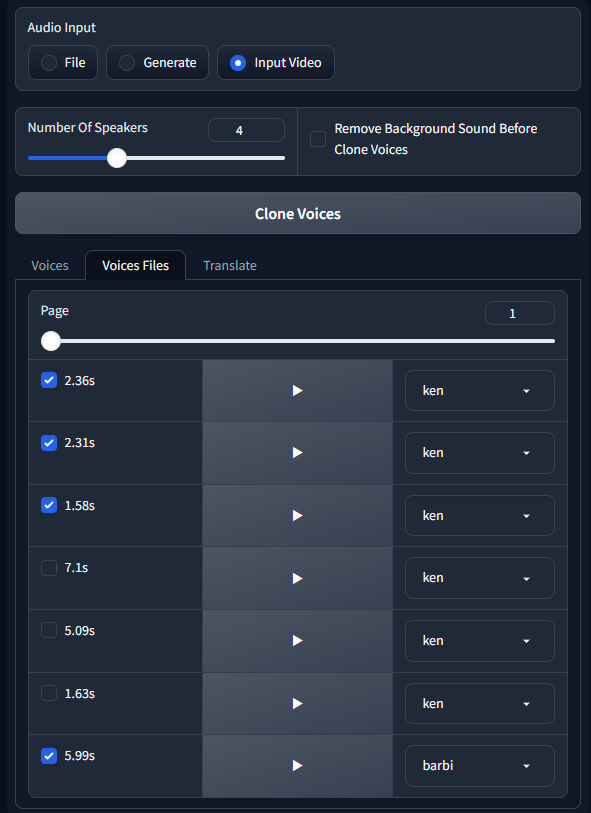

5. **Voices Files**: list of the voice files used by the models to create the cloned voices. You can modify these files to change the cloned voices. Make sure each file contains only one voice, with no background sound or music.

You can listen to the voice files by clicking the play button, and change the speaker name to identify each voice.

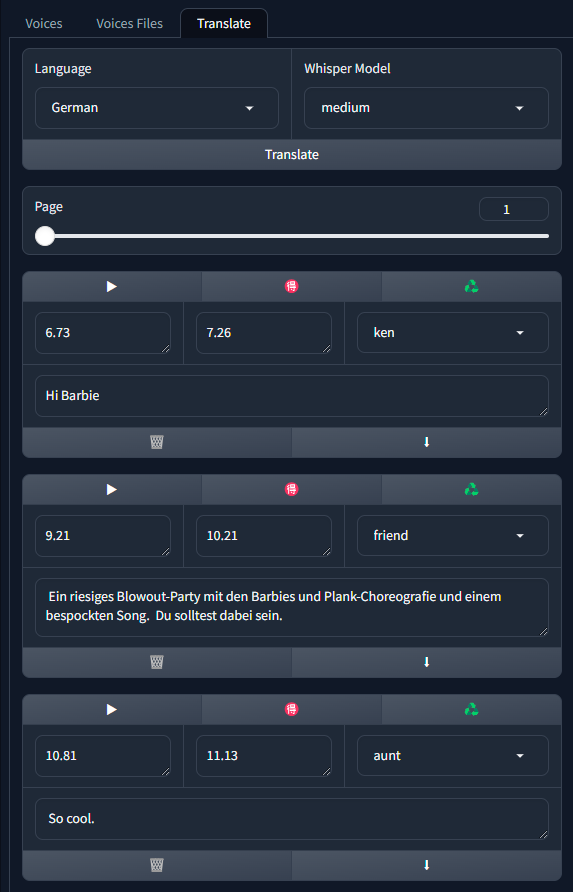

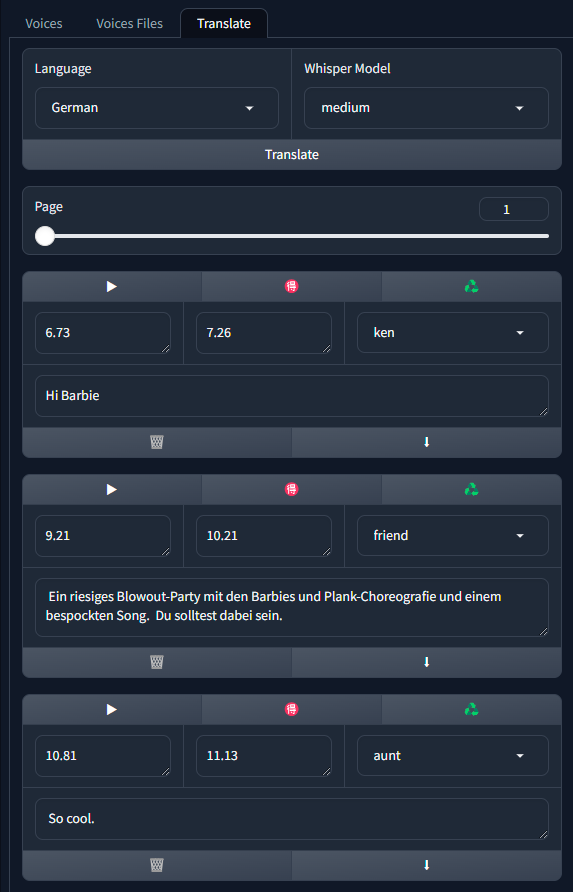

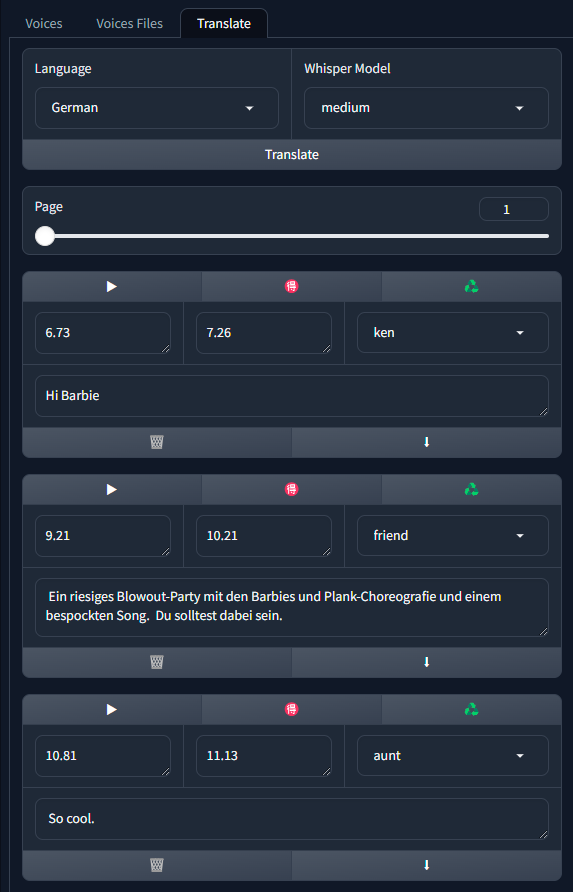

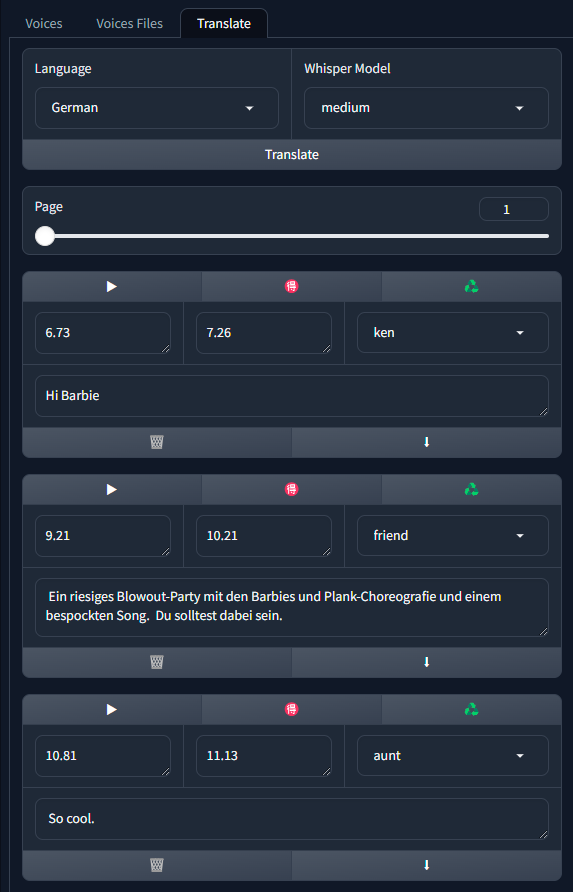

### Translation:

The Translation panel is now linked to the cloned voices panel, because translation tries to identify the speaker in order to translate with the right voice.

1. **Language**: Target language to translate the input video.

2. **Whisper Model**: the Whisper model used for the translation; choose between 5 models. Larger models give better quality but are slower.

3. **Translate**: Translate the input video to the selected language.

4. **Translation**: The translated text.

5. **Translated Audio**: The translated audio.

6. **Convert To Audio**: Convert the translated text to translated audio.

For each segment of the translated text, you can:

- Modify the translated text.

- Modify the start and end times of the segment.

- Change the speaker of the segment.

- Listen to the original audio by clicking the play button.

- Listen to the translated audio by clicking the red ideogram button.

- Generate the translation for this segment by clicking the recycle button.

- Delete the segment by clicking the trash button.

- Add a new segment below this one by clicking the down-arrow button.

# 📺 Examples

https://user-images.githubusercontent.com/800903/262439441-bb9d888a-d33e-4246-9f0a-1ddeac062d35.mp4

https://user-images.githubusercontent.com/800903/262442794-61b1e32f-3f87-4b36-98d6-f711822bdb1e.mp4

https://user-images.githubusercontent.com/800903/262449305-901086a3-22cb-42d2-b5be-a5f38db4549a.mp4

https://user-images.githubusercontent.com/800903/267808494-300f8cc3-9136-4810-86e2-92f2114a5f9a.mp4

# 📖 Behind the scenes

This tool operates in several stages to improve the quality of Wav2Lip-generated videos:

1. **Generate face swap video**: if an image is set in the "Face Swap" field, the script first generates the face swap video. This takes time, so be patient.

2. **Generate a Wav2lip video**: the script then generates a low-quality Wav2Lip video from the input video and audio.

3. **Video Quality Enhancement**: a high-quality video is created from the low-quality one using the enhancer chosen by the user.

4. **Mask Creation**: The script creates a mask around the mouth and tries to keep other facial motions like those of the cheeks and chin.

5. **Video Generation**: The script then takes the high-quality mouth image and overlays it onto the original image guided by the mouth mask.
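Step 5 can be pictured as per-pixel alpha blending: where the mouth mask is 1 the enhanced mouth is used, where it is 0 the original frame is kept, and blurred mask edges mix the two. A minimal NumPy sketch, assuming HxWx3 `uint8` frames and an HxW mask in [0, 1]; `overlay_mouth` is a hypothetical name, and the tool may composite differently.

```python
"""Minimal sketch of mask-guided overlay: mask * enhanced + (1 - mask) * original."""
import numpy as np


def overlay_mouth(original: np.ndarray, enhanced: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Blend two HxWx3 uint8 frames through an HxW mask with values in [0, 1]."""
    alpha = np.clip(mask.astype(np.float32), 0.0, 1.0)[..., None]  # add a channel axis for broadcasting
    blended = alpha * enhanced.astype(np.float32) + (1.0 - alpha) * original.astype(np.float32)
    return np.clip(np.rint(blended), 0, 255).astype(np.uint8)


original = np.full((2, 2, 3), 100, dtype=np.uint8)
enhanced = np.full((2, 2, 3), 200, dtype=np.uint8)
mask = np.array([[1.0, 0.5], [0.0, 0.0]])
result = overlay_mouth(original, enhanced, mask)  # top row 200 and 150, bottom row stays 100
```

Because the blend is linear in the mask, a soft (blurred) mask edge gives a gradual transition instead of a visible seam around the mouth.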

# 💪 Quality tips

- Use a high quality video as input

- Use a video with a consistent frame rate. Occasionally, videos have unusual playback frame rates (not the standard 24, 25, 30, or 60 fps), which can cause issues with the face mask.

- Use a high-quality audio file as input, without background noise or music. You can clean audio with a tool like [https://podcast.adobe.com/enhance](https://podcast.adobe.com/enhance).

- Dilate the mouth mask. This will help the model retain some facial motion and hide the original mouth.

- Keep Mask Blur at most twice the value of Mouth Mask Dilate. If you want more blur, increase Mouth Mask Dilate as well; otherwise the mouth will be blurred and the underlying original mouth may show through.

- Upscaling can improve results, particularly around the mouth, but it extends processing time. Use this tutorial from Olivio Sarikas to upscale your video: [https://www.youtube.com/watch?v=3z4MKUqFEUk](https://www.youtube.com/watch?v=3z4MKUqFEUk). Set the denoising strength between 0.0 and 0.05, select the 'revAnimated' model, and use batch mode. I'll create a tutorial for this soon.
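To check a video's frame rate before processing, you can ask `ffprobe`, which is installed alongside FFmpeg. In this sketch the helper names, the 0.1 fps tolerance, and treating NTSC rates such as 29.97 and 59.94 as standard are assumptions, not Wav2Lip Studio behaviour.

```python
"""Sketch: flag videos whose frame rate is not close to 24, 25, 30, or 60 fps."""
import subprocess
from fractions import Fraction

STANDARD_FPS = (24, 25, 30, 60)


def parse_rate(rate: str) -> float:
    """Convert an ffprobe rate such as '30000/1001' or '25/1' into frames per second."""
    try:
        return float(Fraction(rate.strip()))
    except ZeroDivisionError:
        # ffprobe can report '0/0' when a stream has no usable frame rate
        raise ValueError(f"no usable frame rate: {rate!r}") from None


def probe_fps(path: str) -> float:
    """Ask ffprobe for the average frame rate of the first video stream."""
    out = subprocess.run(
        ["ffprobe", "-v", "error", "-select_streams", "v:0",
         "-show_entries", "stream=avg_frame_rate",
         "-of", "default=noprint_wrappers=1:nokey=1", path],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_rate(out)


def is_standard(fps: float, tolerance: float = 0.1) -> bool:
    """True if `fps` is within `tolerance` of a standard rate (so 29.97 counts as 30)."""
    return any(abs(fps - std) <= tolerance for std in STANDARD_FPS)


# Usage (requires ffprobe on PATH): fps = probe_fps("input.mp4"); print(fps, is_standard(fps))
```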

# ⚠ Noted Constraints

- To speed up processing, try to keep the resolution under 1000x1000 px and upscale after processing.

- If the initial phase is excessively lengthy, consider using the "resize factor" to decrease the video's dimensions.

- While there's no strict size limit for videos, larger videos will require more processing time. It's advisable to employ the "resize factor" to minimize the video size and then upscale the video once processing is complete.
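To follow the 1000x1000 px advice, you can compute the smallest divide factor up front. This sketch assumes the "resize factor" (the **Resolution Divide Factor** in the Usage section) is an integer divisor; rounding down to even dimensions is an extra assumption, since many H.264 encoders require them. Both helper names are hypothetical.

```python
"""Sketch: pick the smallest integer divide factor that keeps a video under a size budget."""


def scaled_size(width: int, height: int, factor: int) -> tuple:
    """Divide both sides by `factor`, rounding down to even numbers."""
    w, h = width // factor, height // factor
    return w - w % 2, h - h % 2


def smallest_factor(width: int, height: int, max_side: int = 1000) -> int:
    """Return the smallest factor >= 1 so that both scaled sides are under `max_side`."""
    if max_side <= 0:
        raise ValueError("max_side must be positive")
    factor = 1
    while max(scaled_size(width, height, factor)) >= max_side:
        factor += 1
    return factor


for w, h in [(1920, 1080), (3840, 2160)]:
    f = smallest_factor(w, h)
    print(f"{w}x{h}: factor {f} -> {scaled_size(w, h, f)}")
# 1920x1080: factor 2 -> (960, 540)
# 3840x2160: factor 4 -> (960, 540)
```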

# Known issues

If you have trouble installing insightface, follow these steps:

- Download the [precompiled insightface wheel](https://github.com/Gourieff/Assets/raw/main/Insightface/insightface-0.7.3-cp310-cp310-win_amd64.whl) (Windows, Python 3.10) and place it in the root folder of Wav2lip-studio.

- In a terminal, go to the wav2lip-studio folder and run the following commands:

```

.\venv\Scripts\activate

python -m pip install -U pip

python -m pip install insightface-0.7.3-cp310-cp310-win_amd64.whl

```

Enjoy

# 📝 To do

- ✔️ Standalone version

- ✔️ Add a way to use a face swap image

- ✔️ Add Possibility to use a video for audio input

- ✔️ Convert AVI to MP4. AVI is not shown in the video input, but processing works fine

- [ ] ComfyUI integration

# 😎 Contributing

We welcome contributions to this project. When submitting pull requests, please provide a detailed description of the changes. See [CONTRIBUTING](CONTRIBUTING.md) for more information.

# 🙏 Appreciation

- [Wav2Lip](https://github.com/Rudrabha/Wav2Lip)

- [CodeFormer](https://github.com/sczhou/CodeFormer)

- [Coqui TTS](https://github.com/coqui-ai/TTS)

- [facefusion](https://github.com/facefusion/facefusion)

- [Vocal Remover](https://github.com/tsurumeso/vocal-remover)

# ☕ Support Wav2lip Studio

This project is an open-source effort that is free to use and modify. I rely on the support of users to keep this project going and help improve it. If you'd like to support me, you can make a donation on my Patreon page. Any contribution, large or small, is greatly appreciated!

Your support helps me cover the costs of development and maintenance, and allows me to allocate more time and resources to enhancing this project. Thank you for your support!

[patreon page](https://www.patreon.com/Wav2LipStudio)

# 📝 Citation

If you use this project in your own work, in articles, tutorials, or presentations, we encourage you to cite this project to acknowledge the efforts put into it.

To cite this project, please use the following BibTeX format:

```bibtex

@misc{wav2lip_uhq,

author = {numz},

title = {Wav2Lip UHQ},

year = {2023},

howpublished = {GitHub repository},

publisher = {numz},

url = {https://github.com/numz/sd-wav2lip-uhq}

}

```

# 📜 License

* The code in this repository is released under the MIT license as found in the [LICENSE file](LICENSE).

https://user-images.githubusercontent.com/800903/262435301-af205a91-30d7-43f2-afcc-05980d581fe0.mp4

## 💡 Description

This repository contains a Wav2Lip Studio Standalone Version.

It's an all-in-one solution: just choose a video and a speech file (wav or mp3), and the tools will generate a lip-sync video, faceswap, voice clone, and translate video with voice clone (HeyGen like).

It improves the quality of the lip-sync videos generated by the [Wav2Lip tool](https://github.com/Rudrabha/Wav2Lip) by applying specific post-processing techniques.

## 📖 Quick Index

* [🚀 Updates](#-updates)

* [🔗 Requirements](#-requirements)

* [💻 Installation](#-installation)

* [🐍 Tutorial](#-tutorial)

* [🐍 Usage](#-usage)

* [👄 Keyframes Manager](#-keyframes-manager)

* [👄 Input Video](#-input-video)

* [📺 Examples](#-examples)

* [📖 Behind the scenes](#-behind-the-scenes)

* [💪 Quality tips](#-quality-tips)

* [⚠️Noted Constraints](#-noted-constraints)

* [📝 To do](#-to-do)

* [😎 Contributing](#-contributing)

* [🙏 Appreciation](#-appreciation)

* [📝 Citation](#-citation)

* [📜 License](#-license)

* [☕ Support Wav2lip Studio](#-support-wav2lip-studio)

## 🚀 Updates

**2024.10.13 Add avatar for driving video**

- 💪 Add 10 new avatars for driving video, you can now choose an avatar before generate the driving video.

- 📺 Add a feature to close or not the mouth before generating lip sync video.

- 🐛 Easy docker installation, follow instructions bellow.

- ♻ Better macos integration, follow instructions bellow.

- 🚀 In Comfyui panel, you can now regenerate mask and keyframe after modification of your video, allow better mouth mask.

**2024.09.03 ComfyUI Integration in Lip Sync Studio**

- 💪Manage and chain your comfyui worklows from end to end.

**2024.08.07 Major Update (Standalone version only)**

- 📺"Add Driving video feature": this feature allows you to generate a driving video to generate better lip sync.

**2024.05.06 Major Update (Standalone version only)**

- 🐛"Data Structure": I had to restructure the files to allow for better quality in the video output. The previous version did everything in RAM at the expense of video quality; each pass degraded the videos, for example, if you did a face swap + Wav2Lip, there was a degradation of quality because of creating a first pass for Wav2Lip and a second for face swap. You will now find a "data" directory in each project containing all the files necessary for the tool's work and maintaining quality through different passes (quality above all).

- ♻"Wav2Lip Video Outputs": After generating Wav2Lip videos, the videos are numbered in the output directory. Clicking on "video quality" loads the last video of the specified quality.

- 👄"Zero Mouth": this feature should allow closing a person's mouth before proceeding with lip-syncing, sometimes it doesn't have much effect or can add some flickering to the image, but I have had good results in some cases. Technically, this will take two passes to close the mouth, you will find the frames used by the tool in "data\zero."

- 👬"Clone Voice": the interface has been revised.

- 💪"High Quality Vs Best Quality": In this version, there is not much difference between High and Best. Best is to be used with videos where faces are large on the screen like on a 4K video for example. The process behind just uses different GFPGAN models and a different face alignment.

- ▶ "Show Frame Number": In Low Quality only, the frame number appears in the top left corner. This helps to identify the frame where you want to make modifications.

- 📺"Show Wav2Lip Output": this feature allows you to see the Wav2Lip output taking into account the input audio.

- "New Face Alignment": The face alignment has been reviewed.

- 🔑"Zoom In, Zoom Out, Move Right,...": Now you will understand why sometimes the results are strange and generate deformed lips, broken teeth, or other very strange things.I recommend the video tutorial here: https://www.patreon.com/posts/key-feature-103716855

**2024.02.09 Spped Up Update (Standalone version only)**

- 👬 Clone voice: Add controls to manage the voice clone (See Usage section)

- 🎏 translate video: Add features to translate panel to manage translation (See Usage section)

- 📺 Add Trim feature: Add a feature to trim the video.

- 🔑 Automatic mask: Add a feature to automatically calculate the mask parameters (padding, dilate...). You can change parameters if needed.

- 🚀 Speed up processes : All processes are now faster, Analysis, Face Swap, Generation in High quality

- 💪 Less disk space used : Remove temporary files after generation and keep only necessary data, will greatly reduce disk space used.

**2024.01.20 Major Update (Standalone version only)**

- ♻ Manage project: Add a feature to manage multiple project

- 👪 Introduced multiple face swap: Can now Swap multiple face in one shot (See Usage section)

- ⛔ Visible face restriction: Can now make whole process even if no face detected on frame!

- 📺 Video Size: works with high resolution video input, (test with 1980x1080, should works with 4K but slow)

- 🔑 Keyframe manager: Add a keyframe manager for better control of the video generation

- 🍪 coqui TTS integration: Remove bark integration, use coqui TTS instead (See Usage section)

- 💬 Conversation: Add a conversation feature with multiple person (See Usage section)

- 🔈 Record your own voice: Add a feature to record your own voice (See Usage section)

- 👬 Clone voice: Add a feature to clone voice from video (See Usage section)

- 🎏 translate video: Add a feature to translate video with voice clone (See Usage section)

- 🔉 Volume amplifier for wav2lip: Add a feature to amplify the volume of the wav2lip output (See Usage section)

- 🕡 Add delay before sound speech start

- 🚀 Speed up process: Speed up the process

**2023.09.13**

- 👪 Introduced face swap: facefusion integration (See Usage section) **this feature is under experimental**.

**2023.08.22**

- 👄 Introduced [bark](https://github.com/suno-ai/bark/) (See Usage section), **this feature is under experimental**.

**2023.08.20**

- 🚢 Introduced the GFPGAN model as an option.

- ▶ Added the feature to resume generation.

- 📏 Optimized to release memory post-generation.

**2023.08.17**

- 🐛 Fixed purple lips bug

**2023.08.16**

- ⚡ Added Wav2lip and enhanced video output, with the option to download the one that's best for you, likely the "generated video".

- 🚢 Updated User Interface: Introduced control over CodeFormer Fidelity.

- 👄 Removed image as input, [SadTalker](https://github.com/OpenTalker/SadTalker) is better suited for this.

- 🐛 Fixed a bug regarding the discrepancy between input and output video that incorrectly positioned the mask.

- 💪 Refined the quality process for greater efficiency.

- 🚫 Interrupting the process will now still generate a video if frames have already been created

**2023.08.13**

- ⚡ Speed-up computation

- 🚢 Changed User Interface: Added controls for hidden parameters

- 👄 Only Track mouth if needed

- 📰 Control debug

- 🐛 Fix resize factor bug

# 💻 Installation

## 🔗 Requirements (windows, linux, macos)

1. FFmpeg: download it from the [official FFmpeg site](https://ffmpeg.org/download.html). Follow the instructions for your operating system; note that ffmpeg must be accessible from the command line.

- Make sure ffmpeg is in your PATH environment variable. If not, add it to your PATH environment variable.

2. pyannote.audio: You need to agree to share your contact information to access the pyannote models.

To do so, go to both links:

- [pyannote diarization-3.1 huggingface repository](https://huggingface.co/pyannote/speaker-diarization-3.1)

- [pyannote segmentation-3.0 huggingface repository](https://huggingface.co/pyannote/segmentation-3.0)

Fill in each field and click "Agree and access repository".

Then create a Hugging Face access token:

1. Log in to your account.

2. Go to [access tokens](https://huggingface.co/settings/token) in your settings.

3. Create a new token with read access.

4. Copy the token.

5. Paste it into the api_keys.json file:

```json

{

"huggingface_token": "your token"

}

```

3. Install [Python 3.10.11](https://www.python.org/downloads/release/python-31011/) (Mac users: follow the instructions below).

4. Install [git](https://git-scm.com/downloads)

5. Check your FFmpeg, Python, CUDA and git installations:

```bash
python --version
git --version
ffmpeg -version
nvcc --version  # only if you have an NVIDIA GPU (not on macOS)
```

The output should look something like this:

```bash

Python 3.10.11

git version 2.35.1.windows.2

ffmpeg version N-110509-g722ff74055-20230506 Copyright (c) 2000-2023 the FFmpeg developers built with gcc 12.2.0 (crosstool-NG 1.25.0.152_89671bf) ...

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2022 NVIDIA Corporation

Built on Wed_Sep_21_10:41:10_Pacific_Daylight_Time_2022

Cuda compilation tools, release 11.8, V11.8.89

Build cuda_11.8.r11.8/compiler.31833905_0

```
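The same checks can be scripted. The sketch below uses only the Python standard library; `tool_version` is a hypothetical helper (not part of Wav2Lip Studio), and it only reports what is found on your PATH rather than validating the versions against the requirements above.

```python
import shutil
import subprocess


def tool_version(name, flag="--version"):
    """Return the first line of `name flag`, or None if `name` is not on PATH."""
    exe = shutil.which(name)
    if exe is None:
        return None
    result = subprocess.run([exe, flag], capture_output=True, text=True, timeout=30)
    # Some tools print their version on stderr instead of stdout.
    output = (result.stdout or result.stderr).strip()
    return output.splitlines()[0] if output else ""


if __name__ == "__main__":
    # ffmpeg uses a single-dash flag; nvcc only exists with the CUDA toolkit.
    for name, flag in [("python", "--version"), ("git", "--version"),
                       ("ffmpeg", "-version"), ("nvcc", "--version")]:
        print(f"{name}: {tool_version(name, flag) or 'NOT FOUND'}")
```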

## Linux Users

1. Make sure git-lfs is installed:

```bash

sudo apt-get install git-lfs

```

## Windows Users

1. Install [CUDA 11.8](https://developer.nvidia.com/cuda-11-8-0-download-archive) if it is not already installed.

2. Install [Visual Studio](https://visualstudio.microsoft.com/fr/downloads/). During installation, make sure to include the Python and C++ packages in the Visual Studio Installer.

3. If you have multiple Python versions on your computer, edit launch.py and change the following line:

```bat
REM set PYTHON="your python.exe path"
```

to (remove `REM` and enter the path of the python.exe you want to use):

```bat
set PYTHON="your python.exe path"
```

4. Double-click wav2lip-studio.bat; it will install the requirements and download the models.

## MACOS Users

1. Install Python 3.9 and the other required tools (FFmpeg, git-lfs, Xcode command-line tools):

```

brew update

brew install python@3.9

brew install ffmpeg

brew install git-lfs

git-lfs install

xcode-select --install

```

2. Unzip wav2lip-studio.zip into a folder:

```

unzip wav2lip-studio.zip

```

3. Create the virtual environment and install the requirements:

```

cd /YourWav2lipStudioFolder

/opt/homebrew/bin/python3.9 -m venv venv

./venv/bin/python3.9 -m pip install inaSpeechSegmenter

./venv/bin/python3.9 -m pip install tyro==0.8.5 pykalman==0.9.7

./venv/bin/python3.9 -m pip install transformers==4.33.2

./venv/bin/python3.9 -m pip install spacy==3.7.4

./venv/bin/python3.9 -m pip install TTS==0.21.2

./venv/bin/python3.9 -m pip install gradio==4.14.0 imutils==0.5.4 moviepy websocket-client requests_toolbelt filetype numpy opencv-python==4.8.0.76 scipy==1.11.2 requests==2.28.1 pillow==9.3.0 librosa==0.10.0 opencv-contrib-python==4.8.0.76 huggingface_hub==0.20.2 tqdm==4.66.1 cutlet==0.3.0 numba==0.57.1 imageio_ffmpeg==0.4.9 insightface==0.7.3 unidic==1.1.0 onnx==1.14.1 onnxruntime==1.16.0 psutil==5.9.5 lpips==0.1.4 GitPython==3.1.36 facexlib==0.3.0 gfpgan==1.3.8 gdown==4.7.1 pyannote.audio==3.1.1 openai-whisper==20231117 resampy==0.4.0 scenedetect==0.6.2 uvicorn==0.23.2 starlette==0.35.1 fastapi==0.109.0

./venv/bin/python3.9 -m pip install torch==2.1.2 torchvision==0.16.2 torchaudio==2.1.2

./venv/bin/python3.9 -m pip install numpy==1.24.4

```

3.1. For Apple Silicon, one more step is needed:

```

./venv/bin/python3.9 -m pip uninstall torch torchvision torchaudio

./venv/bin/python3.9 -m pip install --pre torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/nightly/cpu

sed -i '' 's/from torchvision.transforms.functional_tensor import rgb_to_grayscale/from torchvision.transforms.functional import rgb_to_grayscale/' venv/lib/python3.9/site-packages/basicsr/data/degradations.py

```

4. Download the models:

```

git clone https://huggingface.co/numz/wav2lip_studio-0.2 models

git clone https://huggingface.co/KwaiVGI/LivePortrait models/pretrained_weights

```

5. Launch the UI:

```

mkdir projects

export PYTORCH_ENABLE_MPS_FALLBACK=1

./venv/bin/python3.9 wav2lip_studio.py

```
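To check from Python which device PyTorch will actually use on your Mac, you can run a short snippet like the one below inside the venv. It is a sketch, not code from Wav2Lip Studio; it only assumes PyTorch is installed and exposes `torch.backends.mps` (PyTorch 1.12 or later). To be safe, the fallback variable is set before `torch` is imported, mirroring the `export` above.

```python
import os

# Let operators the MPS backend does not implement fall back to the CPU.
os.environ.setdefault("PYTORCH_ENABLE_MPS_FALLBACK", "1")


def pick_device():
    """Return "cuda", "mps" or "cpu" depending on what this machine supports."""
    try:
        import torch
    except ImportError:
        return "cpu"
    if torch.cuda.is_available():
        return "cuda"
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"
    return "cpu"


if __name__ == "__main__":
    print(pick_device())
```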

# 🐍 Tutorial

- [FR version](https://youtu.be/43Q8YASkcUA)

- [EN Version](https://youtu.be/B84A5alpPDc)

# 🐍 Usage

## PARAMETERS

1. Enter a project name and press Enter.

2. Choose a video (avi or mp4 format). Note that avi files will not appear in the Video input, but processing still works.

3. Face Swap (this takes time, so be patient):

- **Face Swap**: choose an image of the faces you want to swap with the faces in the video (multiple faces are supported); the leftmost face is id 0.

4. **Resolution Divide Factor**: The resolution of the video will be divided by this factor. The higher the factor, the faster the process, but the lower the resolution of the output video.

5. **Min Face Width Detection**: The minimum width of a face to detect. Use it to ignore small faces in the video.

6. **Align Faces**: allows for straightening the head before sending it for Wav2Lip processing.

7. **Keyframes On Speaker Change**: Generates a keyframe whenever the speaker changes, giving you finer control over video generation.

8. **Keyframes On scene Change**: Generates a keyframe whenever the scene changes, giving you finer control over video generation.

9. Once the parameters above are set, click **Generate Keyframes**. See the [Keyframes manager](#keyframes-manager) section for more details.

10. Audio (3 options):

1. Put an audio file in the "Speech" input, or record one with the "Record" button.

2. Generate audio with the [coqui TTS](https://github.com/coqui-ai/TTS) text-to-speech integration:

1. Choose the language

2. Choose the Voice

3. Write your speech in the "Prompt" text area, in plain-text or JSON format:

1. Text format:

```text
Hello, my name is John. I am 25 years old.
```

2. JSON format (you can ask ChatGPT to generate a conversation for you):

```json
[
  {
    "start": 0.0,
    "end": 3.0,
    "text": "Hello, my name is John. I am 25 years old.",
    "speaker": "arnold"
  },
  {
    "start": 3.0,
    "end": 4.0,
    "text": "Oh, really?",
    "speaker": "female_01"
  },
  ...
]
```

3. Input Video: Use the audio from the input video, with voice cloning and translation. See the [Input Video](#input-video) section for more details.

11. **Driving Video**: Choose an avatar to generate a driving video.

- **Avatars**: Choose between 10 avatars for the driving video; each one gives a different driving result in the lip-sync output video.

- **Close Mouth**: Close the mouth of the avatar before generating the driving video.

- **Generate Driving Video**: Generate the driving video.

12. **Video Quality**:

- **Low**: Original Wav2Lip quality, fast but not very good.

- **Medium**: Better quality by applying post-processing to the mouth; slower.

- **High**: Better quality by applying post-processing and upscaling the mouth; slower.

13. **Wav2lip Checkpoint**: Choose between 2 Wav2Lip models:

- **Wav2lip**: Original Wav2Lip model; the most accurate lip sync, but lower visual quality.

- **Wav2lip GAN**: Wav2Lip trained with an additional visual-quality discriminator; better visual quality, with slightly less accurate lip sync.

14. **Face Restoration Model**: Choose between 2 face restoration models:

- **Code Former** (the values below refer to the CodeFormer Fidelity setting):

- A value of 0 offers higher quality but may significantly alter the person's facial appearance and cause noticeable flickering between frames.

- A value of 1 provides lower quality but maintains the person's face more consistently and reduces frame flickering.

- Values below 0.5 are not advised. Start with 0.75 and adjust from there to get the best results.

- **GFPGAN**: Usually better quality.

15. **Volume Amplifier**: This does not amplify the volume of the output audio; it amplifies the audio sent to Wav2Lip, which gives you better control over lip movement.
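As a rough illustration of what the Volume Amplifier does, the sketch below scales a copy of the speech samples before they would reach the lip-sync model, clipping to avoid overflow, while the original samples are kept for the final video. It assumes floating-point samples normalized to [-1.0, 1.0]; `amplify_for_wav2lip` is a hypothetical name, and the studio's actual gain handling may differ.

```python
def amplify_for_wav2lip(samples, gain):
    """Scale float samples in [-1.0, 1.0] by `gain`, clipping to the valid range.

    Only the copy fed to the lip-sync model is amplified; the audio used
    for the output video is left untouched.
    """
    if gain <= 0:
        raise ValueError("gain must be positive")
    return [max(-1.0, min(1.0, s * gain)) for s in samples]


speech = [0.0, 0.1, -0.25, 0.6]
model_input = amplify_for_wav2lip(speech, 2.0)  # louder copy for Wav2Lip
final_audio = speech                            # original kept for the output
```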

## KEYFRAMES MANAGER

### Global parameters:

1. **Only show Speaker Face**: Shows only the speaker's face; the other faces are hidden.

2. **Frame Number**: A slider that allows you to move between the frames of the video.

3. **Add Keyframe**: Allows you to add a keyframe at the current Frame Number.

4. **Remove Keyframe**: Allows you to remove a keyframe at the current Frame Number.

5. **Keyframes**: A list of all the keyframes.

### For each face on a keyframe:

1. **Face Id**: List of all the faces in the current keyframe.

2. **Translation info**: If a translation is associated with the project, it is shown here along with its speakers, which helps you select the right speaker for this keyframe.

3. **Speaker**: Checkbox to set the current Face Id as the speaker in the current keyframe.

4. **Face Swap Id**: Checkbox to set which face swap id is applied to the current Face Id in the current keyframe.

5. **Automatic Mask**: Defaults to True; if False, you can draw the mask manually.

6. **Mouth Mask Dilate**: Dilates the mouth mask to cover more of the area around the mouth. The right value depends on the mouth size.

7. **Face Mask Erode**: Erodes the face mask to remove some of the area around the face. The right value depends on the face size.

8. **Mask Blur**: Blurs the mask to smooth its edges. Try to keep it less than or equal to **Mouth Mask Dilate**.

9. **Padding sliders**: Add padding around the head to avoid cutting it off in the video.
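To make the three mask parameters more concrete, here is a small pure-Python sketch of dilation, erosion and blur on a binary mask stored as a list of lists. It is an illustration only: the studio works on image masks, and the square, radius-based kernels used here are an assumption rather than its actual implementation.

```python
def _filter(mask, radius, op):
    """Apply `op` to each pixel's square neighbourhood of the given radius."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            window = [mask[j][i]
                      for j in range(max(0, y - radius), min(h, y + radius + 1))
                      for i in range(max(0, x - radius), min(w, x + radius + 1))]
            out[y][x] = op(window)
    return out


def dilate(mask, radius):
    """Grow the mask: a pixel is on if any neighbour within `radius` is on."""
    return _filter(mask, radius, max)


def erode(mask, radius):
    """Shrink the mask: a pixel stays on only if all its neighbours are on."""
    return _filter(mask, radius, min)


def box_blur(mask, radius):
    """Soften edges by averaging each pixel with its neighbours."""
    return _filter(mask, radius, lambda win: sum(win) / len(win))


mouth = [[0, 0, 0, 0, 0],
         [0, 0, 0, 0, 0],
         [0, 0, 1, 0, 0],
         [0, 0, 0, 0, 0],
         [0, 0, 0, 0, 0]]
grown = dilate(mouth, 1)   # 3x3 block of ones around the centre
soft = box_blur(grown, 1)  # fractional weights along the block's edges
```

This also shows why the blur should stay small relative to the dilation: a blur radius larger than the dilation pulls the soft edge back inside the original mouth area, so the underlying mouth can show through.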

When you configure a keyframe, its settings apply until the next keyframe, so intermediate frames are generated with the same configuration.

Note that this configuration is not displayed in the UI for intermediate frames.
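The hold-until-next-keyframe rule can be sketched as a lookup on sorted keyframe positions. The dictionary layout and setting names below are hypothetical; only the propagation behaviour comes from this README, and the handling of frames before the first keyframe is an assumption.

```python
from bisect import bisect_right


def settings_for_frame(keyframes, frame):
    """Return the settings of the last keyframe at or before `frame`.

    `keyframes` maps a frame number to that keyframe's settings dict.
    Frames before the first keyframe fall back to the first keyframe
    (an assumption; the studio may handle this case differently).
    """
    if not keyframes:
        raise ValueError("at least one keyframe is required")
    starts = sorted(keyframes)
    idx = bisect_right(starts, frame) - 1
    return keyframes[starts[max(idx, 0)]]


# Hypothetical settings: frames 0-119 use the first keyframe, 120+ the second.
keyframes = {
    0: {"speaker": 0, "mouth_mask_dilate": 15},
    120: {"speaker": 1, "mouth_mask_dilate": 10},
}
```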

## Input Video

If there is no sound in the translated audio, the audio from the input video is used. This can be useful if the input video has poor lip sync.

### Clone Voices:

1. **Number Of Speakers**: The number of speakers in the video. This tells the cloning step how many voices to clone.

2. **Remove Background Sound Before Clone**: Remove background noise and music before cloning.

3. **Clone Voices**: Clone voices from the input video.

4. **Voices**: List of the cloned voices. You can rename voices to identify them in the translation.

For each voice, you can:

- **Play**: Listen to the voice.

- **regen sentence**: Regenerate the sample sentence.

- **save voice**: Save the voice to your voice library.

5. **Voices Files**: List of the voice files used by the models to create the cloned voices. You can modify these files to change the cloned voices. Make sure each file contains only one voice, with no background sound or music.

You can listen to the voice files by clicking the play button, and change the speaker name to identify each voice.
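**Number Of Speakers** maps naturally onto speaker diarization. The sketch below reads the token saved in api_keys.json during installation, runs the pyannote speaker-diarization-3.1 pipeline from the Requirements section with a fixed speaker count, and totals how much speech each detected speaker has, which can help when choosing clean samples for the voice files. It is an assumption that Wav2Lip Studio uses diarization this way; `load_hf_token`, `diarize` and `speaking_time` are hypothetical helpers.

```python
import json
from collections import defaultdict
from pathlib import Path


def load_hf_token(path="api_keys.json"):
    """Read the Hugging Face token saved during installation."""
    data = json.loads(Path(path).read_text(encoding="utf-8"))
    token = data.get("huggingface_token", "").strip()
    if not token or token == "your token":  # placeholder value from this README
        raise ValueError(f"no Hugging Face token set in {path}")
    return token


def diarize(audio_path, num_speakers=None, keys_path="api_keys.json"):
    """Run pyannote's gated speaker-diarization pipeline on an audio file."""
    from pyannote.audio import Pipeline  # heavy dependency, imported lazily

    pipeline = Pipeline.from_pretrained(
        "pyannote/speaker-diarization-3.1",
        use_auth_token=load_hf_token(keys_path),
    )
    # A known speaker count constrains the clustering step.
    return pipeline(audio_path, num_speakers=num_speakers)


def speaking_time(diarization):
    """Total seconds of speech per speaker label in a pyannote Annotation."""
    totals = defaultdict(float)
    for turn, _track, speaker in diarization.itertracks(yield_label=True):
        totals[speaker] += turn.end - turn.start
    return dict(totals)
```

The gated models only load after you have accepted the conditions on both pyannote model pages.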

### Translation:

The Translation panel is now linked to the Clone Voices panel, because translation tries to identify the speaker of each segment so that the matching cloned voice can be used.

1. **Language**: Target language to translate the input video.

2. **Whisper Model**: The Whisper model used for the translation. Choose between 5 models: the larger the model, the better the quality, but the slower the process.

3. **Translate**: Translate the input video to the selected language.

4. **Translation**: The translated text.

5. **Translated Audio**: The translated audio.

6. **Convert To Audio**: Convert the translated text to translated audio.

For each segment of the translated text, you can:

- Modify the translated text.

- Modify the start and end times of the segment.

- Change the speaker of the segment.

- Listen to the original audio by clicking the play button.

- Listen to the translated audio by clicking the red ideogram button.

- Generate the translation for this segment by clicking the recycle button.

- Delete the segment by clicking the trash button.

- Add a new segment below this one by clicking the down-arrow button.
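When editing segment times by hand, or pasting a conversation in the JSON prompt format from the Usage section, a quick consistency check helps. This sketch assumes segments carry the same `start`, `end`, `text` and `speaker` fields as that JSON format, with times in seconds, and that segments should be in order without overlapping; `validate_segments` is a hypothetical helper, not part of the studio.

```python
REQUIRED_KEYS = {"start", "end", "text", "speaker"}


def validate_segments(segments):
    """Return a list of human-readable problems found in a list of segments."""
    problems = []
    previous_end = 0.0
    for i, seg in enumerate(segments):
        missing = REQUIRED_KEYS.difference(seg)
        if missing:
            problems.append(f"segment {i}: missing {sorted(missing)}")
            continue
        if seg["end"] <= seg["start"]:
            problems.append(f"segment {i}: end must be after start")
        if seg["start"] < previous_end:
            problems.append(f"segment {i}: overlaps the previous segment")
        previous_end = max(previous_end, seg["end"])
    return problems
```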

# 📺 Examples

https://user-images.githubusercontent.com/800903/262439441-bb9d888a-d33e-4246-9f0a-1ddeac062d35.mp4

https://user-images.githubusercontent.com/800903/262442794-61b1e32f-3f87-4b36-98d6-f711822bdb1e.mp4

https://user-images.githubusercontent.com/800903/262449305-901086a3-22cb-42d2-b5be-a5f38db4549a.mp4

https://user-images.githubusercontent.com/800903/267808494-300f8cc3-9136-4810-86e2-92f2114a5f9a.mp4

# 📖 Behind the scenes

This tool operates in several stages to improve the quality of Wav2Lip-generated videos:

1. **Generate face swap video**: If an image is provided in the "Face Swap" field, the script first generates the face swap video. This operation takes time, so be patient.

2. **Generate a Wav2lip video**: The script then generates a low-quality Wav2Lip video from the input video and audio.

3. **Video Quality Enhancement**: A high-quality video is created from the low-quality one using the enhancer selected by the user.

4. **Mask Creation**: The script creates a mask around the mouth and tries to keep other facial motions like those of the cheeks and chin.

5. **Video Generation**: The script then takes the high-quality mouth image and overlays it onto the original image guided by the mouth mask.
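Step 5 amounts to alpha compositing: for each pixel, `out = mask * enhanced + (1 - mask) * original`. The minimal grayscale sketch below assumes a soft mask with weights in [0, 1], such as a blurred mouth mask; the function name is hypothetical, and real frames would be image arrays with color channels rather than nested lists.

```python
def composite(original, enhanced, mask):
    """Blend the enhanced mouth into the original frame, pixel by pixel.

    All three arguments are same-sized 2-D lists; `mask` holds weights in
    [0, 1] (1 = take the enhanced pixel, 0 = keep the original pixel).
    """
    return [
        [m * e + (1.0 - m) * o for o, e, m in zip(orow, erow, mrow)]
        for orow, erow, mrow in zip(original, enhanced, mask)
    ]
```

Intermediate mask values, produced by **Mask Blur**, give a gradual transition instead of a hard seam around the mouth.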

# 💪 Quality tips

- Use a high quality video as input

- Use a video with a consistent frame rate. Occasionally, videos may exhibit unusual playback frame rates (not the standard 24, 25, 30, 60), which can lead to issues with the face mask.

- Use a high-quality audio file as input, without background noise or music. You can clean the audio with a tool like [https://podcast.adobe.com/enhance](https://podcast.adobe.com/enhance).

- Dilate the mouth mask. This will help the model retain some facial motion and hide the original mouth.

- Keep Mask Blur at most twice the value of Mouth Mask Dilate. If you want more blur, increase Mouth Mask Dilate as well; otherwise the mouth will be blurred and the original mouth underneath may become visible.

- Upscaling can improve results, particularly around the mouth area, but it extends the processing time. Use this tutorial from Olivio Sarikas to upscale your video: [https://www.youtube.com/watch?v=3z4MKUqFEUk](https://www.youtube.com/watch?v=3z4MKUqFEUk). Set the denoising strength between 0.0 and 0.05, select the 'revAnimated' model, and use batch mode. I'll create a tutorial for this soon.
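To spot an unusual frame rate before importing a video, the sketch below parses the rate strings reported by ffprobe (such as `30000/1001`) and compares them with the standard values listed above. The helper names and the tolerance, which also accepts NTSC rates such as 29.97, are assumptions for illustration.

```python
STANDARD_FPS = (24, 25, 30, 60)


def parse_rate(rate):
    """Convert an ffprobe-style rate such as "30000/1001" or "25" to a float."""
    num, _, den = rate.partition("/")
    den = den or "1"
    if float(den) == 0:  # ffprobe reports "0/0" when the rate is unknown
        return 0.0
    return float(num) / float(den)


def is_standard_fps(rate, tolerance=0.1):
    """True if `rate` is within `tolerance` fps of 24, 25, 30 or 60."""
    fps = parse_rate(rate)
    return any(abs(fps - std) <= tolerance for std in STANDARD_FPS)
```

The rates can be read with `ffprobe -v error -select_streams v:0 -show_entries stream=r_frame_rate,avg_frame_rate -of default=noprint_wrappers=1 input.mp4`; a large gap between `r_frame_rate` and `avg_frame_rate` often hints at a variable frame rate. Re-encoding at a constant rate, for example with `ffmpeg -i input.mp4 -vf fps=25 output.mp4`, is one way to normalize it.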

# ⚠ Noted Constraints

- To speed up processing, try to keep the resolution under 1000x1000 px and upscale after processing.

- If the initial phase is excessively lengthy, consider using the "resize factor" to decrease the video's dimensions.

- While there's no strict size limit for videos, larger videos will require more processing time. It's advisable to employ the "resize factor" to minimize the video size and then upscale the video once processing is complete.
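As a rule of thumb for the 1000x1000 px guideline, the sketch below picks the smallest whole-number factor that brings the longer side of a video to 1000 px or less, and shows the resulting size. It assumes the resize factor (**Resolution Divide Factor** in the Usage section) divides both dimensions by an integer; how the studio rounds odd sizes is not documented here, so floor division is an assumption.

```python
import math


def divide_factor_for(width, height, max_side=1000):
    """Smallest integer factor that brings the longest side to max_side or below."""
    if width <= 0 or height <= 0:
        raise ValueError("dimensions must be positive")
    return max(1, math.ceil(max(width, height) / max_side))


def divided_size(width, height, factor):
    """Frame size after dividing both dimensions by `factor` (floor division)."""
    return width // factor, height // factor
```

For example, a 1920x1080 clip needs a factor of 2 (960x540), while a 3840x2160 (4K) clip needs 4, which also gives 960x540.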

# Known issues:

If you have trouble installing insightface, follow these steps:

- Download the [precompiled insightface wheel](https://github.com/Gourieff/Assets/raw/main/Insightface/insightface-0.7.3-cp310-cp310-win_amd64.whl) (Windows, Python 3.10) and place it in the root folder of Wav2lip-studio.

- In a terminal, go to the wav2lip-studio folder and run the following commands:

```

.\venv\Scripts\activate

python -m pip install -U pip

python -m pip install insightface-0.7.3-cp310-cp310-win_amd64.whl

```

Enjoy

# 📝 To do

- ✔️ Standalone version

- ✔️ Add a way to use a face swap image

- ✔️ Add Possibility to use a video for audio input

- ✔️ Convert avi to mp4. Avi files are not shown in the video input, but processing works fine

- [ ] ComfyUI integration

# 😎 Contributing

We welcome contributions to this project. When submitting pull requests, please provide a detailed description of the changes. See [CONTRIBUTING](CONTRIBUTING.md) for more information.

# 🙏 Appreciation

- [Wav2Lip](https://github.com/Rudrabha/Wav2Lip)

- [CodeFormer](https://github.com/sczhou/CodeFormer)

- [Coqui TTS](https://github.com/coqui-ai/TTS)

- [facefusion](https://github.com/facefusion/facefusion)

- [Vocal Remover](https://github.com/tsurumeso/vocal-remover)

# ☕ Support Wav2lip Studio

This project is an open-source effort that is free to use and modify. I rely on the support of users to keep this project going and help improve it. If you'd like to support me, you can make a donation on my Patreon page. Any contribution, large or small, is greatly appreciated!

Your support helps me cover the costs of development and maintenance, and allows me to allocate more time and resources to enhancing this project. Thank you for your support!

[patreon page](https://www.patreon.com/Wav2LipStudio)

# 📝 Citation

If you use this project in your own work, in articles, tutorials, or presentations, we encourage you to cite this project to acknowledge the efforts put into it.

To cite this project, please use the following BibTeX format:

```

@misc{wav2lip_uhq,

author = {numz},

title = {Wav2Lip UHQ},

year = {2023},

howpublished = {GitHub repository},

publisher = {numz},

url = {https://github.com/numz/sd-wav2lip-uhq}

}

```

# 📜 License

* The code in this repository is released under the MIT license as found in the [LICENSE file](LICENSE).

https://user-images.githubusercontent.com/800903/262435301-af205a91-30d7-43f2-afcc-05980d581fe0.mp4

## 💡 Description

This repository contains a Wav2Lip Studio Standalone Version.

It's an all-in-one solution: just choose a video and a speech file (wav or mp3), and the tools will generate a lip-sync video, faceswap, voice clone, and translate video with voice clone (HeyGen like).

It improves the quality of the lip-sync videos generated by the [Wav2Lip tool](https://github.com/Rudrabha/Wav2Lip) by applying specific post-processing techniques.

## 📖 Quick Index

* [🚀 Updates](#-updates)

* [🔗 Requirements](#-requirements)

* [💻 Installation](#-installation)

* [🐍 Tutorial](#-tutorial)

* [🐍 Usage](#-usage)

* [👄 Keyframes Manager](#-keyframes-manager)

* [👄 Input Video](#-input-video)

* [📺 Examples](#-examples)

* [📖 Behind the scenes](#-behind-the-scenes)

* [💪 Quality tips](#-quality-tips)

* [⚠️Noted Constraints](#-noted-constraints)

* [📝 To do](#-to-do)

* [😎 Contributing](#-contributing)

* [🙏 Appreciation](#-appreciation)

* [📝 Citation](#-citation)

* [📜 License](#-license)

* [☕ Support Wav2lip Studio](#-support-wav2lip-studio)

## 🚀 Updates

**2024.10.13 Add avatar for driving video**

- 💪 Add 10 new avatars for driving video, you can now choose an avatar before generate the driving video.

- 📺 Add a feature to close or not the mouth before generating lip sync video.

- 🐛 Easy docker installation, follow instructions bellow.

- ♻ Better macos integration, follow instructions bellow.

- 🚀 In Comfyui panel, you can now regenerate mask and keyframe after modification of your video, allow better mouth mask.

**2024.09.03 ComfyUI Integration in Lip Sync Studio**

- 💪Manage and chain your comfyui worklows from end to end.

**2024.08.07 Major Update (Standalone version only)**

- 📺"Add Driving video feature": this feature allows you to generate a driving video to generate better lip sync.

**2024.05.06 Major Update (Standalone version only)**

- 🐛"Data Structure": I had to restructure the files to allow for better quality in the video output. The previous version did everything in RAM at the expense of video quality; each pass degraded the videos, for example, if you did a face swap + Wav2Lip, there was a degradation of quality because of creating a first pass for Wav2Lip and a second for face swap. You will now find a "data" directory in each project containing all the files necessary for the tool's work and maintaining quality through different passes (quality above all).

- ♻"Wav2Lip Video Outputs": After generating Wav2Lip videos, the videos are numbered in the output directory. Clicking on "video quality" loads the last video of the specified quality.

- 👄"Zero Mouth": this feature should allow closing a person's mouth before proceeding with lip-syncing, sometimes it doesn't have much effect or can add some flickering to the image, but I have had good results in some cases. Technically, this will take two passes to close the mouth, you will find the frames used by the tool in "data\zero."

- 👬"Clone Voice": the interface has been revised.

- 💪"High Quality Vs Best Quality": In this version, there is not much difference between High and Best. Best is to be used with videos where faces are large on the screen like on a 4K video for example. The process behind just uses different GFPGAN models and a different face alignment.

- ▶ "Show Frame Number": In Low Quality only, the frame number appears in the top left corner. This helps to identify the frame where you want to make modifications.

- 📺"Show Wav2Lip Output": this feature allows you to see the Wav2Lip output taking into account the input audio.

- "New Face Alignment": The face alignment has been reviewed.

- 🔑"Zoom In, Zoom Out, Move Right,...": Now you will understand why sometimes the results are strange and generate deformed lips, broken teeth, or other very strange things.I recommend the video tutorial here: https://www.patreon.com/posts/key-feature-103716855

**2024.02.09 Spped Up Update (Standalone version only)**

- 👬 Clone voice: Add controls to manage the voice clone (See Usage section)

- 🎏 translate video: Add features to translate panel to manage translation (See Usage section)

- 📺 Add Trim feature: Add a feature to trim the video.

- 🔑 Automatic mask: Add a feature to automatically calculate the mask parameters (padding, dilate...). You can change parameters if needed.

- 🚀 Speed up processes : All processes are now faster, Analysis, Face Swap, Generation in High quality

- 💪 Less disk space used : Remove temporary files after generation and keep only necessary data, will greatly reduce disk space used.

**2024.01.20 Major Update (Standalone version only)**

- ♻ Manage project: Add a feature to manage multiple project

- 👪 Introduced multiple face swap: Can now Swap multiple face in one shot (See Usage section)

- ⛔ Visible face restriction: Can now make whole process even if no face detected on frame!

- 📺 Video Size: works with high resolution video input, (test with 1980x1080, should works with 4K but slow)

- 🔑 Keyframe manager: Add a keyframe manager for better control of the video generation

- 🍪 coqui TTS integration: Remove bark integration, use coqui TTS instead (See Usage section)

- 💬 Conversation: Add a conversation feature with multiple person (See Usage section)

- 🔈 Record your own voice: Add a feature to record your own voice (See Usage section)

- 👬 Clone voice: Add a feature to clone voice from video (See Usage section)

- 🎏 translate video: Add a feature to translate video with voice clone (See Usage section)

- 🔉 Volume amplifier for wav2lip: Add a feature to amplify the volume of the wav2lip output (See Usage section)

- 🕡 Add delay before sound speech start

- 🚀 Speed up process: Speed up the process

**2023.09.13**

- 👪 Introduced face swap: facefusion integration (See Usage section) **this feature is under experimental**.

**2023.08.22**

- 👄 Introduced [bark](https://github.com/suno-ai/bark/) (See Usage section), **this feature is under experimental**.

**2023.08.20**

- 🚢 Introduced the GFPGAN model as an option.

- ▶ Added the feature to resume generation.

- 📏 Optimized to release memory post-generation.

**2023.08.17**

- 🐛 Fixed purple lips bug

**2023.08.16**

- ⚡ Added Wav2lip and enhanced video output, with the option to download the one that's best for you, likely the "generated video".

- 🚢 Updated User Interface: Introduced control over CodeFormer Fidelity.

- 👄 Removed image as input, [SadTalker](https://github.com/OpenTalker/SadTalker) is better suited for this.

- 🐛 Fixed a bug regarding the discrepancy between input and output video that incorrectly positioned the mask.

- 💪 Refined the quality process for greater efficiency.

- 🚫 Interruption will now generate videos if the process creates frames

**2023.08.13**

- ⚡ Speed-up computation

- 🚢 Change User Interface : Add controls on hidden parameters

- 👄 Only Track mouth if needed

- 📰 Control debug

- 🐛 Fix resize factor bug

# 💻 Installation

## 🔗 Requirements (windows, linux, macos)

1. FFmpeg : download it from the [official FFmpeg site](https://ffmpeg.org/download.html). Follow the instructions appropriate for your operating system, note ffmpeg have to be accessible from the command line.

- Make sure ffmpeg is in your PATH environment variable. If not, add it to your PATH environment variable.

2. pyannote.audio:You need to agree to share your contact information to access pyannote models.

To do so, go to both link:

- [pyannote diarization-3.1 huggingface repository](https://huggingface.co/pyannote/speaker-diarization-3.1)

- [pyannote segmentation-3.0 huggingface repository](https://huggingface.co/pyannote/segmentation-3.0)

set each field and click "Agree and access repository"

2. Create an access token to Huggingface:

1. Connect with your account

2. go to [access tokens](https://huggingface.co/settings/token) in settings

3. create a new token in read mode

4. copy the token

5. paste it in the file api_keys.json

```json

{

"huggingface_token": "your token"

}

```

3. Install [python 3.10.11](https://www.python.org/downloads/release/python-31011/) (for mac users follow instructions bellow)

4. Install [git](https://git-scm.com/downloads)

5. Check ffmpeg, python, cuda and git installation

```bash

python --version

git --version

ffmpeg -version

nvcc --version (only if you have a Nvidia GPU and not MacOS)

```

Must return something like

```bash

Python 3.10.11

git version 2.35.1.windows.2

ffmpeg version N-110509-g722ff74055-20230506 Copyright (c) 2000-2023 the FFmpeg developers built with gcc 12.2.0 (crosstool-NG 1.25.0.152_89671bf) bla bla bla...

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2022 NVIDIA Corporation

Built on Wed_Sep_21_10:41:10_Pacific_Daylight_Time_2022

Cuda compilation tools, release 11.8, V11.8.89

Build cuda_11.8.r11.8/compiler.31833905_0

```

## Linux Users

1. make sure to have git-lfs installed

```bash

sudo apt-get install git-lfs

```

## Windows Users

1. Install [Cuda 11.8](https://developer.nvidia.com/cuda-11-8-0-download-archive) if not ever done.

2. Install [Visual Studio](https://visualstudio.microsoft.com/fr/downloads/). During the install, make sure to include the Python and C++ packages in visual studio installer.

3. if you have multiple Python version on your computer edit launch.py and change the following line:

```bash

REM set PYTHON="your python.exe path"

```

```bash

set PYTHON="your python.exe path"

```

4. double click on wav2lip-studio.bat, that will install the requirements and download the models

## MACOS Users

1. Install python 3.9

```

brew update

brew install python@3.9

brew install ffmpeg

brew install git-lfs

git-lfs install

xcode-select --install

```

2. unzip the wav2lip-studio.zip in a folder

```

unzip wav2lip-studio.zip

```

3. Install environnement and requirements

```

cd /YourWav2lipStudioFolder

/opt/homebrew/bin/python3.9 -m venv venv

./venv/bin/python3.9 -m pip install inaSpeechSegmenter

./venv/bin/python3.9 -m pip install tyro==0.8.5 pykalman==0.9.7

./venv/bin/python3.9 -m pip install transformers==4.33.2

./venv/bin/python3.9 -m pip install spacy==3.7.4

./venv/bin/python3.9 -m pip install TTS==0.21.2

./venv/bin/python3.9 -m pip install gradio==4.14.0 imutils==0.5.4 moviepy websocket-client requests_toolbelt filetype numpy opencv-python==4.8.0.76 scipy==1.11.2 requests==2.28.1 pillow==9.3.0 librosa==0.10.0 opencv-contrib-python==4.8.0.76 huggingface_hub==0.20.2 tqdm==4.66.1 cutlet==0.3.0 numba==0.57.1 imageio_ffmpeg==0.4.9 insightface==0.7.3 unidic==1.1.0 onnx==1.14.1 onnxruntime==1.16.0 psutil==5.9.5 lpips==0.1.4 GitPython==3.1.36 facexlib==0.3.0 gfpgan==1.3.8 gdown==4.7.1 pyannote.audio==3.1.1 openai-whisper==20231117 resampy==0.4.0 scenedetect==0.6.2 uvicorn==0.23.2 starlette==0.35.1 fastapi==0.109.0

./venv/bin/python3.9 -m pip install torch==2.1.2 torchvision==0.16.2 torchaudio==2.1.2

./venv/bin/python3.9 -m pip install numpy==1.24.4

```

3.1. for silicon, one more step is needed

```

./venv/bin/python3.9 -m pip uninstall torch torchvision torchaudio

./venv/bin/python3.9 -m pip install --pre torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/nightly/cpu

sed -i '' 's/from torchvision.transforms.functional_tensor import rgb_to_grayscale/from torchvision.transforms.functional import rgb_to_grayscale/' venv/lib/python3.9/site-packages/basicsr/data/degradations.py

```

4. Install models

```

git clone https://huggingface.co/numz/wav2lip_studio-0.2 models

git clone https://huggingface.co/KwaiVGI/LivePortrait models/pretrained_weights

```

5. Launch UI

```

mkdir projects

export PYTORCH_ENABLE_MPS_FALLBACK=1

./venv/bin/python3.9 wav2lip_studio.py

```

# Tutorial

- [FR version](https://youtu.be/43Q8YASkcUA)

- [EN Version](https://youtu.be/B84A5alpPDc)

# 🐍 Usage

##PARAMETERS

1. Enter project name and click enter.

2. Choose a video (avi or mp4 format). Note avi file will not appear in Video input but process will works.

3. Face Swap (take times so be patient):

- **Face Swap**: choose the image of the faces you want to swap with the face in the video (multiple faces are now available), left face is id 0.

4. **Resolution Divide Factor**: The resolution of the video will be divided by this factor. The higher the factor, the faster the process, but the lower the resolution of the output video.

5. **Min Face Width Detection**: The minimum width of the face to detect. Allow to ignore little face in the video.

6. **Align Faces**: allows for straightening the head before sending it for Wav2Lip processing.

7. **Keyframes On Speaker Change**: Allows you to generate a keyframe when the speaker changes. This allows you to better control the video generation.

8. **Keyframes On scene Change**: Allows you to generate a keyframe when the scene changes. This allows you to better control the video generation.

9. When parameters above are set click on **Generate Keyframes**, See [Keyframes manager](#keyframes-manager) section for more details.

10. Audio, 3 options:

1. Put audio file in the "Speech" input. or record one with the "Record" button.

2. Generate Audio with the text to speech [coqui TTS](https://github.com/coqui-ai/TTS) integration.

1. Choose the language

2. Choose the Voice

3. Write your speech in the text area "Prompt" in text format or json format:

1. Text format:

```bash

Hello, my name is John. I am 25 years old.

```

2. Json format (you can ask chat GPT to generate discussion for you):

```bash

[

{

"start": 0.0,

"end": 3.0,

"text": "Hello, my name is John. I am 25 years old.",

"speaker": "arnold"

},

{

"start": 3.0,

"end": 4.0,

"text": "Ho really ?",

"speaker": "female_01"

},

...

]

```

3. Input Video: Allow to use audio from the input video, voices cloning and translation. see [Input Video](#input-video) section for more details.

11. **Driving Video**: Choose an avatar to generate a driving video.

    - **Avatars**: Choose one of 10 avatars for the driving video; each one produces a different result in the lip-sync output video.

    - **Close Mouth**: Close the avatar's mouth before generating the driving video.

    - **Generate Driving Video**: Generate the driving video.

12. **Video Quality**:

    - **Low**: Original Wav2Lip quality; fast but not very good.

    - **Medium**: Better quality by applying post-processing to the mouth; slower.

    - **High**: Higher quality by applying post-processing and upscaling the mouth; slower still.

13. **Wav2lip Checkpoint**: Choose between 2 Wav2Lip models:

    - **Wav2lip**: Original Wav2Lip model; fast, but lower visual quality.

    - **Wav2lip GAN**: Wav2Lip model trained with an additional visual-quality discriminator (GAN); better visual quality, though lip sync can be slightly less accurate.

14. **Face Restoration Model**: Choose between 2 face restoration models:

    - **Code Former** (the value below is CodeFormer's fidelity weight):

        - A value of 0 offers higher quality but may significantly alter the person's facial appearance and cause noticeable flickering between frames.

        - A value of 1 provides lower quality but keeps the person's face more consistent and reduces frame flickering.

        - Values below 0.5 are not advised. Start with 0.75 and adjust from there to get the best results.

    - **GFPGAN**: Usually better quality.

15. **Volume Amplifier**: Amplifies the volume of the audio sent to Wav2Lip; the output audio is not amplified. This gives you better control over lip movement.
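Before pasting a JSON prompt (step 10 above), it can help to sanity-check it. The sketch below is a hypothetical helper, not part of Wav2Lip Studio: it assumes the Studio expects a JSON array of objects with `start`, `end`, `text` and `speaker` keys, ordered in time and non-overlapping. That ordering rule is an assumption drawn from the example, not a documented requirement.

```python
from __future__ import annotations

import json

# Keys used in the JSON prompt example above.
REQUIRED_KEYS = {"start", "end", "text", "speaker"}


def validate_speech_segments(raw: str) -> list[dict]:
    """Parse a JSON prompt and check every segment (hypothetical helper).

    Assumption: segments must be in chronological order and must not overlap.
    """
    segments = json.loads(raw)
    if not isinstance(segments, list):
        raise ValueError("prompt must be a JSON array of segments")
    previous_end = 0.0
    for i, seg in enumerate(segments):
        if not isinstance(seg, dict):
            raise ValueError(f"segment {i} is not a JSON object")
        missing = REQUIRED_KEYS - seg.keys()
        if missing:
            raise ValueError(f"segment {i} is missing keys: {sorted(missing)}")
        start, end = float(seg["start"]), float(seg["end"])
        if end <= start:
            raise ValueError(f"segment {i}: end ({end}) must be after start ({start})")
        if start < previous_end:
            raise ValueError(f"segment {i} overlaps the previous segment")
        previous_end = end
    return segments


prompt = """[
  {"start": 0.0, "end": 3.0, "text": "Hello, my name is John. I am 25 years old.", "speaker": "arnold"},
  {"start": 3.0, "end": 4.0, "text": "Oh really?", "speaker": "female_01"}
]"""
print(len(validate_speech_segments(prompt)))  # 2
```

A segment may start exactly where the previous one ends (3.0 here); only true overlaps are rejected.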

## Keyframes Manager

### Global parameters

1. **Only show Speaker Face**: Shows only the speaker's face; the other faces are hidden.

2. **Frame Number**: A slider that allows you to move between the frames of the video.

3. **Add Keyframe**: Allows you to add a keyframe at the current Frame Number.

4. **Remove Keyframe**: Allows you to remove a keyframe at the current Frame Number.

5. **Keyframes**: A list of all the keyframes.

### For each face in a keyframe

1. **Face Id**: List of all the faces in the current keyframe.

2. **translation info**: If a translation is associated with the project, it is shown here along with its speakers, which helps you select the right speaker for this keyframe.

3. **Speaker**: Checkbox to set the current Face Id as the speaker of the current keyframe.

4. **Face Swap Id**: Checkbox to set the face swap id of the current keyframe on the current Face Id.

5. **Automatic Mask**: Enabled by default; when disabled, you can draw the mask manually.

6. **Mouth Mask Dilate**: Dilates the mouth mask to cover more of the area around the mouth. The right value depends on the mouth size.

7. **Face Mask Erode**: Erodes the face mask to remove some of the area around the face. The right value depends on the face size.

8. **Mask Blur**: Blurs the mask to smooth its edges. Try to keep it less than or equal to **Mouth Mask Dilate**.

9. **Padding sliders**: Add padding around the head to avoid cutting it off in the video.

A keyframe's configuration applies until the next keyframe, so intermediate frames are generated with the same configuration.

Note that this configuration is not shown in the UI for intermediate frames.
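The "applies until the next keyframe" rule amounts to a lookup of the last keyframe at or before a given frame. The sketch below illustrates that rule with a binary search; the function name and the setting key `mouth_mask_dilate` are hypothetical and do not reflect the Studio's internal data structures.

```python
from __future__ import annotations

from bisect import bisect_right


def config_for_frame(keyframes: dict[int, dict], frame: int) -> dict:
    """Return the settings of the last keyframe at or before `frame` (hypothetical helper)."""
    starts = sorted(keyframes)               # keyframe frame numbers in order
    idx = bisect_right(starts, frame) - 1    # index of the last start <= frame
    if idx < 0:
        raise ValueError(f"no keyframe at or before frame {frame}")
    return keyframes[starts[idx]]


# Hypothetical per-keyframe settings.
keyframes = {
    0: {"mouth_mask_dilate": 15},
    120: {"mouth_mask_dilate": 25},
}
print(config_for_frame(keyframes, 60))   # {'mouth_mask_dilate': 15}
print(config_for_frame(keyframes, 500))  # {'mouth_mask_dilate': 25}
```

Frame 60 inherits the settings of keyframe 0, and every frame from 120 onward uses keyframe 120, which is why intermediate frames show no configuration of their own in the UI.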

## Input Video

If there is no translated audio, the audio from the input video is used. This can be useful if the input video has poor lip sync.

### Clone Voices

1. **Number Of Speakers**: The number of speakers in the video. This tells the cloning step how many voices to clone.

2. **Remove Background Sound Before Clone**: Removes background noise and music before cloning.

3. **Clone Voices**: Clones the voices from the input video.

4. **Voices**: List of the cloned voices. You can rename the voices to identify them during translation.

    For each voice you can:

    - **Play**: Listen to the voice.

    - **regen sentence**: Regenerate the sentence sample.

    - **save voice**: Save the voice to your voices library.

5. **Voices Files**: List of the voice files used by the models to create the cloned voices. You can modify these files to change the cloned voices. Make sure each file contains only one voice, with no background sound or music.

    You can listen to a voice file by clicking the play button, and change its speaker name to identify the voice.

### Translation

The Translation panel is linked to the Clone Voices panel because translation tries to identify the speaker in order to translate with the right voice.

1. **Language**: Target language for translating the input video.

2. **Whisper Model**: The Whisper model used for the translation. Choose between 5 models: larger models give better quality but are slower.

3. **Translate**: Translate the input video to the selected language.

4. **Translation**: The translated text.

5. **Translated Audio**: The translated audio.

6. **Convert To Audio**: Convert the translated text to translated audio.

For each segment of the translated text, you can:

- Modify the translated text.

- Modify the start and end times of the segment.

- Change the speaker of the segment.

- Listen to the original audio by clicking the play button.

- Listen to the translated audio by clicking the red ideogram button.

- Generate the translation for this segment by clicking the recycle button.

- Delete the segment by clicking the trash button.

- Add a new segment below this one by clicking the down-arrow button.

# 📺 Examples

https://user-images.githubusercontent.com/800903/262439441-bb9d888a-d33e-4246-9f0a-1ddeac062d35.mp4

https://user-images.githubusercontent.com/800903/262442794-61b1e32f-3f87-4b36-98d6-f711822bdb1e.mp4

https://user-images.githubusercontent.com/800903/262449305-901086a3-22cb-42d2-b5be-a5f38db4549a.mp4

https://user-images.githubusercontent.com/800903/267808494-300f8cc3-9136-4810-86e2-92f2114a5f9a.mp4

# 📖 Behind the scenes

The tool works in several stages to improve the quality of Wav2Lip-generated videos:

1. **Generate face swap video**: If an image is provided in the "Face Swap" field, the script first generates the face swap video. This takes time, so be patient.

2. **Generate a Wav2lip video**: The script then generates a low-quality Wav2Lip video from the input video and audio.

3. **Video Quality Enhancement**: A high-quality video is created from the low-quality one using the enhancer selected by the user.

4. **Mask Creation**: The script creates a mask around the mouth and tries to keep other facial motions like those of the cheeks and chin.

5. **Video Generation**: The script then takes the high-quality mouth image and overlays it onto the original image guided by the mouth mask.
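Steps 4 and 5 amount to building a soft mouth mask and alpha-blending the enhanced mouth over the original frame. The pure-Python sketch below shows that idea on tiny grayscale frames (2D lists of floats): dilate a binary mouth mask, blur it, then blend. It is an illustration of the general technique, not the Studio's implementation, and treating **Mouth Mask Dilate** and **Mask Blur** as pixel radii is an assumption.

```python
def dilate(mask, radius):
    """Binary dilation with a (2*radius+1) square kernel, clipped at the borders."""
    h, w = len(mask), len(mask[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            if any(mask[yy][xx]
                   for yy in range(max(0, y - radius), min(h, y + radius + 1))
                   for xx in range(max(0, x - radius), min(w, x + radius + 1))):
                out[y][x] = 1.0
    return out


def box_blur(mask, radius):
    """Average each pixel over a (2*radius+1) square window, clipped at the borders."""
    h, w = len(mask), len(mask[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            vals = [mask[yy][xx]
                    for yy in range(max(0, y - radius), min(h, y + radius + 1))
                    for xx in range(max(0, x - radius), min(w, x + radius + 1))]
            out[y][x] = sum(vals) / len(vals)
    return out


def composite(original, enhanced, mask):
    """Per-pixel blend: mask 1.0 takes the enhanced mouth, 0.0 keeps the original."""
    return [[m * e + (1.0 - m) * o for o, e, m in zip(ro, re, rm)]
            for ro, re, rm in zip(original, enhanced, mask)]


original = [[0.0] * 5 for _ in range(5)]
enhanced = [[100.0] * 5 for _ in range(5)]
mouth = [[0] * 5 for _ in range(5)]
mouth[2][2] = 1  # a one-pixel "mouth" in the middle

mask = box_blur(dilate(mouth, radius=1), radius=1)
frame = composite(original, enhanced, mask)
print(frame[2][2], frame[0][0])  # 100.0 25.0
```

The center is fully replaced while the corner gets a partial blend. This also shows why a blur that is large relative to the dilation lets the original mouth show through: the mask stops reaching 1.0 over the mouth area.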

# 💪 Quality tips

- Use a high-quality video as input.

- Use a video with a consistent frame rate. Occasionally, videos may have unusual playback frame rates (not the standard 24, 25, 30 or 60), which can cause issues with the face mask.

- Use a high-quality audio file as input, without background noise or music. You can clean audio with a tool like [https://podcast.adobe.com/enhance](https://podcast.adobe.com/enhance).

- Dilate the mouth mask. This helps the model retain some facial motion and hide the original mouth.

- Keep Mask Blur at most twice the value of Mouth Mask Dilate. If you want more blur, increase Mouth Mask Dilate as well; otherwise the mouth will be blurred and the underlying original mouth may become visible.

- Upscaling can improve results, particularly around the mouth, but it increases processing time. Use this tutorial from Olivio Sarikas to upscale your video: [https://www.youtube.com/watch?v=3z4MKUqFEUk](https://www.youtube.com/watch?v=3z4MKUqFEUk). Set the denoising strength between 0.0 and 0.05, select the 'revAnimated' model, and use batch mode. I'll create a tutorial for this soon.
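To follow the frame-rate tip, you can inspect a video's frame rate before processing it. The sketch below asks `ffprobe` (part of FFmpeg, assumed to be installed and on your PATH) for the first video stream's `r_frame_rate`, then compares it with the standard rates listed above. The 0.2% tolerance, which lets NTSC-style rates such as 30000/1001 (29.97 fps) count as 30, is an assumption rather than a Studio rule.

```python
import math
import subprocess
from fractions import Fraction

STANDARD_RATES = (24, 25, 30, 60)  # the rates named in the tip above


def probe_frame_rate(path: str) -> str:
    """Return the first video stream's r_frame_rate as reported by ffprobe, e.g. '30000/1001'."""
    result = subprocess.run(
        ["ffprobe", "-v", "error", "-select_streams", "v:0",
         "-show_entries", "stream=r_frame_rate",
         "-of", "default=noprint_wrappers=1:nokey=1", path],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


def is_standard_frame_rate(rate: str, rel_tol: float = 0.002) -> bool:
    """True if a fraction string like '25/1' is close to one of STANDARD_RATES."""
    try:
        fps = float(Fraction(rate))
    except (ValueError, ZeroDivisionError):  # e.g. '0/0' or malformed output
        return False
    return any(math.isclose(fps, std, rel_tol=rel_tol) for std in STANDARD_RATES)


print(is_standard_frame_rate("30000/1001"))  # True (29.97 fps)
print(is_standard_frame_rate("15/1"))        # False
# With a real file: is_standard_frame_rate(probe_frame_rate("input.mp4"))
```

If the check fails, re-encoding the video to a standard frame rate before loading it into the Studio is one way to follow the tip.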

# ⚠ Noted Constraints

- To speed up processing, try to keep the resolution under 1000x1000 px and upscale after processing.

- If the initial phase is excessively lengthy, consider using the "resize factor" to decrease the video's dimensions.

- While there's no strict size limit for videos, larger videos will require more processing time. It's advisable to employ the "resize factor" to minimize the video size and then upscale the video once processing is complete.
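To pick a "resize factor" (the **Resolution Divide Factor** setting) that brings a video under the suggested 1000x1000 px, you can compute the smallest divisor that makes the longer side fit. This sketch assumes the factor is a whole number and that both dimensions are divided by it and rounded down; the Studio's exact rounding may differ, and the function names are hypothetical.

```python
import math


def smallest_divide_factor(width: int, height: int, max_side: int = 1000) -> int:
    """Smallest whole factor that brings both dimensions to at most max_side."""
    return max(1, math.ceil(max(width, height) / max_side))


def divided_resolution(width: int, height: int, factor: int) -> tuple:
    """Resolution after dividing by `factor` (assumes rounding down)."""
    return width // factor, height // factor


print(smallest_divide_factor(1920, 1080))  # 2
print(divided_resolution(1920, 1080, 2))   # (960, 540)
print(smallest_divide_factor(3840, 2160))  # 4
```

For a 4K source, a factor of 3 would still leave a 1280 px wide frame, so 4 is the smallest factor that meets the limit.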

# Known issues

If you have trouble installing insightface, follow these steps:

- Download the [precompiled insightface wheel](https://github.com/Gourieff/Assets/raw/main/Insightface/insightface-0.7.3-cp310-cp310-win_amd64.whl) (Windows, Python 3.10) and place it in the root folder of Wav2lip-studio.

- In a terminal, go to the wav2lip-studio folder and run the following commands:

```
.\venv\Scripts\activate
python -m pip install -U pip
python -m pip install insightface-0.7.3-cp310-cp310-win_amd64.whl
```

Enjoy

# 📝 To do

- ✔️ Standalone version

- ✔️ Add a way to use a face swap image

- ✔️ Add Possibility to use a video for audio input

- ✔️ Convert avi to mp4. AVI is not shown in the video input, but processing works fine

- [ ] ComfyUI integration

# 😎 Contributing

We welcome contributions to this project. When submitting pull requests, please provide a detailed description of the changes. See [CONTRIBUTING](CONTRIBUTING.md) for more information.

# 🙏 Appreciation

- [Wav2Lip](https://github.com/Rudrabha/Wav2Lip)

- [CodeFormer](https://github.com/sczhou/CodeFormer)

- [Coqui TTS](https://github.com/coqui-ai/TTS)

- [facefusion](https://github.com/facefusion/facefusion)

- [Vocal Remover](https://github.com/tsurumeso/vocal-remover)

# ☕ Support Wav2lip Studio

This project is an open-source effort that is free to use and modify. I rely on the support of users to keep this project going and help improve it. If you'd like to support me, you can make a donation on my Patreon page. Any contribution, large or small, is greatly appreciated!

Your support helps me cover the costs of development and maintenance, and allows me to allocate more time and resources to enhancing this project. Thank you for your support!

[patreon page](https://www.patreon.com/Wav2LipStudio)

# 📝 Citation

If you use this project in your own work, in articles, tutorials, or presentations, we encourage you to cite this project to acknowledge the efforts put into it.

To cite this project, please use the following BibTeX format:

```bibtex
@misc{wav2lip_uhq,
  author = {numz},
  title = {Wav2Lip UHQ},
  year = {2023},
  howpublished = {GitHub repository},
  publisher = {numz},
  url = {https://github.com/numz/sd-wav2lip-uhq}
}
```

# 📜 License

* The code in this repository is released under the MIT license as found in the [LICENSE file](LICENSE).
