
🔍 Today's pick in Interpretability & Analysis of LMs:

LM Transparency Tool: Interactive Tool for Analyzing Transformer Language Models (2404.07004) by @igortufanov, @mahnerak, @javifer, @lena-voita

The LM Transparency Tool is an open-source toolkit and visual interface for efficiently identifying the component circuits in LMs that are responsible for their predictions, using the Information Flow Routes approach (Information Flow Routes: Automatically Interpreting Language Models at Scale (2403.00824)).

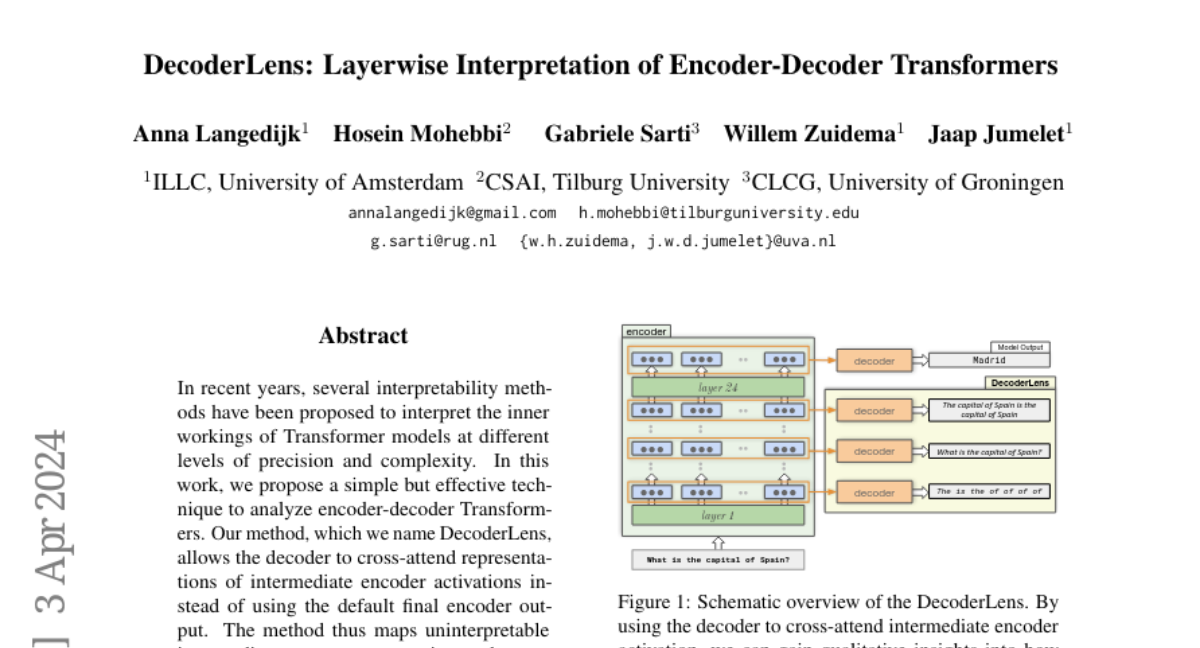

The tool supports fine-grained inspection, highlighting the importance of individual attention heads and FFN neurons. It also provides vocabulary projections computed with the logit lens approach, which let you examine the intermediate predictions of the residual stream and the tokens promoted by specific component updates.
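
To make the logit lens idea concrete, here is a minimal sketch in NumPy. It is not the tool's code: the toy vocabulary, the random orthonormal unembedding matrix, and the helper names `logit_lens` and `promoted_tokens` are all assumptions made for illustration, and the final normalization layer that real models apply before unembedding is skipped for brevity.

```python
import numpy as np


def logit_lens(hidden, unembed, vocab, k=3):
    """Project a residual-stream vector onto the vocabulary and return the top-k tokens."""
    logits = hidden @ unembed                # shape: (vocab_size,)
    top = np.argsort(logits)[::-1][:k]       # indices of the k largest logits
    return [vocab[i] for i in top]


def promoted_tokens(update, unembed, vocab, k=3):
    """Return the tokens whose logits a single component update raises the most."""
    delta = update @ unembed                 # logit change caused by this update alone
    top = np.argsort(delta)[::-1][:k]
    return [vocab[i] for i in top]


# Toy setup (hypothetical): 5-token vocabulary, 8-dimensional residual stream.
rng = np.random.default_rng(0)
vocab = ["Paris", "London", "Rome", "cat", "dog"]
d_model = 8

# Orthonormal unembedding columns keep the toy example easy to read.
unembed, _ = np.linalg.qr(rng.normal(size=(d_model, len(vocab))))

# An intermediate residual state that leans towards "London", then "Rome".
residual = 3.0 * unembed[:, 1] + 1.0 * unembed[:, 2]

# A component update (say, one attention head's output) that writes along "Paris".
update = 5.0 * unembed[:, 0]

print(logit_lens(residual, unembed, vocab, k=2))           # ['London', 'Rome']
print(promoted_tokens(update, unembed, vocab, k=1))        # ['Paris']
print(logit_lens(residual + update, unembed, vocab, k=3))  # ['Paris', 'London', 'Rome']
```

Projecting the update on its own, rather than the full residual state, is what separates "what the model currently predicts" from "what this component is pushing towards".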

💻 Code: https://github.com/facebookresearch/llm-transparency-tool

🚀 Demo: facebook/llm-transparency-tool-demo

🔍 All daily picks: https://huggingface.co/collections/gsarti/daily-picks-in-interpretability-and-analysis-ofc-lms-65ae3339949c5675d25de2f9
