---

title: Dreambooth Submission

library_name: keras

pipeline_tag: text-to-image

tags:

- keras-dreambooth

- wild-card

---

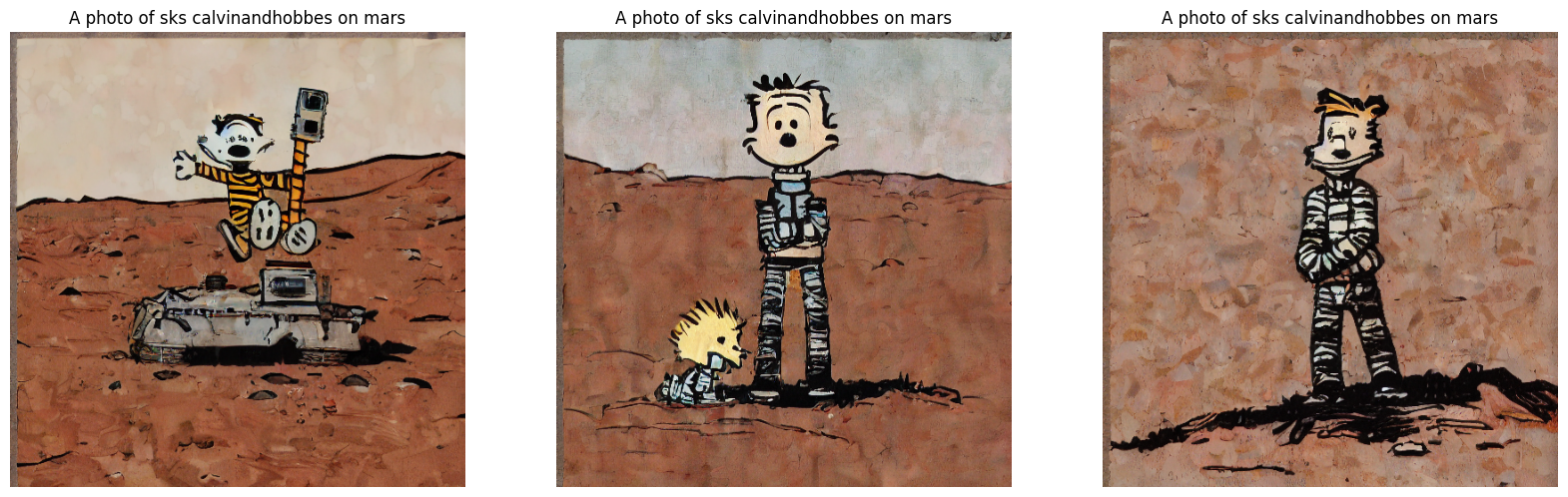

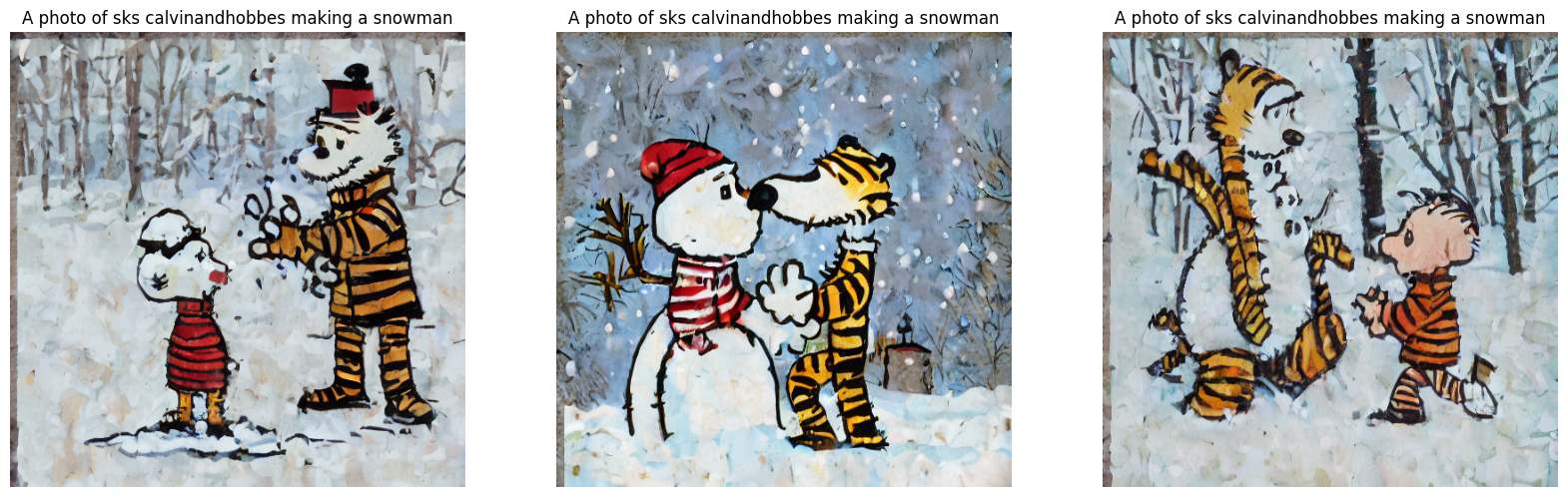

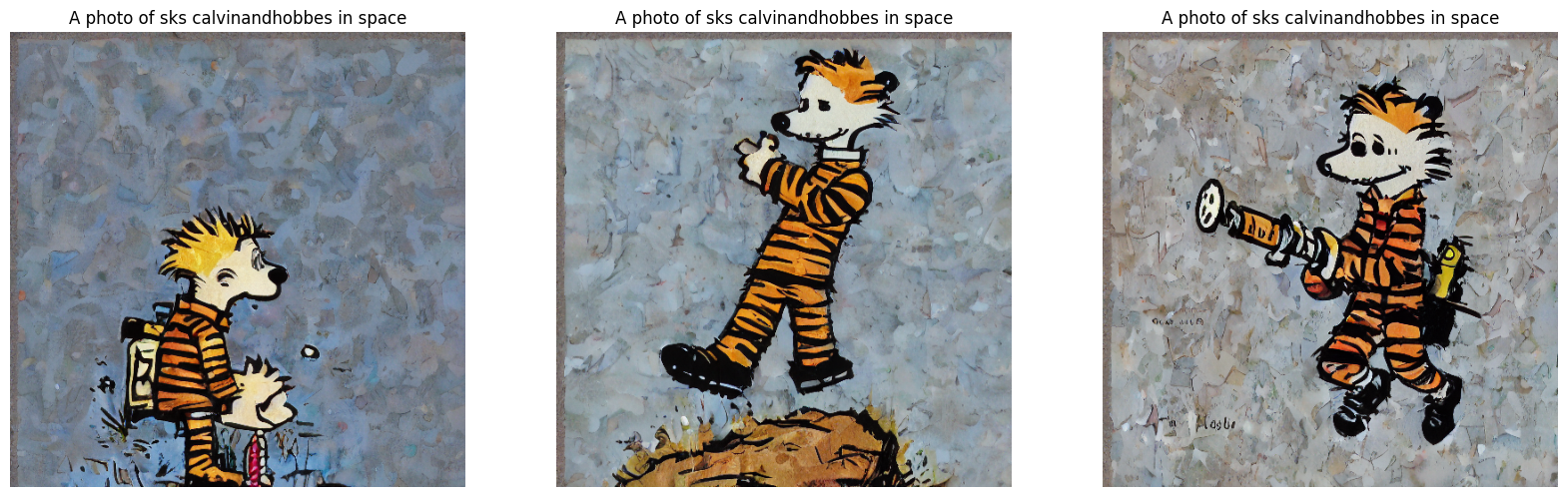

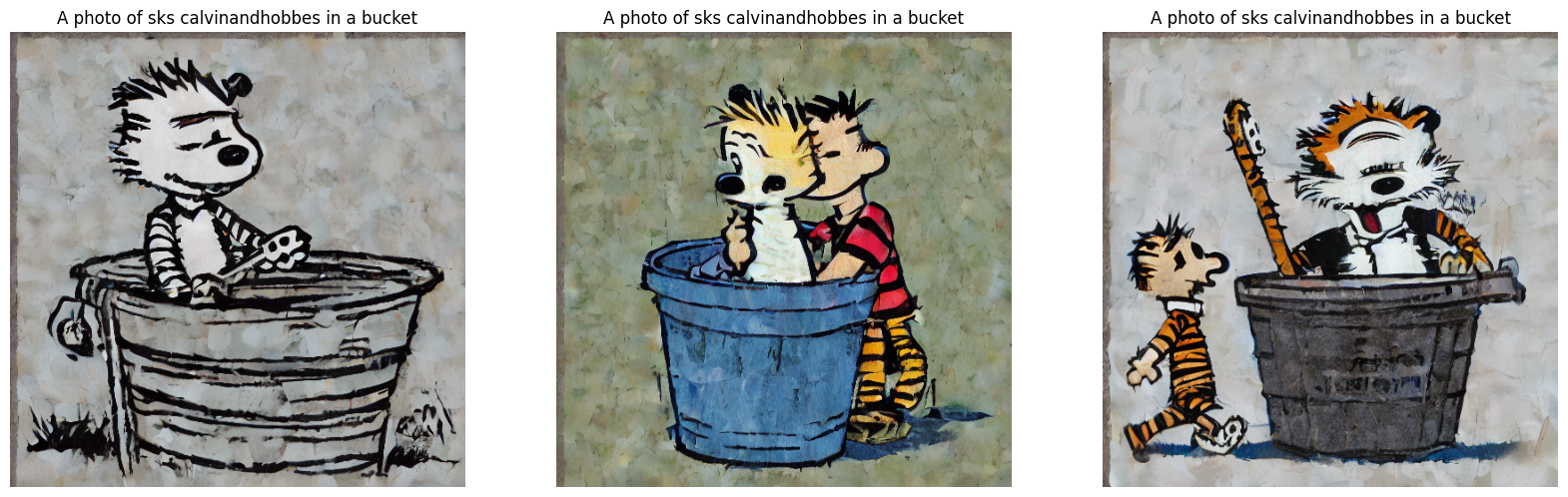

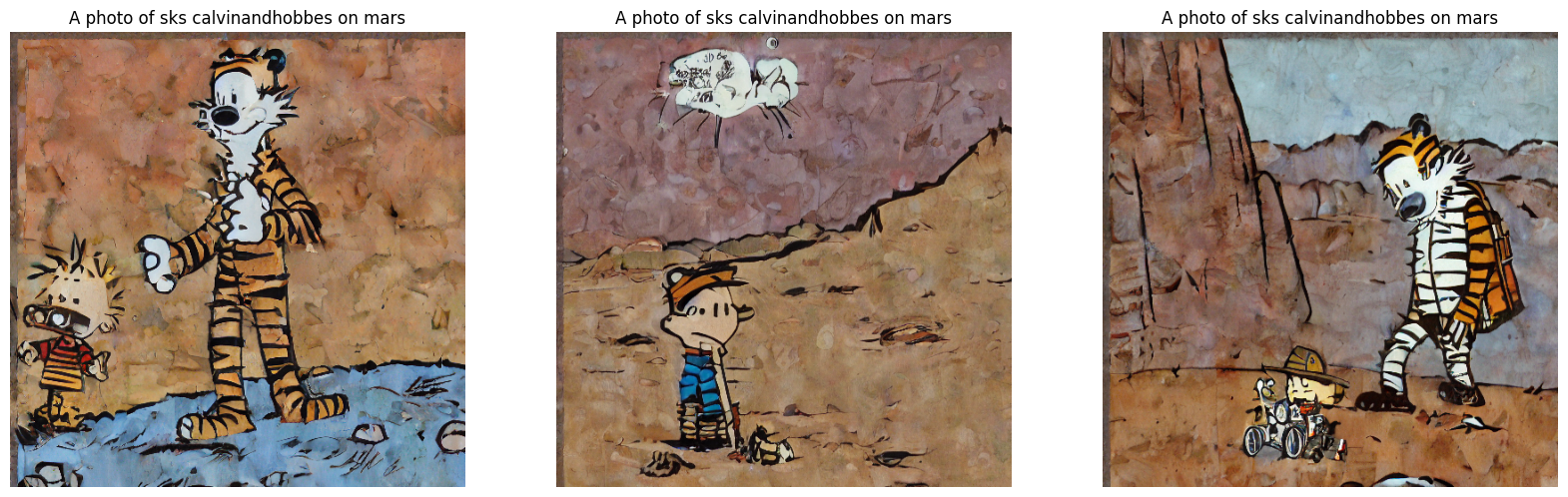

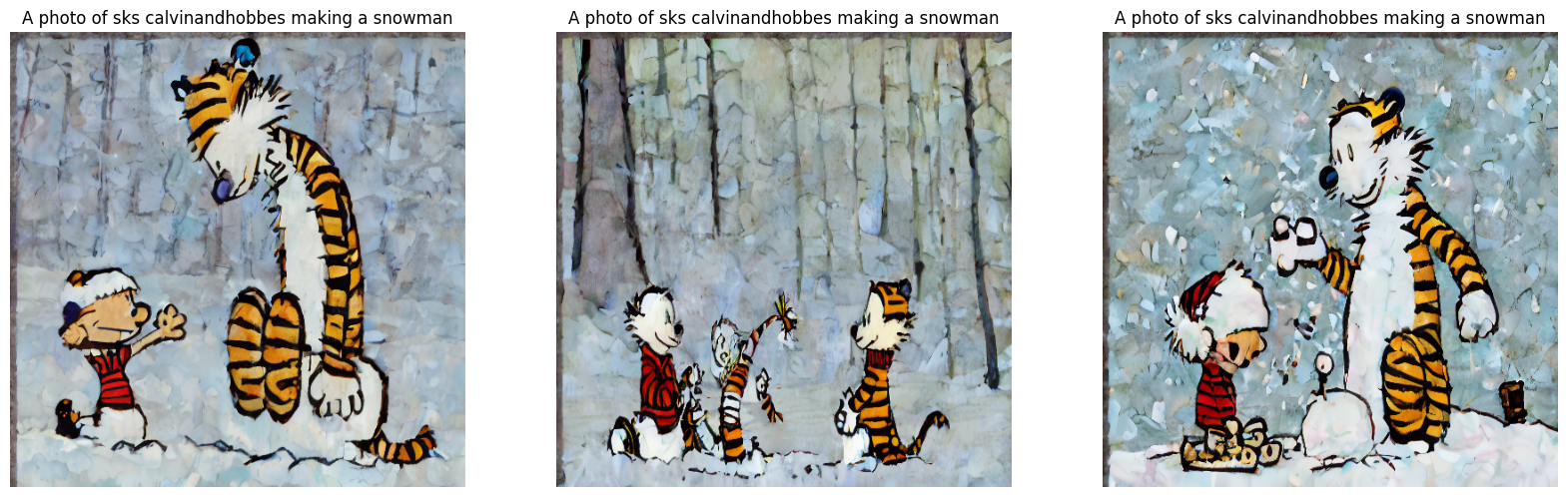

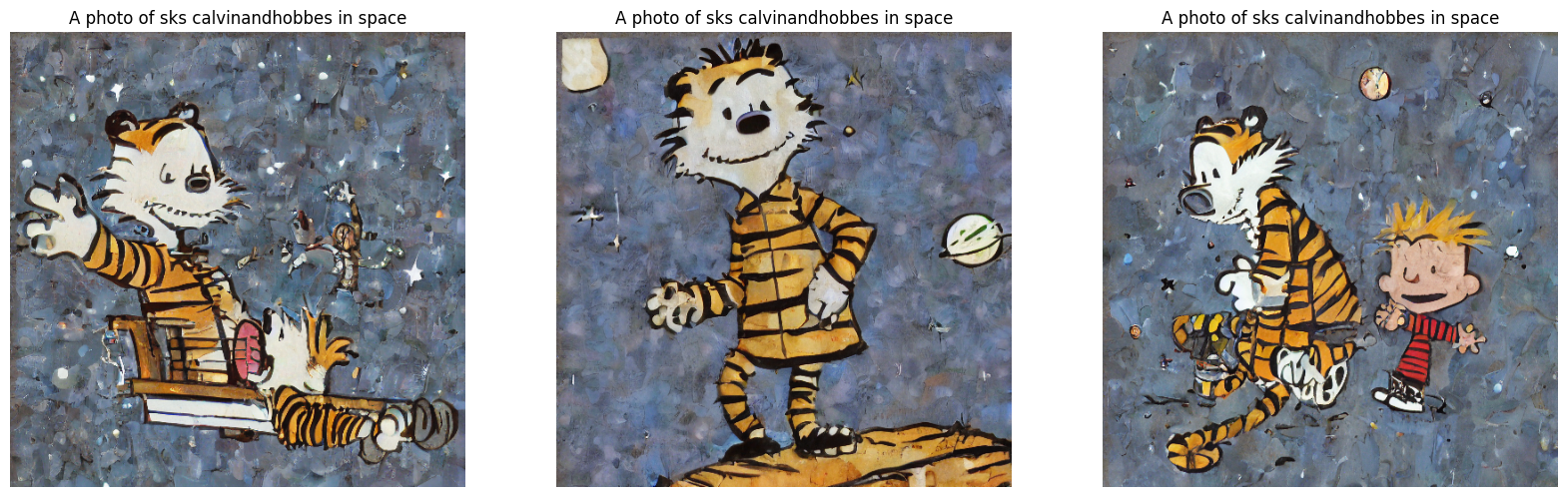

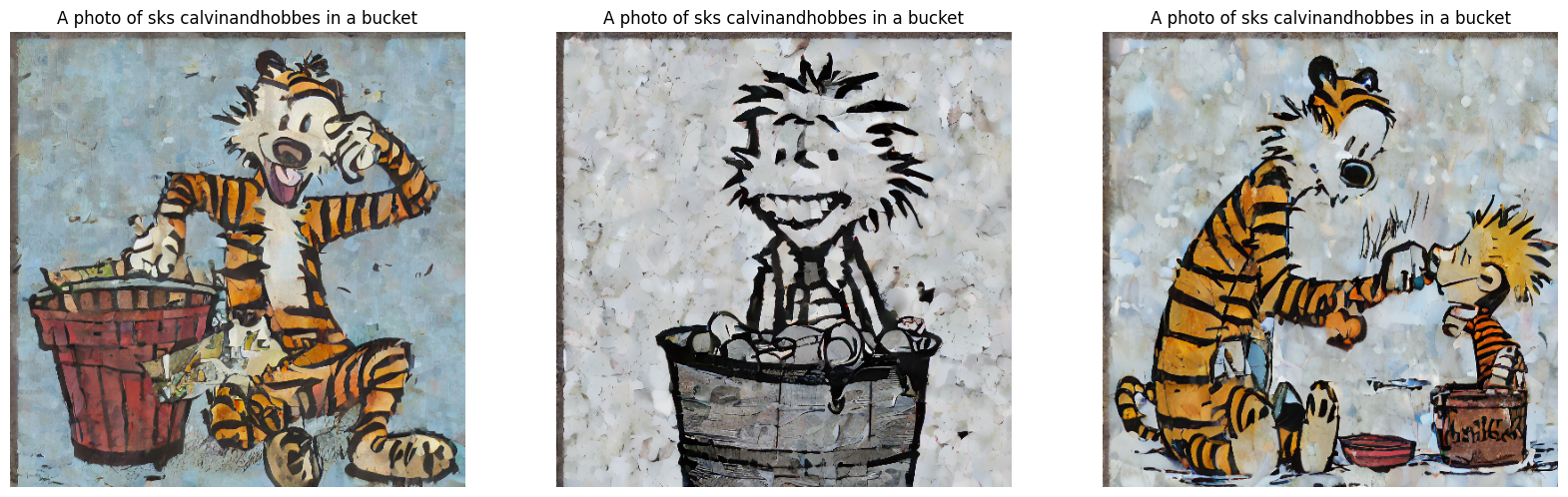

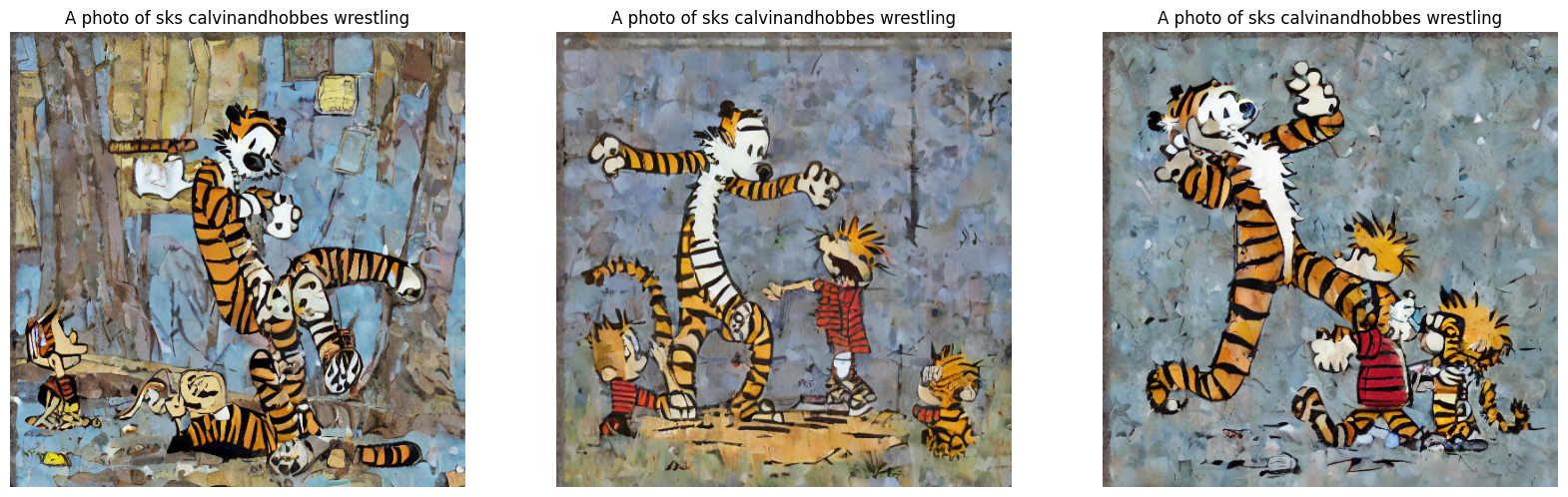

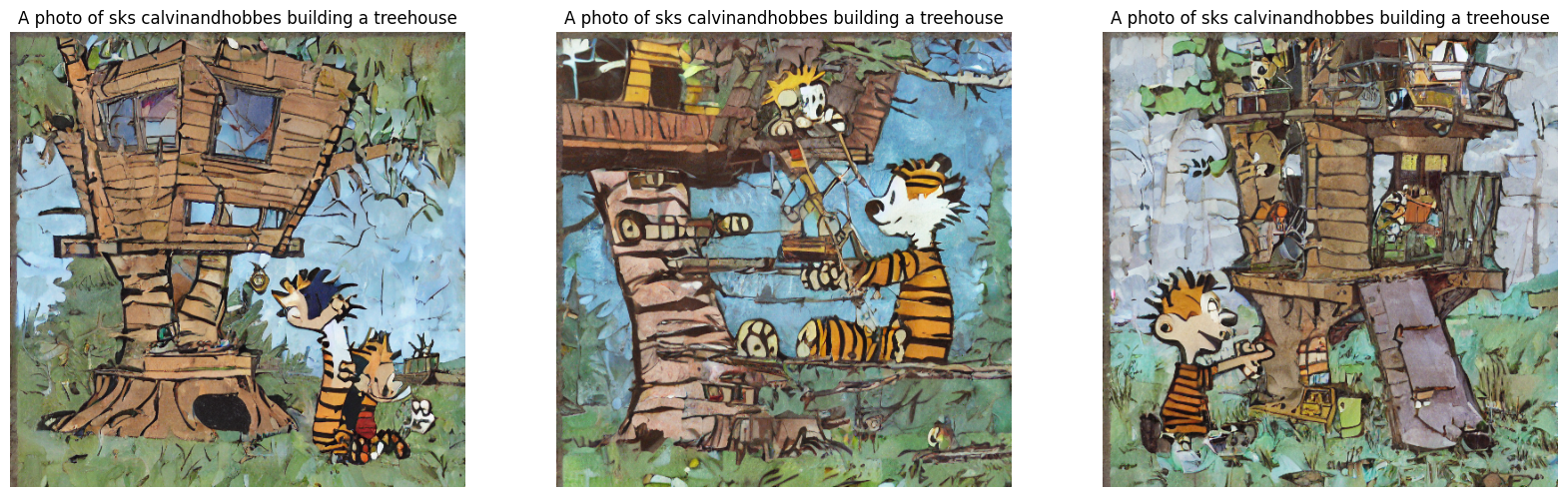

## Model description

This is a Stable Diffusion model fine-tuned using Dreambooth on Calvin and Hobbes images 🐯. Part of the [Keras Dreambooth Event](https://huggingface.co/keras-dreambooth). An improvement on [Original Dreambooth - CalHobbes](https://huggingface.co/drmeeseeks/dreambooth_diffusion_model) using 50 images and 11 training epochs (V2) versus V1 (10 images and 3 training epochs).

## Intended uses & limitations

- For experimentation and curiosity.

## Training and evaluation data

- This model was fine-tuned on images of Calvin and Hobbes.

## Training procedure

Starting from the provided [Keras Dreambooth Sprity - HuggingFace - GITHUB](https://github.com/huggingface/community-events/tree/main/keras-dreambooth-sprint), the provided IPYNB was modified to accomidate user images and optimze for cost. The entire training process was done using Colab. Data preperation can complete using the free tier, but you will need a premium GPU (A100 - 40G) to train. Generate the images for `class-images` using free tier Colab, this will take 2-3 hours for 300 images. Once complete, you have 2 options, create the folder`/root/.keras/datasets/class-images` and copy the images to that directory, _or_ create a tar.gz file and download the files for backup using the snippit below. Downloading the file will save you the compute of having to redo it again as it can simply be uploaded into the `contents` folder at a later time. You can collect the images for `my_images` into a folder in either PNG or JPG format, and upload the TAR.GZ version of the folder containing the images.

```python

import glob

import tarfile

output_filename = "class-images.tar.gz"

with tarfile.open(output_filename, "w:gz") as tar:

for file in glob.glob('class-images/*'):

tar.write(file)

```

To use `tf.keras.util.get_file` with a tar.gz file located on your running VM you will need `instance_images_root = tf.keras.utils.get_file(origin="file:///LOCATION_TO_TARGZ_FILE/my_images.tar.gz",untar=True)` [get_util - Tensorflow Docs](https://www.tensorflow.org/api_docs/python/tf/keras/utils/get_file). The command, in my case, places the files in `'/root/.keras/datasets/my_images'`. This will not work. The following work around resolved the issue:

```bash

# From within the Colab Notebook

!mkdir /root/.keras/datasets/class-images

!mkdir /root/.keras/datasets/my_images

!cp /root/.keras/datasets/my_images.tar.gz /root/.keras/datasets/my_images/

!cp /root/.keras/datasets/class-images.tar.gz /root/.keras/datasets/class-images/

!tar -xvzf /root/.keras/datasets/class-images/class-images.tar.gz -C /root/.keras/datasets/class-images

!tar -xvzf /root/.keras/datasets/my_images/my_images.tar.gz -C /root/.keras/datasets/my_images

```

Having more `my_images` will improve the results. Current results used 10, but 20-30 is recommended based on [Implementation of DreamBooth using KerasCV and TensorFlow - Notes on preparing data for DreamBooth training of faces](https://github.com/sayakpaul/dreambooth-keras#notes-on-preparing-data-for-dreambooth-training-of-faces).

If you decide to train using [Lambda GPU - Dreambooth - GIT](https://github.com/huggingface/community-events/blob/main/keras-dreambooth-sprint/compute-with-lambda.md) be sure the `python -m pip install tensorflow==2.11` instead of `python -m pip install tensorflow` as 2.12 will be downloaded by default and there will be a CUDA version mismatch, causing computation to occur on the CPU instead of the GPU. A second note is setting `export XLA_FLAGS=--xla_gpu_cuda_data_dir=/usr/lib/cuda` and `export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$CONDA_PREFIX/lib/` from a terminal will not transfer to a notebook. Be sure to set the environment variables in the jupyter notbook to enable the proper CUDA libraries to be used otherwise the GPU will not be accessable. `%env MY_ENV_VAR=value` can help and must be the first code block of the notebook.

## Training results

View Inference Images - V1

View Inference Images - V2

### Training hyperparameters

The following hyperparameters were used during training:

| Hyperparameters | Value |

| :-- | :-- |

| inner_optimizer.class_name | Custom>RMSprop |

| inner_optimizer.config.name | RMSprop |

| inner_optimizer.config.weight_decay | None |

| inner_optimizer.config.clipnorm | None |

| inner_optimizer.config.global_clipnorm | None |

| inner_optimizer.config.clipvalue | None |

| inner_optimizer.config.use_ema | False |

| inner_optimizer.config.ema_momentum | 0.99 |

| inner_optimizer.config.ema_overwrite_frequency | 100 |

| inner_optimizer.config.jit_compile | True |

| inner_optimizer.config.is_legacy_optimizer | False |

| inner_optimizer.config.learning_rate | 0.0010000000474974513 |

| inner_optimizer.config.rho | 0.9 |

| inner_optimizer.config.momentum | 0.0 |

| inner_optimizer.config.epsilon | 1e-07 |

| inner_optimizer.config.centered | False |

| dynamic | True |

| initial_scale | 32768.0 |

| dynamic_growth_steps | 2000 |

| training_precision | mixed_float16 |

### Recommendations

- Access to A100 GPUs can be a challenege. A variety of providers, at the time of this writing (April 2023), either do not have additional resources or may be excessivly expensive. Spot instances, or breaking the data prep and training onto different types of machines can alleviate this issue.

### Environmental Impact

Carbon emissions were estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700). In total roughly 10 hours were used primarily in US Central, with AWS as the reference. Additional resources are available at [Our World in Data - CO2 Emissions](https://ourworldindata.org/co2-emissions)

- __Hardware Type__: Intel Xeon (12 core VM), 85GB RAM, 80GB Disk, with NVIDIA A100-SXM 40GB

- __Hours Used__: 10 hrs

- __Cloud Provider__: Colab Free + Colab Premium

- __Compute Region__: US Central

- __Carbon Emitted__: 1.42 kg (GPU) + 0.59 kg (CPU) = 2 kg (the weight of 2 liters of water) - 2 kg offset = 0

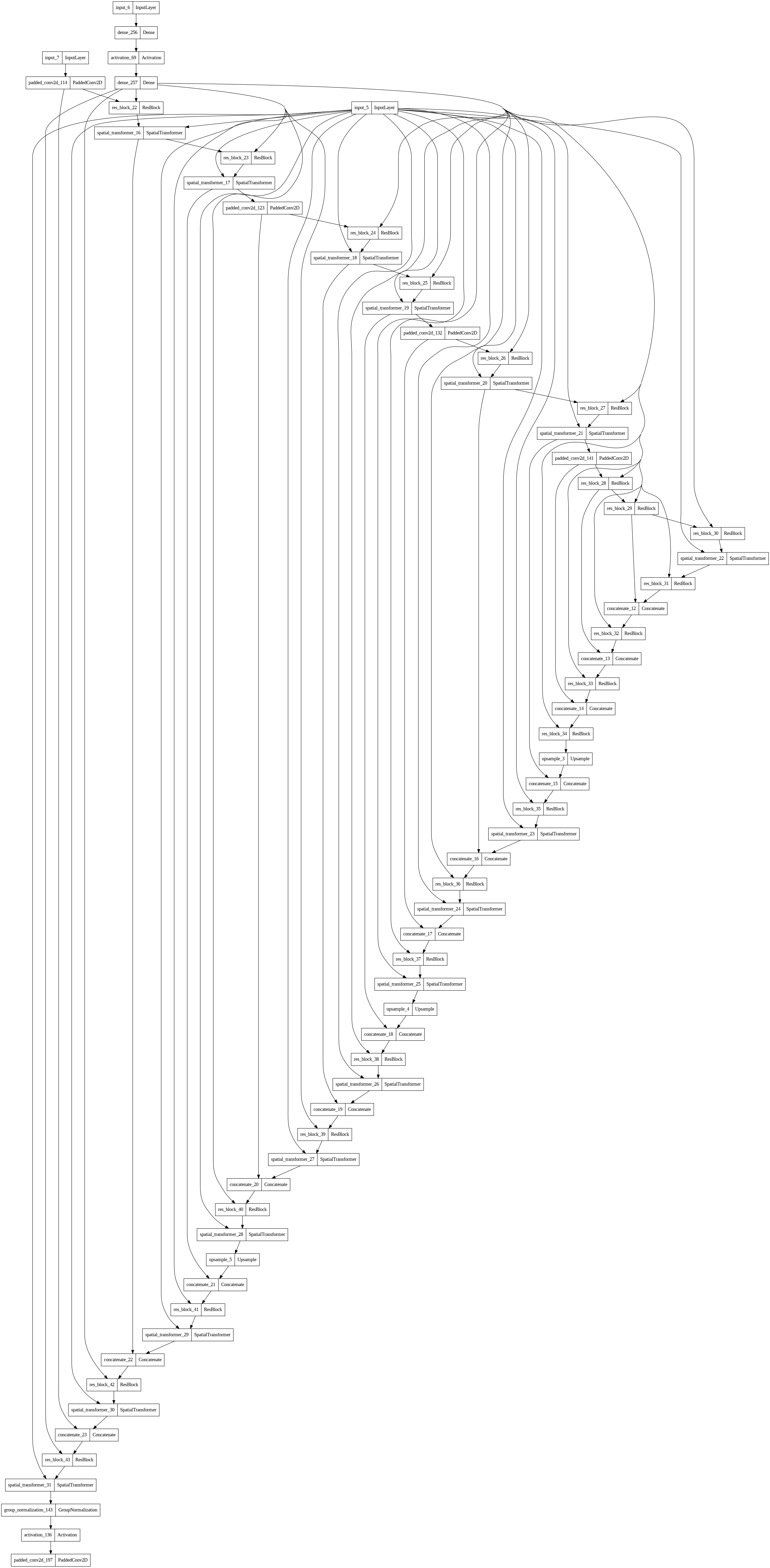

## Model Plot

View Model Plot

### Citation

- [Diffusers - HuggingFace - GITHUB](https://github.com/huggingface/diffusers)

- [Model Card - HuggingFace Hub - GITHUB](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/modelcard_template.md)

```bibtex

@online{ruizDreamBoothFineTuning2023,

title = {{{DreamBooth}}: {{Fine Tuning Text-to-Image Diffusion Models}} for {{Subject-Driven Generation}}},

shorttitle = {{{DreamBooth}}},

author = {Ruiz, Nataniel and Li, Yuanzhen and Jampani, Varun and Pritch, Yael and Rubinstein, Michael and Aberman, Kfir},

date = {2023-03-15},

number = {arXiv:2208.12242},

eprint = {arXiv:2208.12242},

eprinttype = {arxiv},

url = {http://arxiv.org/abs/2208.12242},

urldate = {2023-03-26},

abstract = {Large text-to-image models achieved a remarkable leap in the evolution of AI, enabling high-quality and diverse synthesis of images from a given text prompt. However, these models lack the ability to mimic the appearance of subjects in a given reference set and synthesize novel renditions of them in different contexts. In this work, we present a new approach for "personalization" of text-to-image diffusion models. Given as input just a few images of a subject, we fine-tune a pretrained text-to-image model such that it learns to bind a unique identifier with that specific subject. Once the subject is embedded in the output domain of the model, the unique identifier can be used to synthesize novel photorealistic images of the subject contextualized in different scenes. By leveraging the semantic prior embedded in the model with a new autogenous class-specific prior preservation loss, our technique enables synthesizing the subject in diverse scenes, poses, views and lighting conditions that do not appear in the reference images. We apply our technique to several previously-unassailable tasks, including subject recontextualization, text-guided view synthesis, and artistic rendering, all while preserving the subject's key features. We also provide a new dataset and evaluation protocol for this new task of subject-driven generation. Project page: https://dreambooth.github.io/},

pubstate = {preprint}

}

@article{owidco2andothergreenhousegasemissions,

author = {Hannah Ritchie and Max Roser and Pablo Rosado},

title = {CO₂ and Greenhouse Gas Emissions},

journal = {Our World in Data},

year = {2020},

note = {https://ourworldindata.org/co2-and-other-greenhouse-gas-emissions}

}

@article{lacoste2019quantifying,

title={Quantifying the Carbon Emissions of Machine Learning},

author={Lacoste, Alexandre and Luccioni, Alexandra and Schmidt, Victor and Dandres, Thomas},

journal={arXiv preprint arXiv:1910.09700},

year={2019}

}

```