The BEiT model was proposed in BEiT: BERT Pre-Training of Image Transformers by Hangbo Bao, Li Dong and Furu Wei. Inspired by BERT, BEiT is the first paper that makes self-supervised pre-training of Vision Transformers (ViTs) outperform supervised pre-training. Rather than pre-training the model to predict the class of an image (as done in the original ViT paper), BEiT models are pre-trained to predict visual tokens from the codebook of OpenAI’s DALL-E model given masked patches.

The abstract from the paper is the following:

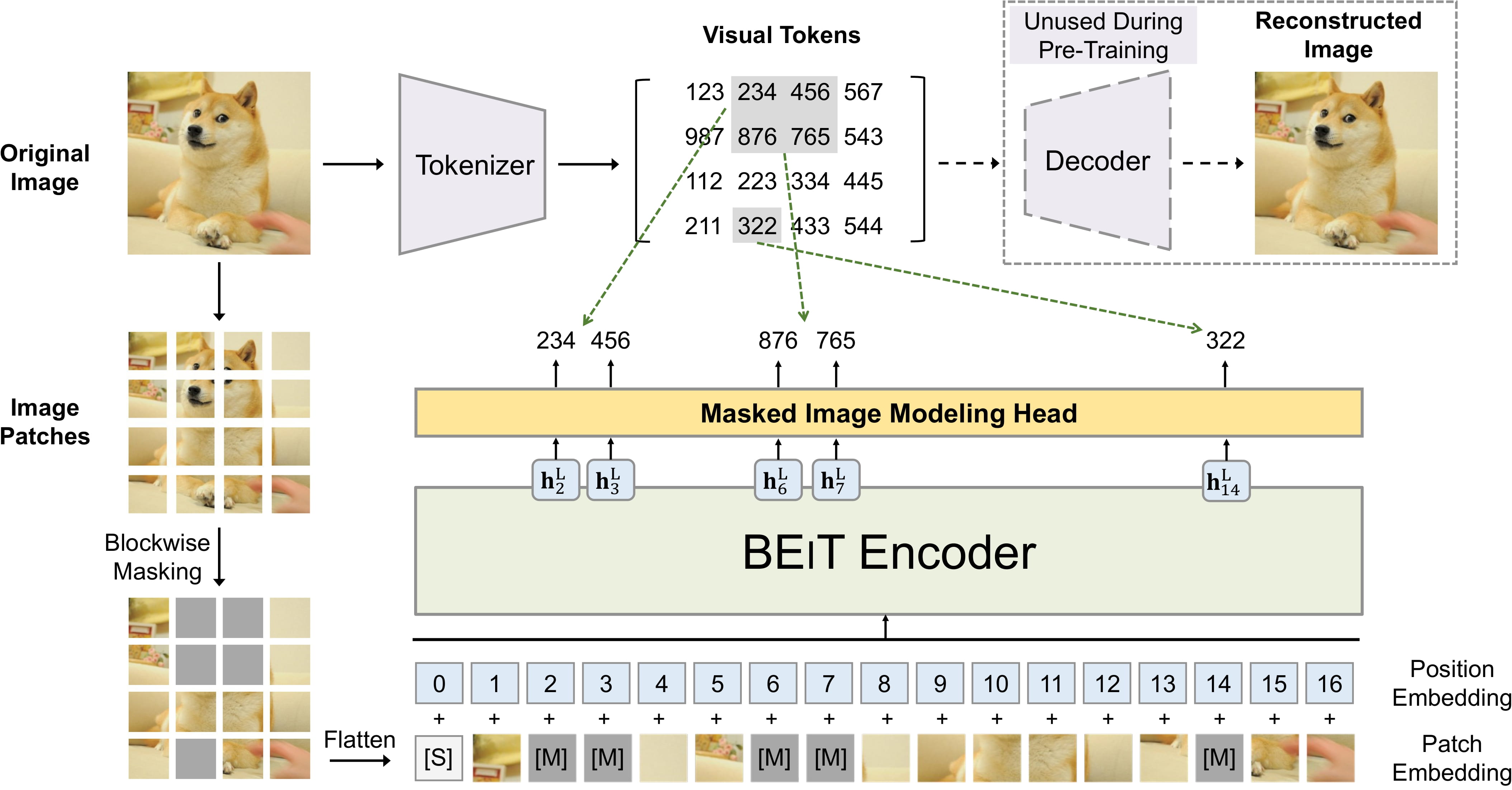

We introduce a self-supervised vision representation model BEiT, which stands for Bidirectional Encoder representation from Image Transformers. Following BERT developed in the natural language processing area, we propose a masked image modeling task to pretrain vision Transformers. Specifically, each image has two views in our pre-training, i.e, image patches (such as 16x16 pixels), and visual tokens (i.e., discrete tokens). We first “tokenize” the original image into visual tokens. Then we randomly mask some image patches and fed them into the backbone Transformer. The pre-training objective is to recover the original visual tokens based on the corrupted image patches. After pre-training BEiT, we directly fine-tune the model parameters on downstream tasks by appending task layers upon the pretrained encoder. Experimental results on image classification and semantic segmentation show that our model achieves competitive results with previous pre-training methods. For example, base-size BEiT achieves 83.2% top-1 accuracy on ImageNet-1K, significantly outperforming from-scratch DeiT training (81.8%) with the same setup. Moreover, large-size BEiT obtains 86.3% only using ImageNet-1K, even outperforming ViT-L with supervised pre-training on ImageNet-22K (85.2%).

Tips:

microsoft/beit-base-patch16-224 refers to a base-sized architecture with patch resolution of 16x16 and fine-tuning resolution of 224x224. All checkpoints can be found on the hub.

Set either the use_relative_position_bias or the use_shared_relative_position_bias attribute of BeitConfig to True in order to add position embeddings.

BEiT pre-training. Taken from the original paper.
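The patch and image resolutions in a checkpoint name determine how many tokens the encoder sees. The sketch below is illustrative only: the helper name `beit_sequence_length` is hypothetical, and it assumes square images, square patches, and a single [CLS] token prepended to the patch tokens.

```python
def beit_sequence_length(image_size: int = 224, patch_size: int = 16) -> int:
    """Return the number of encoder tokens: one per patch plus one [CLS] token."""
    if image_size % patch_size != 0:
        # Assumption for this sketch: the image is tiled exactly by the patches.
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size
    return patches_per_side**2 + 1


# 224 // 16 = 14 patches per side, 14 * 14 = 196 patches, plus [CLS] = 197 tokens
print(beit_sequence_length(224, 16))
```

Under these assumptions, a 224x224 image with 16x16 patches gives 196 patch tokens, or 197 tokens once the [CLS] token is included.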

This model was contributed by nielsr. The JAX/FLAX version of this model was contributed by kamalkraj. The original code can be found here.

( last_hidden_state: FloatTensor = None pooler_output: FloatTensor = None hidden_states: typing.Optional[typing.Tuple[torch.FloatTensor]] = None attentions: typing.Optional[typing.Tuple[torch.FloatTensor]] = None )

Parameters

last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

pooler_output (torch.FloatTensor of shape (batch_size, hidden_size)) — Average of the last layer hidden states of the patch tokens (excluding the [CLS] token) if config.use_mean_pooling is set to True. If set to False, then the final hidden state of the [CLS] token will be returned.

hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the initial embedding outputs.

attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

Class for outputs of BeitModel.
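The two pooling modes described for pooler_output can be sketched with NumPy. This is a simplified illustration, not the library's implementation: the function name `pool_last_hidden_state` is hypothetical, it assumes the [CLS] token sits at index 0, and it leaves out any normalization the real pooler may apply.

```python
import numpy as np


def pool_last_hidden_state(last_hidden_state, use_mean_pooling=True):
    """Pool a (batch_size, sequence_length, hidden_size) array to (batch_size, hidden_size)."""
    if use_mean_pooling:
        # Average the patch tokens, skipping the [CLS] token at index 0.
        return last_hidden_state[:, 1:, :].mean(axis=1)
    # Otherwise return the final hidden state of the [CLS] token.
    return last_hidden_state[:, 0, :]


hidden = np.arange(2 * 3 * 4, dtype=np.float64).reshape(2, 3, 4)
print(pool_last_hidden_state(hidden, use_mean_pooling=True).shape)
print(pool_last_hidden_state(hidden, use_mean_pooling=False).shape)
```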

( last_hidden_state: ndarray = None pooler_output: ndarray = None hidden_states: typing.Optional[typing.Tuple[jax._src.numpy.ndarray.ndarray]] = None attentions: typing.Optional[typing.Tuple[jax._src.numpy.ndarray.ndarray]] = None )

Parameters

last_hidden_state (jnp.ndarray of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

pooler_output (jnp.ndarray of shape (batch_size, hidden_size)) — Average of the last layer hidden states of the patch tokens (excluding the [CLS] token) if config.use_mean_pooling is set to True. If set to False, then the final hidden state of the [CLS] token will be returned.

hidden_states (tuple(jnp.ndarray), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of jnp.ndarray (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the initial embedding outputs.

attentions (tuple(jnp.ndarray), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of jnp.ndarray (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

Class for outputs of FlaxBeitModel.

( vocab_size = 8192 hidden_size = 768 num_hidden_layers = 12 num_attention_heads = 12 intermediate_size = 3072 hidden_act = 'gelu' hidden_dropout_prob = 0.0 attention_probs_dropout_prob = 0.0 initializer_range = 0.02 layer_norm_eps = 1e-12 is_encoder_decoder = False image_size = 224 patch_size = 16 num_channels = 3 use_mask_token = False use_absolute_position_embeddings = False use_relative_position_bias = False use_shared_relative_position_bias = False layer_scale_init_value = 0.1 drop_path_rate = 0.1 use_mean_pooling = True out_indices = [3, 5, 7, 11] pool_scales = [1, 2, 3, 6] use_auxiliary_head = True auxiliary_loss_weight = 0.4 auxiliary_channels = 256 auxiliary_num_convs = 1 auxiliary_concat_input = False semantic_loss_ignore_index = 255 **kwargs )

Parameters

vocab_size (int, optional, defaults to 8192) — Vocabulary size of the BEiT model. Defines the number of different image tokens that can be used during pre-training.

hidden_size (int, optional, defaults to 768) — Dimensionality of the encoder layers and the pooler layer.

num_hidden_layers (int, optional, defaults to 12) — Number of hidden layers in the Transformer encoder.

num_attention_heads (int, optional, defaults to 12) — Number of attention heads for each attention layer in the Transformer encoder.

intermediate_size (int, optional, defaults to 3072) — Dimensionality of the “intermediate” (i.e., feed-forward) layer in the Transformer encoder.

hidden_act (str or function, optional, defaults to "gelu") — The non-linear activation function (function or string) in the encoder and pooler. If string, "gelu", "relu", "selu" and "gelu_new" are supported.

hidden_dropout_prob (float, optional, defaults to 0.0) — The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.

attention_probs_dropout_prob (float, optional, defaults to 0.0) — The dropout ratio for the attention probabilities.

initializer_range (float, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

layer_norm_eps (float, optional, defaults to 1e-12) — The epsilon used by the layer normalization layers.

image_size (int, optional, defaults to 224) — The size (resolution) of each image.

patch_size (int, optional, defaults to 16) — The size (resolution) of each patch.

num_channels (int, optional, defaults to 3) — The number of input channels.

use_mask_token (bool, optional, defaults to False) — Whether to use a mask token for masked image modeling.

use_absolute_position_embeddings (bool, optional, defaults to False) — Whether to use BERT-style absolute position embeddings.

use_relative_position_bias (bool, optional, defaults to False) — Whether to use T5-style relative position embeddings in the self-attention layers.

use_shared_relative_position_bias (bool, optional, defaults to False) — Whether to use the same relative position embeddings across all self-attention layers of the Transformer.

layer_scale_init_value (float, optional, defaults to 0.1) — Scale to use in the self-attention layers. 0.1 for base, 1e-5 for large. Set 0 to disable layer scale.

drop_path_rate (float, optional, defaults to 0.1) — Stochastic depth rate per sample (when applied in the main path of residual layers).

use_mean_pooling (bool, optional, defaults to True) — Whether to mean pool the final hidden states of the patches instead of using the final hidden state of the CLS token, before applying the classification head.

out_indices (List[int], optional, defaults to [3, 5, 7, 11]) — Indices of the feature maps to use for semantic segmentation.

pool_scales (Tuple[int], optional, defaults to [1, 2, 3, 6]) — Pooling scales used in Pooling Pyramid Module applied on the last feature map.

use_auxiliary_head (bool, optional, defaults to True) — Whether to use an auxiliary head during training.

auxiliary_loss_weight (float, optional, defaults to 0.4) — Weight of the cross-entropy loss of the auxiliary head.

auxiliary_channels (int, optional, defaults to 256) — Number of channels to use in the auxiliary head.

auxiliary_num_convs (int, optional, defaults to 1) — Number of convolutional layers to use in the auxiliary head.

auxiliary_concat_input (bool, optional, defaults to False) — Whether to concatenate the output of the auxiliary head with the input before the classification layer.

semantic_loss_ignore_index (int, optional, defaults to 255) — The index that is ignored by the loss function of the semantic segmentation model.

This is the configuration class to store the configuration of a BeitModel. It is used to instantiate a BEiT model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the BEiT microsoft/beit-base-patch16-224-pt22k architecture.

Example:

>>> from transformers import BeitConfig, BeitModel

>>> # Initializing a BEiT beit-base-patch16-224-pt22k style configuration
>>> configuration = BeitConfig()

>>> # Initializing a model (with random weights) from the beit-base-patch16-224-pt22k style configuration
>>> model = BeitModel(configuration)

>>> # Accessing the model configuration
>>> configuration = model.config

( do_resize = True size = 256 resample = <Resampling.BICUBIC: 3> do_center_crop = True crop_size = 224 do_normalize = True image_mean = None image_std = None reduce_labels = False **kwargs )

Parameters

do_resize (bool, optional, defaults to True) — Whether to resize the input to a certain size.

size (int or Tuple(int), optional, defaults to 256) — Resize the input to the given size. If a tuple is provided, it should be (width, height). If only an integer is provided, then the input will be resized to (size, size). Only has an effect if do_resize is set to True.

resample (int, optional, defaults to PIL.Image.Resampling.BICUBIC) — An optional resampling filter. This can be one of PIL.Image.Resampling.NEAREST, PIL.Image.Resampling.BOX, PIL.Image.Resampling.BILINEAR, PIL.Image.Resampling.HAMMING, PIL.Image.Resampling.BICUBIC or PIL.Image.Resampling.LANCZOS. Only has an effect if do_resize is set to True.

do_center_crop (bool, optional, defaults to True) — Whether to crop the input at the center. If the input size is smaller than crop_size along any edge, the image is padded with 0’s and then center cropped.

crop_size (int, optional, defaults to 224) — Desired output size when applying center-cropping. Only has an effect if do_center_crop is set to True.

do_normalize (bool, optional, defaults to True) — Whether or not to normalize the input with image_mean and image_std.

image_mean (List[int], defaults to [0.5, 0.5, 0.5]) — The sequence of means for each channel, to be used when normalizing images.

image_std (List[int], defaults to [0.5, 0.5, 0.5]) — The sequence of standard deviations for each channel, to be used when normalizing images.

reduce_labels (bool, optional, defaults to False) — Whether or not to reduce all label values of segmentation maps by 1. Usually used for datasets where 0 is used for background, and background itself is not included in all classes of a dataset (e.g. ADE20k). The background label will be replaced by 255.
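The effect of reduce_labels on a segmentation map can be illustrated with a small NumPy sketch. The function below is hypothetical and only mirrors the description above: background (0) becomes 255 and every other label is shifted down by 1. Keeping an existing 255 unchanged is an assumption of this sketch.

```python
import numpy as np


def reduce_labels(segmentation_map, ignore_index=255):
    """Shift class ids down by 1 and map the background label (0) to ignore_index."""
    label = np.asarray(segmentation_map).astype(np.int64)
    reduced = label - 1
    # Background pixels are replaced by the ignore index.
    reduced[label == 0] = ignore_index
    # Assumption: pixels already marked as ignored stay ignored.
    reduced[label == ignore_index] = ignore_index
    return reduced


print(reduce_labels([[0, 1], [2, 255]]))
```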

Constructs a BEiT feature extractor.

This feature extractor inherits from FeatureExtractionMixin which contains most of the main methods. Users should refer to this superclass for more information regarding those methods.
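As a rough illustration of the normalization step, the sketch below applies per-channel mean and standard deviation to an image in (C, H, W) layout. It is not the feature extractor's code: the function name `normalize` is hypothetical, and it assumes pixel values have already been scaled to the range [0, 1].

```python
import numpy as np


def normalize(image, mean=(0.5, 0.5, 0.5), std=(0.5, 0.5, 0.5)):
    """Normalize a (C, H, W) float image channel by channel: (x - mean) / std."""
    image = np.asarray(image, dtype=np.float32)
    # Reshape to (C, 1, 1) so each channel gets its own mean and std.
    mean = np.asarray(mean, dtype=np.float32).reshape(-1, 1, 1)
    std = np.asarray(std, dtype=np.float32).reshape(-1, 1, 1)
    return (image - mean) / std


black = np.zeros((3, 2, 2), dtype=np.float32)
white = np.ones((3, 2, 2), dtype=np.float32)
print(normalize(black).min(), normalize(white).max())
```

With the default mean and standard deviation of 0.5, values in [0, 1] are mapped to [-1, 1].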

( images: typing.Union[ForwardRef('PIL.Image.Image'), numpy.ndarray, ForwardRef('torch.Tensor'), typing.List[ForwardRef('PIL.Image.Image')], typing.List[numpy.ndarray], typing.List[ForwardRef('torch.Tensor')]] segmentation_maps: typing.Union[ForwardRef('PIL.Image.Image'), numpy.ndarray, ForwardRef('torch.Tensor'), typing.List[ForwardRef('PIL.Image.Image')], typing.List[numpy.ndarray], typing.List[ForwardRef('torch.Tensor')]] = None return_tensors: typing.Union[str, transformers.utils.generic.TensorType, NoneType] = None **kwargs ) → BatchFeature

Parameters

images (PIL.Image.Image, np.ndarray, torch.Tensor, List[PIL.Image.Image], List[np.ndarray], List[torch.Tensor]) — The image or batch of images to be prepared. Each image can be a PIL image, NumPy array or PyTorch tensor. In case of a NumPy array/PyTorch tensor, each image should be of shape (C, H, W), where C is the number of channels and H and W are the image height and width.

segmentation_maps (PIL.Image.Image, np.ndarray, torch.Tensor, List[PIL.Image.Image], List[np.ndarray], List[torch.Tensor], optional) — Optionally, the corresponding semantic segmentation maps with the pixel-wise annotations.

return_tensors (str or TensorType, optional, defaults to 'np') — If set, will return tensors of a particular framework. Acceptable values are:

'tf': Return TensorFlow tf.constant objects.

'pt': Return PyTorch torch.Tensor objects.

'np': Return NumPy np.ndarray objects.

'jax': Return JAX jnp.ndarray objects.

Returns

A BatchFeature with the following fields:

pixel_values — Pixel values to be fed to a model, of shape (batch_size, num_channels, height, width).

labels — Optional labels to be fed to a model (when segmentation_maps are provided).

Main method to prepare for the model one or several image(s).

NumPy arrays and PyTorch tensors are converted to PIL images when resizing, so it is most efficient to pass PIL images.

( outputs target_sizes: typing.List[typing.Tuple] = None ) → semantic_segmentation

Parameters

target_sizes (List[Tuple] of length batch_size, optional) — List of tuples corresponding to the requested final size (height, width) of each prediction. If left to None, predictions will not be resized.

Returns

semantic_segmentation

List[torch.Tensor] of length batch_size, where each item is a semantic segmentation map of shape (height, width) corresponding to the target_sizes entry (if target_sizes is specified). Each entry of each torch.Tensor corresponds to a semantic class id.

Converts the output of BeitForSemanticSegmentation into semantic segmentation maps. Only supports PyTorch.
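The core of turning per-pixel class scores into a segmentation map is an argmax over the class dimension. The NumPy sketch below shows only that step, with a hypothetical function name; it does not reproduce the library method, and it omits the optional resizing to target_sizes.

```python
import numpy as np


def logits_to_segmentation_maps(logits):
    """Convert (batch_size, num_labels, height, width) scores to a list of (height, width) class-id maps."""
    logits = np.asarray(logits)
    # For every pixel, pick the class with the highest score.
    return [np.argmax(sample, axis=0) for sample in logits]


# One image, three classes, 2x2 pixels.
logits = np.zeros((1, 3, 2, 2))
logits[0, 2, 0, 0] = 5.0  # class 2 wins at pixel (0, 0)
logits[0, 1, 1, 1] = 3.0  # class 1 wins at pixel (1, 1)
print(logits_to_segmentation_maps(logits)[0])
```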

( config: BeitConfig add_pooling_layer: bool = True )

Parameters

The bare Beit Model transformer outputting raw hidden-states without any specific head on top. This model is a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matter related to general usage and behavior.

(

pixel_values: typing.Optional[torch.Tensor] = None

bool_masked_pos: typing.Optional[torch.BoolTensor] = None

head_mask: typing.Optional[torch.Tensor] = None

output_attentions: typing.Optional[bool] = None

output_hidden_states: typing.Optional[bool] = None

return_dict: typing.Optional[bool] = None

)

→

transformers.models.beit.modeling_beit.BeitModelOutputWithPooling or tuple(torch.FloatTensor)

Parameters

pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using BeitFeatureExtractor. See BeitFeatureExtractor.__call__() for details.

head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]: 1 indicates the head is not masked, 0 indicates the head is masked.

output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.models.beit.modeling_beit.BeitModelOutputWithPooling or tuple(torch.FloatTensor)

A transformers.models.beit.modeling_beit.BeitModelOutputWithPooling or a tuple of

torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various

elements depending on the configuration (BeitConfig) and inputs.

last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

pooler_output (torch.FloatTensor of shape (batch_size, hidden_size)) — Average of the last layer hidden states of the patch tokens (excluding the [CLS] token) if

config.use_mean_pooling is set to True. If set to False, then the final hidden state of the [CLS] token

will be returned.

hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of

shape (batch_size, sequence_length, hidden_size).

Hidden-states of the model at the output of each layer plus the initial embedding outputs.

attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The BeitModel forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module

instance afterwards instead of this since the former takes care of running the pre and post processing steps while

the latter silently ignores them.
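The head_mask argument nullifies selected attention heads. A minimal NumPy sketch of the idea follows, assuming a mask value of 1 keeps a head and 0 nullifies it, and that the mask is multiplied into the attention probabilities. The function name `apply_head_mask` is hypothetical and the code is not taken from the library.

```python
import numpy as np


def apply_head_mask(attention_probs, head_mask):
    """Scale (batch_size, num_heads, seq_len, seq_len) attention probabilities by a (num_heads,) mask."""
    head_mask = np.asarray(head_mask, dtype=attention_probs.dtype)
    # Broadcast the per-head mask over the batch and both sequence dimensions.
    return attention_probs * head_mask[None, :, None, None]


probs = np.full((1, 2, 3, 3), 1.0 / 3.0)
masked = apply_head_mask(probs, [1.0, 0.0])
print(masked[0, 0].sum(), masked[0, 1].sum())
```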

Example:

>>> from transformers import BeitFeatureExtractor, BeitModel
>>> import torch
>>> from datasets import load_dataset

>>> dataset = load_dataset("huggingface/cats-image")
>>> image = dataset["test"]["image"][0]

>>> feature_extractor = BeitFeatureExtractor.from_pretrained("microsoft/beit-base-patch16-224-pt22k")
>>> model = BeitModel.from_pretrained("microsoft/beit-base-patch16-224-pt22k")

>>> inputs = feature_extractor(image, return_tensors="pt")

>>> with torch.no_grad():
...     outputs = model(**inputs)

>>> last_hidden_states = outputs.last_hidden_state
>>> list(last_hidden_states.shape)
[1, 197, 768]

( config: BeitConfig )

Parameters

Beit Model transformer with a ‘language’ modeling head on top. BEiT does masked image modeling by predicting visual tokens of a Vector-Quantize Variational Autoencoder (VQ-VAE), whereas other vision models like ViT and DeiT predict RGB pixel values. As a result, this class is incompatible with AutoModelForMaskedImageModeling, so you will need to use BeitForMaskedImageModeling directly if you wish to do masked image modeling with BEiT. This model is a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matter related to general usage and behavior.

(

pixel_values: typing.Optional[torch.Tensor] = None

bool_masked_pos: typing.Optional[torch.BoolTensor] = None

head_mask: typing.Optional[torch.Tensor] = None

labels: typing.Optional[torch.Tensor] = None

output_attentions: typing.Optional[bool] = None

output_hidden_states: typing.Optional[bool] = None

return_dict: typing.Optional[bool] = None

)

→

transformers.modeling_outputs.MaskedLMOutput or tuple(torch.FloatTensor)

Parameters

pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using BeitFeatureExtractor. See BeitFeatureExtractor.__call__() for details.

head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]: 1 indicates the head is not masked, 0 indicates the head is masked.

output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

bool_masked_pos (torch.BoolTensor of shape (batch_size, num_patches)) — Boolean masked positions. Indicates which patches are masked (1) and which aren’t (0).

labels (torch.LongTensor of shape (batch_size,), optional) — Labels for computing the image classification/regression loss. Indices should be in [0, ..., config.num_labels - 1]. If config.num_labels == 1 a regression loss is computed (Mean-Square loss); if config.num_labels > 1 a classification loss is computed (Cross-Entropy).

Returns

transformers.modeling_outputs.MaskedLMOutput or tuple(torch.FloatTensor)

A transformers.modeling_outputs.MaskedLMOutput or a tuple of

torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various

elements depending on the configuration (BeitConfig) and inputs.

loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Masked language modeling (MLM) loss.

logits (torch.FloatTensor of shape (batch_size, sequence_length, config.vocab_size)) — Prediction scores of the language modeling head (scores for each vocabulary token before SoftMax).

hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, +

one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The BeitForMaskedImageModeling forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module

instance afterwards instead of this since the former takes care of running the pre and post processing steps while

the latter silently ignores them.
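To make the role of bool_masked_pos concrete, the NumPy sketch below replaces the embeddings of masked patches with a single mask-token vector. It is a simplified illustration under stated assumptions: the function name `apply_mask_token` and the blend formula are choices made for this sketch, and the [CLS] token and position information are left out.

```python
import numpy as np


def apply_mask_token(patch_embeddings, bool_masked_pos, mask_token):
    """Swap masked patch embeddings for the mask token.

    patch_embeddings: (batch_size, num_patches, hidden_size)
    bool_masked_pos:  (batch_size, num_patches), True where a patch is masked
    mask_token:       (hidden_size,)
    """
    # 1.0 where the patch is masked, 0.0 elsewhere; trailing axis added for broadcasting.
    w = np.asarray(bool_masked_pos)[..., None].astype(patch_embeddings.dtype)
    return patch_embeddings * (1.0 - w) + np.asarray(mask_token) * w


embeddings = np.ones((1, 3, 2))
mask = np.array([[True, False, True]])
print(apply_mask_token(embeddings, mask, mask_token=np.array([9.0, 9.0])))
```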

Examples:

>>> from transformers import BeitFeatureExtractor, BeitForMaskedImageModeling
>>> import torch
>>> from PIL import Image
>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"
>>> image = Image.open(requests.get(url, stream=True).raw)

>>> feature_extractor = BeitFeatureExtractor.from_pretrained("microsoft/beit-base-patch16-224-pt22k")
>>> model = BeitForMaskedImageModeling.from_pretrained("microsoft/beit-base-patch16-224-pt22k")

>>> num_patches = (model.config.image_size // model.config.patch_size) ** 2
>>> pixel_values = feature_extractor(images=image, return_tensors="pt").pixel_values
>>> # create random boolean mask of shape (batch_size, num_patches)
>>> bool_masked_pos = torch.randint(low=0, high=2, size=(1, num_patches)).bool()

>>> outputs = model(pixel_values, bool_masked_pos=bool_masked_pos)
>>> loss, logits = outputs.loss, outputs.logits
>>> list(logits.shape)
[1, 196, 8192]

( config: BeitConfig )

Parameters

Beit Model transformer with an image classification head on top (a linear layer on top of the average of the final hidden states of the patch tokens) e.g. for ImageNet.

This model is a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matter related to general usage and behavior.

(

pixel_values: typing.Optional[torch.Tensor] = None

head_mask: typing.Optional[torch.Tensor] = None

labels: typing.Optional[torch.Tensor] = None

output_attentions: typing.Optional[bool] = None

output_hidden_states: typing.Optional[bool] = None

return_dict: typing.Optional[bool] = None

)

→

transformers.modeling_outputs.ImageClassifierOutput or tuple(torch.FloatTensor)

Parameters

pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using BeitFeatureExtractor. See BeitFeatureExtractor.__call__() for details.

head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]: 1 indicates the head is not masked, 0 indicates the head is masked.

output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

labels (torch.LongTensor of shape (batch_size,), optional) — Labels for computing the image classification/regression loss. Indices should be in [0, ..., config.num_labels - 1]. If config.num_labels == 1 a regression loss is computed (Mean-Square loss); if config.num_labels > 1 a classification loss is computed (Cross-Entropy).

Returns

transformers.modeling_outputs.ImageClassifierOutput or tuple(torch.FloatTensor)

A transformers.modeling_outputs.ImageClassifierOutput or a tuple of

torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various

elements depending on the configuration (BeitConfig) and inputs.

loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Classification (or regression if config.num_labels==1) loss.

logits (torch.FloatTensor of shape (batch_size, config.num_labels)) — Classification (or regression if config.num_labels==1) scores (before SoftMax).

hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, +

one for the output of each stage) of shape (batch_size, sequence_length, hidden_size). Hidden-states

(also called feature maps) of the model at the output of each stage.

attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The BeitForImageClassification forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module

instance afterwards instead of this since the former takes care of running the pre and post processing steps while

the latter silently ignores them.
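The labels description distinguishes a regression loss (config.num_labels == 1) from a classification loss (config.num_labels > 1). The NumPy sketch below shows that branching with a hypothetical function; it is a plain mean-squared-error and cross-entropy computation, not the library's loss code.

```python
import numpy as np


def classification_loss(logits, labels, num_labels):
    """Mean-squared error when num_labels == 1, otherwise mean cross-entropy."""
    logits = np.asarray(logits, dtype=np.float64)
    if num_labels == 1:
        targets = np.asarray(labels, dtype=np.float64)
        return float(np.mean((logits.squeeze(-1) - targets) ** 2))
    labels = np.asarray(labels, dtype=np.int64)
    # Numerically stable log-softmax over the class dimension.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))
    # Negative log-likelihood of the correct class, averaged over the batch.
    return float(-log_probs[np.arange(len(labels)), labels].mean())


print(classification_loss(np.zeros((2, 3)), [0, 2], num_labels=3))
print(classification_loss([[1.0], [3.0]], [0.0, 1.0], num_labels=1))
```

With all-zero logits over three classes, every class has probability 1/3, so the cross-entropy equals ln(3).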

Example:

>>> from transformers import BeitFeatureExtractor, BeitForImageClassification
>>> import torch
>>> from datasets import load_dataset

>>> dataset = load_dataset("huggingface/cats-image")
>>> image = dataset["test"]["image"][0]

>>> feature_extractor = BeitFeatureExtractor.from_pretrained("microsoft/beit-base-patch16-224")
>>> model = BeitForImageClassification.from_pretrained("microsoft/beit-base-patch16-224")

>>> inputs = feature_extractor(image, return_tensors="pt")

>>> with torch.no_grad():
...     logits = model(**inputs).logits

>>> # model predicts one of the 1000 ImageNet classes
>>> predicted_label = logits.argmax(-1).item()
>>> print(model.config.id2label[predicted_label])
tabby, tabby cat

( config: BeitConfig )

Parameters

Beit Model transformer with a semantic segmentation head on top e.g. for ADE20k, CityScapes.

This model is a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matter related to general usage and behavior.

(

pixel_values: typing.Optional[torch.Tensor] = None

head_mask: typing.Optional[torch.Tensor] = None

labels: typing.Optional[torch.Tensor] = None

output_attentions: typing.Optional[bool] = None

output_hidden_states: typing.Optional[bool] = None

return_dict: typing.Optional[bool] = None

)

→

transformers.modeling_outputs.SemanticSegmenterOutput or tuple(torch.FloatTensor)

Parameters

pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using BeitFeatureExtractor. See BeitFeatureExtractor.__call__() for details.

head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]: 1 indicates the head is not masked, 0 indicates the head is masked.

output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

labels (torch.LongTensor of shape (batch_size, height, width), optional) — Ground truth semantic segmentation maps for computing the loss. Indices should be in [0, ..., config.num_labels - 1]. If config.num_labels > 1, a classification loss is computed (Cross-Entropy).

Returns

transformers.modeling_outputs.SemanticSegmenterOutput or tuple(torch.FloatTensor)

A transformers.modeling_outputs.SemanticSegmenterOutput or a tuple of

torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various

elements depending on the configuration (BeitConfig) and inputs.

loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Classification (or regression if config.num_labels==1) loss.

logits (torch.FloatTensor of shape (batch_size, config.num_labels, logits_height, logits_width)) — Classification scores for each pixel.

The logits returned do not necessarily have the same size as the pixel_values passed as inputs. This is to avoid doing two interpolations and losing some quality when a user needs to resize the logits to the original image size as post-processing. You should always check your logits shape and resize as needed.

hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The BeitForSemanticSegmentation forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module

instance afterwards instead of this since the former takes care of running the pre and post processing steps while

the latter silently ignores them.
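Because the returned logits may be smaller than the input image, they usually have to be resized before use. The NumPy sketch below uses nearest-neighbour index lookup as a simple stand-in for whatever interpolation a real pipeline would apply (bilinear interpolation is a common choice). The function name `resize_nearest` is hypothetical.

```python
import numpy as np


def resize_nearest(logits, target_size):
    """Resize (num_labels, height, width) logits to target_size = (height, width) by nearest neighbour."""
    _, height, width = logits.shape
    target_height, target_width = target_size
    # Map every output row/column back to its source row/column.
    rows = np.arange(target_height) * height // target_height
    cols = np.arange(target_width) * width // target_width
    return logits[:, rows[:, None], cols[None, :]]


small = np.arange(4, dtype=np.float32).reshape(1, 2, 2)
print(resize_nearest(small, (4, 4))[0])
```

Taking the argmax over the class dimension after resizing then yields a class-id map at the target resolution.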

Examples:

>>> from transformers import AutoFeatureExtractor, BeitForSemanticSegmentation

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> feature_extractor = AutoFeatureExtractor.from_pretrained("microsoft/beit-base-finetuned-ade-640-640")

>>> model = BeitForSemanticSegmentation.from_pretrained("microsoft/beit-base-finetuned-ade-640-640")

>>> inputs = feature_extractor(images=image, return_tensors="pt")

>>> outputs = model(**inputs)

>>> # logits are of shape (batch_size, num_labels, height, width)

>>> logits = outputs.logits

class transformers.FlaxBeitModel ( config: BeitConfig input_shape = None seed: int = 0 dtype: dtype = <class 'jax.numpy.float32'> _do_init: bool = True **kwargs )

Parameters

dtype (jax.numpy.dtype, optional, defaults to jax.numpy.float32) — The data type of the computation. Can be one of jax.numpy.float32, jax.numpy.float16 (on GPUs) and jax.numpy.bfloat16 (on TPUs).

This can be used to enable mixed-precision training or half-precision inference on GPUs or TPUs. If specified, all the computation will be performed with the given dtype.

Note that this only specifies the dtype of the computation and does not influence the dtype of model parameters.

If you wish to change the dtype of the model parameters, see to_fp16() and to_bf16().
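The distinction between the computation dtype and the parameter dtype can be illustrated with a toy layer. This is only a sketch in plain JAX: the dense function and the params dictionary are hypothetical stand-ins, not the library's implementation, and the final tree_map merely mimics the kind of cast that to_bf16() is described as performing.

```python
import jax
import jax.numpy as jnp

# Toy parameters stored in float32 (a hypothetical stand-in for model.params).
params = {"kernel": jnp.ones((4, 2), dtype=jnp.float32)}


def dense(params, x, dtype=jnp.float32):
    # Cast inputs and weights to the computation dtype for the matmul only;
    # the stored parameters are left untouched.
    return jnp.dot(x.astype(dtype), params["kernel"].astype(dtype))


x = jnp.ones((1, 4), dtype=jnp.float32)
out = dense(params, x, dtype=jnp.bfloat16)
print(out.dtype, params["kernel"].dtype)

# Changing the dtype of the parameters themselves is a separate, explicit step.
bf16_params = jax.tree_util.tree_map(lambda p: p.astype(jnp.bfloat16), params)
print(bf16_params["kernel"].dtype)
```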

The bare Beit Model transformer outputting raw hidden-states without any specific head on top.

This model inherits from FlaxPreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading, saving and converting weights from PyTorch models).

This model is also a Flax Linen flax.linen.Module subclass. Use it as a regular Flax linen Module and refer to the Flax documentation for all matters related to general usage and behavior.

Finally, this model supports inherent JAX features such as Just-In-Time (JIT) compilation, automatic differentiation, vectorization and parallelization.
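Three such JAX transformations (jax.jit, jax.grad and jax.vmap) are sketched below on a toy function. The function loss_fn and its inputs are hypothetical and unrelated to the BEiT weights; the point is only to show the kind of transformation a Flax model's forward pass can be wrapped in.

```python
import jax
import jax.numpy as jnp


def loss_fn(w, x):
    # Toy scalar function of a weight vector `w` and inputs `x`.
    return jnp.sum((x @ w) ** 2)


w = jnp.ones((3,))
x = jnp.arange(6.0).reshape(2, 3)  # two examples with three features each

# Just-in-time compilation of the whole function.
fast_loss = jax.jit(loss_fn)
total = fast_loss(w, x)

# Automatic differentiation with respect to the first argument.
grad_w = jax.grad(loss_fn)(w, x)

# Vectorization: map over the rows of `x` while sharing `w`.
per_example = jax.vmap(loss_fn, in_axes=(None, 0))(w, x)

print(total, grad_w, per_example)
```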

__call__ ( pixel_values bool_masked_pos = None params: dict = None dropout_rng: PRNGKey = None train: bool = False output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → transformers.models.beit.modeling_flax_beit.FlaxBeitModelOutputWithPooling or tuple(jnp.ndarray)

Returns

transformers.models.beit.modeling_flax_beit.FlaxBeitModelOutputWithPooling or tuple(jnp.ndarray)

A transformers.models.beit.modeling_flax_beit.FlaxBeitModelOutputWithPooling or a tuple of jnp.ndarray (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (BeitConfig) and inputs.

last_hidden_state (jnp.ndarray of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

pooler_output (jnp.ndarray of shape (batch_size, hidden_size)) — Average of the last layer hidden states of the patch tokens (excluding the [CLS] token) if config.use_mean_pooling is set to True. If set to False, then the final hidden state of the [CLS] token will be returned.

hidden_states (tuple(jnp.ndarray), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of jnp.ndarray (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

Hidden-states of the model at the output of each layer plus the initial embedding outputs.

attentions (tuple(jnp.ndarray), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of jnp.ndarray (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The FlaxBeitPreTrainedModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.
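The pooled output described above (mean over the patch tokens versus the [CLS] token) can be sketched with NumPy. This is a simplified illustration under stated assumptions: the helper pool is hypothetical, the [CLS] token is assumed to sit at position 0, the tensor sizes are made up, and any normalisation the model may apply to the pooled vector is omitted.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for last_hidden_state: (batch_size, sequence_length, hidden_size),
# assuming 1 [CLS] token followed by 196 patch tokens.
last_hidden_state = rng.standard_normal((2, 197, 768)).astype(np.float32)


def pool(hidden, use_mean_pooling=True):
    if use_mean_pooling:
        # Average over the patch tokens, skipping the [CLS] token at index 0.
        return hidden[:, 1:, :].mean(axis=1)
    # Otherwise return the final hidden state of the [CLS] token.
    return hidden[:, 0, :]


mean_pooled = pool(last_hidden_state, use_mean_pooling=True)
cls_pooled = pool(last_hidden_state, use_mean_pooling=False)
print(mean_pooled.shape, cls_pooled.shape)
```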

Examples:

>>> from transformers import BeitFeatureExtractor, FlaxBeitModel

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> feature_extractor = BeitFeatureExtractor.from_pretrained("microsoft/beit-base-patch16-224-pt22k-ft22k")

>>> model = FlaxBeitModel.from_pretrained("microsoft/beit-base-patch16-224-pt22k-ft22k")

>>> inputs = feature_extractor(images=image, return_tensors="np")

>>> outputs = model(**inputs)

>>> last_hidden_states = outputs.last_hidden_state

class transformers.FlaxBeitForMaskedImageModeling ( config: BeitConfig input_shape = None seed: int = 0 dtype: dtype = <class 'jax.numpy.float32'> _do_init: bool = True **kwargs )

Parameters

dtype (jax.numpy.dtype, optional, defaults to jax.numpy.float32) — The data type of the computation. Can be one of jax.numpy.float32, jax.numpy.float16 (on GPUs) and jax.numpy.bfloat16 (on TPUs).

This can be used to enable mixed-precision training or half-precision inference on GPUs or TPUs. If specified, all the computation will be performed with the given dtype.

Note that this only specifies the dtype of the computation and does not influence the dtype of model parameters.

If you wish to change the dtype of the model parameters, see to_fp16() and to_bf16().

Beit Model transformer with a ‘language’ modeling head on top (to predict visual tokens).

This model inherits from FlaxPreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading, saving and converting weights from PyTorch models).

This model is also a Flax Linen flax.linen.Module subclass. Use it as a regular Flax linen Module and refer to the Flax documentation for all matters related to general usage and behavior.

Finally, this model supports inherent JAX features such as Just-In-Time (JIT) compilation, automatic differentiation, vectorization and parallelization.

__call__ ( pixel_values bool_masked_pos = None params: dict = None dropout_rng: PRNGKey = None train: bool = False output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → transformers.modeling_flax_outputs.FlaxMaskedLMOutput or tuple(jnp.ndarray)

Returns

transformers.modeling_flax_outputs.FlaxMaskedLMOutput or tuple(jnp.ndarray)

A transformers.modeling_flax_outputs.FlaxMaskedLMOutput or a tuple of jnp.ndarray (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (BeitConfig) and inputs.

logits (jnp.ndarray of shape (batch_size, sequence_length, config.vocab_size)) — Prediction scores of the language modeling head (scores for each vocabulary token before SoftMax).

hidden_states (tuple(jnp.ndarray), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of jnp.ndarray (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

Hidden-states of the model at the output of each layer plus the initial embedding outputs.

attentions (tuple(jnp.ndarray), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of jnp.ndarray (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The FlaxBeitPreTrainedModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

bool_masked_pos (numpy.ndarray of shape (batch_size, num_patches)):

Boolean masked positions. Indicates which patches are masked (1) and which aren’t (0).
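A mask of this shape can be built with NumPy. The sketch below assumes a 224x224 input split into 16x16 patches and masks an arbitrary number of positions uniformly at random; both the masking count and the uniform sampling are assumptions made for illustration, not the masking strategy used to pre-train the checkpoints.

```python
import numpy as np

image_size, patch_size = 224, 16
num_patches = (image_size // patch_size) ** 2  # 14 * 14 = 196

num_masked = 75  # arbitrary choice for this illustration
rng = np.random.default_rng(0)

# One row per image in the batch; True marks a masked patch.
bool_masked_pos = np.zeros((1, num_patches), dtype=bool)
masked_idx = rng.choice(num_patches, size=num_masked, replace=False)
bool_masked_pos[0, masked_idx] = True

print(bool_masked_pos.shape, int(bool_masked_pos.sum()))
```

The resulting array can then be passed through the bool_masked_pos argument of the forward call alongside pixel_values.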

Examples:

>>> from transformers import BeitFeatureExtractor, FlaxBeitForMaskedImageModeling

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> feature_extractor = BeitFeatureExtractor.from_pretrained("microsoft/beit-base-patch16-224-pt22k")

>>> model = FlaxBeitForMaskedImageModeling.from_pretrained("microsoft/beit-base-patch16-224-pt22k")

>>> inputs = feature_extractor(images=image, return_tensors="np")

>>> outputs = model(**inputs)

>>> logits = outputs.logits

class transformers.FlaxBeitForImageClassification ( config: BeitConfig input_shape = None seed: int = 0 dtype: dtype = <class 'jax.numpy.float32'> _do_init: bool = True **kwargs )

Parameters

dtype (jax.numpy.dtype, optional, defaults to jax.numpy.float32) — The data type of the computation. Can be one of jax.numpy.float32, jax.numpy.float16 (on GPUs) and jax.numpy.bfloat16 (on TPUs).

This can be used to enable mixed-precision training or half-precision inference on GPUs or TPUs. If specified, all the computation will be performed with the given dtype.

Note that this only specifies the dtype of the computation and does not influence the dtype of model parameters.

If you wish to change the dtype of the model parameters, see to_fp16() and to_bf16().

Beit Model transformer with an image classification head on top (a linear layer on top of the average of the final hidden states of the patch tokens) e.g. for ImageNet.

This model inherits from FlaxPreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading, saving and converting weights from PyTorch models).

This model is also a Flax Linen flax.linen.Module subclass. Use it as a regular Flax linen Module and refer to the Flax documentation for all matters related to general usage and behavior.

Finally, this model supports inherent JAX features such as Just-In-Time (JIT) compilation, automatic differentiation, vectorization and parallelization.

__call__ ( pixel_values bool_masked_pos = None params: dict = None dropout_rng: PRNGKey = None train: bool = False output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → transformers.modeling_flax_outputs.FlaxSequenceClassifierOutput or tuple(jnp.ndarray)

Returns

transformers.modeling_flax_outputs.FlaxSequenceClassifierOutput or tuple(jnp.ndarray)

A transformers.modeling_flax_outputs.FlaxSequenceClassifierOutput or a tuple of jnp.ndarray (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (BeitConfig) and inputs.

logits (jnp.ndarray of shape (batch_size, config.num_labels)) — Classification (or regression if config.num_labels==1) scores (before SoftMax).

hidden_states (tuple(jnp.ndarray), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of jnp.ndarray (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

Hidden-states of the model at the output of each layer plus the initial embedding outputs.

attentions (tuple(jnp.ndarray), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of jnp.ndarray (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The FlaxBeitPreTrainedModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Example:

>>> from transformers import BeitFeatureExtractor, FlaxBeitForImageClassification

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> feature_extractor = BeitFeatureExtractor.from_pretrained("microsoft/beit-base-patch16-224")

>>> model = FlaxBeitForImageClassification.from_pretrained("microsoft/beit-base-patch16-224")

>>> inputs = feature_extractor(images=image, return_tensors="np")

>>> outputs = model(**inputs)

>>> logits = outputs.logits

>>> # model predicts one of the 1000 ImageNet classes

>>> predicted_class_idx = logits.argmax(-1).item()

>>> print("Predicted class:", model.config.id2label[predicted_class_idx])
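Because the classification logits are scores before SoftMax, turning them into probabilities or a top-k ranking takes one extra step. The sketch below uses NumPy on a small made-up logits array with four classes; the softmax helper and the values are hypothetical and stand in for outputs.logits.

```python
import numpy as np

# Stand-in for `outputs.logits` of shape (batch_size, num_labels).
logits = np.array([[2.0, 0.5, -1.0, 3.0]], dtype=np.float32)


def softmax(x, axis=-1):
    # Subtract the row maximum first for numerical stability.
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)


probs = softmax(logits)

# Indices of the two highest-scoring classes, best first.
top_k = 2
top_idx = np.argsort(-probs, axis=-1)[:, :top_k]
print(top_idx, probs[0, top_idx[0]])
```

Each index in top_idx can be mapped to a label name through model.config.id2label, as in the example above.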