FRACTAL-LidarHD_7cl_randlanet

The general characteristics of the FRACTAL-LidarHD_7cl_randlanet model are:

- Trained with the FRACTAL dataset

- Aerial lidar point clouds, colorized with RGB + near-infrared, with high point density (~40 pts/m²)

- RandLa-Net architecture as implemented in the Myria3D library

- 7-class nomenclature: [other, ground, vegetation, building, water, bridge, permanent structure]

## Model Information

- **Code repository:** https://github.com/IGNF/myria3d (V3.8)

- **Paper:** TBD

- **Developed by:** IGN

- **Compute infrastructure:**
  - software: python, pytorch-lightning
  - hardware: in-house HPC/AI resources

- **License:** Etalab 2.0

---

## Uses

The model was specifically trained and designed for the **semantic segmentation of aerial lidar point clouds from the Lidar HD program (2020-2025)**.

Lidar HD is an ambitious initiative that aims to obtain a 3D description of the French territory by 2026.

While the model could be applied to other types of point clouds, [Lidar HD](https://geoservices.ign.fr/lidarhd) data have specific geometric specifications. Furthermore, the training data was colorized with very-high-definition aerial images from the [BD ORTHO®](https://geoservices.ign.fr/bdortho), which have their own spatial and radiometric specifications. Consequently, the model's predictions are expected to be better on aerial lidar point clouds whose density and colorimetry are similar to those of the training data.

**_Multi-domain model_**: The FRACTAL dataset used for training covers 5 spatial domains from 5 southern regions of metropolitan France.

The 250 km² of data in FRACTAL were sampled from an original 17440 km² area, and cover a wide diversity of landscapes and scenes.

While large and diverse, this data only covers a fraction of the French territory, and the model should be used with adequate verifications when applied to new domains.

That said, while domain shifts are frequent in aerial imagery due to differing acquisition conditions and downstream data processing, aerial lidar point clouds are expected to have more consistent characteristics (density, range of acquisition angles, etc.) across spatial domains.

## Bias, Risks, Limitations and Recommendations

---

## How to Get Started with the Model

The model was trained with an open-source deep learning code repository developed in-house: [github.com/IGNF/myria3d](https://github.com/IGNF/myria3d).

Inference is only supported in this library, and inference instructions are detailed in the code repository documentation.

Patch-based inference on large point clouds (e.g. 1 x 1 km Lidar HD tiles) is supported, with or without overlapping sliding windows (no overlap by default).
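To make the sliding-window idea concrete, here is a minimal sketch of how a square tile could be split into square patches. It is not the Myria3D implementation: the function name `patch_origins` and its parameters are hypothetical, and the 50 m window size is an assumption taken from the training patch size.

```python
def patch_origins(tile_size=1000.0, patch_size=50.0, overlap=0.0):
    """Return the lower-left (x, y) offsets, in meters, of square windows covering a square tile.

    All names and defaults here are illustrative assumptions, not Myria3D parameters.
    """
    if patch_size > tile_size:
        raise ValueError("patch_size must not exceed tile_size")
    if not 0.0 <= overlap < patch_size:
        raise ValueError("overlap must be in [0, patch_size)")

    stride = patch_size - overlap
    offsets = []
    position = 0.0
    # Slide the window while it ends strictly before the tile border.
    while position + patch_size < tile_size:
        offsets.append(position)
        position += stride
    # The last window is placed flush with the tile border.
    offsets.append(tile_size - patch_size)

    return [(x, y) for x in offsets for y in offsets]


no_overlap = patch_origins()
half_overlap = patch_origins(overlap=25.0)
print(len(no_overlap), len(half_overlap))
```

With overlapping windows, a point falls into several patches, so the per-patch predictions then have to be merged; how that merge is done is left out of this sketch.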

The original point cloud is augmented with several dimensions: a PredictedClassification dimension, an entropy dimension, and (optionally) class probability dimensions (e.g. building, ground...).
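As an illustration of how these output dimensions relate to each other, the sketch below derives a predicted class and an entropy value from per-point class probabilities. It is a sketch under assumptions: the function name is hypothetical, the entropy uses the natural logarithm without normalization, and class indices are simply positions in the 7-class nomenclature, which may differ from the codes actually written by Myria3D.

```python
import numpy as np

# 7-class nomenclature of the model, in the order given in this model card.
CLASSES = ["other", "ground", "vegetation", "building", "water", "bridge", "permanent structure"]


def classify_with_entropy(probas):
    """probas: (N, 7) array of per-point class probabilities, each row summing to 1."""
    probas = np.asarray(probas, dtype=np.float64)
    # Predicted class: index of the most probable class for each point.
    predicted = probas.argmax(axis=1)
    # Shannon entropy (natural log); clipping avoids log(0) for zero probabilities.
    safe = np.clip(probas, 1e-12, 1.0)
    entropy = -(probas * np.log(safe)).sum(axis=1)
    return predicted, entropy


probas = np.array([
    [1 / 7] * 7,                          # fully uncertain point
    [0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0],  # point confidently labeled "building"
])
predicted, entropy = classify_with_entropy(probas)
print(CLASSES[predicted[1]], entropy)
```

A high entropy flags points where the model hesitates between classes, which can help target manual verification.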

For convenience and scalable model deployment, Myria3D comes with a Dockerfile.

---

## Training Details

The data comes from the Lidar HD program, more specifically from acquisition areas that underwent automated classification followed by manual correction (so-called "optimized Lidar HD").

It meets the quality requirements of the Lidar HD program, which accepts a controlled level of classification errors for each semantic class.

### Training Data

80,000 point cloud patches of 50 x 50 meters were used to train the **FRACTAL-LidarHD_7cl_randlanet** model.

10,000 additional patches were used for model validation.

### Training Procedure

#### Preprocessing

Point clouds were preprocessed for training with point subsampling, filtering of artifact points, on-the-fly creation of colorimetric features, and normalization of features and coordinates.

For inference, the preprocessing should match the training preprocessing as closely as possible. Refer to the inference configuration file, and to the Myria3D code repository (V3.8).
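The following sketch illustrates the kind of per-patch preprocessing involved, using the numeric values listed under Training Hyperparameters. It is not the Myria3D code: function and argument names are hypothetical, grid sampling is omitted, and several details are assumptions (the order of operations, `log(1 + x)` as the log-normalization, and per-patch statistics for the standardization).

```python
import numpy as np

MAX_POINTS = 40_000    # random sampling cap
COORD_SCALE = 25.0     # meters
ECHO_SCALE = 7.0       # scaling of echo number and number of echoes
COLOR_SCALE = 65280.0  # 255 * 256


def log_standardize_clip(values, n_std=3.0):
    """Assumed reading of 'log-normalization, standardization, clipping above 3 std'."""
    logged = np.log1p(np.asarray(values, dtype=np.float64))
    std = logged.std()
    standardized = (logged - logged.mean()) / (std if std > 0 else 1.0)
    return np.clip(standardized, -n_std, n_std)


def preprocess_patch(xyz, echo_number, number_of_echoes, reflectance, rgbn, rng=None):
    """xyz: (N, 3); rgbn: (N, 4) ordered as R, G, B, NIR; other inputs: (N,)."""
    rng = np.random.default_rng() if rng is None else rng
    arrays = [np.asarray(a) for a in (xyz, echo_number, number_of_echoes, reflectance, rgbn)]
    n_points = arrays[0].shape[0]
    if n_points > MAX_POINTS:
        # Random sampling, applied only when the patch has more points than the cap.
        keep = rng.choice(n_points, size=MAX_POINTS, replace=False)
        arrays = [a[keep] for a in arrays]
    xyz, echo_number, number_of_echoes, reflectance, rgbn = arrays

    pos = xyz.astype(np.float64)           # astype returns a copy, inputs stay untouched
    pos[:, :2] -= pos[:, :2].mean(axis=0)  # horizontal normalization: mean xy subtraction
    pos[:, 2] -= pos[:, 2].min()           # vertical normalization: min z subtraction
    pos /= COORD_SCALE                     # coordinates normalization

    colors = rgbn.astype(np.float64) / COLOR_SCALE
    colors[echo_number > 1] = 0.0          # basic occlusion model

    red, nir = colors[:, 0], colors[:, 3]
    average_color = colors[:, :3].mean(axis=1)  # (R + G + B) / 3
    denom = nir + red
    # NDVI = (NIR - R) / (NIR + R), set to 0 where the denominator is 0.
    ndvi = np.divide(nir - red, denom, out=np.zeros_like(denom), where=denom > 0)

    return {
        "pos": pos,
        "echo_number": echo_number / ECHO_SCALE,
        "number_of_echoes": number_of_echoes / ECHO_SCALE,
        "reflectance": log_standardize_clip(reflectance),
        "colors": colors,
        "average_color": log_standardize_clip(average_color),
        "ndvi": ndvi,
    }
```

For actual inference, the configuration file shipped with the model remains the reference; this sketch only shows how the listed steps fit together.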

#### Training Hyperparameters

* Model architecture: RandLa-Net (implemented with the PyTorch Geometric framework in [Myria3D](https://github.com/IGNF/myria3d/blob/main/myria3d/models/modules/pyg_randla_net.py))
* Augmentation:
  * VerticalFlip(p=0.5)
  * HorizontalFlip(p=0.5)
* Features:
  * Lidar: x, y, z, echo number (1-based numbering), number of echoes, reflectance (a.k.a. intensity)
  * Colors:
    * Original: RGB + Near-Infrared (colorization from aerial images by vertical pixel-point alignment)
    * Derived: average color = (R+G+B)/3 and NDVI.
* Input preprocessing:
  * grid sampling: 0.25 m
  * random sampling: down to 40,000 points (if the patch contains more)
  * horizontal normalization: mean xy subtraction
  * vertical normalization: min z subtraction
  * coordinates normalization: division by 25 meters
  * basic occlusion model: nullify color channels if echo_number > 1
  * features scaling (0-1 range):
    * echo number and number of echoes: division by 7
    * color (r, g, b, near-infrared, average color): division by 65280 (i.e. 255*256)
  * features normalization:
    * reflectance: log-normalization, standardization, clipping of amplitude above 3 standard deviations.
    * average color: same as reflectance.
* Batch size: 10

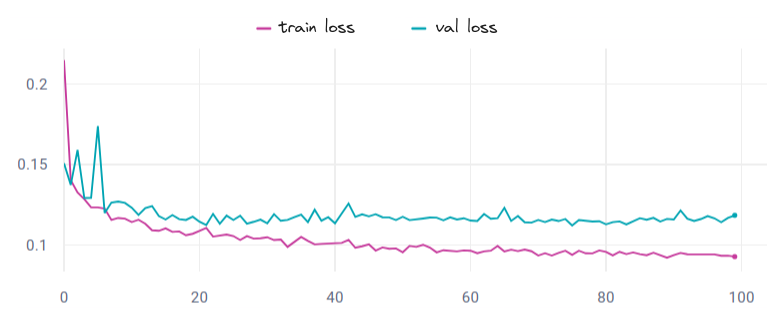

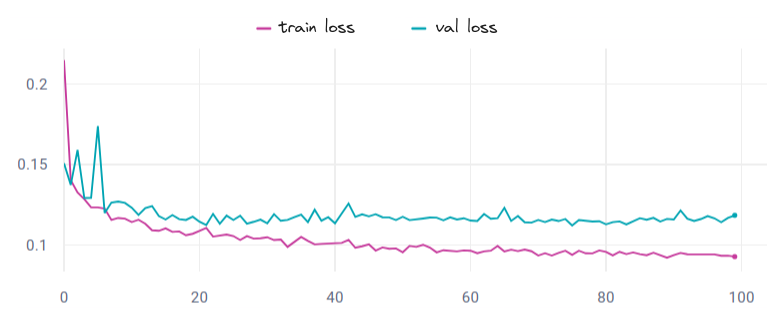

* Number of epochs: 100 (min) - 150 (max)
* Early stopping: patience 6, with val_loss as the monitored criterion
* Optimizer: Adam
* Scheduler: mode = "min", factor = 0.5, patience = 20, cooldown = 5
* Learning rate: 0.004
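The optimizer and scheduler settings above can be written down in plain PyTorch as follows. This is a hedged sketch rather than the actual training setup: the scheduler parameters match the signature of `torch.optim.lr_scheduler.ReduceLROnPlateau`, so that scheduler is assumed here, and the `torch.nn.Linear` module is only a placeholder standing in for the RandLa-Net network.

```python
import torch

# Placeholder network (assumption): 9 input features, 7 output classes.
model = torch.nn.Linear(9, 7)

optimizer = torch.optim.Adam(model.parameters(), lr=0.004)
# Assumed scheduler: halves the learning rate when the monitored loss stops improving.
scheduler = torch.optim.lr_scheduler.ReduceLROnPlateau(
    optimizer, mode="min", factor=0.5, patience=20, cooldown=5
)


def run_epochs(val_losses):
    """Feed one validation loss per epoch to the scheduler and record the learning rate."""
    learning_rates = []
    for val_loss in val_losses:
        # A real loop would train and validate the model here before stepping.
        scheduler.step(val_loss)
        learning_rates.append(optimizer.param_groups[0]["lr"])
    return learning_rates


# Simulate a validation loss that stays flat for 30 epochs.
learning_rates = run_epochs([0.5] * 30)
print(learning_rates[9], learning_rates[-1])
```

In a PyTorch Lightning setup, the epoch bounds and early stopping would typically be handled by the trainer and its callbacks rather than by this loop.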

#### Speeds, Sizes, Times

The **FRACTAL-LidarHD_7cl_randlanet** model was trained on an in-house HPC cluster.

6 V100 GPUs were used (2 nodes, 3 GPUs per node). With this configuration, training takes approximately 30 minutes per epoch.

The model was obtained at num_epoch=21, with a corresponding val_loss=0.112.