---
license: etalab-2.0
tags:
- segmentation
- pytorch
- aerial imagery
- landcover
- IGN
model-index:
- name: FLAIR-INC_RVBIE_unetresnet34_15cl_norm
  results:
  - task:
      type: semantic-segmentation
    dataset:
      name: IGNF/FLAIR/
      type: earth-observation-dataset
    metrics:
    - name: mIoU
      type: mIoU
      value: 54.7168
    - name: Overall Accuracy
      type: OA
      value: 76.3711
    - name: Fscore
      type: Fscore
      value: 67.6063
    - name: Precision
      type: Precision
      value: 69.3481
    - name: Recall
      type: Recall
      value: 67.6565
    - name: IoU Buildings
      type: IoU
      value: 82.6313
    - name: IoU Pervious surface
      type: IoU
      value: 53.2351
    - name: IoU Impervious surface
      type: IoU
      value: 74.1742
    - name: IoU Bare soil
      type: IoU
      value: 60.3958
    - name: IoU Water
      type: IoU
      value: 87.5887
    - name: IoU Coniferous
      type: IoU
      value: 46.3504
    - name: IoU Deciduous
      type: IoU
      value: 67.4473
    - name: IoU Brushwood
      type: IoU
      value: 30.2346
    - name: IoU Vineyard
      type: IoU
      value: 82.9251
    - name: IoU Herbaceous vegetation
      type: IoU
      value: 55.0283
    - name: IoU Agricultural land
      type: IoU
      value: 52.0145
    - name: IoU Plowed land
      type: IoU
      value: 40.8387
    - name: IoU Swimming pool
      type: IoU
      value: 48.4433
    - name: IoU Greenhouse
      type: IoU
      value: 39.4447
pipeline_tag: image-segmentation
---

# FLAIR model collection

The FLAIR models are a collection of semantic segmentation models initially developed to classify land cover on very high resolution aerial ortho-images ([BD ORTHO®](https://geoservices.ign.fr/bdortho)).

The distributed pre-trained models differ in their:

* training dataset: the [**FLAIR** dataset](https://huggingface.co/datasets/IGNF/FLAIR) or **FLAIR-INC**, an extended version of this dataset (3.5 times as many patches)
* input modalities: **RGB** (natural colours), **RGBI** (natural colours + infrared), **RGBIE** (natural colours + infrared + elevation)
* model architecture: **resnet34_unet** (U-Net with a Resnet-34 encoder), **deeplab**
* target class nomenclature: **12cl** (12 land cover classes) or **15cl** (15 land cover classes)

# FLAIR-INC_RVBIE_resnet34_unet_15cl_norm model

The general characteristics of this specific model **FLAIR-INC_RVBIE_resnet34_unet_15cl_norm** are:

* RGBIE images (true colours + infrared + elevation)
* U-Net with a Resnet-34 encoder
* 15 class nomenclature: [building, pervious_surface, impervious_surface, bare_soil, water, coniferous, deciduous, brushwood, vineyard, herbaceous, agricultural_land, plowed_land, swimming_pool, snow, greenhouse]

## Model Information

- **Repository:** https://github.com/IGNF/FLAIR-1-AI-Challenge
- **Paper:** https://arxiv.org/pdf/2211.12979.pdf
- **Developed by:** IGN
- **Compute infrastructure:**
    - software: python, pytorch-lightning
    - hardware: GENCI, XXX
- **License:** Apache 2.0

## Uses

Although the model can be applied to other types of very high spatial resolution Earth observation images, it was initially developed to classify aerial images acquired over French territory.

These images come from the [BD ORTHO®](https://geoservices.ign.fr/bdortho) product, which has its own spatial and radiometric specifications. The model is not intended to be generic to other types of very high spatial resolution images; it is specific to BD ORTHO images.

As a result, the closer the user's images are to the original ones, the better the model's predictions will be.

_**Radiometry of input images**_:

The BD ORTHO input images are distributed in 8-bit encoding per channel. When training the model, input normalization was performed (see section **Training Details**).

It is recommended that the user apply the same type of input normalization at inference time.

_**Multi-domain model**_:

The FLAIR-INC dataset used for training is composed of 75 radiometric domains. In aerial imagery, domain shifts are frequent and are mainly due to the acquisition date of the aerial survey (April to November), the spatial domain (equivalent to a French department, an administrative division) and downstream radiometric processing.

By construction (sampling 75 domains), the model is robust to these shifts and can be applied to any image of the [BD ORTHO® product](https://geoservices.ign.fr/bdortho).

_**Land cover classes of prediction**_:

The original class nomenclature of the FLAIR dataset is made up of 19 classes (see the [FLAIR dataset](https://huggingface.co/datasets/IGNF/FLAIR) page for details).

However, 3 classes corresponding to uncertain labelling (Mixed (16), Ligneous (17) and Other (19)) and 1 class with very poor labelling (Clear cut (15)) were deactivated during training.

As a result, the logits produced by the model are of size 19x1, but classes 15, 16, 17 and 19 (1) should appear at 0 in the logits and (2) should never be predicted by the argmax.
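As a defensive measure at inference time, the four deactivated channels can be excluded explicitly before taking the argmax. The sketch below is a minimal NumPy illustration, not the authors' pipeline. It assumes that the logit channels follow the class numbering (so classes 15, 16, 17 and 19 sit at zero-based indices 14, 15, 16 and 18) and that the logits are stored as a `(19, H, W)` array; `predict_labels` and `DISABLED` are hypothetical names.

```python
import numpy as np

# Zero-based indices of the classes deactivated during training
# (15 clear cut, 16 mixed, 17 ligneous, 19 other). Assumption: the
# logit channels follow the class numbering of the nomenclature.
DISABLED = [14, 15, 16, 18]

def predict_labels(logits: np.ndarray) -> np.ndarray:
    """Return a (H, W) map of 1-based class ids from (19, H, W) logits."""
    masked = logits.astype(np.float32, copy=True)
    masked[DISABLED] = -np.inf  # these channels can no longer win the argmax
    return masked.argmax(axis=0) + 1  # shift back to 1-based class ids

# Toy example: random logits where a deactivated channel would otherwise win.
rng = np.random.default_rng(0)
logits = rng.normal(size=(19, 4, 4)).astype(np.float32)
logits[14] = 10.0  # artificially boost "clear cut"
labels = predict_labels(logits)
```

Masking with `-inf` rather than relying on the deactivated logits being 0 matters when every active logit of a pixel is negative, since a value of 0 would then win the argmax.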

## Bias, Risks, Limitations and Recommendations

_**Using the model on input images with other spatial resolution**_:

The FLAIR-INC_RVBIE_resnet34_unet_15cl_norm model was trained under fixed scale conditions. All patches used for training are derived from aerial images with a spatial resolution of 0.2 meters. Only flip and rotation augmentations were applied during training.

No scale-related data augmentation was used during training. Users should be aware that generalization issues can occur when applying this model to images with a different spatial resolution.

_**Using the model for other remote sensing sensors**_:

The FLAIR-INC_RVBIE_resnet34_unet_15cl_norm model was trained on aerial images of the [BD ORTHO® product](https://geoservices.ign.fr/bdortho), which undergo very specific radiometric image processing.

Using the model on other types of aerial or satellite images may require transfer learning or domain adaptation techniques.

_**Using the model on other spatial areas**_:

The FLAIR-INC_RVBIE_resnet34_unet_15cl_norm model was trained on patches representing metropolitan France.

Users should be aware that applying the model to other types of landscapes may lead to a drop in model metrics.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

218 400 patches of 512 x 512 pixels were used to train the **FLAIR-INC_RVBIE_resnet34_unet_15cl_norm** model.

The train/validation split was performed patchwise to obtain an 80% / 20% distribution between train and validation.

Annotation was performed at the _zone_ level (~100 patches per _zone_). Spatial independence between the two sets is guaranteed because patches from the same _zone_ were assigned to the same set (TRAIN or VALIDATION).

The number of patches used for train and validation is as follows:

| Set | Number of patches |
| --- | --- |
| TRAIN | 174 700 |
| VALIDATION | 43 700 |
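The zone-level assignment described above can be sketched as a group-wise split. This is only an illustration of the principle, not the procedure actually used to build the sets: the function name, the patch and zone identifiers, and the greedy filling strategy are assumptions, and reusing the seed 2022 here is a choice made for the example.

```python
import random
from collections import defaultdict

def split_by_zone(patch_zones, val_fraction=0.2, seed=2022):
    """Assign whole zones to TRAIN or VALIDATION.

    patch_zones maps a patch id to its zone id (hypothetical identifiers).
    """
    zones = defaultdict(list)
    for patch_id, zone_id in patch_zones.items():
        zones[zone_id].append(patch_id)

    zone_ids = sorted(zones)
    random.Random(seed).shuffle(zone_ids)

    total = len(patch_zones)
    train, val = [], []
    for zone_id in zone_ids:
        # Fill VALIDATION until it holds roughly val_fraction of the patches,
        # then send every remaining zone to TRAIN.
        target = val if len(val) < val_fraction * total else train
        target.extend(zones[zone_id])
    return train, val

# Toy data: 50 zones of 100 patches each.
patch_zones = {f"z{z:02d}_p{p:03d}": f"z{z:02d}" for z in range(50) for p in range(100)}
train, val = split_by_zone(patch_zones)
```

Because zones are never divided, no patch in VALIDATION shares a zone with a patch in TRAIN, while the patch counts still approach the 80% / 20% target.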

### Training Procedure

#### Preprocessing

To train the model, input normalization was performed so that the input dataset has a **mean of 0** and a **standard deviation of 1**, channel-wise.

The statistics of the TRAIN+VALIDATION set were used for input normalization. It is recommended that the user apply the same type of input normalization. The statistics of the TRAIN+VALIDATION set are:

| Modalities | Mean (Train + Validation) | Std (Train + Validation) |
| ----------------------- | ----------- | ----------- |
| Red Channel (R) | 105.08 | 52.17 |
| Green Channel (G) | 110.87 | 45.38 |
| Blue Channel (B) | 101.82 | 44.00 |
| Infrared Channel (I) | 106.38 | 39.69 |
| Elevation Channel (E) | 53.26 | 79.30 |
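A minimal sketch of this channel-wise normalization with NumPy is given below. It assumes a patch stored as a `(5, H, W)` array with channels in R, G, B, I, E order, matching the order of the table; the function and constant names are illustrative and do not come from the FLAIR code base.

```python
import numpy as np

# Channel-wise statistics from the table above, in R, G, B, I, E order.
NORM_MEANS = np.array([105.08, 110.87, 101.82, 106.38, 53.26], dtype=np.float32)
NORM_STDS = np.array([52.17, 45.38, 44.00, 39.69, 79.30], dtype=np.float32)

def normalize_patch(patch: np.ndarray) -> np.ndarray:
    """Standardize a (5, H, W) 8-bit patch channel by channel."""
    if patch.shape[0] != 5:
        raise ValueError("expected 5 channels in R, G, B, I, E order")
    x = patch.astype(np.float32)
    # Reshape the statistics to (5, 1, 1) so they broadcast over H and W.
    return (x - NORM_MEANS[:, None, None]) / NORM_STDS[:, None, None]

# Toy patch: every pixel of every channel set to 128.
patch = np.full((5, 512, 512), 128, dtype=np.uint8)
normalized = normalize_patch(patch)
```

Casting to `float32` before subtracting avoids the wrap-around that unsigned 8-bit arithmetic would otherwise produce for pixels darker than the channel mean.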

#### Training Hyperparameters

- **Training regime:** [More Information Needed]

* Model architecture: Unet (implementation from the [Segmentation Models Pytorch library](https://segmentation-modelspytorch.readthedocs.io/en/latest/docs/api.html#unet))
* Encoder: Resnet-34 pre-trained with ImageNet
* Augmentation:
    * VerticalFlip(p=0.5)
    * HorizontalFlip(p=0.5)
    * RandomRotate90(p=0.5)
* Input normalization (mean=0 | std=1):
    * norm_means: [105.08, 110.87, 101.82, 106.38, 53.26]
    * norm_stds: [52.17, 45.38, 44, 39.69, 79.3]
* Seed: 2022
* Batch size: 10
* Number of epochs: 200
* Early stopping: patience 30, with val_loss as the monitored criterion
* Optimizer: SGD
* Scheduler: mode = "min", factor = 0.5, patience = 10, cooldown = 4, min_lr = 1e-7
* Learning rate: 0.02
* Class Weights: [1-building: 1.0 , 2-pervious surface: 1.0 , 3-impervious surface: 1.0 , 4-bare soil: 1.0 , 5-water: 1.0 , 6-coniferous: 1.0 , 7-deciduous: 1.0 , 8-brushwood: 1.0 , 9-vineyard: 1.0 , 10-herbaceous vegetation: 1.0 , 11-agricultural land: 1.0 , 12-plowed land: 1.0 , 13-swimming_pool: 1.0 , 14-snow: 1.0 , 15-clear cut: 0.0 , 16-mixed: 0.0 , 17-ligneous: 0.0 , 18-greenhouse: 1.0 , 19-other: 0.0]
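The three geometric augmentations listed above can be sketched in plain NumPy as follows. This is an illustrative equivalent rather than the training code: it does not reproduce any particular augmentation library, the `(C, H, W)` image layout and the `augment` helper are assumptions, and the rotation step is taken to mean a rotation by a random multiple of 90 degrees.

```python
import numpy as np

def augment(image, mask, rng):
    """Apply the same random flips and 90-degree rotation to image and mask.

    image: (C, H, W) array, mask: (H, W) array.
    """
    if rng.random() < 0.5:  # vertical flip with p=0.5
        image, mask = image[:, ::-1, :], mask[::-1, :]
    if rng.random() < 0.5:  # horizontal flip with p=0.5
        image, mask = image[:, :, ::-1], mask[:, ::-1]
    if rng.random() < 0.5:  # rotation with p=0.5, by k * 90 degrees
        k = int(rng.integers(0, 4))
        image = np.rot90(image, k, axes=(1, 2))
        mask = np.rot90(mask, k, axes=(0, 1))
    # Return contiguous copies instead of strided views.
    return np.ascontiguousarray(image), np.ascontiguousarray(mask)

# Toy example: the image channels are built from the mask, so any
# geometric mismatch between image and mask would be visible.
rng = np.random.default_rng(2022)
mask = np.arange(16).reshape(4, 4)
image = np.stack([mask, mask + 100])
aug_image, aug_mask = augment(image, mask, rng)
```

Applying each transform to the image and the mask in the same call keeps the labels aligned with the pixels, which is the main constraint for segmentation augmentation.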

#### Speeds, Sizes, Times

The FLAIR-INC_RVBIE_resnet34_unet_15cl_norm model was trained on HPC/AI resources provided by GENCI-IDRIS (Grant 2022-A0131013803). 16 V100 GPUs were requested (4 nodes, 4 GPUs per node).

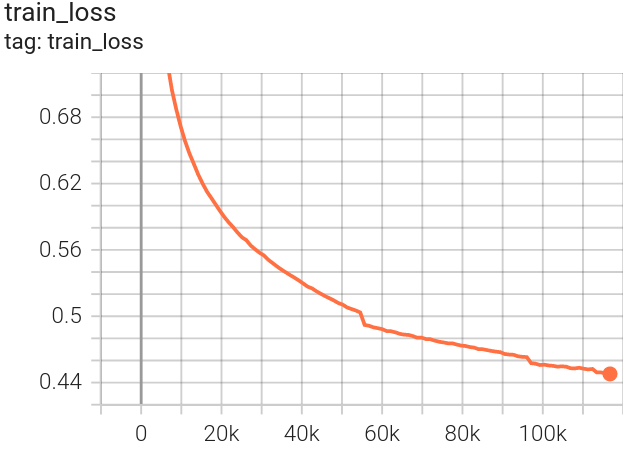

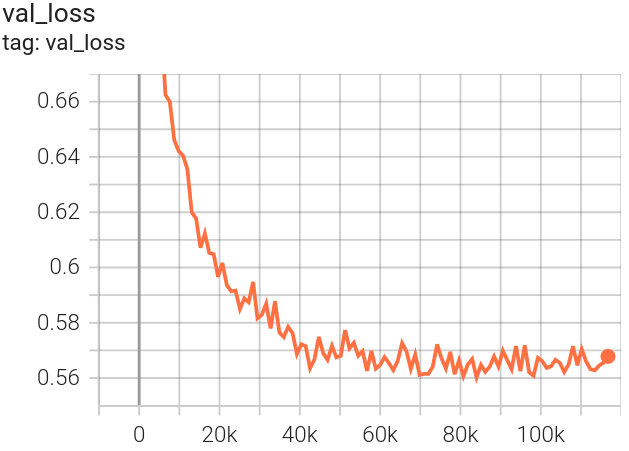

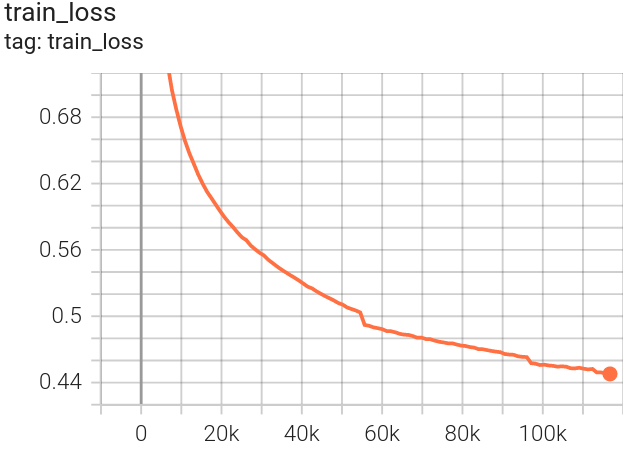

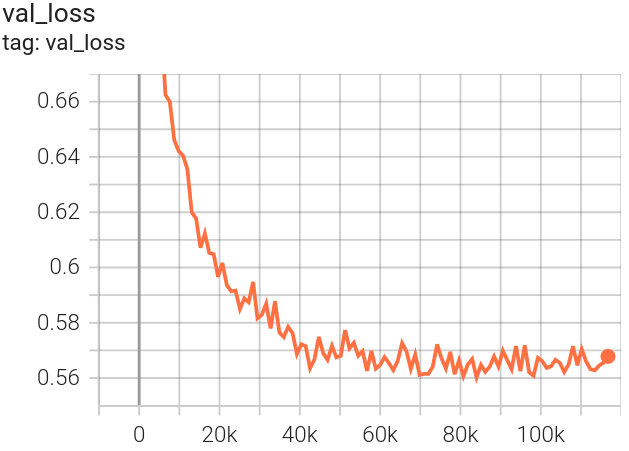

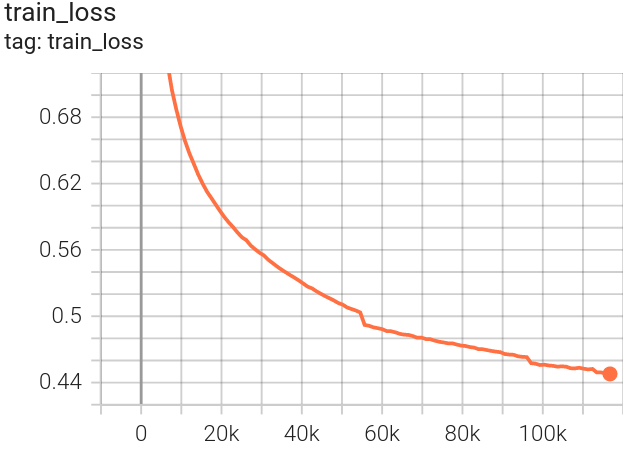

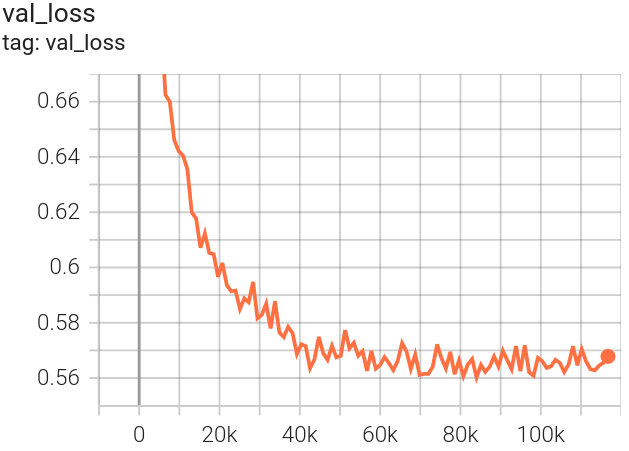

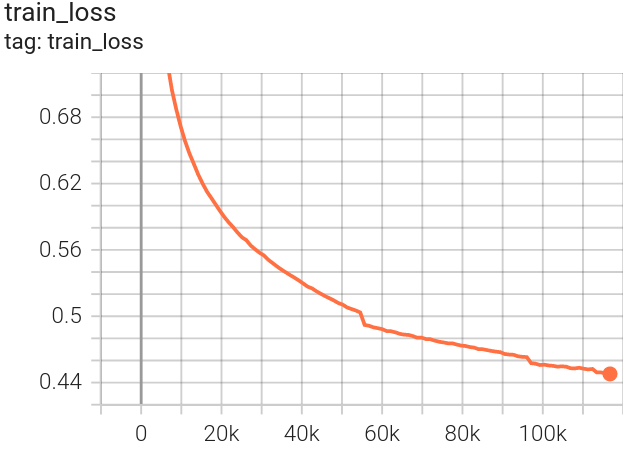

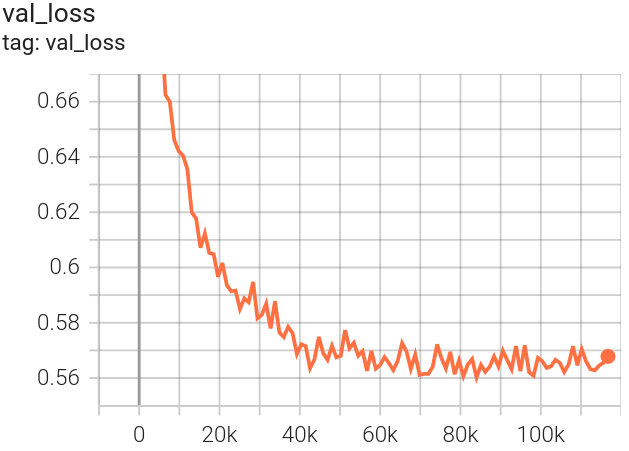

FLAIR-INC_RVBIE_resnet34_unet_15cl_norm was obtained for num_epoch=76 with corresponding val_loss=0.56.

[Figures: TRAIN loss and VALIDATION loss]

## Evaluation

### Testing Data, Factors & Metrics

#### Testing Data

The evaluation was performed on a TEST set of 31750 patches that are independent from the TRAIN and VALIDATION patches. They represent 15 spatio-temporal domains.

The TEST set corresponds to the union of the TEST sets of the FLAIR#1 and FLAIR#2 scientific challenges. See the [FLAIR challenge page](https://ignf.github.io/FLAIR/) for more details.

A separate TEST set was chosen instead of cross-validation to remain consistent with the FLAIR challenges.

However, the challenge metrics were computed on 12 classes and the TEST set was built accordingly. As a result, the _Snow_ class is absent from the TEST set.

#### Metrics

With this evaluation protocol, the **FLAIR-INC_RVBIE_resnet34_unet_15cl_norm** model reaches **OA = 76.37%** and **mIoU = 54.72%**.

The following table gives the class-wise metrics:

| Class | IoU (%) | Fscore (%) | Precision (%) | Recall (%) |
| ----------------------- | ----------|---------|---------|---------|
| building | 82.63 | 90.49 | 90.26 | 90.72 |
| pervious surface | 53.24 | 69.48 | 68.97 | 70.00 |
| impervious surface | 74.17 | 85.17 | 86.28 | 84.09 |
| bare soil | 60.40 | 75.31 | 80.49 | 70.75 |
| water | 87.59 | 93.38 | 93.16 | 93.61 |
| coniferous | 46.35 | 63.34 | 63.52 | 63.16 |
| deciduous | 67.45 | 80.56 | 77.44 | 83.94 |
| brushwood | 30.23 | 46.43 | 63.55 | 36.58 |
| vineyard | 82.93 | 90.67 | 91.35 | 89.99 |
| herbaceous vegetation | 55.03 | 70.99 | 70.59 | 71.40 |
| agricultural land | 52.01 | 68.43 | 59.18 | 81.12 |
| plowed land | 40.84 | 57.99 | 68.28 | 50.40 |
| swimming_pool | 48.44 | 65.27 | 81.62 | 54.37 |
| snow | 0.00 | 0.00 | 0.00 | 0.00 |
| greenhouse | 39.45 | 56.57 | 45.52 | 74.72 |
| **average** | **54.72** | **67.60** | **69.35** | **67.66** |
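For readers who want to recompute such figures on their own predictions, the sketch below derives per-class IoU, precision, recall and F-score from a confusion matrix, and checks that averaging the IoU column over all 15 rows (snow counted as 0) gives back the reported mIoU. The row/column convention of the confusion matrix, the handling of empty classes and the helper names are assumptions, not a description of the official evaluation code.

```python
import numpy as np

def class_metrics(conf: np.ndarray):
    """Per-class IoU, precision, recall and F-score (in %) from a confusion
    matrix whose rows are reference classes and columns are predictions."""
    tp = np.diag(conf).astype(np.float64)
    fp = conf.sum(axis=0) - tp  # predicted as the class, labelled otherwise
    fn = conf.sum(axis=1) - tp  # labelled as the class, predicted otherwise

    def ratio(num, den):
        # Classes with an empty denominator (e.g. absent from the set) score 0.
        return 100.0 * np.divide(num, den, out=np.zeros_like(num), where=den > 0)

    return {
        "iou": ratio(tp, tp + fp + fn),
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
        "fscore": ratio(2 * tp, 2 * tp + fp + fn),
    }

# IoU column of the table above, snow included as 0.
iou_table = [82.63, 53.24, 74.17, 60.40, 87.59, 46.35, 67.45, 30.23,
             82.93, 55.03, 52.01, 40.84, 48.44, 0.00, 39.45]
mean_iou = float(np.mean(iou_table))  # averaged over all 15 rows

# Toy 3-class confusion matrix in which the third class never occurs.
toy = np.array([[8, 2, 0], [1, 9, 0], [0, 0, 0]])
metrics = class_metrics(toy)
```

Since the snow row contributes 0 to the 15-class average, the mean over the 14 classes actually present in the TEST set is higher than the reported mIoU.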

[Figures: Confusion Matrix (precision) and Confusion Matrix (recall)]

### Results

[More Information Needed]

#### Summary

[More Information Needed]

## Technical Specifications

### Model Architecture and Objective

[More Information Needed]

## Citation

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Contact

ai-challenge@ign.fr
