---

# For reference on model card metadata, see the spec: https://github.com/huggingface/hub-docs/blob/main/modelcard.md?plain=1

# Doc / guide: https://huggingface.co/docs/hub/model-cards

{}

---

# ToonCrafter (512x320) Generative Cartoon Interpolation Model Card

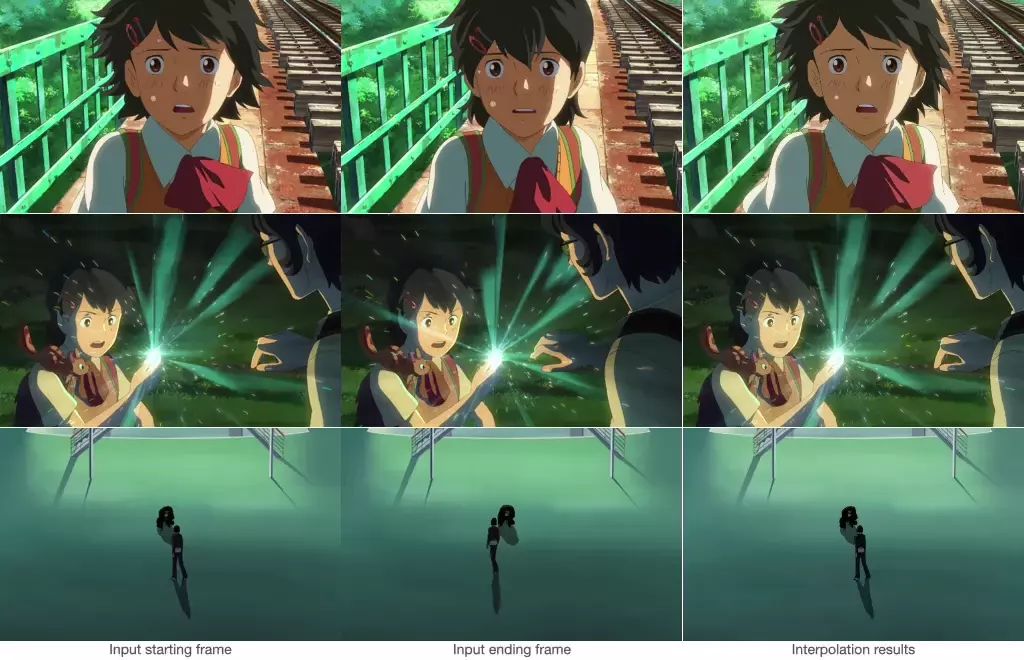

ToonCrafter (512x320) is a video diffusion model that takes two still images as conditioning frames, together with a text prompt describing the dynamics between them, and generates an interpolation video from them.

## Model Details

### Model Description

ToonCrafter, a generative cartoon interpolation approach, aims to generate short video clips (~2 seconds) from two conditioning images (a starting frame and an ending frame) and a text prompt.

This model was trained to generate 16 video frames at a resolution of 512x320, given conditioning frames (the starting and ending frames) of the same resolution.
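Because the conditioning frames are expected at the 512x320 training resolution, input images with a different shape have to be brought to that size first. The sketch below shows one way to do it: compute the largest centered crop with the same 8:5 aspect ratio, then resize. It assumes that 512x320 means width x height and that center-cropping is acceptable preprocessing; `center_crop_box` is a hypothetical helper, not part of the official ToonCrafter code.

```python
TARGET_W, TARGET_H = 512, 320  # assumed (width, height) of the 512x320 model


def center_crop_box(width, height, target_w=TARGET_W, target_h=TARGET_H):
    """Return (left, top, right, bottom) of the largest centered box whose
    aspect ratio equals target_w:target_h. Hypothetical helper for illustration.
    Integer cross-multiplication avoids floating-point rounding issues."""
    if width * target_h > height * target_w:
        # Image is wider than the target ratio: keep full height, trim the sides.
        new_w = height * target_w // target_h
        left = (width - new_w) // 2
        return (left, 0, left + new_w, height)
    # Image is taller than (or exactly at) the target ratio: keep full width,
    # trim top and bottom.
    new_h = width * target_h // target_w
    top = (height - new_h) // 2
    return (0, top, width, top + new_h)


for size in [(1920, 1080), (1000, 1000), (512, 320)]:
    print(size, "->", center_crop_box(*size))
```

With Pillow, for example, the returned box can be passed to `Image.crop(box)` and the cropped image resized with `Image.resize((512, 320))`; the same crop should be applied to both the starting and ending frames so they stay aligned.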

- **Developed by:** CUHK & Tencent AI Lab

- **Funded by:** CUHK & Tencent AI Lab

- **Model type:** Video Diffusion Model

- **Finetuned from model:** DynamiCrafter-interpolation (512x320)

### Model Sources

For research purposes, we recommend our GitHub repository (https://github.com/ToonCrafter/ToonCrafter), which includes the detailed implementation.

- **Repository:** https://github.com/ToonCrafter/ToonCrafter

- **Paper:** https://arxiv.org/abs/2405.17933

- **Project page:** https://doubiiu.github.io/projects/ToonCrafter/

- **Demo1:** https://huggingface.co/spaces/Doubiiu/tooncrafter

- **Demo2:** https://replicate.com/fofr/tooncrafter

## Uses

Feel free to use it under the Apache-2.0 license. Note that we currently have no official commercial product for ToonCrafter.

## Limitations

- The generated videos are relatively short (16 frames at 8 FPS, i.e. 2 seconds).

- The model cannot render legible text.

- The autoencoding part of the model is lossy, resulting in slight flickering artifacts.
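The clip length above follows directly from the frame count and frame rate: 16 frames played at 8 FPS last 16 / 8 = 2 seconds. A minimal sketch of that arithmetic, assuming the frames are evenly spaced and the first frame is shown at t = 0 (`frame_timestamps` and `clip_duration` are illustrative names, not part of the ToonCrafter code):

```python
NUM_FRAMES = 16  # frames per generated clip (stated in this model card)
FPS = 8          # playback rate listed under Limitations


def frame_timestamps(num_frames=NUM_FRAMES, fps=FPS):
    """Display time in seconds of each generated frame, starting at 0."""
    return [i / fps for i in range(num_frames)]


def clip_duration(num_frames=NUM_FRAMES, fps=FPS):
    """Total playback length in seconds: frame count divided by frame rate."""
    return num_frames / fps


print(clip_duration())         # 2.0
print(frame_timestamps()[-1])  # 1.875 (last frame starts 1/8 s before the clip ends)
```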

## How to Get Started with the Model

See the GitHub repository (https://github.com/ToonCrafter/ToonCrafter) for setup and inference instructions.