---

base_model: m-a-p/OpenCodeInterpreter-DS-6.7B

language:

- en

pipeline_tag: text-generation

tags:

- code

license: apache-2.0

model_creator: Multimodal Art Projection (M-A-P)

model_name: OpenCodeInterpreter DS 6.7B

model_type: deepseek

datasets:

- m-a-p/CodeFeedback-Filtered-Instruction

quantized_by: CISC

---

# OpenCodeInterpreter DS 6.7B - SOTA GGUF

- Model creator: [Multimodal Art Projection](https://huggingface.co/m-a-p)

- Original model: [OpenCodeInterpreter DS 6.7B](https://huggingface.co/m-a-p/OpenCodeInterpreter-DS-6.7B)

## Description

This repo contains state-of-the-art (SOTA) quantized GGUF model files for [OpenCodeInterpreter DS 6.7B](https://huggingface.co/m-a-p/OpenCodeInterpreter-DS-6.7B).

Quantization was done with an importance matrix trained on ~1M tokens (2000 batches of 512 tokens) of answers from the [CodeFeedback-Filtered-Instruction](https://huggingface.co/datasets/m-a-p/CodeFeedback-Filtered-Instruction) dataset.

Even though the 1-bit quantized model file "works", it is **not recommended** for normal use. I have requantized it with a 4K-context imatrix, which seems to have improved it a little, but it still tends to fall into infinite loops. You have been warned. 🧐

## Prompt template: DeepSeek

```
You are an AI programming assistant, utilizing the Deepseek Coder model, developed by Deepseek Company, and you only answer questions related to computer science. For politically sensitive questions, security and privacy issues, and other non-computer science questions, you will refuse to answer.
### Instruction:
{prompt}
### Response:
```
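As a minimal sketch, the template above can be filled in programmatically. The function name `build_prompt` is hypothetical, and the single-newline separators are an assumption based on the `\n` escapes used in the example `llama.cpp` command in this card.

```python
SYSTEM_PROMPT = (
    "You are an AI programming assistant, utilizing the Deepseek Coder model, "
    "developed by Deepseek Company, and you only answer questions related to "
    "computer science. For politically sensitive questions, security and "
    "privacy issues, and other non-computer science questions, you will "
    "refuse to answer."
)


def build_prompt(instruction: str) -> str:
    """Fill the DeepSeek-style template with a single user instruction."""
    # Assumption: sections are separated by single newlines and the model
    # continues generating right after the "### Response:" marker.
    return f"{SYSTEM_PROMPT}\n### Instruction:\n{instruction}\n### Response:"


if __name__ == "__main__":
    print(build_prompt("Write a function that reverses a string."))
```

The resulting string can be passed as the prompt to whichever llama.cpp-based tool you use.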

## Compatibility

These quantised GGUFv3 files are compatible with llama.cpp from February 26th, 2024 onwards, as of commit [0becb22](https://github.com/ggerganov/llama.cpp/commit/0becb22ac05b6542bd9d5f2235691aa1d3d4d307).

They are also compatible with many third-party UIs and libraries, provided they are built using a recent llama.cpp.

## Explanation of quantisation methods

The new methods available are:

* GGML_TYPE_IQ1_S - 1-bit quantization in super-blocks with an importance matrix applied, effectively using 1.56 bits per weight (bpw)

* GGML_TYPE_IQ2_XXS - 2-bit quantization in super-blocks with an importance matrix applied, effectively using 2.06 bpw

* GGML_TYPE_IQ2_XS - 2-bit quantization in super-blocks with an importance matrix applied, effectively using 2.31 bpw

* GGML_TYPE_IQ2_S - 2-bit quantization in super-blocks with an importance matrix applied, effectively using 2.5 bpw

* GGML_TYPE_IQ2_M - 2-bit quantization in super-blocks with an importance matrix applied, effectively using 2.7 bpw

* GGML_TYPE_IQ3_XXS - 3-bit quantization in super-blocks with an importance matrix applied, effectively using 3.06 bpw

* GGML_TYPE_IQ3_XS - 3-bit quantization in super-blocks with an importance matrix applied, effectively using 3.3 bpw

* GGML_TYPE_IQ3_S - 3-bit quantization in super-blocks with an importance matrix applied, effectively using 3.44 bpw

* GGML_TYPE_IQ3_M - 3-bit quantization in super-blocks with an importance matrix applied, effectively using 3.66 bpw

* GGML_TYPE_IQ4_XS - 4-bit quantization in super-blocks with an importance matrix applied, effectively using 4.25 bpw

Refer to the Provided files table below to see which files use which methods, and how.
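The bits-per-weight figures above translate into a rough file size. The sketch below is only a back-of-the-envelope estimate: the parameter count of about 6.7 billion is an assumption taken from the model name, and the function name `estimated_size_gb` is hypothetical. Real files will differ somewhat, since not every tensor is necessarily stored at the nominal bpw.

```python
# Effective bits per weight (bpw) for each method, copied from the list above.
BPW = {
    "IQ1_S": 1.56,
    "IQ2_XXS": 2.06,
    "IQ2_XS": 2.31,
    "IQ2_S": 2.5,
    "IQ2_M": 2.7,
    "IQ3_XXS": 3.06,
    "IQ3_XS": 3.3,
    "IQ3_S": 3.44,
    "IQ3_M": 3.66,
    "IQ4_XS": 4.25,
}

# Assumption: roughly 6.7 billion weights, taken from the model name.
N_PARAMS = 6.7e9


def estimated_size_gb(method: str, n_params: float = N_PARAMS) -> float:
    """Rough size in GB (10**9 bytes) if every weight used the method's bpw."""
    return n_params * BPW[method] / 8 / 1e9


if __name__ == "__main__":
    for method in BPW:
        print(f"{method:8s} ~{estimated_size_gb(method):.2f} GB")
```

Comparing these estimates with the sizes in the Provided files table gives a quick sanity check on which quantization a given file uses.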

## Provided files

| Name | Quant method | Bits | Size | Max RAM required | Use case |
| ---- | ---- | ---- | ---- | ---- | ----- |
| [OpenCodeInterpreter-DS-6.7B.IQ1_S.gguf](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.IQ1_S.gguf) | IQ1_S | 1 | 1.5 GB | 3.5 GB | smallest, significant quality loss - not recommended **at all** |
| [OpenCodeInterpreter-DS-6.7B.IQ2_XXS.gguf](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.IQ2_XXS.gguf) | IQ2_XXS | 2 | 1.8 GB | 3.8 GB | very small, high quality loss |
| [OpenCodeInterpreter-DS-6.7B.IQ2_XS.gguf](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.IQ2_XS.gguf) | IQ2_XS | 2 | 1.9 GB | 3.9 GB | very small, high quality loss |
| [OpenCodeInterpreter-DS-6.7B.IQ2_S.gguf](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.IQ2_S.gguf) | IQ2_S | 2 | 2.1 GB | 4.1 GB | small, substantial quality loss |
| [OpenCodeInterpreter-DS-6.7B.IQ2_M.gguf](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.IQ2_M.gguf) | IQ2_M | 2 | 2.2 GB | 4.2 GB | small, greater quality loss |
| [OpenCodeInterpreter-DS-6.7B.IQ3_XXS.gguf](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.IQ3_XXS.gguf) | IQ3_XXS | 3 | 2.5 GB | 4.5 GB | very small, high quality loss |
| [OpenCodeInterpreter-DS-6.7B.IQ3_XS.gguf](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.IQ3_XS.gguf) | IQ3_XS | 3 | 2.7 GB | 4.7 GB | small, substantial quality loss |
| [OpenCodeInterpreter-DS-6.7B.IQ3_S.gguf](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.IQ3_S.gguf) | IQ3_S | 3 | 2.8 GB | 4.8 GB | small, greater quality loss |
| [OpenCodeInterpreter-DS-6.7B.IQ3_M.gguf](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.IQ3_M.gguf) | IQ3_M | 3 | 3.0 GB | 5.0 GB | medium, balanced quality - recommended |
| [OpenCodeInterpreter-DS-6.7B.IQ4_XS.gguf](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.IQ4_XS.gguf) | IQ4_XS | 4 | 3.4 GB | 5.4 GB | small, substantial quality loss |

Generated importance matrix file: [OpenCodeInterpreter-DS-6.7B.imatrix.dat](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.imatrix.dat)

Generated importance matrix file (4K context): [OpenCodeInterpreter-DS-6.7B.imatrix-4096.dat](https://huggingface.co/CISCai/OpenCodeInterpreter-DS-6.7B-SOTA-GGUF/blob/main/OpenCodeInterpreter-DS-6.7B.imatrix-4096.dat)

**Note**: the above RAM figures assume no GPU offloading with 4K context. If layers are offloaded to the GPU, this will reduce RAM usage and use VRAM instead.
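To illustrate how the table and the note above might be used together, here is a small sketch that picks the largest listed file fitting a given RAM budget. The numbers are copied from the table (where the RAM figure is the file size plus 2.0 GB in every row); the function name `largest_quant_for` is hypothetical, and the figures only hold under the stated assumptions of no GPU offloading and 4K context.

```python
from typing import Optional

# (quant method, file size in GB, max RAM in GB), copied from the table above.
QUANTS = [
    ("IQ1_S", 1.5, 3.5),
    ("IQ2_XXS", 1.8, 3.8),
    ("IQ2_XS", 1.9, 3.9),
    ("IQ2_S", 2.1, 4.1),
    ("IQ2_M", 2.2, 4.2),
    ("IQ3_XXS", 2.5, 4.5),
    ("IQ3_XS", 2.7, 4.7),
    ("IQ3_S", 2.8, 4.8),
    ("IQ3_M", 3.0, 5.0),
    ("IQ4_XS", 3.4, 5.4),
]


def largest_quant_for(ram_budget_gb: float) -> Optional[str]:
    """Return the largest listed quant whose max-RAM figure fits the budget."""
    fitting = [q for q in QUANTS if q[2] <= ram_budget_gb]
    if not fitting:
        return None  # Nothing in the table fits without GPU offloading.
    return max(fitting, key=lambda q: q[1])[0]


if __name__ == "__main__":
    for budget in (3.0, 4.0, 5.0, 8.0):
        print(f"{budget:.1f} GB budget -> {largest_quant_for(budget)}")
```

A larger file is not automatically the better choice for every use, so treat the result as a starting point and check the "Use case" column as well.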

## Example `llama.cpp` command

Make sure you are using `llama.cpp` from commit [0becb22](https://github.com/ggerganov/llama.cpp/commit/0becb22ac05b6542bd9d5f2235691aa1d3d4d307) or later.

```shell

./main -ngl 33 -m OpenCodeInterpreter-DS-6.7B.IQ3_M.gguf --color -c 16384 --temp 0.7 --repeat_penalty 1.1 -n -1 -p "You are an AI programming assistant, utilizing the Deepseek Coder model, developed by Deepseek Company, and you only answer questions related to computer science. For politically sensitive questions, security and privacy issues, and other non-computer science questions, you will refuse to answer.\n### Instruction:\n{prompt}\n### Response:"

```

Change `-ngl 33` to the number of layers to offload to GPU. Remove it if you don't have GPU acceleration.

Change `-c 16384` to the desired sequence length.

If you want to have a chat-style conversation, replace the `-p ` argument with `-i -ins`.

For other parameters and how to use them, please refer to [the llama.cpp documentation](https://github.com/ggerganov/llama.cpp/blob/master/examples/main/README.md).
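If you prefer to launch the command from a script, the options described above can be assembled as an argument list. This is only a sketch: the helper name `build_llama_args` is hypothetical, the flags are exactly those of the example command, and the `./main` binary is assumed to be in the current directory. Because the prompt is passed as a single list element, real newline characters can be used instead of `\n` escapes.

```python
import shlex


def build_llama_args(model_path, prompt, n_gpu_layers=33, ctx_size=16384):
    """Assemble the argument list for the example `./main` invocation."""
    args = ["./main"]
    if n_gpu_layers > 0:
        # Omit -ngl entirely when there is no GPU acceleration.
        args += ["-ngl", str(n_gpu_layers)]
    args += [
        "-m", model_path,
        "--color",
        "-c", str(ctx_size),          # desired sequence length
        "--temp", "0.7",
        "--repeat_penalty", "1.1",
        "-n", "-1",
        "-p", prompt,
    ]
    return args


if __name__ == "__main__":
    # Shortened prompt for illustration; the full template includes the system text.
    demo_prompt = "### Instruction:\nWrite hello world in Python.\n### Response:"
    args = build_llama_args("OpenCodeInterpreter-DS-6.7B.IQ3_M.gguf", demo_prompt)
    print(shlex.join(args))
```

The list can then be handed to `subprocess.run(args)` to start generation.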

# Original model card: Multimodal Art Projection's OpenCodeInterpreter DS 6.7B

OpenCodeInterpreter: Integrating Code Generation with Execution and Refinement

[🏠Homepage] | [🛠️Code]

## Introduction

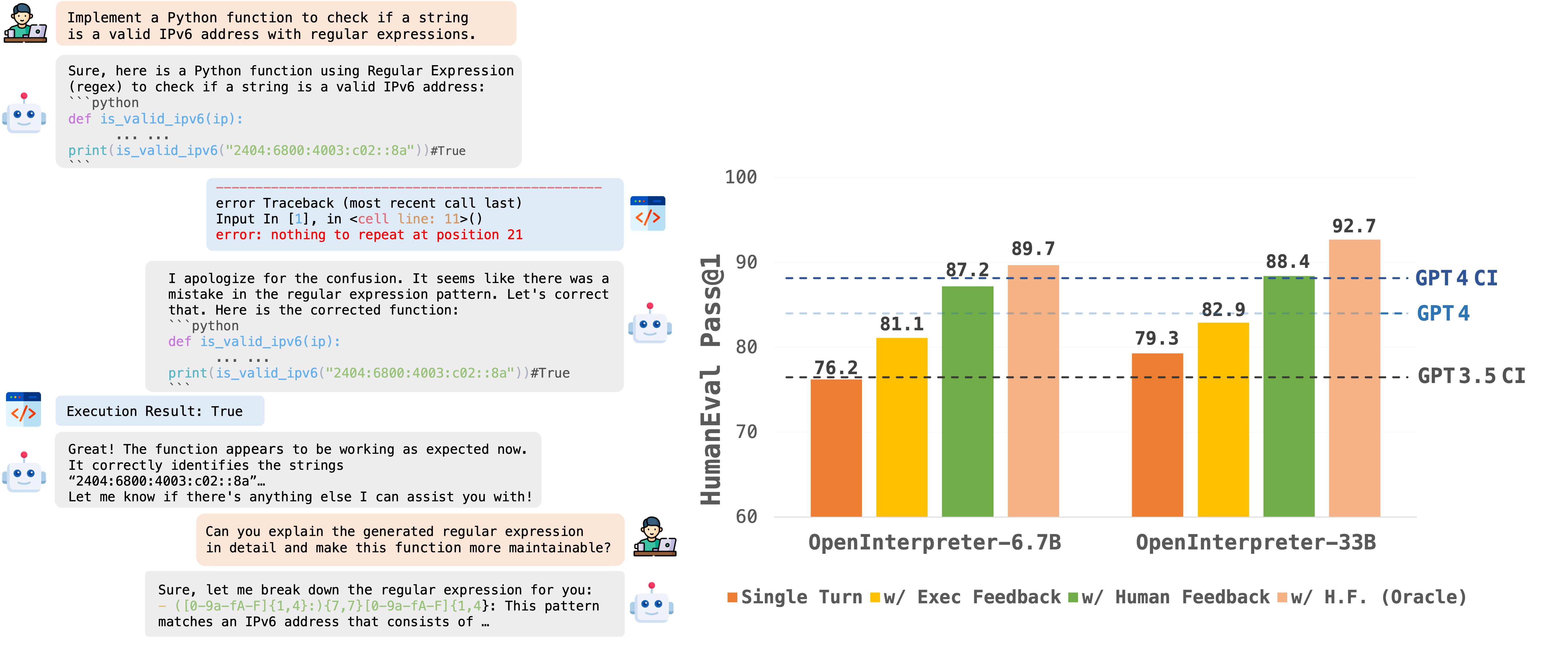

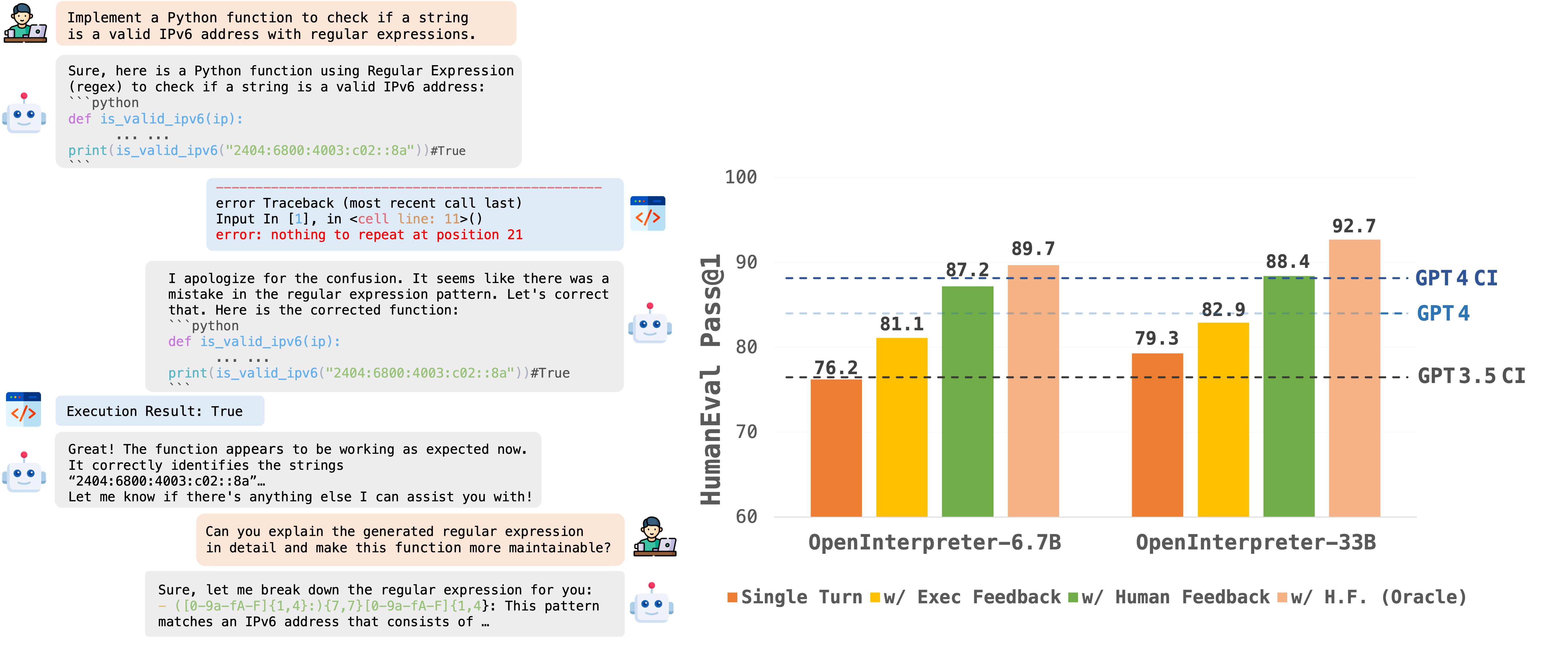

OpenCodeInterpreter is a family of open-source code generation systems designed to bridge the gap between large language models and advanced proprietary systems like the GPT-4 Code Interpreter. It significantly advances code generation capabilities by integrating execution and iterative refinement functionalities.

For further information and related work, refer to our paper: ["OpenCodeInterpreter: Integrating Code Generation with Execution and Refinement"](https://arxiv.org/abs/2402.14658), available on arXiv.

## Model Information

This model is based on [deepseek-coder-6.7b-base](https://huggingface.co/deepseek-ai/deepseek-coder-6.7b-base).

## Model Usage

### Inference

```python
import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

model_path = "m-a-p/OpenCodeInterpreter-DS-6.7B"

# Load the tokenizer and the model in bfloat16, placing weights automatically.
tokenizer = AutoTokenizer.from_pretrained(model_path)
model = AutoModelForCausalLM.from_pretrained(
    model_path,
    torch_dtype=torch.bfloat16,
    device_map="auto",
)
model.eval()

prompt = "Write a function to find the shared elements from the given two lists."

# Format the request with the model's chat template and move it to the model's device.
inputs = tokenizer.apply_chat_template(
    [{"role": "user", "content": prompt}],
    return_tensors="pt",
).to(model.device)

# Greedy decoding (do_sample=False), up to 1024 new tokens.
outputs = model.generate(
    inputs,
    max_new_tokens=1024,
    do_sample=False,
    pad_token_id=tokenizer.eos_token_id,
    eos_token_id=tokenizer.eos_token_id,
)

# Decode only the newly generated tokens, skipping the prompt.
print(tokenizer.decode(outputs[0][len(inputs[0]):], skip_special_tokens=True))
```

## Contact

If you have any inquiries, please feel free to raise an issue or reach out to us via email at: xiangyue.work@gmail.com, zhengtianyu0428@gmail.com.

We're here to assist you!